Your AI coding agent doesn't practice TDD. Left to its default behavior, it skips the spec, skips the plan, and starts generating code the moment you finish your prompt. Superpowers is a framework built specifically to fix that: not by making the model smarter, but by making it very hard for the model to skip the discipline that senior engineers apply before touching the codebase.

What Is Superpowers? (One Paragraph)

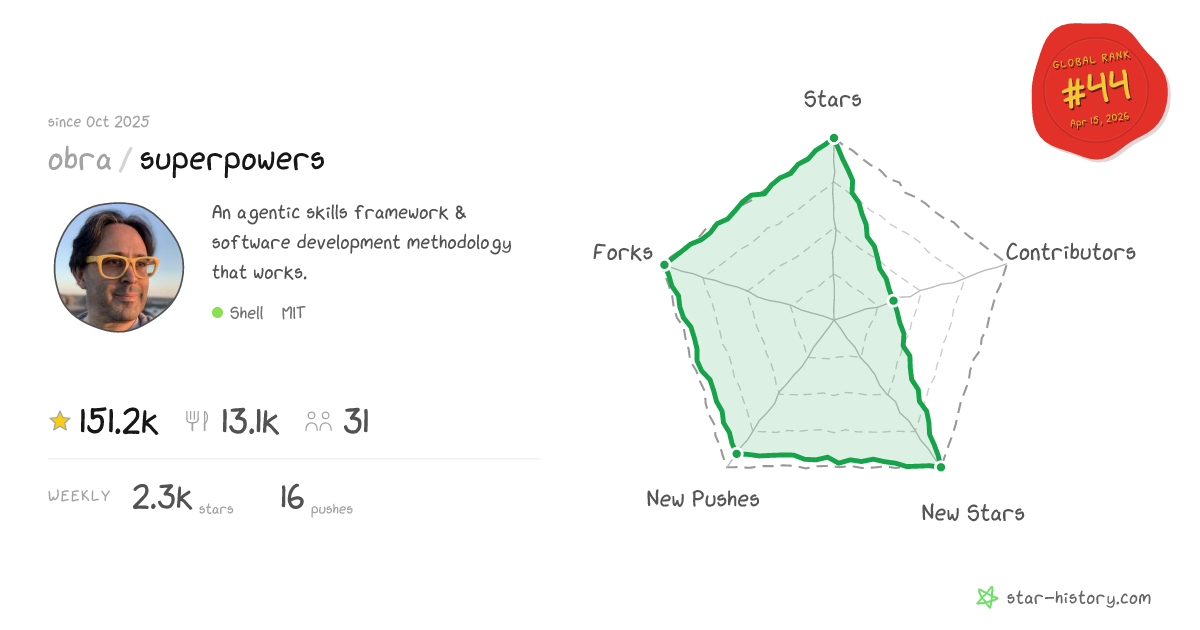

Superpowers is an open-source agentic skills framework for AI coding agents, built by Jesse Vincent and the team at Prime Radiant. It installs a set of composable "skills" (mandatory behavioral guides written as SKILL.md files) that your coding agent must read and follow before taking action. The default skill stack enforces a structured workflow: brainstorm the design first, create an isolated git worktree, write a micro-task plan with verification steps, then execute via subagents. It is available on GitHub under the MIT license, with 150K+ stars as of April 2026, and it runs on Claude Code, Codex, Cursor, Gemini CLI, OpenCode, and Copilot CLI.

What problem it solves — why AI agents rush straight to code

The failure mode Superpowers targets is specific and well-documented by anyone who's used coding agents in production: you give the agent a feature request, and it immediately starts writing code. It picks a library you didn't want, creates files in the wrong location, and misses two requirements because it never asked what you actually needed. By the time you notice, you're debugging output you didn't spec rather than shipping code you designed.

The root cause isn't intelligence — it's default behavior. Language models are trained to be immediately helpful. That training instinct drives them to produce output as fast as possible. What gets skipped is everything a senior engineer does first: ask the right clarifying questions, sketch the architecture, write down what you're going to build before you build it. Superpowers intercepts that default instinct at the session level and routes it through structured gates instead.

Who built it — Jesse Vincent / Prime Radiant

Jesse Vincent is the creator of Request Tracker (the open-source ticketing system used widely across the Perl community and beyond), was responsible for Perl 5 language releases, and co-founded Keyboardio. He built the first version of Superpowers in October 2025, the same week Anthropic launched its plugin system for Claude Code, and has kept it in active development and production use since. Prime Radiant, the company he founded in early 2026, is now the organizational home for the project. Simon Willison has publicly described Vincent as among the most systematic users of coding agents he has encountered, which helps explain the methodology-first orientation of the framework.

How the Skill System Works

Composable skills — what they are and how they trigger automatically

A "skill" in Superpowers is a SKILL.md file containing a structured behavioral guide: when to activate, what steps to follow, what verification to perform. The agent reads the relevant skill and follows it. That's the whole mechanism. No custom model, no special inference layer — just structured instructions the agent is required to consult.
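To show how little machinery this involves, here is a minimal sketch that parses a SKILL.md-style file into its parts. The `---` delimited header, the `name` and `description` fields, and the sample skill text are all assumptions made for illustration; they are not copied from any real Superpowers skill.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str  # tells the agent when the skill applies
    body: str         # the steps and verification the agent must follow


def parse_skill(text: str) -> Skill:
    """Split a SKILL.md-style document into header fields and body.

    Assumes a simple '---' delimited header of 'key: value' lines. This is
    an illustrative format, not a specification of the real files.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing header")
    end = lines.index("---", 1)  # position of the closing delimiter
    meta = {}
    for line in lines[1:end]:
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta["name"], meta["description"], body)


# Hypothetical skill file, invented for this example.
EXAMPLE = """---
name: example-design-gate
description: Use when the user starts describing a new feature
---
1. Ask clarifying questions.
2. Present the design in short sections.
3. Wait for explicit approval before writing code.
"""

skill = parse_skill(EXAMPLE)
print(skill.name, "->", skill.description)
```

The point of the sketch is that a skill is plain text with a trigger description and a procedure. Everything else is the agent reading and following it.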

The key word is "required." The master skill (using-superpowers SKILL.md) is injected at every session start via a session hook. Its actual text includes: "IF A SKILL APPLIES TO YOUR TASK, YOU DO NOT HAVE A CHOICE. YOU MUST USE IT. This is not negotiable. This is not optional. You cannot rationalize your way out of this." The model checks for relevant skills before any response, including before asking clarifying questions. If a skill exists for what you're asking, the agent invokes it.

Skills trigger automatically based on context detection, not explicit user commands. When you describe something that sounds like starting a feature ("help me build X," "I want to add Y"), the brainstorming skill activates. When you've agreed on a design and the agent needs to start working, the git worktree skill activates. The session-start hook is what makes this invisible to the user: you don't type a command to engage Superpowers — it's already running from the moment the session opens.

There's an important priority hierarchy: user instructions in CLAUDE.md, GEMINI.md, or AGENTS.md take precedence over skills. If your project file says "don't use TDD," the skill that enforces TDD defers to your instruction. Skills override default model behavior, but they don't override explicit user configuration.
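The precedence order described above can be sketched as a layered lookup. This is only a model of the rule, not how any agent implements it: the layer names, the `resolve` helper, and the setting values are hypothetical.

```python
from typing import Optional

# Lowest to highest priority, following the hierarchy described above.
LAYERS = ["model_default", "skill", "user_instructions"]


def resolve(setting: str, sources: dict[str, dict[str, str]]) -> Optional[str]:
    """Return the value from the highest-priority layer that defines `setting`."""
    for layer in reversed(LAYERS):
        value = sources.get(layer, {}).get(setting)
        if value is not None:
            return value
    return None


# Hypothetical settings, invented for this example.
sources = {
    "model_default": {"testing": "write code first"},
    "skill": {"testing": "TDD required", "workspace": "git worktree"},
    "user_instructions": {"testing": "don't use TDD"},  # e.g. from CLAUDE.md
}

print(resolve("testing", sources))    # the project file wins
print(resolve("workspace", sources))  # no user override, so the skill applies
```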

The core workflow: brainstorm → plan → execute-plans → TDD

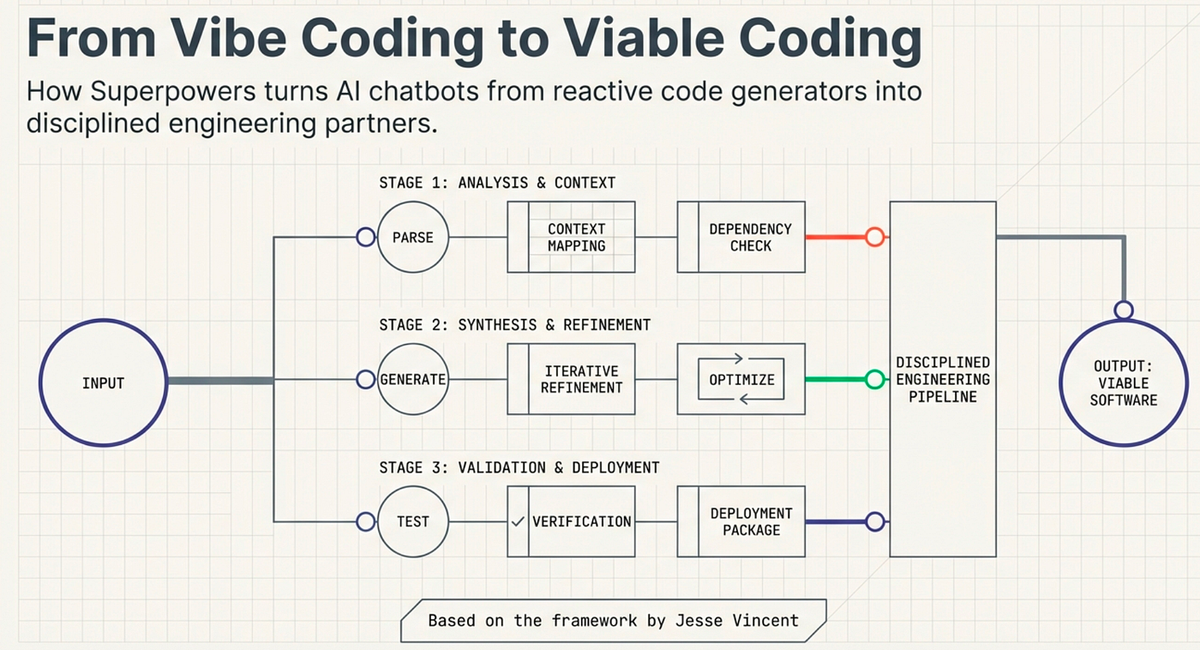

The full workflow Superpowers enforces follows four mandatory phases:

Brainstorm — Before any code, the agent asks what you're actually trying to build. It works through the design with you in chunks short enough to actually read and respond to, surfaces edge cases, explores alternatives, and produces a written design document only when you've explicitly signed off.

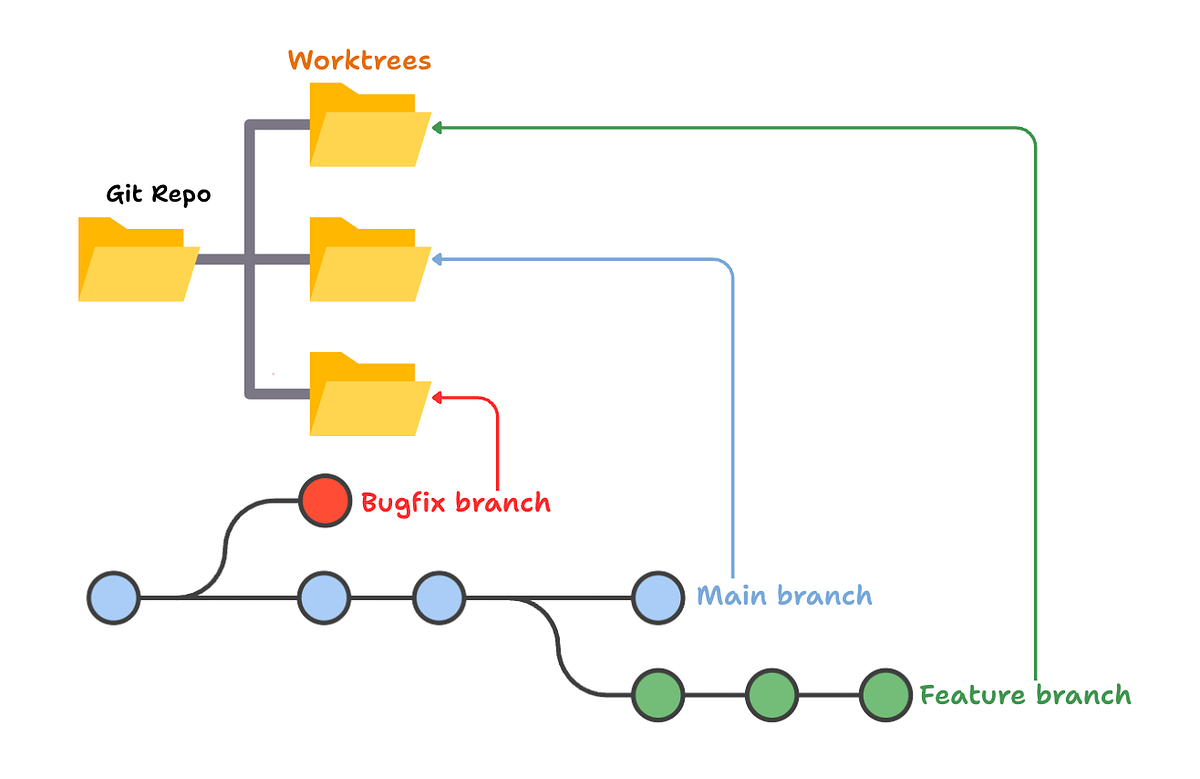

Isolated workspace — Once the design is approved, the git worktree skill creates a new branch in an isolated worktree. Your main branch is untouched. The agent runs project setup and verifies a clean test baseline before anything is written.

Plan — The writing-plans skill produces a micro-task implementation plan written for what the skill describes as "an enthusiastic junior engineer with poor taste, no judgment, no project context, and an aversion to testing." Every task is 2–5 minutes of work, has exact file paths, complete expected code, and a verification step. The plan is granular enough that nothing can be misinterpreted.

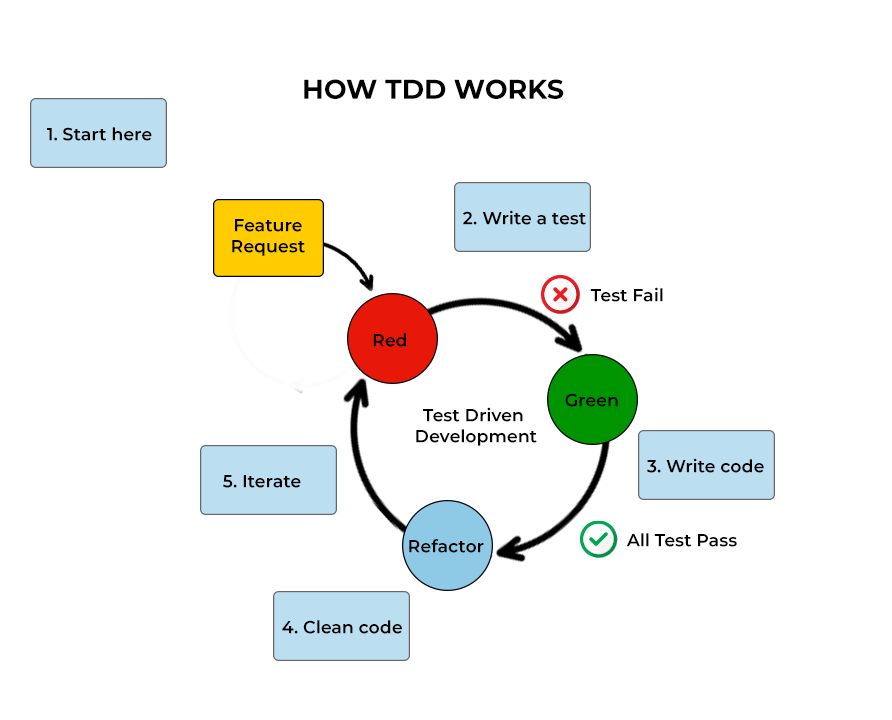

Execute with TDD — Subagents work through each task in the plan with strict red/green/refactor discipline: write the test first, watch it fail, write the code, watch it pass. Each subagent starts fresh (no context drift from earlier tasks) and goes through two review passes — spec compliance, then code quality — before its output is accepted.
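For readers who have not watched a red/green cycle, here is a small self-contained sketch of the "write the test first, watch it fail, write the code, watch it pass" step using Python's standard `unittest` module. The `slugify` function and its test are hypothetical examples and have nothing to do with the Superpowers codebase.

```python
import unittest


def slugify_stub(title: str) -> str:
    """Red phase: the function exists but is not implemented yet."""
    raise NotImplementedError


def slugify(title: str) -> str:
    """Green phase: the simplest code that satisfies the test."""
    return "-".join(title.lower().split())


def run_test(func) -> bool:
    """Run the same test case against `func`; return True if it passes."""

    class SlugifyTest(unittest.TestCase):
        def test_spaces_become_hyphens(self):
            self.assertEqual(func("Hello Big World"), "hello-big-world")

    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SlugifyTest)
    result = unittest.TestResult()
    suite.run(result)
    return result.wasSuccessful()


red = run_test(slugify_stub)  # test written first: it must fail
green = run_test(slugify)     # then the implementation: it must pass
print("red failed as expected:", not red)
print("green passed:", green)
```

Watching the test fail first matters because it shows the test can actually detect the missing behavior.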

Key Skills in the Default Stack

brainstorming — design gate before any code

The brainstorming skill activates when the agent detects you're starting something new. Its job is to turn a vague feature request into a validated design document through Socratic dialogue. The agent doesn't just ask "what do you want?" — it surfaces architectural tradeoffs, asks about edge cases you haven't mentioned, and presents the emerging design in digestible sections for your explicit approval. Nothing proceeds until you've signed off. This is the gate that prevents the most expensive failure mode: starting to build the wrong thing.
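The "nothing proceeds until you've signed off" rule amounts to a gate. In Superpowers the gate is enforced through instructions to the agent, not through code, so the sketch below is only a model of the behavior. The `DesignGate` class and the section names are hypothetical.

```python
class DesignGate:
    """Blocks implementation until the user has approved every design section."""

    def __init__(self, sections: list[str]):
        self.pending = list(sections)
        self.approved: list[str] = []

    def approve(self, section: str) -> None:
        if section not in self.pending:
            raise ValueError(f"unknown or already approved section: {section}")
        self.pending.remove(section)
        self.approved.append(section)

    def start_implementation(self) -> str:
        if self.pending:
            raise PermissionError(f"design not signed off: {self.pending}")
        return "design approved, proceeding to workspace setup"


gate = DesignGate(["data model", "API surface", "edge cases"])
gate.approve("data model")

blocked = ""
try:
    gate.start_implementation()
except PermissionError as err:
    blocked = str(err)  # two sections still need sign-off

gate.approve("API surface")
gate.approve("edge cases")
print(gate.start_implementation())
```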

using-git-worktrees — isolated execution environment

Each feature development session gets its own git worktree — an isolated directory on its own branch, separate from your main working tree. The skill handles smart directory selection, safety verification, and project setup. You can have the agent working on a feature in a worktree while you continue development on main without conflicts. When the work is done, the finishing-a-development-branch skill guides you through the merge/PR/cleanup options.
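Underneath, this relies on the standard `git worktree add` command. The sketch below builds that command from Python. The `.worktrees/<branch>` location, the helper names, and the example repository path are assumptions for illustration; the skill's actual directory selection logic is not reproduced here.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, branch: str, base: str = "main") -> list[str]:
    """Build the git command that creates `branch` in its own worktree.

    The '.worktrees/<branch>' location is a hypothetical convention
    chosen for this example.
    """
    target = repo / ".worktrees" / branch
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(target), base]


def create_worktree(repo: Path, branch: str, base: str = "main") -> Path:
    """Run the command; raises CalledProcessError if git reports a failure."""
    subprocess.run(worktree_command(repo, branch, base), check=True)
    return repo / ".worktrees" / branch


# Only build and print the command here; nothing is executed.
cmd = worktree_command(Path("/tmp/myrepo"), "feature-login")
print(" ".join(cmd))
```

Because the new branch lives in its own directory, the main checkout's working files are never touched while the agent works.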

writing-plans — bite-sized tasks with verification steps

The plan the agent writes is deliberately over-specified. Every task includes exact file paths, complete expected code, and explicit verification steps — the level of detail you'd need if the executor had zero context about the project. This looks like overhead until you realize why it works: it forces the planning phase to surface every assumption before execution starts. Ambiguities that would have caused rework in mid-implementation get resolved in cheap text form during planning.
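One way to picture "deliberately over-specified" is as a record in which every field is mandatory. The sketch below is an illustrative model, not the plan format the skill actually emits: the `PlanTask` fields and the sample tasks are assumptions based on the description above.

```python
from dataclasses import dataclass


@dataclass
class PlanTask:
    title: str
    file_path: str     # exact path, never "somewhere in src/"
    code: str          # complete expected code for the step
    verification: str  # command or check that proves the step worked
    minutes: int       # estimated effort

    def problems(self) -> list[str]:
        """Return the reasons this task is under-specified (empty if none)."""
        issues = []
        if not self.file_path.strip():
            issues.append("missing exact file path")
        if not self.code.strip():
            issues.append("missing expected code")
        if not self.verification.strip():
            issues.append("missing verification step")
        if not 2 <= self.minutes <= 5:
            issues.append("task should be 2 to 5 minutes of work")
        return issues


# Hypothetical tasks, invented for this example.
good = PlanTask(
    title="Add failing test for slugify",
    file_path="tests/test_slug.py",
    code="def test_slug():\n    assert slugify('A B') == 'a-b'",
    verification="run the test file and confirm it fails with NameError",
    minutes=3,
)
vague = PlanTask("Implement auth", "", "", "", 60)

print(good.problems())
print(vague.problems())
```

A task like "Implement auth" fails every check, which is exactly the kind of ambiguity the planning phase is meant to surface before execution.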

subagent-driven-development — parallel agent execution

On platforms that support subagents (Claude Code and Codex currently), each plan task is executed by a fresh subagent rather than the main agent continuing in its existing context. This solves context drift — the tendency of long-running agents to gradually deviate from the original spec as their context window fills with irrelevant history. Each subagent receives only the task it needs to complete plus the relevant plan section. Two review passes follow: the first checks spec compliance, the second checks code quality.
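The fresh-context and two-review pattern can be sketched as follows. Every name here is hypothetical, and the "subagent" is a plain function standing in for a real agent call. The sketch also runs tasks one after another for simplicity, so it models the isolation between tasks and says nothing about parallelism.

```python
from typing import Callable

# A "subagent" here is any function from a prompt string to an output string.
Subagent = Callable[[str], str]
Reviewer = Callable[[str, str], bool]  # (task, output) -> accepted?


def run_task(task: str, plan_section: str, spawn: Callable[[], Subagent],
             spec_review: Reviewer, quality_review: Reviewer) -> str:
    """Execute one plan task in a fresh context, then apply both reviews."""
    agent = spawn()  # new subagent: no history from earlier tasks
    output = agent(f"{plan_section}\n\nTask: {task}")
    if not spec_review(task, output):
        raise RuntimeError(f"spec review rejected: {task}")
    if not quality_review(task, output):
        raise RuntimeError(f"quality review rejected: {task}")
    return output


def spawn_fake() -> Subagent:
    """Stand-in for a real subagent; counts the prompts in its own context."""
    seen: list[str] = []  # per-agent memory, discarded after the task

    def agent(prompt: str) -> str:
        seen.append(prompt)
        return f"done ({len(seen)} prompt in context)"

    return agent


def always_ok(task: str, output: str) -> bool:
    return True


results = [run_task(t, "Plan section A", spawn_fake, always_ok, always_ok)
           for t in ["write test", "write code"]]
print(results)
```

Each task reports a single prompt in its context because `spawn` creates a new agent every time, which is the property that prevents drift.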

For platforms without native subagent support (Gemini CLI and others), the executing-plans skill provides a sequential alternative. According to the release notes, a recent update removed the choice between the two from the writing-plans skill: if subagent capability is detected, subagent-driven-development is required.

What Superpowers Is Not

Not a new model or tool — a behavior layer on top of existing agents

Superpowers doesn't modify model weights, add a custom inference layer, or change how the underlying model reasons. It's a set of SKILL.md files and a session hook. The agent doing the work is still Claude, Codex, or whatever platform you're running — Superpowers just determines what that agent does before it starts writing code. The framework is model-agnostic by design: when Anthropic ships a smarter Claude, Superpowers benefits automatically. The constraint being enforced is procedural, not architectural.

This distinction matters for evaluating whether to adopt it. You are not betting on a new model provider or a proprietary inference stack. You are adopting a development methodology encoded as structured prompts. If the methodology stops working for your team, you remove the plugin. Nothing in your infrastructure changes.

Where it slows you down — simple tasks, exploratory work

The brainstorm → plan → execute pipeline adds real overhead: 10–20 minutes of structured dialogue before code starts. For a two-line bug fix, that overhead is absurd. For exploratory prototyping where you're deliberately trying things out without a clear spec, the forced brainstorming phase fights against what you're trying to do. Superpowers works best when you have a clear feature or refactor to accomplish and you want it done reliably. It's the wrong tool for "let me just try something and see what happens."

Jesse Vincent has been explicit about this in his own writing on the framework: skills are meant to be invoked when they apply, and there are task types where none apply. The framework is designed to stay out of the way when it shouldn't be running.

Supported Platforms

Superpowers supports a range of coding agent platforms, with varying levels of integration:

Claude Code — primary platform, full subagent support, official Anthropic marketplace listing since January 2026 (installable via claude plugin add obra/superpowers), session-start hook fully supported.

Codex — full subagent support, manual configuration via providers array.

Cursor — plugin support, sequential executing-plans (no subagent capability).

Gemini CLI — supported with tool mapping reference (Gemini CLI tool names differ from Claude Code); falls back to executing-plans since no subagent support.

OpenCode and Copilot CLI — supported; Copilot CLI received session-start hook support in a recent release.

Platform capabilities determine which execution mode is available: subagent-driven-development (parallel, fresh context per task) on Claude Code and Codex; executing-plans (sequential) on everything else.
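The rule above reduces to a simple capability lookup. In the sketch below, the platform identifiers are hypothetical labels chosen for this example, and the capability table only restates the platform list in this section.

```python
# Capability table restating the platform list above; identifiers are
# hypothetical labels, not names used by any of these tools.
SUBAGENT_PLATFORMS = {"claude-code", "codex"}
SUPPORTED_PLATFORMS = SUBAGENT_PLATFORMS | {
    "cursor", "gemini-cli", "opencode", "copilot-cli",
}


def execution_skill(platform: str) -> str:
    """Return the execution skill used on `platform`."""
    key = platform.strip().lower()
    if key not in SUPPORTED_PLATFORMS:
        raise ValueError(f"unsupported platform: {platform}")
    if key in SUBAGENT_PLATFORMS:
        return "subagent-driven-development"
    return "executing-plans"


for name in ["claude-code", "codex", "cursor", "gemini-cli"]:
    print(name, "->", execution_skill(name))
```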

When It's Worth Using

Use Superpowers when:

- You're building a feature with enough scope that starting without a spec would cost you debugging time later

- You've had agents drift from the original requirement mid-implementation and want that prevented structurally

- You want TDD enforced without having to remind the agent on every task

- You're working on a codebase where incorrect initial assumptions are expensive to unwind

- You're delegating significant work to an agent you want running autonomously for an extended session

Skip it (or invoke skills selectively) when:

- The task is a targeted one- or two-line fix where the problem is already specified

- You're intentionally exploring solution space without a committed direction

- The time cost of the planning phase exceeds the likely time cost of unstructured execution for this specific task

The overhead question is the right one to ask. Superpowers earns its planning cost on complex tasks where unstructured agents would have produced wrong output requiring significant correction. For trivial tasks, it doesn't.

FAQ

Does it work with Claude Code?

Yes — Claude Code is the primary platform. Installation is available through the Anthropic official plugin marketplace (claude plugin add obra/superpowers) or via the GitHub repo directly. The session-start hook integrates fully, skills auto-trigger, and subagent-driven-development is available since Claude Code supports native subagents.

Does the overhead pay off for quick bug fixes?

No. The brainstorming and planning phases are designed for feature-level work, not targeted bug fixes. For a small, well-specified fix, running Superpowers adds unnecessary process. The framework is aware of this — the using-superpowers master skill checks context before invoking anything, and simple requests that don't match the "building something" pattern may not trigger the full workflow. But if you want certainty it's out of the way for small tasks, just run Claude Code without the plugin for that session.

Is it free / open source?

MIT license, completely free. No usage limits, no subscription, no data collection from your sessions. The skills repository (obra/superpowers-skills) is also MIT and community-editable — you can fork it, modify skills for your project, or contribute back. The plugin itself is a lightweight shim that manages a local clone of the skills repo; skills update automatically on session start.

How is it different from CLAUDE.md or AGENTS.md?

CLAUDE.md and AGENTS.md are project-specific instruction files: you write them yourself to give the agent persistent context about your project, preferences, and constraints. They're documentation that the agent reads at session start. Superpowers skills are procedural workflows: they don't just tell the agent what your project is, they specify what process to follow when doing specific task types. The two systems are complementary and explicitly designed to coexist. Superpowers skills defer to CLAUDE.md instructions where they conflict, so if your project file overrides a skill behavior, the project file wins.

Related Reading

- Claude Code vs Verdent: Multi-Agent Architecture Compared — How Superpowers' subagent-driven-development relates to multi-agent orchestration architectures like Verdent's parallel worktree system.

- LLM Knowledge Base for Coding Agents: Beyond RAG — Persistent context strategies that complement Superpowers' session-level skill injection.

- What Is G0DM0D3? — Another open-source framework that uses SKILL.md-style composable behavioral guides for a different use case (multi-model evaluation).

- Claw Code: Claude Code, OpenClaw, and What Each Actually Does — The Claude Code ecosystem context where Superpowers operates.

- godmod3 Review 2026 — Comparison point: how other open-source agent tools approach structured workflow enforcement.