Two months ago, I was deep in a gnarly microservices refactor — the kind where touching one service breaks three others. I ran the same task through both Claude Code and Verdent on the same afternoon. Same codebase. Same prompt. Completely different results.

That afternoon forced me to stop treating these two tools as interchangeable. They're not. As a principal-level engineer who's been stress-testing AI coding agents on real production workloads, I want to give you an honest breakdown of where each tool wins, where it falls apart, and exactly who should be using which.

Let's get into it.

Quick Verdict

If you need a one-paragraph answer: Claude Code is a terminal-native, deeply integrated agentic coder with strong codebase reasoning and a familiar subscription model. Verdent is a dedicated multi-agent orchestration platform with true parallel execution, Git Worktree isolation, and an explicit plan-before-code workflow. For solo devs or teams doing exploratory work and single-stream tasks, Claude Code is hard to beat. For complex, multi-module projects where code safety and parallel execution matter, Verdent has a structural edge.

| Feature | Claude Code | Verdent |
|---|---|---|
| Multi-agent support | ✅ Agent Teams (experimental, Opus 4.6+) | ✅ Built-in parallel agents (core feature) |
| Plan-first execution | Partial (manual workflow) | ✅ Dedicated Plan Mode |
| IDE integration | VS Code, JetBrains, terminal, browser | VS Code, JetBrains, Verdent Deck (desktop) |
| Git isolation | Requires manual worktree setup | ✅ Automatic Git Worktree isolation |
| Code verification loop | Manual test-fix cycle | ✅ Built-in multi-round generate→test→fix |
| Pricing model | Subscription ($20–$200/mo) + optional API tokens | Credit-based ($19–$179/mo) |
| SWE-bench Verified (pass@1) | ~72% (Claude Opus 4.6, Epoch AI, Mar 2026) | 76.1% (Verdent official technical report, Nov 2025) |
| Terminal-native | ✅ Yes | ❌ No (IDE + desktop app) |

What Is Claude Code?

Core architecture and how it runs tasks

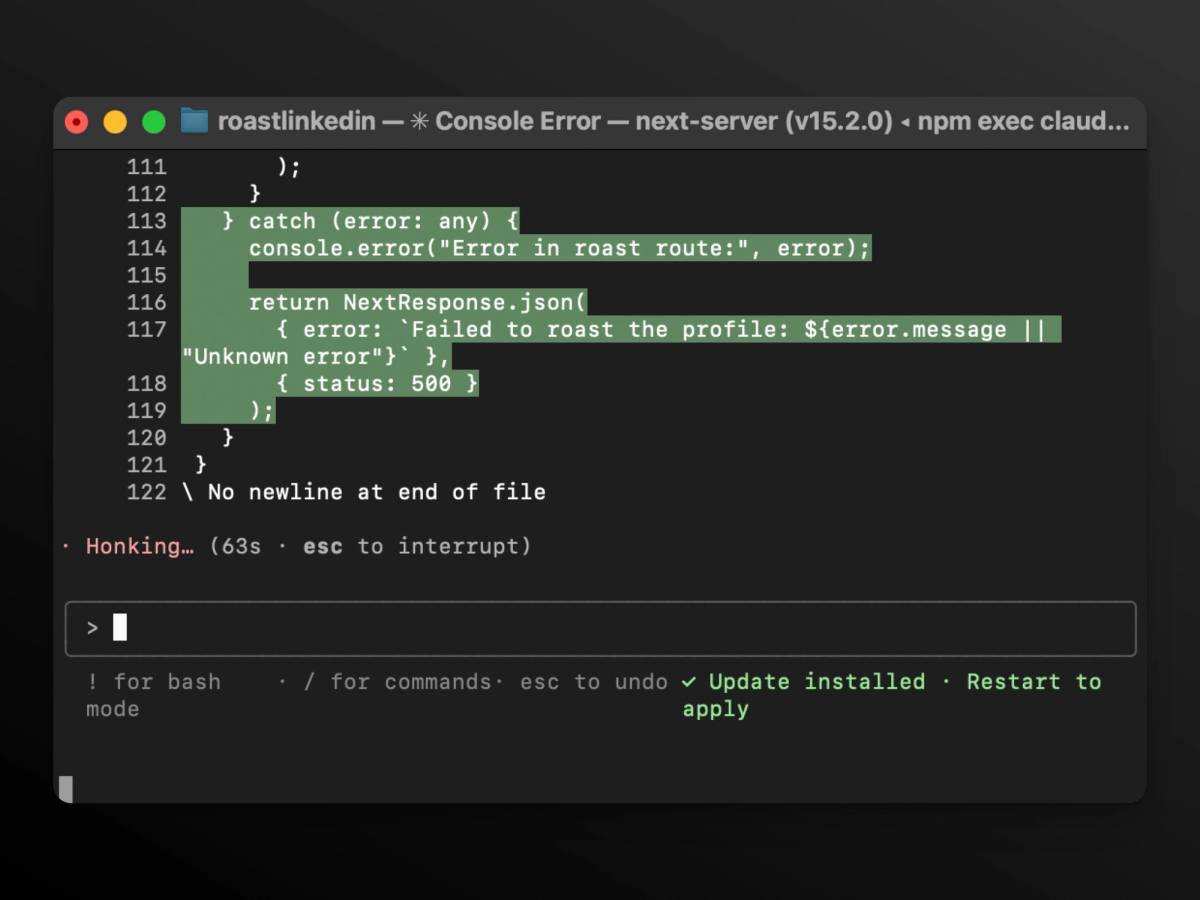

Claude Code is Anthropic's terminal-based agentic coding tool. It reads your entire codebase, runs shell commands, edits files, and executes multi-step tasks autonomously. Under the hood, it uses Claude's models (Sonnet 4.6 or Opus 4.6) with a massive context window — up to 1M tokens via Opus 4.6 (beta) — to maintain project-wide understanding.

The key architectural unit is the subagent: a specialized assistant that runs in its own context window with its own system prompt, tool access, and permissions. Claude Code's official subagent documentation explains that subagents are defined via Markdown files with YAML frontmatter, and can be scoped to specific tasks like code review, security auditing, or database queries.
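To make the "Markdown file with YAML frontmatter" idea concrete, here is a minimal sketch that renders such a definition as text. The field names (`name`, `description`, `tools`), the tool names, and the `.claude/agents/` location are my understanding of the current docs and should be treated as assumptions; the reviewer agent itself is purely illustrative.

```python
from pathlib import Path


def render_subagent(name: str, description: str, tools: list[str], prompt: str) -> str:
    """Return a Markdown document with YAML frontmatter describing one subagent."""
    frontmatter = "\n".join([
        "---",
        f"name: {name}",
        f"description: {description}",
        f"tools: {', '.join(tools)}",
        "---",
    ])
    # The body after the frontmatter acts as the subagent's system prompt.
    return f"{frontmatter}\n\n{prompt.strip()}\n"


# Hypothetical reviewer agent; every value here is illustrative.
reviewer = render_subagent(
    name="code-reviewer",
    description="Reviews diffs for correctness and security issues",
    tools=["Read", "Grep", "Glob"],
    prompt="You are a careful code reviewer. Report findings; do not edit files.",
)

# Assumed project-level location for agent definitions; adjust to your setup.
target = Path(".claude/agents/code-reviewer.md")
print(f"Would write {len(reviewer)} characters to {target}")
print(reviewer)
```

The sketch only prints the file contents rather than writing them, so you can check the result against the official documentation before committing anything to a repo.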

One important constraint: subagents cannot spawn other subagents. They report results back to the main agent, not to each other. This is fine for most tasks — but it creates a bottleneck in complex parallel workflows.

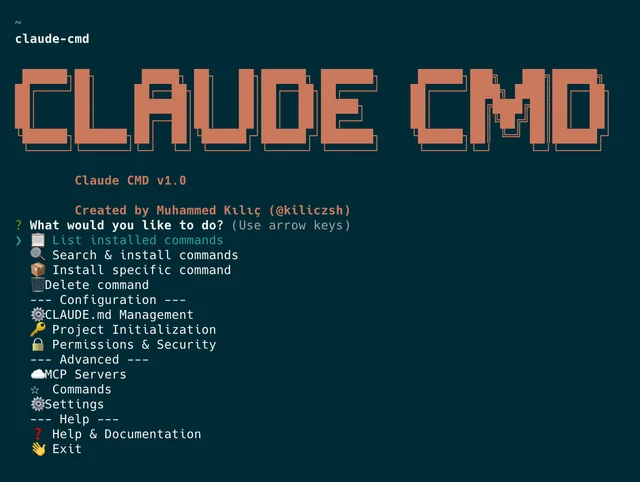

Where it runs (terminal, VS Code, JetBrains, browser)

Claude Code runs natively in the terminal (claude CLI), and integrates with VS Code and JetBrains. There's also a browser interface. This flexibility is a genuine advantage — most of my workflow already lives in the terminal, and Claude Code slots right in.

Multi-agent subagent model — how it works in practice

Since the Opus 4.6 release in February 2026, Claude Code ships with an experimental feature called Agent Teams: one session acts as team lead, coordinates via a shared task list, and spawns teammates that run in their own context windows. Critically, Agent Teams teammates can message each other directly — unlike subagents, which can only report back to the main agent.

But here's the catch: Agent Teams is still experimental, disabled by default, and has documented reliability issues for complex coordination. To enable it, add this to `settings.json`:

```json
{
  "env": {
    "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
  }
}
```

For most production workflows, you're still working with subagents, which means sequential task reporting, not true parallel execution.
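If you already have a settings file, you probably want to merge the flag in rather than overwrite the file. A minimal sketch of that merge, operating on an in-memory dict; the existing keys shown (`EXAMPLE_VAR`, `otherSetting`) are made up for illustration.

```python
import json


def enable_agent_teams(settings: dict) -> dict:
    """Return a copy of settings with the Agent Teams flag set, keeping other keys."""
    updated = dict(settings)
    env = dict(updated.get("env", {}))  # copy so the caller's dict is not mutated
    env["CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"] = "1"
    updated["env"] = env
    return updated


# Hypothetical prior contents of a settings file.
existing = {"env": {"EXAMPLE_VAR": "value"}, "otherSetting": True}
print(json.dumps(enable_agent_teams(existing), indent=2))
```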

What Is Verdent?

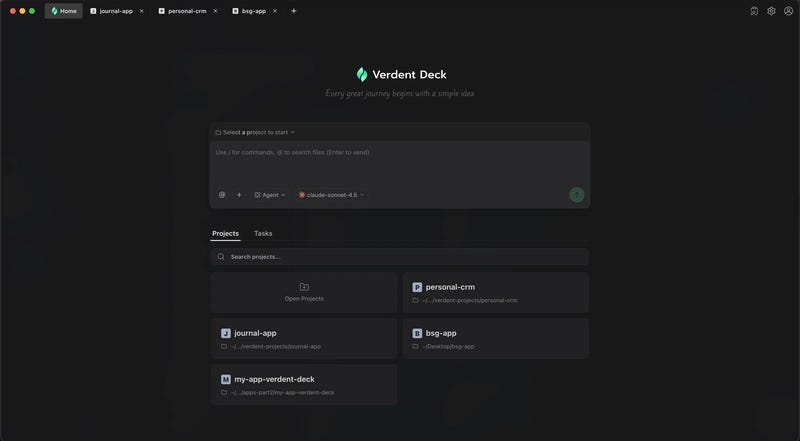

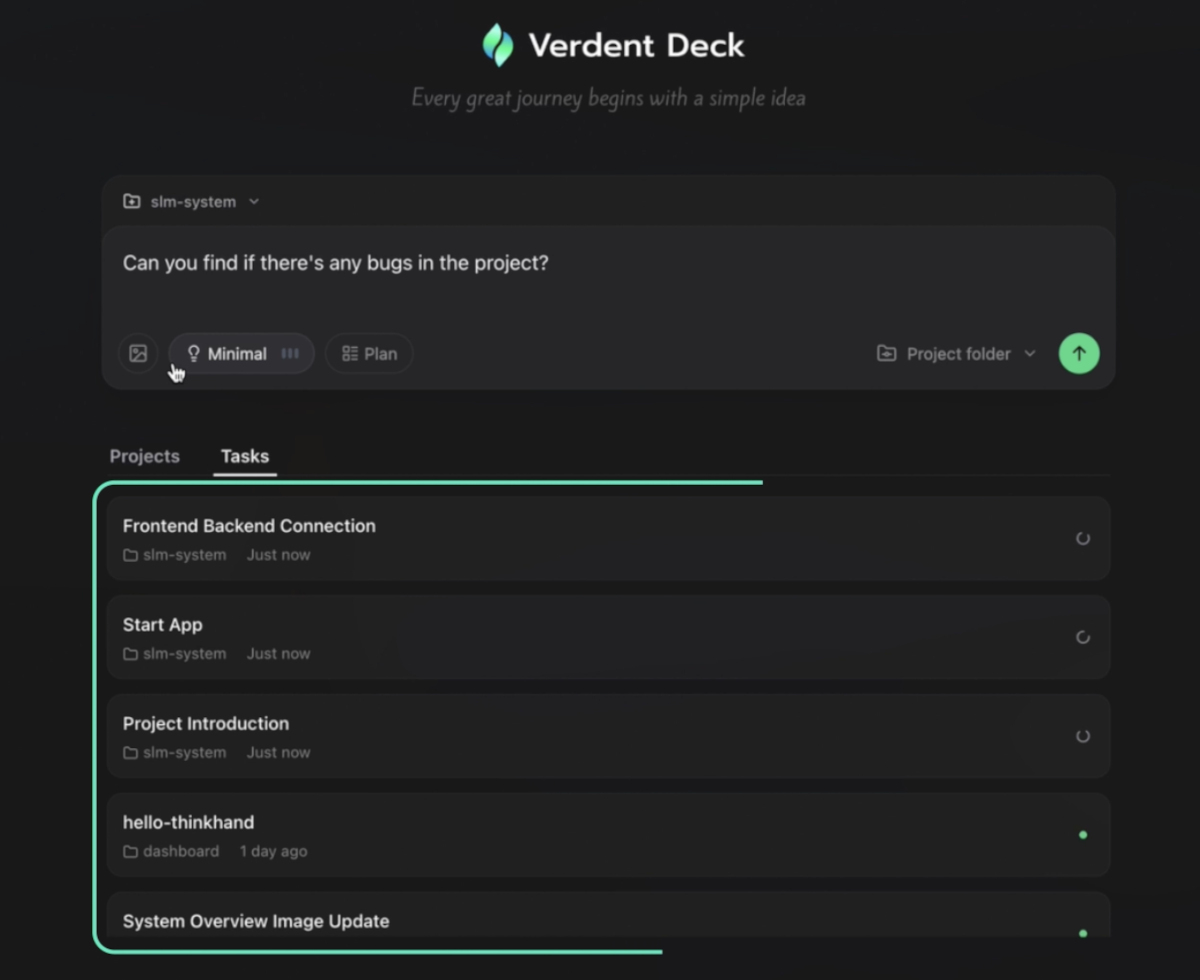

Multi-agent parallel execution and Verdent Deck

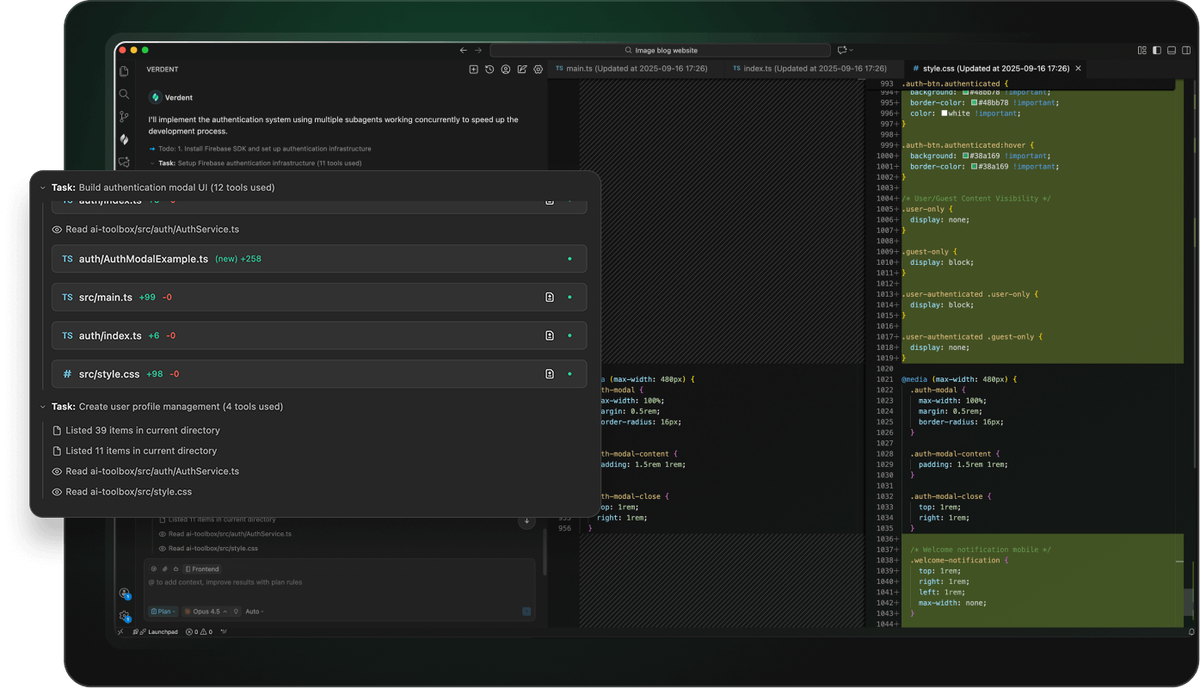

Verdent's core thesis is different from Claude Code's. It doesn't extend a conversation model into agentic territory — it was architected from day one around multi-agent parallel execution. Multiple agents run simultaneously in isolated Git worktrees, handling different parts of a task or codebase concurrently.

Verdent Deck is the macOS desktop app (Windows support coming) that gives you a live dashboard across all running agents. You can monitor progress, review diffs, and intervene without losing context. Credits are shared between Verdent Deck and the VS Code extension on the same account.

Plan Mode and Git Worktree isolation

The workflow Verdent enforces is: plan first, then execute. When you submit a vague requirement like "refactor the auth service to support OAuth2," Verdent's Plan Mode doesn't immediately start generating code. It asks clarifying questions, produces a requirements doc, and breaks work into verifiable subtasks before any agent touches a file.

Git Worktree isolation is the safety net. Each agent operates in its own isolated worktree — so a failing agent can't pollute another agent's work or your main branch. This is automatic, not something you configure manually.
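For comparison, this is roughly what per-agent isolation looks like if you set it up by hand with plain `git worktree`. It is a sketch of the manual equivalent, not Verdent's implementation; the `agent/<id>` branch naming and the `../worktrees` directory are conventions I made up for the example.

```python
import subprocess
from pathlib import Path


def worktree_command(worktrees_root: Path, agent_id: str, base: str = "HEAD") -> list[str]:
    """Build a `git worktree add` command giving one agent its own branch and directory."""
    branch = f"agent/{agent_id}"       # hypothetical naming convention
    path = worktrees_root / agent_id   # one checkout directory per agent
    return ["git", "worktree", "add", "-b", branch, str(path), base]


def create_worktree(repo: Path, worktrees_root: Path, agent_id: str) -> None:
    """Run the command inside the repository; raises CalledProcessError if git fails."""
    subprocess.run(worktree_command(worktrees_root, agent_id), cwd=repo, check=True)


# Print the commands instead of running them, so the sketch is safe to execute anywhere.
for agent in ("auth", "billing"):
    print(" ".join(worktree_command(Path("../worktrees"), agent)))
```

Each worktree gets its own branch and working directory, so one agent's uncommitted changes never appear in another agent's checkout or in your main working tree.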

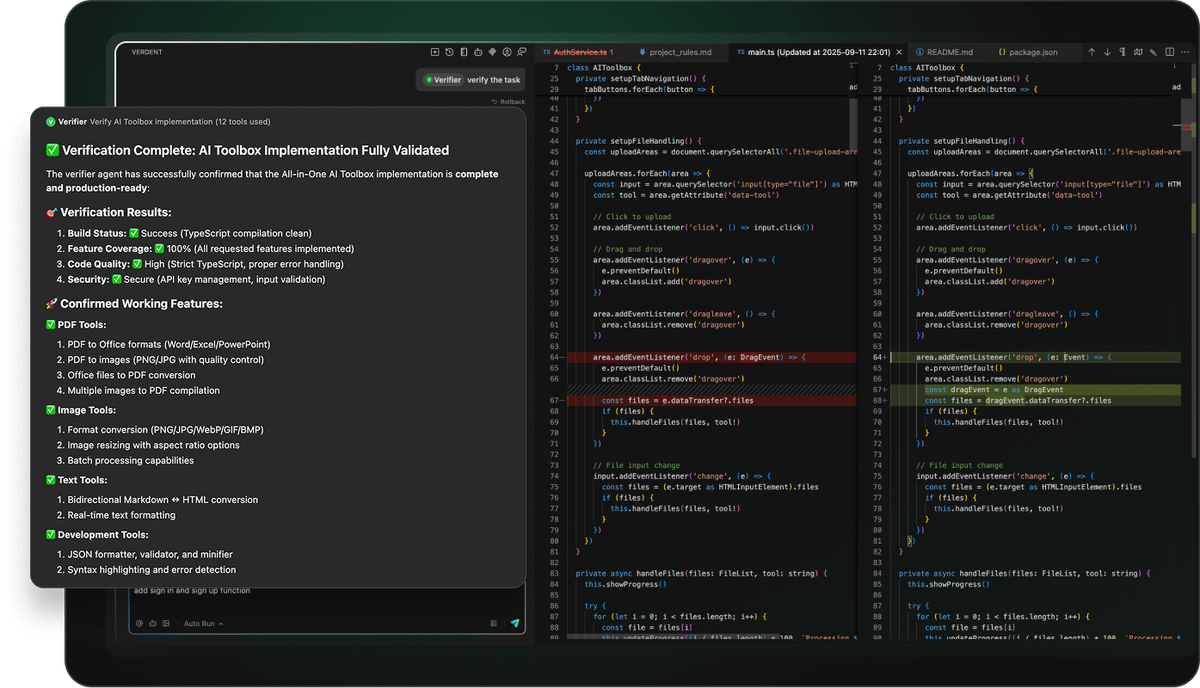

The Code Verification Loop closes the quality gap: agents run generate→test→fix cycles internally, and only surface results that pass. In my testing on a mid-complexity API refactor, this caught two regressions before I ever saw a diff.
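The general shape of a generate→test→fix loop can be sketched in a few lines. This is a generic bounded-retry pattern with placeholder callables, not Verdent's actual code; the toy `generate`, `tests`, and `fix` functions stand in for model calls and a real test runner.

```python
from typing import Callable, Optional


def verify_loop(
    generate: Callable[[], str],
    run_tests: Callable[[str], list[str]],
    fix: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> Optional[str]:
    """Return the first candidate whose tests pass, or None if the budget runs out."""
    candidate = generate()
    for _ in range(max_rounds):
        failures = run_tests(candidate)
        if not failures:
            return candidate  # only passing results are surfaced
        candidate = fix(candidate, failures)
    return None  # nothing verified within the round budget


# Toy stand-ins: the first draft has a bug that the fixer repairs.
def toy_generate() -> str:
    return "def add(a, b): return a - b"


def toy_tests(code: str) -> list[str]:
    return [] if "a + b" in code else ["add(1, 2) should be 3"]


def toy_fix(code: str, failures: list[str]) -> str:
    return code.replace("a - b", "a + b")


print(verify_loop(toy_generate, toy_tests, toy_fix))
```

The `max_rounds` cap matters in practice: without it, a task the agent cannot solve would keep consuming credits indefinitely.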

Pricing tiers (Starter / Pro / Max)

Verdent uses a credit-based model. As of March 2026, with the current 2× credit bonus active:

| Plan | Price | Credits/month | Frontier model requests |
|---|---|---|---|
| Free trial | $0 | 100 (7 days) | Limited |
| Starter | $19/mo | 640 | Up to 1,000 |
| Pro | $59/mo | 2,000 | Up to 3,000 |
| Max | $179/mo | 6,000 | Up to 10,000 |

Top-up credits are available (240 credits for $20, never expire). Per Verdent's official pricing docs, credits are shared across Verdent Deck and the VS Code extension, and all plans include Claude Sonnet 4.5, GPT-5, and GPT-5-Codex. The credit counts above include the 2× bonus, so if the bonus ends, the same price buys half as many credits and the effective cost per credit doubles. Take that into account when planning long-term budgets.
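A quick way to compare tiers is cost per credit. The sketch below uses the prices and credit counts quoted above and assumes, since the source does not spell it out, that the 2× bonus exactly doubles a base allotment.

```python
# Price in USD and monthly credits with the 2x bonus applied, as quoted above.
PLANS = {
    "Starter": (19, 640),
    "Pro": (59, 2000),
    "Max": (179, 6000),
}
TOP_UP = (20, 240)  # one-off top-up pack


def usd_per_credit(price: float, credits: int) -> float:
    return price / credits


for name, (price, credits) in PLANS.items():
    with_bonus = usd_per_credit(price, credits)
    # Assumption: without the bonus, each plan would grant half as many credits.
    without_bonus = usd_per_credit(price, credits // 2)
    print(f"{name}: ${with_bonus:.4f}/credit now, ${without_bonus:.4f}/credit without the bonus")

print(f"Top-up: ${usd_per_credit(*TOP_UP):.4f}/credit")
```

Under these numbers every tier lands at roughly three cents per credit, while top-ups cost roughly eight cents per credit, so the larger plans buy volume rather than a better rate.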

Head-to-Head: Multi-Agent Execution

How Claude Code spawns subagents vs Verdent's parallel agent model

Claude Code's subagents are sequential by default. The main agent delegates a task, the subagent runs it, returns results, and the main agent processes before delegating the next task. Agent Teams breaks this — teammates run in parallel and communicate directly — but it requires Opus 4.6 (Max plan minimum) and is experimental.

Verdent's parallel execution is the default mode, not an experimental flag. From your first task, agents run concurrently in isolated worktrees. You don't need to configure anything or unlock a feature tier.
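The practical difference between delegating one task at a time and fanning out is easiest to see with a toy timing comparison. In this sketch `run_agent` is a stand-in that just sleeps; no real agent or model is involved, and the service names are invented.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_agent(task: str) -> str:
    """Stand-in for an agent working on one task; sleeps instead of calling a model."""
    time.sleep(0.1)
    return f"{task}: done"


tasks = ["auth-service", "billing-service", "notification-service"]

# Sequential delegation: each task waits for the previous one to report back.
start = time.perf_counter()
sequential = [run_agent(t) for t in tasks]
sequential_s = time.perf_counter() - start

# Parallel execution: all tasks run at once, results come back in input order.
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
    parallel = list(pool.map(run_agent, tasks))
parallel_s = time.perf_counter() - start

print(f"sequential: {sequential_s:.2f}s, parallel: {parallel_s:.2f}s")
```

The results are identical either way; only the wall-clock time changes, which is why parallelism pays off mainly when tasks are independent enough not to need each other's output.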

Task decomposition and coordination differences

Here's the real-world difference I've observed:

Claude Code excels at architectural reasoning within a single context window. Give it a complex, interconnected problem and it thinks through it deeply. The subagent model works well when tasks are clearly sequential — explore, then implement, then review.

Verdent's Plan Mode excels at decomposing vague or broad requirements into parallel workstreams. When requirements are fuzzy (which is most of real work), the planning step prevents agents from running in wrong directions and wasting credits.

Where each approach breaks down

Claude Code failure mode: Context window saturation and the cost that comes with it. On very large codebases, filling even Opus 4.6's 1M-token window gets expensive fast on pay-as-you-go API billing: independent cost analyses show heavy API use can exceed $3,650/month, versus $200 on the Max 20× subscription. And Agent Teams' coordination overhead grows non-linearly past 3–4 teammates.

Verdent failure mode: Credit unpredictability on complex tasks. Plan Mode is smart but credits burn faster on premium models, and the credit consumption per task isn't always predictable upfront. Also, Verdent Deck is currently Mac-only, which is a hard blocker for Windows-first teams.
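One way to see why coordination overhead for Agent Teams would grow non-linearly is to count possible communication channels. This is a toy model of my own, not a measurement of either tool: subagents that only report to a lead form a hub-and-spoke, while teammates that can all message each other form a full mesh.

```python
def hub_and_spoke_channels(workers: int) -> int:
    """Each worker talks only to the lead."""
    return workers


def full_mesh_channels(workers: int) -> int:
    """Every worker can talk to the lead and to every other worker."""
    return workers + workers * (workers - 1) // 2


print("workers  hub-and-spoke  full-mesh")
for n in range(1, 7):
    print(f"{n:>7}  {hub_and_spoke_channels(n):>13}  {full_mesh_channels(n):>9}")
```

Hub-and-spoke grows linearly with team size, while the mesh grows quadratically, which is consistent with overhead becoming noticeable once a team passes a handful of members.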

Project-Level Context and Code Safety

Claude Code's codebase understanding

This is Claude Code's strongest suit. With up to 1M tokens of context (Opus 4.6, beta), it can load entire codebases and reason across them holistically. In my testing on a 12K-line TypeScript monorepo, Claude Code surfaced cross-file dependency issues that other tools missed entirely.

Anthropic's published case study with Rakuten tells the story clearly: a machine learning engineer gave Claude Code a single task, implementing an activation vector extraction method inside vLLM, a library with 12.5 million lines of code across multiple languages. Claude Code completed the job in 7 hours of autonomous work, achieving 99.9% numerical accuracy against the reference implementation. The same team reported cutting average time-to-market from 24 working days to 5 days, a 79% reduction.
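It helps to sanity-check what "fits in context" means for the two codebase sizes mentioned here. The estimate below assumes about 10 tokens per line of code and reserves 20% of the window for the conversation itself; both numbers are rough assumptions of mine, and real ratios vary by language and style.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # Opus 4.6 beta window quoted in the article
TOKENS_PER_LINE = 10               # assumption: rough average for source code


def estimated_tokens(lines_of_code: int) -> int:
    return lines_of_code * TOKENS_PER_LINE


def fits_in_context(lines_of_code: int, reserve_fraction: float = 0.2) -> bool:
    """True if the estimate fits after reserving room for prompts and responses."""
    budget = CONTEXT_WINDOW_TOKENS * (1 - reserve_fraction)
    return estimated_tokens(lines_of_code) <= budget


for label, lines in [("12K-line monorepo", 12_000), ("12.5M-line library", 12_500_000)]:
    verdict = "fits" if fits_in_context(lines) else "does not fit"
    print(f"{label}: ~{estimated_tokens(lines):,} tokens, {verdict}")
```

Under these assumptions the 12K-line monorepo fits comfortably, while a 12.5-million-line library is far beyond any single window, so work at that scale depends on the agent navigating and reading selectively rather than loading everything at once.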

Verdent's Git Worktree isolation and verification loop

Verdent's safety model is structural, not probabilistic. Git Worktree isolation means an agent can't accidentally modify your main branch or conflict with another agent's concurrent changes. It's the same isolation model that experienced engineers enforce manually — Verdent just makes it automatic.

The Code Verification Loop adds a second layer: agents self-test and iterate before surfacing results. Combined, these two features address the two most common complaints about AI-generated code in production — broken main branches and quality roulette.

Pricing Compared

Claude Code subscription + token costs

As of March 2026, per Anthropic's official pricing and the Claude Code cost management docs:

| Plan | Monthly cost | Claude Code access | Notes |
|---|---|---|---|
| Pro | $20/mo | ✅ Yes | Sonnet 4.6, 5× Free capacity |
| Max 5× | $100/mo | ✅ Full | Opus 4.6, 25× Free capacity |
| Max 20× | $200/mo | ✅ Full | Opus 4.6, zero-latency priority |
| Team Premium | $150/mo per seat | ✅ Yes | Min. 5 users |

For API users, Opus 4.6 runs $5/M input, $25/M output tokens. Heavy coding sessions can push costs to $3,650/month via API — the Max subscription at $200 is typically ~18× cheaper for heavy daily use. Use prompt caching aggressively; the Batch API saves 50% for non-real-time workflows.
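To see how per-token pricing turns into a monthly bill, here is a small calculator using the $5/$25 per million rates quoted above. The token volumes are hypothetical, chosen only because they reproduce the $3,650 figure; the source does not say what input/output mix that number came from.

```python
INPUT_USD_PER_M = 5.0    # Opus 4.6 input price per million tokens, as quoted above
OUTPUT_USD_PER_M = 25.0  # Opus 4.6 output price per million tokens, as quoted above
MAX_20X_USD = 200.0


def api_cost(input_tokens: int, output_tokens: int) -> float:
    """Pay-as-you-go cost in USD, ignoring caching and batch discounts."""
    return (input_tokens / 1e6) * INPUT_USD_PER_M + (output_tokens / 1e6) * OUTPUT_USD_PER_M


# Hypothetical heavy month: 500M input tokens and 46M output tokens.
monthly = api_cost(500_000_000, 46_000_000)
print(f"API: ${monthly:,.0f}/month, {monthly / MAX_20X_USD:.2f}x the Max 20x subscription")
```

Agentic sessions re-read files constantly, so input tokens tend to dominate volume; that is also why prompt caching has such a large effect on the final number.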

Verdent credit-based tiers

Verdent's credit model is predictable on a monthly basis but variable per task. Premium model tasks burn credits faster. The 2× bonus currently active makes Pro ($59/mo for 2,000 credits) the best cost-performance ratio for most professional workflows.

Cost-per-task estimate for a mid-complexity project

Let's define "mid-complexity": a 3-service API refactor with new test coverage, roughly 800–1,200 lines of code changed.

Claude Code (Pro, $20/mo): Feasible for moderate sessions. Heavy multi-file work will hit rate limits; upgrade to Max 5× ($100/mo) for uninterrupted work at this scale. Per Anthropic's official Claude Code cost documentation, the average developer spends about $6/day in API-equivalent tokens, and 90% stay under $12/day, which works out to roughly $100–200/month on Sonnet 4.6. On pay-as-you-go billing, a developer at that 90th-percentile rate is already at about $360/month ($12 × 30 days), and heavy daily Opus 4.6 sessions without prompt caching easily exceed that, making the Max 20× subscription at $200 the better deal for that usage profile.

Verdent (Pro, $59/mo): Per independent testing, Pro handles 80–120 outputs/month with 98% completion rate. A mid-complexity refactor lands at 15–25 credit-tasks depending on plan depth. At 2,000 credits/month, you have comfortable headroom for 5–8 projects this size.

Bottom line: For light-to-medium solo work, Claude Code Pro at $20/mo wins on pure price. For teams or parallel multi-project workflows, Verdent Pro at $59/mo or Max at $179/mo is more cost-effective per delivered feature.
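The per-day figures quoted for Claude Code can be projected to a month and lined up against the subscription prices. This sketch assumes 30 usage days per month and compares on price alone; it says nothing about whether a given plan's rate limits would actually sustain that usage.

```python
SUBSCRIPTIONS = {"Pro": 20, "Max 5x": 100, "Max 20x": 200}  # USD per month, from the table above


def monthly_api_equivalent(usd_per_day: float, days: int = 30) -> float:
    """Project a daily API-equivalent spend to a month (assumes usage every day)."""
    return usd_per_day * days


for label, per_day in [("average", 6), ("90th percentile", 12)]:
    monthly = monthly_api_equivalent(per_day)
    cheaper = [name for name, price in SUBSCRIPTIONS.items() if price < monthly]
    print(f"{label}: ${monthly:.0f}/month API-equivalent; cheaper plans: {', '.join(cheaper)}")
```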

Who Should Use Claude Code vs Verdent?

Use Claude Code if…

- You live in the terminal and want minimal workflow disruption

- You need deep, holistic reasoning across a large, interconnected codebase

- You're on a tight budget and primarily do single-stream tasks

- You want the flexibility of API access for CI/CD pipelines and automation

- You're comfortable with experimental features and want to push multi-agent boundaries yourself

Use Verdent if…

- You're working on multi-module, long-horizon projects where parallel execution saves real time

- You want an enforced plan-before-code workflow that catches requirement ambiguity early

- Code safety matters — you need Git isolation and verification by default, not by discipline

- You prefer a visual dashboard (Verdent Deck) to monitor and manage concurrent agents

- You're on a Mac (Verdent Deck) and running multiple projects simultaneously

Decision checklist for teams

- Is your project split into clearly independent modules? → Verdent's parallel model pays off

- Do you need terminal-native CI/CD integration? → Claude Code wins

- Is your team on Mac (M-series)? → Verdent Deck is available

- Do you work on Windows-first? → Claude Code until Verdent ships Windows support

- Are you budget-constrained and doing solo work? → Claude Code Pro at $20/mo

- Do you need automatic code safety without manual worktree setup? → Verdent
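If you want to run the checklist above across several teams, it can be encoded as a small function. The equal weighting of answers and the treatment of Windows as a hard blocker are my simplifications, not a scoring method from either vendor.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    independent_modules: bool
    needs_terminal_ci: bool
    windows_first: bool
    solo_on_budget: bool
    wants_automatic_isolation: bool


def recommend(profile: TeamProfile) -> str:
    """Tally the checklist answers; the Windows blocker is checked first."""
    if profile.windows_first:
        return "Claude Code"  # Verdent Deck is Mac-only at the time of writing
    claude = sum([profile.needs_terminal_ci, profile.solo_on_budget])
    verdent = sum([profile.independent_modules, profile.wants_automatic_isolation])
    if claude == verdent:
        return "Try both on the same task"
    return "Claude Code" if claude > verdent else "Verdent"


# Hypothetical team: modular codebase, Mac-based, wants isolation by default.
team = TeamProfile(
    independent_modules=True,
    needs_terminal_ci=False,
    windows_first=False,
    solo_on_budget=False,
    wants_automatic_isolation=True,
)
print(recommend(team))
```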

FAQ

Is Claude Code better than Verdent for large codebases?

For reading and reasoning across large codebases, Claude Code's 1M token context window (Opus 4.6, beta) is currently unmatched. It can hold entire large repos in context. Verdent handles large projects through decomposition and isolation rather than raw context size. If you need whole-codebase reasoning in one pass, Claude Code has the edge. If you need multiple agents safely making changes in parallel, Verdent is the better fit.

Does Verdent support terminal-based workflows like Claude Code?

No. Verdent works through its IDE extensions and the Verdent Deck desktop app. If your workflow is terminal-centric or you need to integrate AI coding into CLI pipelines and scripts, Claude Code is the right choice.

Which tool has better multi-agent parallelism?

Verdent, by a clear margin for production use. Verdent's parallel execution is a core, stable feature. Claude Code's Agent Teams is experimental, requires Opus 4.6 (Max plan), and must be manually enabled. For teams that need reliable parallel agent coordination today, Verdent is ahead.

Can I use both in the same workflow?

Yes — and honestly, there's a case for it. Use Claude Code for deep codebase analysis and architectural reasoning (its 1M context window is genuinely powerful for this), then hand off implementation to Verdent's parallel agents for safe, verified execution. The tools don't conflict, and they complement each other's weak points.

How do their code verification approaches compare?

Claude Code relies on you to run tests and catch failures — it'll iterate if you tell it something broke, but there's no automatic test-fix loop built in. Verdent's Code Verification Loop runs automatically: agents generate, test, and fix in cycles before surfacing results. If production code quality is a hard requirement, Verdent's approach is more systematic and requires less developer babysitting.

The Bottom Line

Neither tool is universally better. Claude Code is the sharper instrument for reasoning through complex, interconnected code with massive context. Verdent is the more disciplined system for parallel execution with safety guarantees baked in.

The question isn't which one is smarter — it's which one fits your actual workflow. For most senior engineers I'd recommend: start with Claude Code Pro at $20/mo to get comfortable with agentic workflows, then add Verdent when you're regularly managing multi-stream, multi-module work where code safety and planning discipline pay dividends.

Both have free trials. Run the same real task through both. That afternoon I spent doing exactly that was more informative than any benchmark.

Further Reading

- [Multi-agent coding tools in 2026](https://www.verdent.ai/guides/multi-agent-coding-tools)
- [Claude Code in real terminal workflows](https://www.verdent.ai/guides/claude-code-bridge-terminal-ai-agents)
- [Verdent subagents and execution model](https://www.verdent.ai/guides/verdent-subagents-tutorial-2026)
- [Claude Skills vs MCP agents](https://www.verdent.ai/guides/claude-skills-vs-mcp-agents-comparison)
- [Best AI coding assistants in 2026](https://www.verdent.ai/guides/best-ai-coding-assistant-2026)