Moonshot AI released Kimi K2.6 on April 20, 2026: 1 trillion parameters, 32B active, open-weight, native multimodal, four variants from quick chat to 300-agent parallel swarms. If you run multi-step coding agents or are evaluating open-weight alternatives to Claude and GPT-5.4, this one is worth understanding. If you don't, it isn't urgent.

What follows covers the architecture, the four variants, what actually changed from K2.5, the license terms, and the honest limits.

What Kimi K2.6 Is in One Paragraph

Kimi K2.6 is a 1-trillion-parameter Mixture-of-Experts model from Beijing-based Moonshot AI, released open-weight under a Modified MIT License. It activates 32 billion parameters per token during inference, supports a 262,144-token context window, and ships natively in INT4 quantization. The model handles text, images, and video in the same architecture without separate vision modules. Four variants cover different use cases: Instant for speed, Thinking for deep reasoning, Agent for autonomous research and document tasks, and Agent Swarm for large-scale parallel work. The weights are on Hugging Face and the API is at platform.moonshot.ai.

Architecture at a Glance

1T MoE, 32B active, 384 experts

K2.6 inherits the same core architecture as K2 and K2.5: a sparse Mixture-of-Experts design with 384 total experts, of which 8 are routed per token plus 1 shared expert. This gives it the parameter breadth of a 1T-parameter model at the inference cost of roughly a 32B dense model. The architecture uses the Muon optimizer (MuonClip), which Moonshot developed originally for K2 to stabilize training at trillion-parameter scale — MoE models are prone to attention explosions and loss spikes at that size, and MuonClip was built to prevent them.

SwiGLU (Swish-Gated Linear Unit) serves as the activation function — more hardware-efficient than earlier alternatives and used across several other major open-weight families including Meta's Llama series.

256K context, MLA, INT4

The practical context window is 262,144 tokens. Moonshot's stated improvement over K2.5 is not the size — K2.5 already had 256K — but stability at that length. Long-horizon coding tasks tend to degrade as context fills; K2.6 targets that degradation specifically.

| Capability | K2.5 | K2.6 |

|---|---|---|

| Max parallel sub-agents | 100 | 300 |

| Max tool call steps | 1,500 | 4,000 |

| Max autonomous run length | Not specified | 12+ hours |

| Video input | No | Yes |

| Architecture | Same K2 MoE base | Same K2 MoE base |

Multi-head Latent Attention (MLA) reduces the memory footprint of the KV cache compared to standard multi-head attention, which matters at scale for long contexts and multi-agent setups.

The INT4 quantization is native: Moonshot used Quantization-Aware Training (QAT) during the post-training phase, meaning the model learned representations compatible with 4-bit weights rather than being compressed afterward. The practical result is roughly 2x inference speed and 50% less GPU memory versus FP16, with Moonshot claiming negligible quality loss. The INT4 weights come in at approximately 594GB on Hugging Face.

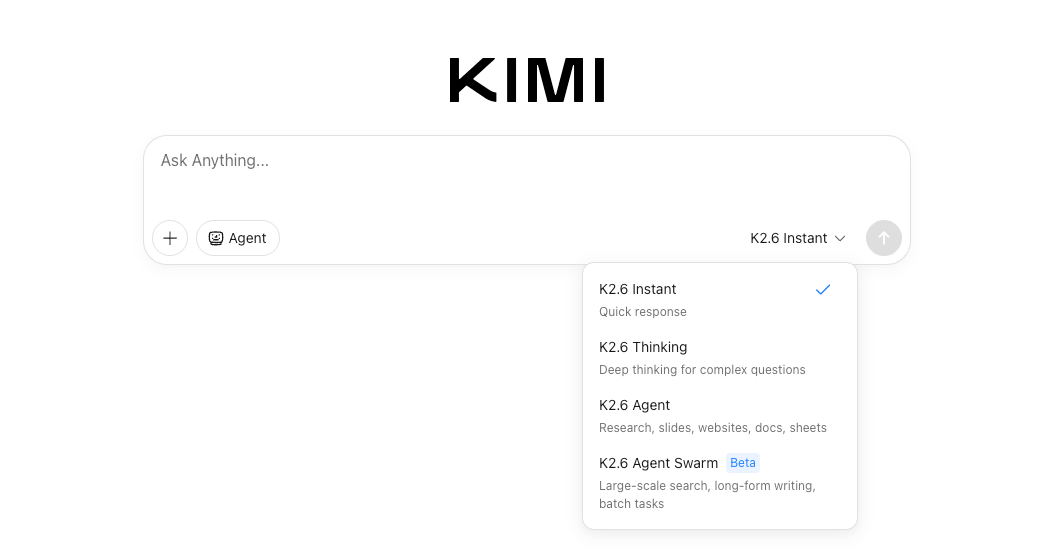

The Four Variants and When Each One Fits

Moonshot ships K2.6 as four variants accessible from kimi.com and the API. They share the same model weights but differ in decoding configuration, tool permissions, and how the thinking budget is allocated.

K2.6 Instant

Fast responses without a reasoning trace. Temperature runs lower, top-p is tighter, and the model skips the chain-of-thought phase entirely. The practical use case is quick lookups, short code completions, and anything where latency matters more than depth. If you're building an autocomplete or a simple Q&A surface, this is the right entry point.

K2.6 Thinking

Full reasoning mode. The model interleaves chain-of-thought with tool calls, allocating a compute budget to the reasoning trace before producing output. This is the variant that produces K2.6's benchmark scores — the Hugging Face model card notes that all K2.6 evaluations were conducted with thinking mode enabled. Use it for complex debugging, architecture decisions, multi-file code analysis, or anything where you need the model to reason before acting.

K2.6 Agent

Autonomous task execution with full tool access: web search, code interpreter, file operations, and browser. The intended use cases are research, document generation (slides, reports, spreadsheets), website creation from a prompt, and multi-step workflows where you give the model a goal rather than a task. This is closer to "run this project" than "answer this question."

K2.6 Agent Swarm

The most operationally distinct variant. Agent Swarm scales horizontally to 300 parallel sub-agents, each capable of up to 4,000 coordinated steps, with the full run able to extend beyond 12 hours. A complex prompt gets decomposed into parallel, domain-specialized subtasks — research, analysis, coding, design — each handled by a dynamically instantiated agent, with results integrated by the lead orchestrator.

Agent Swarm is not a general-purpose upgrade over Agent. It's a different operational model: you give it a large, decomposable goal and let it run. The overhead of spinning up and coordinating 300 sub-agents is not worth it for tasks a single agent can handle in minutes. It's designed for batch tasks, long-form output, or large-scale search that would take a single agent hours sequentially.

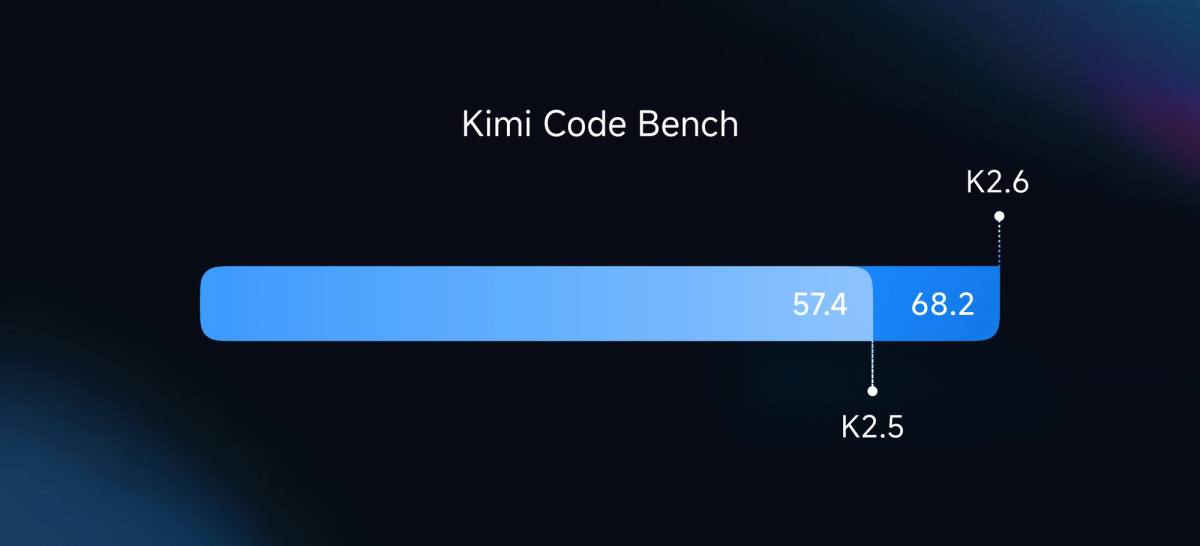

What's Actually New vs K2.5

300 sub-agents, 12-hour execution, native multimodal

K2.5, released in January 2026, introduced Agent Swarm as a concept: self-directed parallel agents coordinating toward a shared goal. K2.6 expands the ceiling significantly.

The architecture is unchanged — K2.6's deployment guide on Hugging Face explicitly states "Kimi-K2.6 has the same architecture as Kimi-K2.5, and the deployment method can be directly reused." The difference is in posttraining: more training compute applied to long-horizon stability, instruction following, and swarm coordination. Moonshot did not disclose exactly how much additional training was done for K2.6.

Native video input is the other notable addition. K2.5 handled images; K2.6 adds video (mp4, mov, avi, webm, and others, recommended up to 2K resolution). The vision encoder is native to the model's pretraining rather than a bolt-on module.

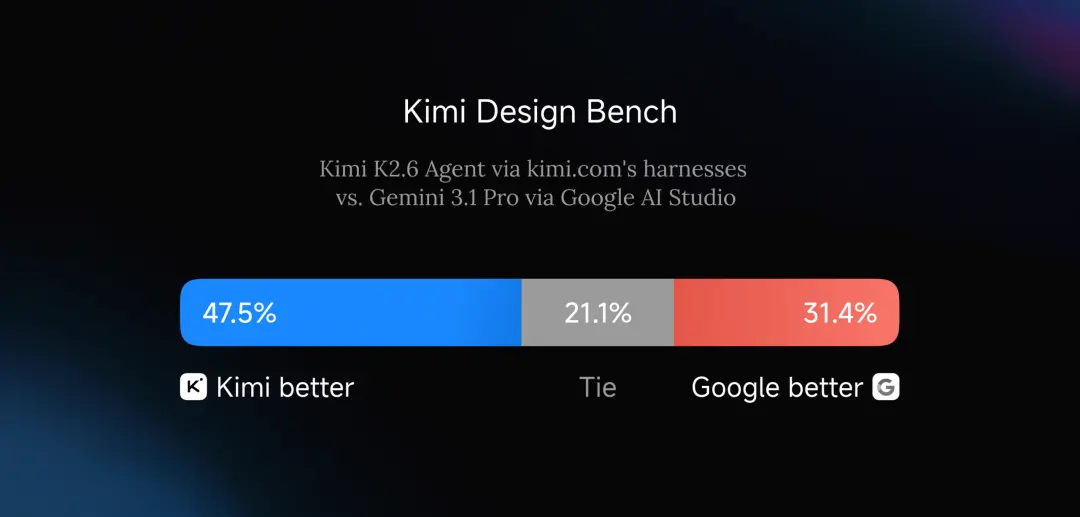

Frontend animation and WebGL shader generation are also highlighted in Moonshot's launch materials — the model can produce video hero sections, GLSL/WGSL shader animations, and GSAP-based motion design from text prompts. This is a specific capability addition to K2.5's general frontend coding ability.

Who Should Pay Attention

Senior devs, tech leads, teams running long-horizon agents

K2.6 is not trying to be a better chatbot. Its differentiation is narrow and deliberate: it's a model for developers and teams who are already running or planning to run AI agents on tasks that take time — code migrations, large refactors, multi-step research, infrastructure automation. If your workflow involves an agent calling tools 50 times to complete a task, K2.6 is relevant. If you're asking a model to explain a function, it's not.

The open-weight release matters for two specific audiences. Teams with data sovereignty requirements — regulated industries, security-sensitive codebases — can run K2.6 on their own infrastructure. Teams doing high-volume inference can self-host and avoid per-token API pricing for sustained workloads. For everyone else, the API on platform.moonshot.ai covers the same capabilities without the operational complexity.

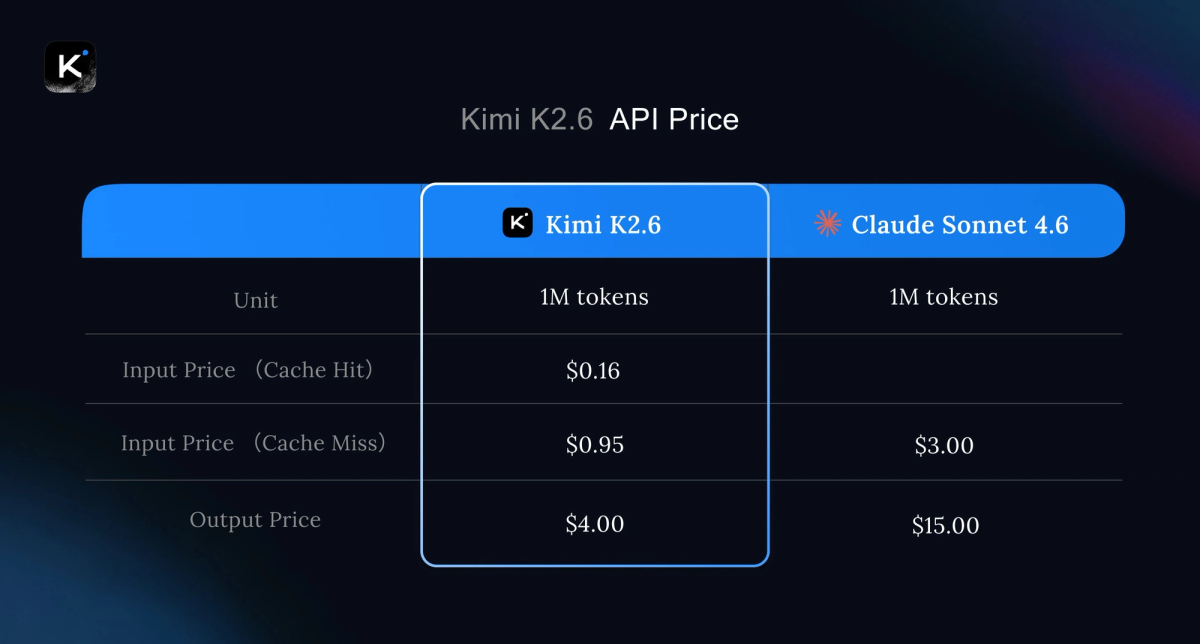

The pricing on the Moonshot API is meaningfully lower than Claude Opus 4.6 and GPT-5.4 for comparable tasks. For agent workflows that burn thousands of tool calls per run, that difference compounds. Whether the quality tradeoff is acceptable for your use case requires testing against your actual workloads — not benchmarks.

Known Limits and Open Questions

Hardware requirements are substantial. Running K2.6 locally at full quality requires 8× H100 or H200 GPUs for production. The INT4 variant can run on 4× H100 with reduced context length. Community experiments show the model running on consumer hardware (dual Mac Studios with 512GB RAM each) at very low throughput — roughly 1-7 tokens per second depending on configuration, which is impractical for most real workflows. Cloud APIs are the realistic deployment path for most teams.

Benchmark numbers are self-reported at launch. The Hugging Face model card notes that benchmarks without publicly available scores were re-evaluated by Moonshot under the same conditions used for K2.6 and are marked with an asterisk. Independent third-party validation of K2.6's benchmark claims will take weeks. The long-horizon agent claims (12-hour runs, 4,000 tool calls) are particularly difficult to replicate quickly at scale.

Geopolitical context. Moonshot AI is a Chinese company, and K2.6's launch arrives during ongoing scrutiny of Chinese AI firms in the US market. The US House is considering legislation that could affect Chinese AI companies operating internationally. For teams with compliance requirements, the vendor jurisdiction is a relevant factor alongside technical capability.

Claw Groups is a preview feature. The "Claw Groups" functionality — which enables human-machine collaboration where an autonomous run can loop in human workers for specific subtasks — is listed as a research preview at launch, not a generally available capability.

FAQ

Is Kimi K2.6 free?

The weights are free to download and use under the Modified MIT License. The kimi.com chat interface is free with usage limits (Moonshot offers subscription tiers named Moderato, Allegretto, and Vivace). API access via platform.moonshot.ai is paid per token. There are no per-seat fees for the open-weight model itself.

Where can I run Kimi K2.6 locally?

The weights are on Hugging Face (moonshotai/Kimi-K2.6). Three inference engines officially support K2.6: vLLM, SGLang, and KTransformers (Moonshot's own engine built for the K2 architecture). The deployment guide on the model card recommends vLLM 0.19.1 for stable production use. Minimum viable hardware for practical use is 4× H100 with INT4 quantization at reduced context length. The architecture is identical to K2.5, so existing K2.5 deployment configurations transfer directly — swap the model weights.

How does the Modified MIT License work for commercial use?

The license is standard MIT with one modification: if you deploy K2.6 (or a derivative) in a commercial product or service that exceeds 100 million monthly active users, or that generates more than $20 million USD in monthly revenue, you must prominently display "Kimi K2" on the user interface of that product. Below those thresholds, the license functions as standard MIT — use commercially, modify, redistribute, no royalties. The thresholds affect a small fraction of potential users. Most teams, including well-funded startups, sit well below both limits.

Is Kimi K2.7 coming soon?

There is no official announcement of K2.7. The K2 series has moved quickly — five significant releases between July 2025 and April 2026 — but Moonshot has not disclosed a roadmap. There has been community discussion on Reddit's r/LocalLLaMA about Moonshot working on a model tentatively referred to as Kimi K3, with speculation about a parameter count in the 3–4 trillion range. These are unverified community rumors, not official information. Treat them as speculation until Moonshot confirms anything.

How does K2.6 compare to Claude Opus 4.6 and GPT-5.4 at a high level?

On agentic and tool-heavy benchmarks (SWE-Bench Pro, BrowseComp, HLE with tools), K2.6 is competitive with or ahead of both at launch, per Moonshot's self-reported numbers. On pure single-turn reasoning tasks (AIME, GPQA Diamond without tools), GPT-5.4 and Gemini 3.1 Pro currently hold the lead. Claude Opus 4.7 released the same week as K2.6 and sits above Opus 4.6; direct K2.6 vs. Opus 4.7 comparisons are not in K2.6's launch benchmarks. The practical framing: K2.6 is strong for multi-step agent tasks and cost-sensitive workloads; for one-shot complex reasoning, the current proprietary models are still ahead. Independent benchmarks will clarify the picture over the next few weeks.

Bottom Line

K2.6 is a capable open-weight model for teams doing long-horizon agent work who need either cost control, data sovereignty, or both. Its technical differentiation — 300-agent swarms, 12-hour autonomous runs, native multimodal — is real but specialized. It won't replace simpler coding assistants for day-to-day development work, and it won't run cheaply on consumer hardware. If you're running or evaluating agentic coding pipelines at scale, it's worth testing. If you're not, it's a model to track without urgency.

Related Reading