There's a version of this debate that frames vibe coding as sloppy and Superpowers as serious engineering. That version is wrong, and it misses the actual decision you need to make. Both approaches work. They work on different problems, with different cost structures, and failing to pick the right one for the right task is more expensive than using either one badly.

This is a workflow comparison for developers who've used both — or are deciding whether to add structure to a setup that's working fine without it.

Two Very Different Philosophies (30-second version)

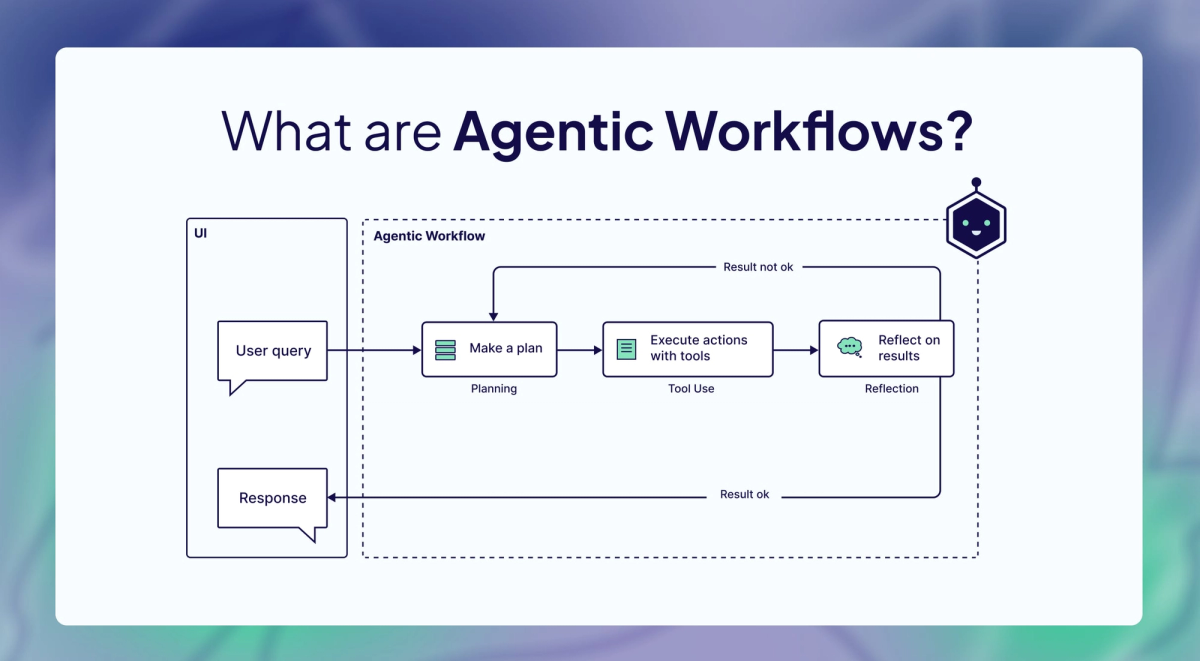

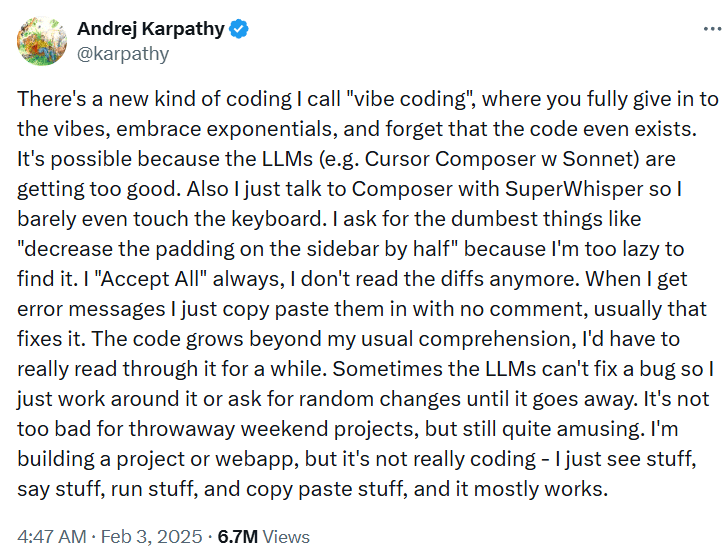

Vibe coding — coined by Andrej Karpathy in February 2025 — treats the AI agent as a fast, disposable generator. You describe intent, the agent writes code, you steer from there. No formal spec, no gated phases, no required test coverage. The model's failures are small, frequent, and cheap to fix.

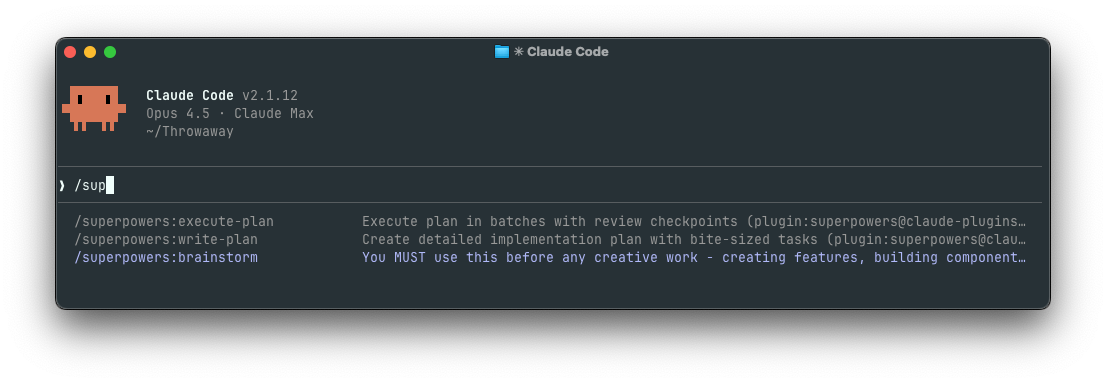

Superpowers v5.0.7 treats the agent as an executor of a pre-approved plan. Before any code is written, the agent works through a mandatory design gate: clarifying questions, architectural tradeoffs, a written design document, and your explicit sign-off. Only then does it write a micro-task plan, and only then do subagents execute one task at a time under TDD discipline. The model's failures are caught at plan review rather than discovered in a tangled diff.

Same model underneath. Radically different behavior on top.

| | Vibe Coding | Superpowers v5.0.7 |
|---|---|---|
| First agent action | Starts writing code | Asks clarifying questions |
| Spec requirement | None | Written design doc, user-approved |
| Test discipline | Optional | TDD enforced (red → green → refactor) |
| Execution model | Single agent, continuous context | Fresh subagent per task, no context bleed |
| Mid-task correction | Prompt redirect | Inline annotation on plan step |
| Session artifacts | Code only | Code + design doc + task plan |
| Planning overhead | Near-zero | 10–20 min per new feature |
| Failure mode | Context collapse on long tasks | Planning overhead on simple tasks |

What Vibe Coding Actually Is

The term has drifted from Karpathy's original framing. Simon Willison captured the precise boundary in a March 2025 post: "If an LLM wrote every line of your code, but you've reviewed, tested, and understood it all, that's not vibe coding in my book — that's using an LLM as a typing assistant." Vibe coding specifically means steering through observation rather than comprehension — you see it run, decide if it's right, redirect if not.

Karpathy himself, in a February 2026 retrospective, described how professional use has evolved: agents are now used "with more oversight and scrutiny," and his preferred term for that disciplined mode is "agentic engineering." The version most developers practice sits between Karpathy's original throwaway-project framing and Superpowers' fully enforced pipeline — prompt-driven iteration with continuous steering but no mandatory workflow.

The appeal: iterate fast, fix fast

The honest case for vibe coding is that most software tasks don't need a design document. You're adding a field to a form. You're fixing a bug with a clear reproduction case. You're prototyping a concept that might get thrown away in 48 hours. For these tasks, writing a design doc and a formal task plan before touching code is pure overhead. The specification would take longer to write than the implementation.

Vibe coding fits this space naturally. The feedback loop is fast enough that errors surface immediately. The tasks are scoped enough that context collapse is not a realistic failure mode. And critically, you're present throughout. Every output gets an immediate human review.

The other honest case: sometimes you genuinely don't know what you want until you see the first version. A working prototype is a better basis for a spec than a spec is for a prototype. Vibe coding handles this exploratory mode well precisely because it doesn't force you to commit to a direction before you've seen what's possible.

Where it breaks — context collapse on complex projects

The failure mode is specific and predictable. It hits when a task requires coordinating changes across multiple files, multiple systems, or multiple sessions. Turn one is clean. Turn two adds complexity. By turn seven, the context window carries a mix of old decisions, partial implementations, and accumulated steering corrections. The agent starts optimizing for "make the current prompt happy" rather than "maintain consistency with everything that came before."

You end up with code that passes your immediate prompt but contradicts a decision made four turns ago. The bug isn't in any single file — it's in the gap between files, in an assumption that was correct when it was made and is now wrong because something else changed. This isn't a model quality problem. It's a structural problem. Vibe coding provides no mechanism for the agent to check its current output against the full scope of what it was originally supposed to build.

What Superpowers Enforces

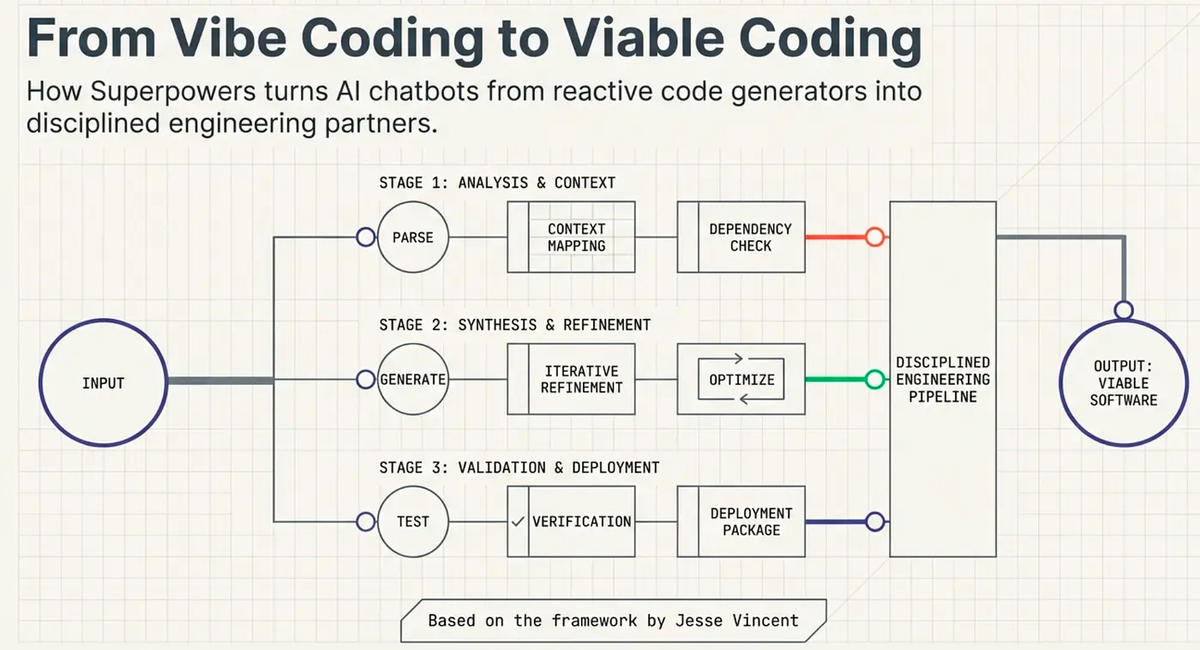

Superpowers (v5.0.7, MIT) is not a smarter model or a better prompt. It's a set of mandatory workflow constraints delivered as SKILL.md files injected at session start. The core skill's language leaves no room for interpretation: "IF A SKILL APPLIES TO YOUR TASK, YOU DO NOT HAVE A CHOICE. YOU MUST USE IT. This is not negotiable. This is not optional. You cannot rationalize your way out of this."

Design gate before a single line of code

The brainstorming skill activates when the agent detects you're starting something new. It executes a six-step checklist as a TodoWrite task list: explore context, ask clarifying questions one at a time, propose two or three implementation approaches with tradeoffs, present the emerging design in sections, write a design doc, then invoke writing-plans. The agent has to complete or explicitly skip each item; a skip is itself a recorded action, not a silent omission.
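As a rough mental model only, the checklist behaves like a list of steps that each have to end up either done or explicitly skipped before the gate clears. The sketch below is hypothetical TypeScript, not how Superpowers is implemented (the real mechanism is SKILL.md instructions and a TodoWrite list); the type names, function names, and the rule that a skip needs a stated reason are assumptions made for illustration.

```typescript
// Hypothetical model of the six-step brainstorming checklist.
// Step names paraphrase the article; every type and function here is illustrative.
type StepStatus = "pending" | "done" | "skipped";

interface ChecklistStep {
  name: string;
  status: StepStatus;
  skipReason?: string; // assumption: a skipped step records why it was skipped
}

const BRAINSTORMING_STEPS: readonly string[] = [
  "Explore context",
  "Ask clarifying questions one at a time",
  "Propose two or three approaches with tradeoffs",
  "Present the emerging design in sections",
  "Write the design doc",
  "Invoke writing-plans",
];

function newChecklist(): ChecklistStep[] {
  return BRAINSTORMING_STEPS.map((name): ChecklistStep => ({ name, status: "pending" }));
}

function stepAt(list: ChecklistStep[], index: number): ChecklistStep {
  const step = list[index];
  if (step === undefined) {
    throw new RangeError(`No checklist step at index ${index}`);
  }
  return step;
}

function complete(list: ChecklistStep[], index: number): void {
  stepAt(list, index).status = "done";
}

// Skipping is an explicit action: it must name a reason.
function skip(list: ChecklistStep[], index: number, reason: string): void {
  if (reason.trim() === "") {
    throw new Error("A skip needs a stated reason");
  }
  const step = stepAt(list, index);
  step.status = "skipped";
  step.skipReason = reason;
}

// The gate clears only when no step is still pending.
function isGateCleared(list: ChecklistStep[]): boolean {
  return list.every((step) => step.status !== "pending");
}
```

The point of the model is that a step left in its default state blocks progress, so forgetting a step and deciding to skip it are no longer the same outcome.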

Here's what a brainstorming session opening looks like in practice:

User: I need to add rate limiting to our API

Agent (brainstorming skill active):

Let me explore the codebase before proposing anything.

[reads middleware, config files, existing auth layer]

Before I propose an approach, a few questions:

1. Are you limiting by IP, by authenticated user, or both?

2. What's the expected normal request volume per user per minute?

3. Do you want hard blocks (429) or soft throttling with queuing?

4. Is there an existing Redis instance, or would this require new infra?

Before Superpowers added the checklist structure (documented in detail in Jesse Vincent's v4.3.0 release notes), the agent would rationalize past brainstorming on tasks that seemed simple. The checklist closes that rationalization path. Every project goes through the process.

Plan → subagents → TDD loop

After design approval, writing-plans produces a micro-task plan with deliberately excessive detail. Every task is 2–5 minutes of work, with exact file paths, complete expected code, and explicit verification steps — written for what Jesse Vincent describes as "an enthusiastic junior engineer with poor taste, no judgment, no project context, and an aversion to testing."

A plan task entry looks like this:

## Task 3: Add rate limiter middleware

File: src/middleware/rateLimiter.ts

Action: Create new file

Expected code:

import rateLimit from 'express-rate-limit'

import RedisStore from 'rate-limit-redis'

import { redisClient } from '../lib/redis'

export const apiRateLimiter = rateLimit({

windowMs: 15 * 60 * 1000,

max: 100,

standardHeaders: true,

legacyHeaders: false,

store: new RedisStore({ client: redisClient }),

})

Verification: Run npm test src/middleware/rateLimiter.test.ts — all tests pass.

TDD requirement: Write the test first. Test must fail before implementation starts.

On Claude Code and Codex, each task is executed by a fresh subagent with no accumulated context from previous tasks. The subagent writes the test (red), writes the implementation (green), refactors if needed, and passes two review stages: spec compliance, then code quality. Since a recent update, writing-plans no longer offers a choice on subagent-capable platforms: subagent-driven execution is required.
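The per-task stage order described above can be sketched as a small pipeline. This is a hypothetical TypeScript illustration, not a Superpowers API: the stage names, the `StageRunner` signature, and the stop-on-first-failure behavior are assumptions. A runner stands in for a subagent and returns whether the stage's gate passed (for `red`, that means the new test failed as expected).

```typescript
// Hypothetical sketch of the per-task stage order; none of these names come from Superpowers.
const STAGES = ["red", "green", "refactor", "spec-review", "quality-review"] as const;
type Stage = (typeof STAGES)[number];

interface PlanTask {
  id: number;
  title: string;
  file: string;
  verification: string;
}

// Stands in for a subagent. Returns true when the stage's gate passes.
type StageRunner = (stage: Stage, task: PlanTask) => boolean;

interface TaskResult {
  taskId: number;
  completed: Stage[];
  failedAt: Stage | null;
}

// Run the stages in order and stop at the first gate that fails.
function runTask(task: PlanTask, run: StageRunner): TaskResult {
  const completed: Stage[] = [];
  for (const stage of STAGES) {
    if (!run(stage, task)) {
      return { taskId: task.id, completed, failedAt: stage };
    }
    completed.push(stage);
  }
  return { taskId: task.id, completed, failedAt: null };
}

// A new runner is created per task, mirroring "fresh subagent, no context bleed".
function runPlan(tasks: PlanTask[], makeRunner: () => StageRunner): TaskResult[] {
  const results: TaskResult[] = [];
  for (const task of tasks) {
    const result = runTask(task, makeRunner());
    results.push(result);
    if (result.failedAt !== null) break; // assumption: a failed task halts the plan
  }
  return results;
}
```

Creating the runner inside the loop is the design choice that matters here: nothing a previous task learned or assumed can leak into the next one except through the written plan.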

Cost in discipline, gain in consistency

The cost is real: 10–20 minutes of brainstorming and planning before any code starts. The consistency gain compounds on multi-session work. Because the design document and task plan are written artifacts, picking up a project after a week away doesn't require reconstructing what was decided and why. The agent reads the plan and resumes.

Head-to-Head: Same Task, Two Approaches

How each handles a multi-file refactor

Task: Migrate authentication from stateless JWT tokens to session-based auth with a Redis store. Touches session middleware, route handlers, token validation, test utilities, and deployment config — roughly 12 files.

Vibe coding path: You describe the migration. The agent starts with middleware. Turn one is clean. Turn two updates route handlers. By turn four, token validation is inconsistent with the middleware changes from turn one — the agent optimized for turn four without re-reading turn one's output. You correct it. Turn six introduces an issue in test utilities because the agent's model of "what session auth looks like" has drifted from what it actually wrote. Total: fast turns, multiple correction cycles, and a final integration pass before merge.

Superpowers path: Brainstorming surfaces three questions you hadn't considered — where session secrets are stored across environments, how existing refresh token logic interacts with Redis TTL, and whether deployment config must change before or after the code changes to avoid a broken intermediate state. You answer them. The design doc records the decisions. The plan breaks 12 files into 14 atomic tasks. Execution is slower at first, but there are no consistency correction cycles. The final diff is clean.

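Neither path is obviously faster in absolute time. Where the crossover falls depends on how many implicit decisions the task contains: the more decisions that have to stay consistent across files, the sooner the planning overhead pays for itself in avoided correction cycles.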

How each handles debugging ambiguous specs

Task: "The checkout flow is broken for some users." No reproduction case. No specific symptom. Intermittent.

Vibe coding path: Paste the symptom, ask the agent to investigate. It reads relevant files, forms a hypothesis, makes a change. For genuinely ambiguous bugs, this is often the right approach — the fastest path to a hypothesis is letting the agent read the code and form one.

Superpowers path: Brainstorming activates and asks clarifying questions before anything else. What percentage of users? What do they have in common? What does the error log show? With good observability data, this produces a more accurate first hypothesis. Without it, the questioning phase surfaces the real problem: insufficient information to debug, not a code issue.

For ambiguous debugging, Superpowers' design gate is valuable when you have the information to answer its questions — and a diagnostic forcing function when you don't.

Where Vibe Coding Wins

On speed for well-scoped tasks, vibe coding isn't close. Adding an API endpoint that follows an existing pattern, writing a utility function with clear inputs and outputs, fixing a bug with a clean reproduction case — the Superpowers workflow adds time without adding quality on these. The design gate asks questions you already know the answers to.

On exploratory work — prototyping, spike implementations, proof-of-concept code that might get thrown away — vibe coding's absence of upfront commitment is an asset. You can't write a good design document for a system you haven't built yet.

On solo projects where you carry the full context in your head, the external coordination mechanisms Superpowers provides are partially redundant. The plan documents decisions you already made.

Where Superpowers Wins

On tasks crossing more than three or four files with interdependent changes, the vibe coding correction cycle compounds. Each correction requires the agent to re-read its own previous output — which it does with limited fidelity — and reconcile new changes against old ones.

On team contexts, Superpowers produces coordination artifacts that persist beyond the session. A teammate picking up a half-finished feature reads the design doc to understand what was decided and why — the same value a good PR description provides before reading the diff.

On production-critical or security-sensitive code, Superpowers' TDD enforcement means tests exist before code ships. Vibe coding doesn't prohibit TDD; it just doesn't enforce it. The difference between "you can write tests" and "you must write tests first" is the difference between what happens in theory and what happens at 5pm when you want to ship.

Decision Framework: Which to Use When

Project complexity threshold

The threshold isn't file count — it's implicit decision count. More than three or four decisions that need to stay consistent across an implementation: Superpowers. One or two decisions: vibe coding.

| Task type | Recommended | Reason |
|---|---|---|
| Single-file bug fix, clear repro | Vibe coding | One decision, no coordination needed |
| New endpoint following existing patterns | Vibe coding | Pattern already defined, overhead not justified |
| Throwaway prototype / spike | Vibe coding | Spec commitment costs more than exploration gains |
| Ambiguous debug with good observability | Superpowers | Brainstorming surfaces better first hypothesis |
| Multi-file feature with cross-cutting concerns | Superpowers | Implicit decisions compound across files |
| Production migration (auth, DB schema, infra) | Superpowers | Cost of wrong answer justifies planning overhead |
| Long-lived project, multiple sessions | Superpowers | Written artifacts survive context window limits |
| Team handoff, multiple devs on same codebase | Superpowers | Design docs become coordination artifacts |
| Security-sensitive code | Superpowers | TDD before shipping, not after |

Heuristic: if you'd write a spec before handing this task to a junior engineer, write a design doc before handing it to an AI agent.
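For readers who prefer the table as code, here is one way the framework might be written down. It is a simplification under stated assumptions: the `TaskProfile` fields, the ordering of the checks, and the exact threshold of 3 are hypothetical choices, since the text only says "more than three or four" decisions.

```typescript
// Hypothetical encoding of the decision table; field names and threshold are assumptions.
type Approach = "vibe coding" | "Superpowers";

interface TaskProfile {
  implicitDecisions: number; // decisions that must stay consistent across the implementation
  productionCritical?: boolean;
  securitySensitive?: boolean;
  multiSession?: boolean;
  teamHandoff?: boolean;
  throwaway?: boolean;
}

// The article says "more than three or four"; 3 is picked here for illustration.
const DECISION_THRESHOLD = 3;

function recommend(task: TaskProfile): Approach {
  // Risk and coordination flags win regardless of decision count.
  if (task.productionCritical || task.securitySensitive || task.multiSession || task.teamHandoff) {
    return "Superpowers";
  }
  // Throwaway work is not worth a spec commitment.
  if (task.throwaway) {
    return "vibe coding";
  }
  return task.implicitDecisions > DECISION_THRESHOLD ? "Superpowers" : "vibe coding";
}

console.log(recommend({ implicitDecisions: 1 })); // single-file bug fix
console.log(recommend({ implicitDecisions: 6, multiSession: true })); // long-lived feature work
```

A function like this is no substitute for judgment. Its main use is forcing you to count the implicit decisions before you start prompting.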

Solo dev vs team context

Solo developers can use either approach effectively, but the tradeoff shifts with project duration. On a side project you'll touch weekly for six months, Superpowers' written artifacts become increasingly valuable as memory of early decisions fades. On a short-deadline prototype, vibe coding's speed advantage dominates.

In team contexts the calculus is simpler: the design doc and task plan give teammates a structured record of what was decided and why, whereas a vibe coding session leaves behind only the code.

Can They Coexist? (Hybrid approach)

Yes — and this is how most experienced developers end up using them. Superpowers is not binary. Skills trigger based on context, and user instructions in CLAUDE.md take precedence over skills. A simple bug fix doesn't trigger the full brainstorming gate. A new feature in a complex codebase does.

The hybrid that works in practice: Superpowers as default, context detection handles simple tasks, CLAUDE.md overrides handle explicit exceptions:

# CLAUDE.md

## Workflow overrides

- For tasks tagged [hotfix]: skip brainstorming, go directly to implementation

- For tasks tagged [spike]: skip all Superpowers skills, freeform only

- For tasks tagged [feature]: run full Superpowers workflow including design doc

The deeper point: the choice between structured agents and freeform prompts is not a permanent commitment. It's a per-task decision. Getting good at making that judgment, and building the tooling that makes it easy to switch, is more valuable than committing to either approach in isolation.
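As one hedged illustration of tooling that makes switching easy, the tag convention from the CLAUDE.md overrides could also be read by a small wrapper script, for example to label tickets or choose a prompt template. In the setup described above the agent interprets the tags itself, so this helper is an assumption: the `Workflow` labels and the `workflowFor` function are hypothetical names.

```typescript
// Hypothetical helper: maps a [tag] in a task title to a workflow label.
// The tags mirror the CLAUDE.md example; the labels are invented for illustration.
type Workflow = "skip-brainstorming" | "freeform" | "full" | "default";

const TAG_WORKFLOWS = new Map<string, Workflow>([
  ["hotfix", "skip-brainstorming"],
  ["spike", "freeform"],
  ["feature", "full"],
]);

function workflowFor(taskTitle: string): Workflow {
  // Take the first bracketed alphabetic tag, case-insensitively.
  const tag = /\[([a-z]+)\]/i.exec(taskTitle)?.[1]?.toLowerCase();
  if (tag === undefined) {
    return "default"; // untagged: let skill context detection decide
  }
  return TAG_WORKFLOWS.get(tag) ?? "default";
}

console.log(workflowFor("[hotfix] null check in checkout handler")); // "skip-brainstorming"
console.log(workflowFor("Add phone field to signup form")); // "default"
```

Unknown tags fall through to the default rather than raising an error, which keeps Superpowers' own context detection as the fallback.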

FAQ

Does Superpowers slow you down on simple tasks?

Yes, if brainstorming activates on a task where you already know exactly what to build. The checking phase takes time even when it finds nothing to ask. Jesse Vincent acknowledges this directly in his writing on the framework's design: skills should trigger when they apply, and there are task types where none apply. Use CLAUDE.md overrides or direct instruction to skip the workflow where the overhead exceeds the value.

Can I use Superpowers skills selectively?

Yes. Invoke individual skills without the full workflow — use writing-plans on a task you've already brainstormed elsewhere, or invoke using-git-worktrees without the design gate. User instructions in CLAUDE.md or direct prompts override skill behavior. The skills repo (github.com/obra/superpowers-skills, MIT) is community-editable — fork it, modify skills for your project's constraints, contribute changes back. Prime Radiant maintains the core skill stack.

Is vibe coding dead?

No. Karpathy's 2026 retrospective framed the evolution accurately: the throwaway-project mode has given way to "agentic engineering" — agents with oversight and scrutiny — but both modes remain valid for different contexts. Most AI-assisted coding sessions are simple, scoped, and short. Vibe coding handles these correctly and efficiently. What's changing is the growth of structured workflows for the subset of tasks that genuinely need them, not the disappearance of the unstructured approach.
