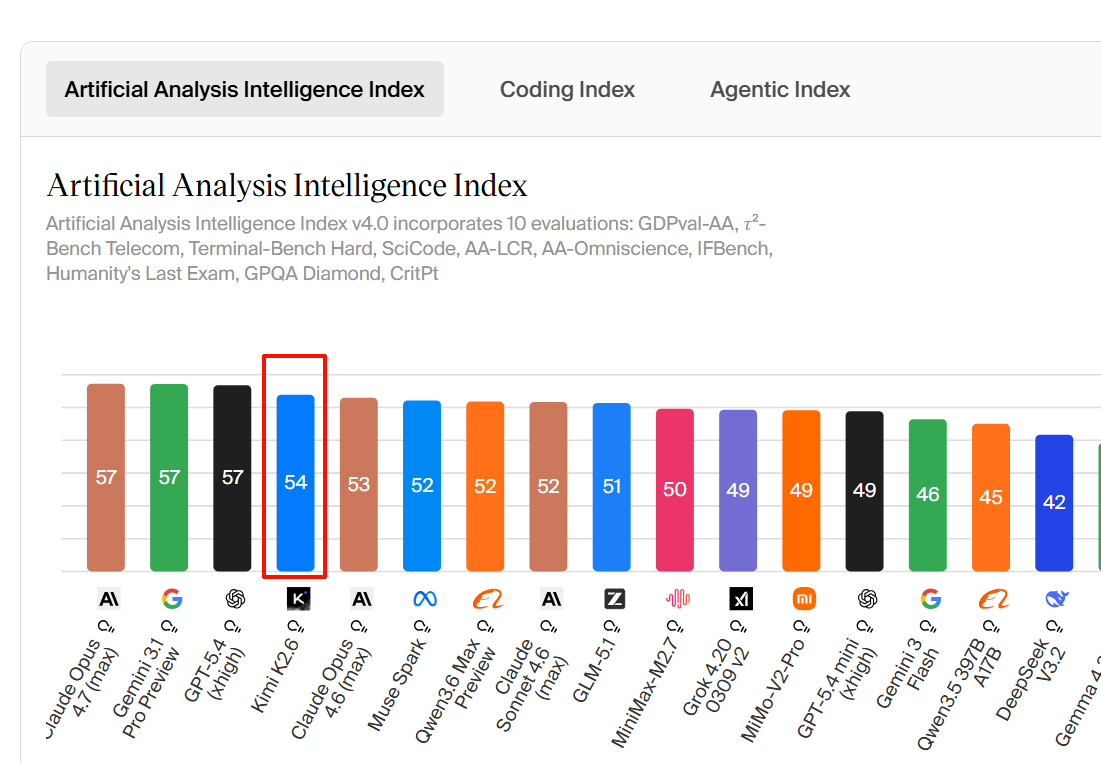

Three models are contending for the same slot in agent-heavy coding stacks right now. K2.6 dropped April 20, 2026, four days after Opus 4.7 and six weeks after GPT-5.4. The timing is not coincidental — Moonshot is explicitly targeting teams building agentic coding infrastructure.

This comparison covers K2.6, Opus 4.6, and GPT-5.4. Why Opus 4.6 and not 4.7? Because K2.6's own benchmark table compares against Opus 4.6 (max effort), which is the reference point Moonshot chose. Where Opus 4.7 numbers are available and relevant, they're noted, but this is a K2.6 launch comparison, not a full four-way.

One upfront caveat that matters throughout: all benchmark numbers in this article are sourced from vendor announcements. No numbers in this article come from independent third-party replication at launch. That is the norm for model releases, not an excuse — it's context for how much weight to put on any individual score.

Positioning in One Line Each

Kimi K2.6 — Open-weight, native multimodal, 1T MoE, cheapest per token of the three. Purpose-built for long-horizon agentic coding and parallel swarm tasks. Self-hostable.

Claude Opus 4.6 — Anthropic's previous flagship (now superseded by 4.7). Best-in-class SWE-Bench Verified score at launch; leads on pure reasoning depth and HLE. Premium priced.

GPT-5.4 — OpenAI's generalist flagship as of March 2026. Strongest on pure math reasoning, Terminal-Bench with certain harnesses, and native computer use. Competitive pricing.

Benchmark Comparison Table

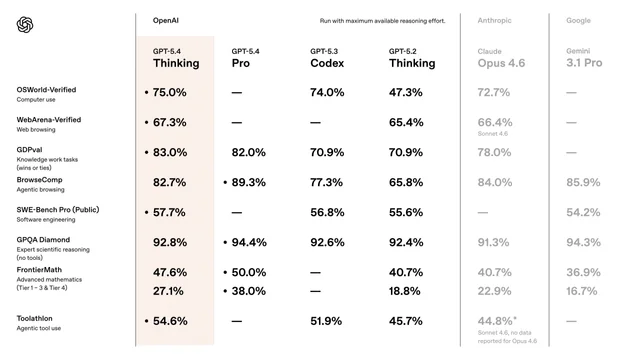

All numbers are from the official model cards, announcements, or vendor-reported evaluations, with test conditions noted where known. Scores reported by Moonshot for K2.6 use thinking mode enabled, temperature 1.0, 262,144-token context. Claude Opus 4.6 numbers are from Anthropic's official announcement. GPT-5.4 numbers are from OpenAI and Moonshot's comparative tables (xhigh reasoning effort).

| Benchmark | K2.6 | Claude Opus 4.6 | GPT-5.4 | Notes |

|---|---|---|---|---|

| SWE-Bench Pro | 58.60% | 53.40% | 57.70% | Moonshot in-house harness; SEAL mini-swe-agent puts GPT-5.4 at 59.1%, Opus 4.6 at 51.9% |

| SWE-Bench Verified | 80.20% | 80.80% | ~80% | Tight cluster; Opus 4.7 now leads at 87.6% |

| Terminal-Bench 2.0 | 66.70% | 65.40% | 65.40% | See note below |

| HLE with tools | 54.00% | 53.00% | 52.10% | All three within 2 points |

| LiveCodeBench v6 | 89.60% | 88.80% | — | v6 as of April 2026 |

Source for all K2.6 numbers: Moonshot AI official model card, April 20, 2026. Claude Opus 4.6 numbers from Anthropic's official announcement. GPT-5.4 from OpenAI and Moonshot's comparative table.

Terminal-Bench 2.0 harness discrepancy — important. Moonshot's table reports GPT-5.4 at 65.4% on Terminal-Bench 2.0 using the Terminus-2 harness. Other sources, including analysis from third-party leaderboards and our own Opus 4.7 review, cite GPT-5.4 at 75.1% on Terminal-Bench 2.0 with a different harness configuration. The gap is likely harness-driven — Moonshot uses Terminus-2, while other evaluations may use Codex CLI or custom agent frameworks. K2.6's 66.7% is therefore a lead of ~1 point over Moonshot's Terminus-2 baseline, but GPT-5.4 with optimized terminal harnesses may substantially outperform this comparison. Do not use this table to draw conclusions about Terminal-Bench without running your own harness.

Where GPT-5.4 wins that this table doesn't show: On AIME 2026 (pure competition math), GPT-5.4 reaches 99.2% versus K2.6's 96.4%. On GPQA-Diamond, GPT-5.4 scores 92.8% versus K2.6's 90.5%. If your use case involves high-stakes single-turn reasoning rather than multi-step agentic execution, GPT-5.4's published numbers are stronger.

Long-Horizon Execution and Agent Orchestration

This is the axis where the models are most differentiated in architecture, not just in benchmark scores.

Tool-call ceilings

| Capability | K2.6 | Claude Opus 4.6 | GPT-5.4 |

|---|---|---|---|

| Max tool-call steps (documented) | 4,000 | Not published | Not published |

| Max context window | 262K | 1M | 1.05M |

| Native video input | Yes | No | Yes |

Claude Opus 4.6 and GPT-5.4 both support multi-step tool use in agent workflows, but neither Anthropic nor OpenAI publishes an equivalent "step ceiling" number. K2.6's 4,000 coordinated steps is a specific architectural claim from the official model card; whether it holds at production scale across diverse tasks is not yet independently verified.

Context window is where Opus 4.6 and GPT-5.4 have a clear structural edge: 1M and 1.05M tokens respectively versus K2.6's 262K. For tasks that require loading very large codebases in a single context, this matters. For most agentic coding workflows that chunk context across steps, it matters less.

Sub-agent parallelism

K2.6's Agent Swarm supports 300 parallel sub-agents per run by Moonshot's claim. Neither Anthropic nor OpenAI ships an equivalent primitive out of the box — parallel execution with Claude or GPT requires orchestration infrastructure (LangGraph, CrewAI, or custom) on the application side. K2.6's swarm is a model-native feature, which is architecturally different from building parallelism in your own harness.

Whether that distinction matters depends on your stack. Teams with existing orchestration infrastructure running Claude or GPT gain parallelism at the framework layer. Teams without that infrastructure face less setup overhead with K2.6.

Stability over multi-hour runs

Moonshot claims K2.6 maintains coherent behavior across 12+ hour autonomous runs. This is not something benchmarks measure well — the benchmark tasks that measure long-horizon behavior (Terminal-Bench, SWE-Bench Pro) run tasks to completion, not sustained multi-session coherence. Anthropic has published patterns for long-running agents using Claude (initializer + executor architecture, commit-by-commit progress) but has not published a specific runtime claim.

At launch, K2.6's 12-hour claim is Moonshot's assertion without independent verification. Community reports of multi-day autonomous runs exist in Hacker News and Reddit discussions, but these are anecdotes, not audited benchmarks.

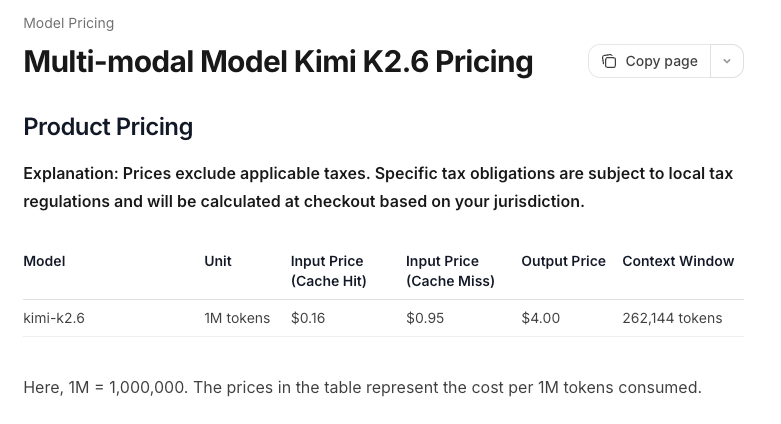

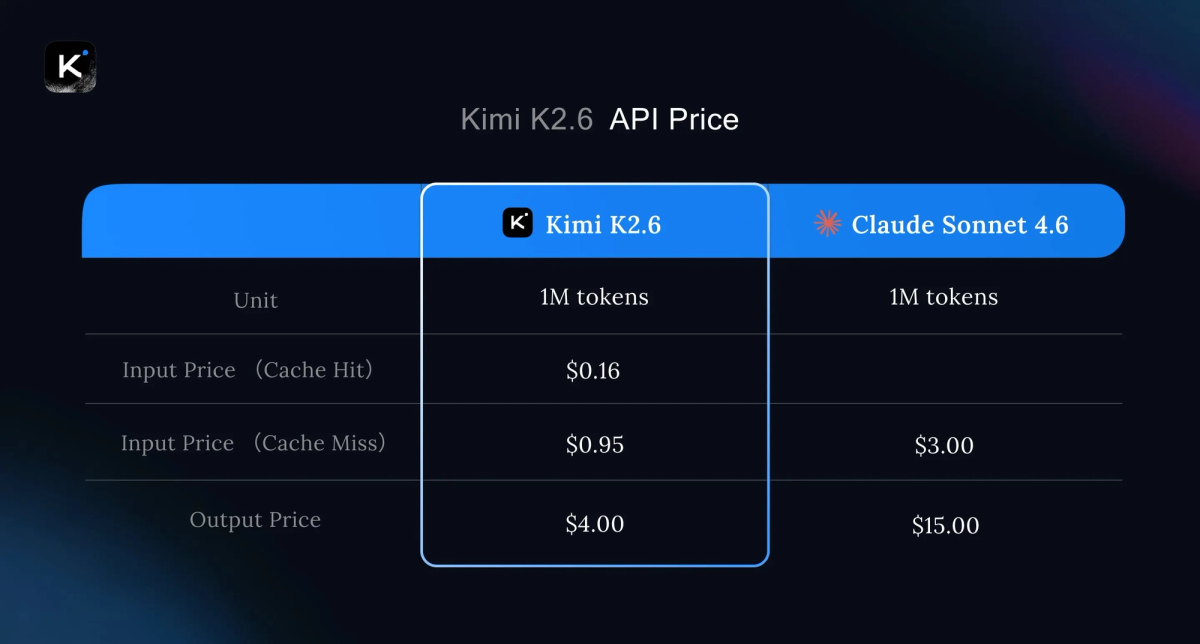

Cost per 1M Tokens — Honest Math

All prices are per million tokens, verified April 2026.

| Model | Input | Output | Source |

|---|---|---|---|

| K2.6 | $0.60 | $2.80 | OpenRouter, April 2026 |

| K2.6 (Moonshot platform) | $0.95 | $4.00 | platform.moonshot.ai(verify before budgeting) |

| Claude Opus 4.6 | $5.00 | $25.00 | Anthropic official |

| GPT-5.4 | $2.50 | $15.00 | OpenAI official |

Note on K2.6 pricing: OpenRouter lists K2.6 at $0.60/$2.80. Kilo.ai's aggregator shows $0.95/$4.00, attributed to OpenRouter but likely reflecting a different tier or caching configuration. Moonshot's platform.moonshot.ai does not publish a per-token rate card directly on the pricing page — verify current rates before production budgeting.

At 10M output tokens per month, the math looks like this:

| Model | Monthly output cost (10M tokens) |

|---|---|

| K2.6 (OpenRouter) | $28 |

| GPT-5.4 | $150 |

| Claude Opus 4.6 | $250 |

The cost gap is real and large. At agent-scale workloads burning millions of output tokens per day, K2.6's pricing changes what product designs are financially viable. Agent workflows that would cost $2,500/day on Opus 4.6 cost roughly $280/day on K2.6. That is not a marginal improvement — it unlocks different retry strategies, longer runs, and more parallel workers.

The counterpoint: if K2.6 completes tasks with lower reliability, requiring more retries or human intervention, effective cost per successful task may not differ as much as per-token pricing suggests. This is testable on your workload; the per-token comparison alone isn't sufficient.

Batch discounts apply to Anthropic and OpenAI: 50% off for async batch processing. K2.6 via OpenRouter does not list an equivalent batch tier publicly.

Where Each Model Actually Wins

Pick K2.6 when:

- You're running high-volume agent pipelines where token cost is a binding constraint — the 5–8× input price advantage over Opus 4.6 is substantial enough to change product economics

- You need self-hosted deployment for data sovereignty or compliance — K2.6's weights are on Hugging Face and deployable via vLLM, SGLang, or KTransformers

- Your tasks involve parallel workstreams that map naturally to swarm decomposition — research, multi-file refactoring, document generation — and you don't want to build orchestration infrastructure separately

- You're already using a Moonshot API-compatible endpoint and want the latest model with minimal migration cost

Pick Opus 4.6 when:

- Your workload involves reasoning depth, nuanced multi-file refactoring, or inferring developer intent from ambiguous requirements — this is where Anthropic's RLHF tuning shows up in practice, not in benchmarks

- Vendor provenance matters: enterprise security reviews for a US-origin model are faster and less likely to produce a "no"

- You need >262K context window in a single pass — Opus 4.6's 1M context at standard pricing is architecturally different from K2.6's 262K

- Your team uses Claude Code and wants first-party tooling, Routines, and the full Anthropic agent ecosystem

Note: Opus 4.6 is superseded by Opus 4.7 as Anthropic's current flagship. If you're choosing within the Anthropic family, Opus 4.7 scores 87.6% SWE-Bench Verified and 64.3% SWE-Bench Pro at the same $5/$25 price.

Pick GPT-5.4 when:

- Pure reasoning, math, or science problems are a significant portion of your workload — AIME 2026 at 99.2% and GPQA-Diamond at 92.8% are real leads that K2.6 doesn't match

- You need native computer use (desktop automation, browser control) as a first-party feature — GPT-5.4's OSWorld performance and native computer use integration are ahead of K2.6's current state

- You're in an OpenAI-native stack (Codex, Assistants API, Responses API) and migration cost matters

- You want the best-validated Terminal-Bench performance — when evaluated with optimized harnesses rather than Terminus-2, GPT-5.4's published scores exceed K2.6

What the Benchmarks Don't Tell You

Prompt sensitivity. All three models are highly sensitive to system prompt design, tool definitions, and harness configuration. A team with well-tuned Claude prompts may see better results on Opus 4.6 than a naively configured K2.6 session, regardless of benchmark rankings. Harness engineering matters as much as model selection for production agent workflows — this point applies equally to all three.

Benchmark saturation on SWE-Bench Verified. Six models now sit within 0.8 points of each other on SWE-Bench Verified (80.0–80.8%). The top of this benchmark has become a statistical noise zone. SWE-Bench Pro is more discriminating and reflects a more realistic task set. The numbers that matter for this comparison are SWE-Bench Pro and Terminal-Bench, not Verified.

Real-world vs. benchmark gap. SWE-Bench Pro tasks average 107 lines of change across 4.1 files. Most production coding tasks are either simpler (single-file bug fixes) or more complex (large-scale migrations, novel architecture decisions). Neither end maps cleanly to benchmark performance.

Contamination is an open question for all three. OpenAI has acknowledged training contamination on SWE-Bench Verified and stopped reporting it as primary evidence. Moonshot's model card states memorization screens were applied. Anthropic's model card notes similar controls. None of these claims can be independently audited at launch.

Vendor jurisdiction risk. K2.6 is from a Chinese company operating in a regulatory environment with increasing scrutiny in both directions. The Modified MIT License is permissive for most commercial use, but the 100M MAU / $20M monthly revenue attribution threshold and the Chinese provenance factor create real enterprise procurement friction that GPT-5.4 and Opus 4.6 do not.

FAQ

Are these numbers reproducible?

The benchmark methodology is documented for all three vendors but has not been independently replicated at launch. Moonshot states all coding scores are averaged over 10 independent runs; Anthropic specifies their Terminal-Bench evaluation used 1× guaranteed / 3× ceiling resource allocation with 5–15 samples per task. The harness differences (Moonshot uses in-house SWE-agent adaptation; Anthropic and OpenAI use their own frameworks) make direct number comparison imprecise. Treat the table as directional signal, not absolute ground truth.

Which model is safest for production agents?

"Safe" has two meanings here. For code quality and predictable behavior, all three are production-capable — the right answer depends on your specific workflow and how much you've tuned the harness. For vendor stability, both Anthropic and OpenAI have demonstrated sustained platform reliability; Moonshot's API platform is newer and has less production history at scale. For enterprise compliance and procurement risk, US-origin models (Anthropic, OpenAI) have faster security review cycles at large organizations.

Can I run K2.6 self-hosted while Claude and GPT are API-only?

Yes. K2.6 weights are on Hugging Face and run on vLLM, SGLang, or KTransformers. Minimum viable hardware is 4× H100 for the INT4 variant at reduced context. Claude and GPT-5.4 are API-only — there is no self-hosted path. If data sovereignty is a requirement, K2.6 is the only option among these three.

How stale are these numbers likely to get?

Quickly. Anthropic released Opus 4.7 on April 16, 2026, four days before K2.6 launched. Opus 4.7's SWE-Bench Verified is 87.6%, already well ahead of K2.6's 80.2%. OpenAI updates the GPT-5.4 family and the SEAL leaderboard rolls continuously. This table reflects the state as of April 20, 2026, and should be treated as a snapshot. For current leaderboard standings, check swebench.com and the official model cards.

Decision Framework

For most production teams, the decision is actually simpler than a full benchmark comparison suggests:

If cost is a hard constraint: K2.6 is the answer. The 5–8× price gap over Opus 4.6 is too large to justify Opus for high-volume agent work unless you have specific reasons (context window, reasoning depth, ecosystem fit) to pay the premium.

If your organization has enterprise procurement requirements: Evaluate US-origin models first (Opus 4.6 or GPT-5.4), then evaluate whether K2.6 self-hosted clears your security and compliance bar. Self-hosting mitigates the data residency concern but doesn't eliminate Chinese-origin vendor scrutiny.

If you need >262K context in a single pass: Opus 4.6 or GPT-5.4. This is a hard architectural limit for K2.6, not a benchmark issue.

If you're benchmarking for your workload: Run all three on representative tasks from your actual codebase before committing. Benchmark scores set the prior; your own workload data should dominate the decision.

Related Reading