Both AutoResearch and AI coding agents like Claude Code or Verdent involve an LLM writing and running code without constant human intervention. That's where the similarity ends. The design choices that make AutoResearch work for ML experimentation are precisely what would make it inadequate for shipping production code — and vice versa. Understanding the boundary isn't just conceptually tidy; it changes what you build when you're designing autonomous agent workflows.

Two Different Problems

AutoResearch: Optimizing Within a Fixed Eval

AutoResearch, as documented in Karpathy's GitHub repo, is an optimization loop. The problem is fully specified upfront: a single training file (train.py), a single metric (val_bpb, validation bits per byte), and a fixed 5-minute time budget per experiment. The agent's job is to find code changes that improve the metric. What "better" means is unambiguous, measurable in under 5 minutes, and doesn't require human judgment.

This constraint is load-bearing. Because success is reducible to a single number, the agent can make autonomous keep-or-revert decisions without asking anyone. It doesn't need to understand your team's coding standards, your architecture's constraints, or whether the change is maintainable at scale. It needs to know if val_bpb went down.

AI Coding Agents: Shipping Verifiable Changes to Real Repos

AI coding agents — Claude Code, Verdent, Cursor — are built for a structurally different problem. A software project doesn't have a single objective function. "Better" means: does this compile? do the tests pass? does it match the spec? does it conform to the style guide? does the reviewer find it readable? does it introduce a security vulnerability? Some of these checks are automatable; many require context the agent doesn't have in isolation. Karpathy frames this as a progression from "agentic engineering" — where humans orchestrate agents — to fully autonomous research, as covered in VentureBeat's AutoResearch reporting; the two roles require fundamentally different evaluation architectures.

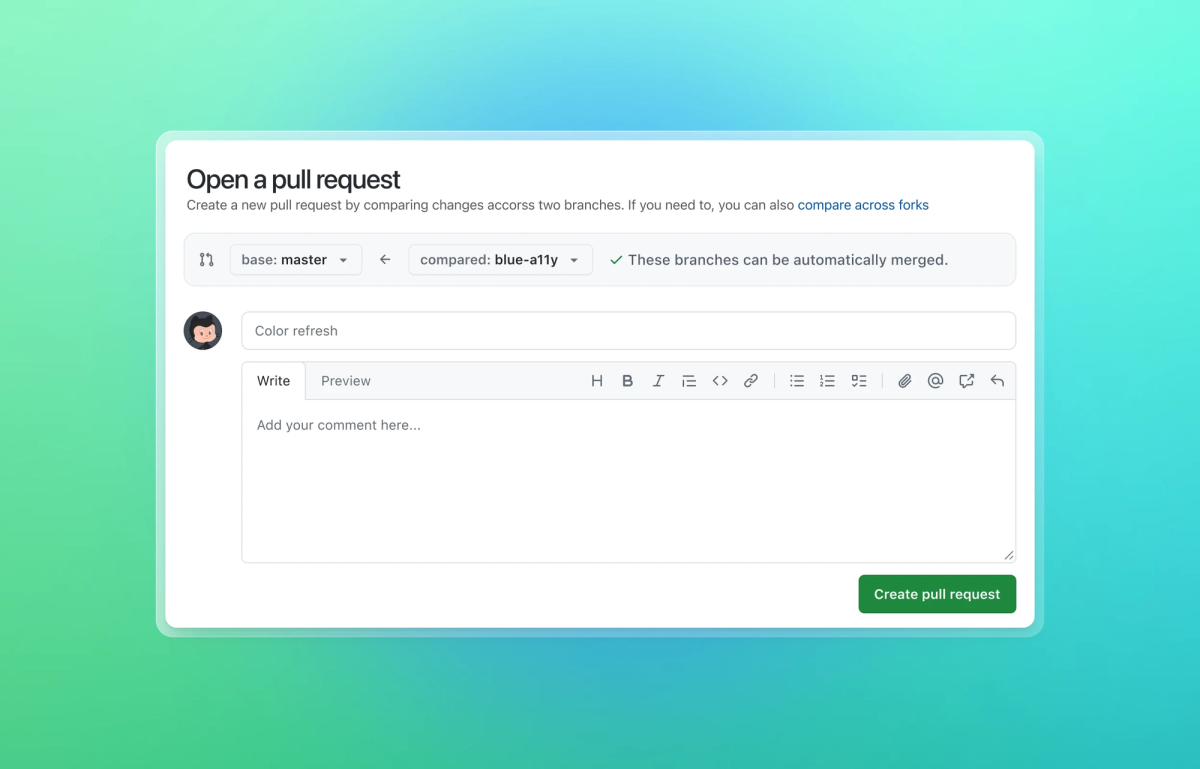

The agent has to read across multiple files, understand how the codebase is structured, make coordinated changes, run existing tests, and produce output that a human engineer can review and merge. The deliverable isn't an improved metric — it's a mergeable diff.

Where They Overlap

Despite the different problems, the underlying patterns overlap in meaningful ways.

Plan-First Execution

AutoResearch's loop, as specified in program.md, follows a think-then-act structure. The agent is explicitly instructed to form a hypothesis about what to try before modifying the file, and to log its reasoning alongside the result. This is the same rationale behind Plan Mode in Claude Code — having the agent articulate what it's about to do before it does it reduces errors and makes outputs reviewable.

The difference is enforcement. In AutoResearch, "plan then execute" is a written instruction the agent follows by convention. In Claude Code's Plan Mode, it's enforced at the framework level: the agent literally cannot edit files until the plan is approved. The autoresearch instruction is softer; the coding agent enforcement is structural.

Git-Based Isolation

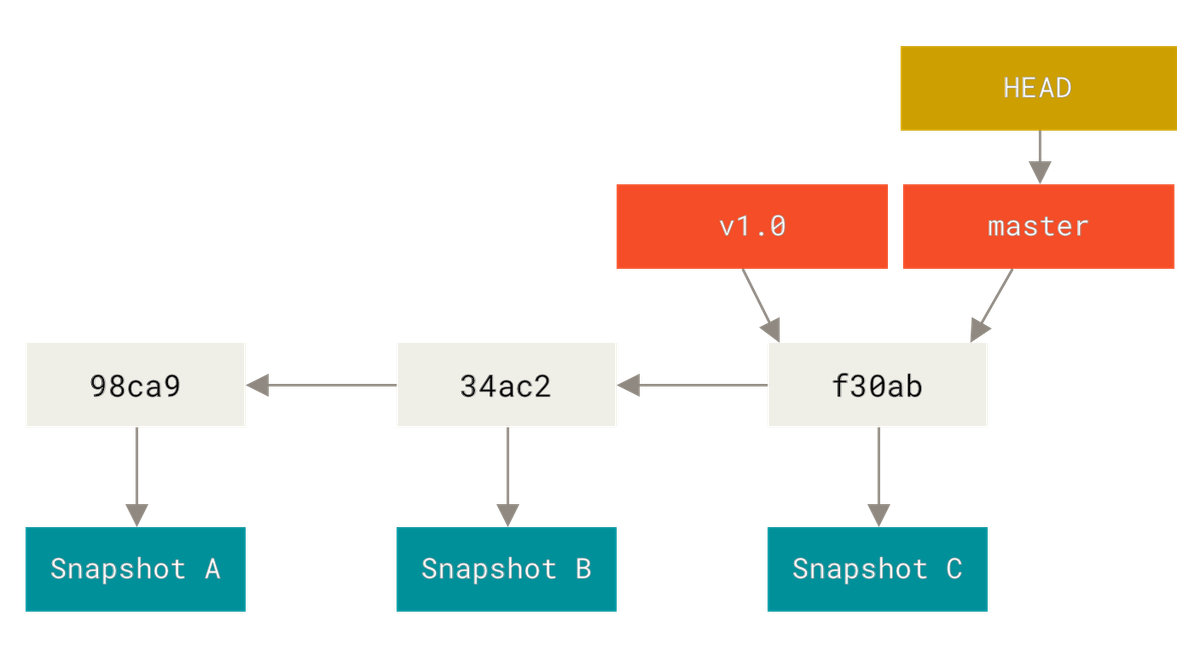

Both use git as the primary mechanism for managing state and rollback. AutoResearch runs on a dedicated branch and uses git reset to revert failed experiments instantly. Coding agents running in worktree-isolated environments (Verdent's architecture, or Claude Code with git worktrees) similarly use git branches to isolate work from the main codebase, enabling rollback without affecting other concurrent work.

In both cases, git isn't just version control — it's the rollback primitive that makes autonomous operation safe. Without it, a failed experiment or a broken change would require manual recovery.

Verification Loops

AutoResearch verifies each change by running the training job and measuring the metric. Claude Code verifies changes by running the existing test suite, linting, and type-checking. The structure is identical: make a change, run the evaluator, interpret the result, decide whether to keep or revert. What differs is the nature of the evaluator: AutoResearch's is a single scalar, and a coding agent's is a suite of heterogeneous checks with no single rollup score.

Where They Diverge

Search Space: Constrained File vs Arbitrary Repo

AutoResearch constrains the agent to a single file. The modifiable file (train.py) is roughly 630 lines — small enough that the agent can read the whole thing on every iteration and reason about any part of it. The blast radius of any single change is bounded. A catastrophic code rewrite can be reverted in one git reset call.

Coding agents work across arbitrary file structures, sometimes in codebases with hundreds of thousands of lines. No single context window can hold the entire repo. The agent has to navigate, index, and reason about what to read rather than reading everything. This is why tools like Claude Code maintain CLAUDE.md memory files and why Verdent's architecture builds codebase indexes — they're compensating for the fact that the repo doesn't fit in context.

This difference in scope changes what kinds of errors are recoverable. In AutoResearch, any change that breaks the metric is immediately reverted. In a production codebase, a change that breaks a subsystem the agent didn't examine — because it didn't know to look — may not surface until review or CI.

Failure Recovery: Git Rollback vs PR Rejection

AutoResearch's failure mode is clean: val_bpb doesn't improve, git reset runs, the codebase returns to the last known-good state. No human required. The failure is atomic and immediate.

Coding agent failure is messier. A change that passes automated tests might fail code review for readability, correctness of logic the tests don't cover, or architectural misalignment the reviewer catches. PR rejection is a social process, not a binary metric. Rollback is trivial mechanically, but understanding what went wrong and what to do differently requires human judgment that AutoResearch's loop explicitly bypasses.

Human Role: Goal-Setter vs Reviewer

In AutoResearch, the human's contribution ends when they write program.md. Once the loop starts — and the instruction is literally NEVER STOP — the agent runs until manually killed. The human reviews results in the morning but doesn't intervene mid-loop.

With coding agents, the human is an active participant in the review cycle. The agent proposes a change; the human reviews it; the agent revises; the human merges. Even in highly automated setups with auto-approve modes, the human retains responsibility for what gets merged. The agent can reduce the review burden dramatically, but it doesn't eliminate it.

Output: Improved Training Metric vs Mergeable Code Diff

AutoResearch produces a staircase of val_bpb values and a git history of accumulated code improvements to train.py. The "output" is a better training configuration — code changes that, when run, produce a better model. A coding agent produces a code diff that must be reviewed, approved, and merged by a human before it affects anything.

The output type drives the accountability structure. No one needs to sign off on AutoResearch's overnight run (except to review it afterwards). Every change from a coding agent requires a human decision before it lands in the shared codebase.

What AutoResearch's Architecture Reveals About Agent Design

Beyond the specific comparison, AutoResearch's design encodes lessons that apply to coding agent architecture more broadly.

Fixed Time Budgets as a Constraint Pattern

The 5-minute wall-clock budget makes experiments directly comparable regardless of what the agent changes. It also bounds the cost of any single failure — a crash or a bad idea costs 5 minutes, not an unbounded run. For coding agents, this pattern maps to the idea of bounded subtasks: give an agent a 10-minute or 20-minute budget for a specific subtask, then evaluate and continue, rather than allowing open-ended execution on a complex task. Unbounded execution is where agents tend to compound errors.

Simplicity Bias as an Explicit Instruction

Karpathy encodes a specific heuristic in program.md: a tiny improvement that adds complexity isn't worth keeping, but deleting code while maintaining performance is always a win. This is an explicit instruction to bias toward simpler solutions over complex ones. Most coding agent workflows don't encode this kind of taste explicitly — the agent is told what to achieve but not how to weigh tradeoffs between different valid solutions. The quality of the instruction document shapes the quality of the output.

program.md as a Human-AI Contract

The most transferable insight from AutoResearch is that the written specification is the system. There's no orchestration framework, no state machine, no supervisor agent. The loop exists entirely because the LLM reads a well-written document and follows it. The implication: the bottleneck in autonomous agent performance isn't the model — it's the quality of the specification given to the model. A CLAUDE.md that's vague produces agents that behave vaguely. A program.md that's precise about success criteria, failure modes, and scope produces agents that run reliably overnight.

Practical Implications for Teams Evaluating Coding Agents

When You Want Exploration vs Delivery

AutoResearch is an exploration tool. It's appropriate when you have a well-defined optimization target, no constraint on what form the solution takes, and time to burn on a GPU overnight. If you're exploring the design space of a training loop or a performance-critical algorithm, it's a legitimate approach.

AI coding agents are delivery tools. They're appropriate when the constraint is getting reviewable code into the codebase — where what you need isn't "any solution that improves the metric" but "a change that a human engineer would be comfortable merging." The deliverable has social requirements, not just metric requirements.

What "Autonomous" Actually Requires in Each Context

For AutoResearch to run autonomously, you need: a single file in scope, a deterministic scalar metric, and a fixed time budget per experiment. Remove any of these and the autonomous loop breaks down — either the agent has too much scope to bound its blast radius, or success is ambiguous, or experiments aren't comparable.

For a coding agent to run autonomously (in the sense of producing accepted changes without per-change human review), you need: a well-maintained CLAUDE.md or equivalent specification, a comprehensive automated test suite that catches regressions, a scoped task that the agent can verify against existing tests, and a human review step at the end — not in the middle. The "autonomy" in coding agents is autonomy of execution, not autonomy of acceptance. The merge decision stays with a human.

FAQ

Can AutoResearch Be Used for Coding Tasks (Not ML)?

The pattern applies anywhere you have a single file to modify, a runnable evaluation, and a scalar metric. Community forks have applied it to prompt optimization, coding skill improvement, and performance benchmarks. The awesome-autoresearch curated list tracks these adaptations. The canonical repo targets ML training and doesn't ship with infrastructure for general coding tasks. Adaptations require you to build the evaluation layer — which is the hard part. For a production codebase, "evaluation" is a test suite, a linter, and a type checker, which the standard test runner can run. The limiting factor is usually that the codebase spans many files and doesn't fit cleanly into a single-file, single-context-window scope the way AutoResearch's train.py does.

Is Ultraplan in Claude Code Similar to AutoResearch?

Ultraplan is a research preview feature in Claude Code that offloads complex planning to cloud infrastructure running Opus 4.6, freeing the local terminal during the planning phase. The human reviews the resulting plan in a browser with inline comments, then approves execution locally or in the cloud. It's not an autonomous loop — it's a planning enhancement with a human review gate before any code is written. AutoResearch, by contrast, has no human review gate in the loop. The structural difference is that Ultraplan keeps the human in the approval chain; AutoResearch explicitly removes the human from the loop entirely during execution.

What's the Closest Coding Agent Equivalent to AutoResearch's Loop?

The closest approximation in a coding agent context is a test-driven ratchet loop: the agent makes a change, runs the full test suite, commits if tests pass, reverts if tests fail, and repeats with a new approach. This replicates AutoResearch's binary metric (tests pass or not) and git rollback pattern. The difference is scope — you're evaluating an entire test suite across a multi-file codebase, not a single metric against a single file. Some teams implement this as a post-merge validation loop rather than a pre-merge experimentation loop: the agent is allowed to make changes that pass CI automatically, without per-change human review, under strict scope constraints.

Should Engineering Teams Care About AutoResearch?

Yes, for the architectural lessons rather than the tool itself. The design pattern — write the specification in English, use git as the rollback primitive, enforce a binary metric, fix the time budget — is directly applicable to designing autonomous coding agent workflows. The specific tool targets ML experimentation on a single GPU with NVIDIA hardware, which is a narrow use case for most engineering teams. The pattern of "define success unambiguously, bound the scope, enforce binary keep-or-revert, let the agent loop" is not narrow — it's a template for any autonomous agent task that can be structured the same way.

Related Reading

- AutoResearch Explained: How Karpathy's AI Research Agent Works — The technical foundation: what the ratchet loop,

program.md, and 5-minute budget actually do. - Claude Code vs Verdent: Multi-Agent Architecture Compared — How coding agents handle multi-agent parallelism, repo-level context, and isolation — the capabilities AutoResearch doesn't need.

- LLM Knowledge Base for Coding Agents: Beyond RAG — The persistent context layer that gives coding agents the project memory AutoResearch deliberately omits.

- Claude Code Auto Mode and Cloud Auto-Fix — Claude Code's autonomous execution modes and where human approval is still required.

- What Is G0DM0D3? — Another tool in the autonomous AI evaluation space, built for model comparison rather than code improvement.