You know that moment when you're three hours deep into reviewing a gnarly PR, context window bursting, and Claude just… stops you mid-thought? I've been there more times than I'd like to admit. So when Anthropic dropped the Claude for Open Source program on February 26, 2026 — six months of free Claude Max 20x, no strings, no credit card required — I had to dig into every detail. Because "free" and "20x" are doing a lot of heavy lifting in that headline, and I wanted to know exactly what it means for the maintainers who actually need it.

Here's everything you need to know before you apply.

What Does "20x" Actually Mean?

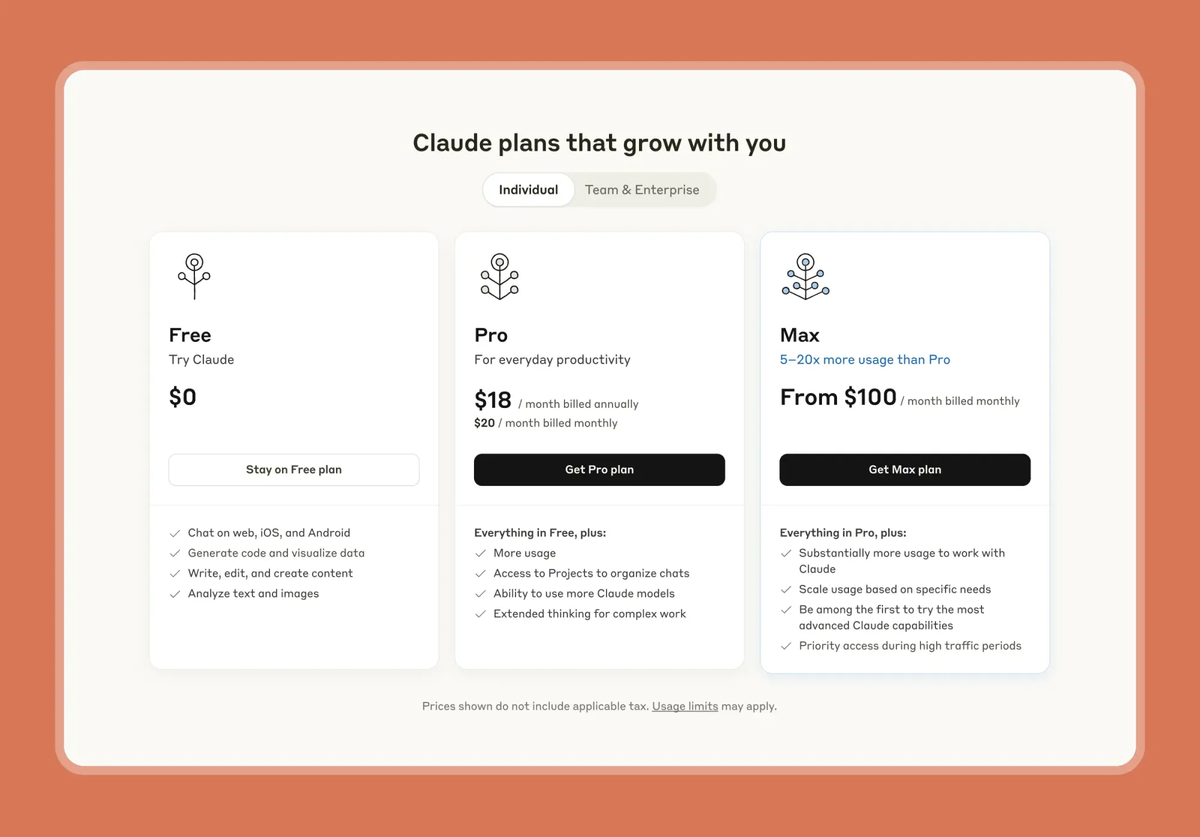

Pro vs Max 5x vs Max 20x — a plain comparison

The "20x" refers to usage capacity relative to the Pro plan. Not a different model, not extra features — just dramatically more runway before Claude tells you to slow down.

Here's how the three paid tiers stack up as of March 2026 — numbers drawn from Anthropic's Max plan page and usage limits documentation:

| Plan | Price/mo | Usage vs Pro | ~Messages per 5-hr window | Claude Code | Extended Thinking |
|---|---|---|---|---|---|
| Pro | $20 | 1x (baseline) | ~40–45 | ✅ | ✅ |
| Max 5x | $100 | 5x | ~225 | ✅ | ✅ |
| Max 20x | $200 | 20x | ~900 | ✅ | ✅ |

Message count ranges are estimates — Anthropic doesn't publish exact figures. Actual throughput varies by model, context length, and whether Extended Thinking is active. What Anthropic does confirm: Max 20x provides 20x the usage capacity of Pro across the same rolling 5-hour window structure.
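If you want to sanity-check the table yourself, the scaling is simple multiplication. Here's a minimal sketch that assumes the ~40–45 Pro estimate above is the baseline and that the multipliers apply linearly. Both are assumptions for illustration, not figures Anthropic publishes, and the function name is my own.

```python
# Rough per-window message estimates, scaled from the Pro baseline.
# The baseline range is the article's estimate, not an official figure.
PRO_MESSAGES_PER_WINDOW = (40, 45)

PLAN_MULTIPLIERS = {"Pro": 1, "Max 5x": 5, "Max 20x": 20}


def estimated_messages(plan: str) -> tuple[int, int]:
    """Return a (low, high) message estimate for one 5-hour window."""
    multiplier = PLAN_MULTIPLIERS[plan]
    low, high = PRO_MESSAGES_PER_WINDOW
    return low * multiplier, high * multiplier


for plan in PLAN_MULTIPLIERS:
    low, high = estimated_messages(plan)
    print(f"{plan}: ~{low}-{high} messages per 5-hour window")
```

The top of each range lines up with the table (225 and 900). Treat the output as a planning number only, since real throughput depends on model, context length, and Extended Thinking.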

One thing that trips people up: Claude.ai chat and Claude Code share the same quota pool, so heavy terminal users need to budget accordingly. Usage resets on a rolling 5-hour window, not at midnight and not daily.

Two additional limits most guides don't mention: Max plans carry two weekly usage limits — one across all models, one specifically for Sonnet models — both resetting seven days after your session starts. Anthropic also reserves the right to impose monthly or per-feature caps at their discretion. The weekly limits were introduced in August 2025 and affect fewer than 5% of subscribers based on Anthropic's own estimate — but for intensive Claude Code workflows, it's worth knowing they exist.
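To make the two reset clocks concrete, here's a small sketch. It assumes the 5-hour window and the 7-day weekly window are both measured from the moment a session starts, which is my reading of the description above rather than a documented guarantee. The `reset_times` helper is hypothetical.

```python
from datetime import datetime, timedelta

# Assumed window lengths, taken from the limits described above.
SESSION_WINDOW = timedelta(hours=5)
WEEKLY_WINDOW = timedelta(days=7)


def reset_times(session_start: datetime) -> dict[str, datetime]:
    """Estimate when the session and weekly limits would reset."""
    return {
        "session_reset": session_start + SESSION_WINDOW,
        "weekly_reset": session_start + WEEKLY_WINDOW,
    }


# Example: a late-evening session that starts at 22:30 on March 2.
start = datetime(2026, 3, 2, 22, 30)
resets = reset_times(start)
print(resets["session_reset"])  # 2026-03-03 03:30:00
print(resets["weekly_reset"])   # 2026-03-09 22:30:00
```

The point of the example is that a session started late in the evening resets in the middle of the night, not at midnight.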

What changes at 20x for daily maintainer work

This is where it gets practical. At Pro limits, a serious code review session — indexing a large repo, running iterative fixes, working through multi-file refactors — can burn through your window before you're halfway done. At 20x, that ceiling essentially disappears for a single user.

What you're actually buying is sustained, uninterrupted context. Long conversations keep their momentum. Claude Code loops that read your repo, apply changes, run tests, and fix failures don't get cut off mid-cycle. For maintainers who use Claude as a thinking partner across multiple PRs in a single afternoon, that's the real unlock.

One boundary worth being explicit about: Max 20x is an individual plan. Teams requiring shared access need the Team plan — Anthropic's Team plan documentation lists Standard seats at $25/user/month (annual) or $30 month-to-month, and Premium seats at $150/user/month with Claude Code included. The OSS program grant applies to one individual account — there's no "split the 20x across co-maintainers" option. Importantly, one team member hitting their limit doesn't affect other team members' limits, as Anthropic's documentation makes clear.

Who Should Apply (And Who Shouldn't)

You're a fit if…

Anthropic's eligibility criteria are specific. You need to meet both an identity condition AND an activity condition simultaneously:

Identity (one of):

- Primary maintainer or core team member of a public repo with 5,000+ GitHub stars

- Primary maintainer or core team member of a package with 1M+ monthly NPM downloads

- Maintainer of something the ecosystem "quietly depends on" — even if you don't hit those numbers

Activity:

- Commits, releases, or PR reviews within the last 3 months
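The "identity AND activity" rule is easy to express as a quick self-check. This is only a sketch of the published thresholds: the `Maintainer` fields and the 90-day reading of "last 3 months" are my assumptions, and it deliberately can't model the "quietly depends on" exception, which is judged by a human reviewer.

```python
from dataclasses import dataclass
from datetime import date, timedelta

STAR_THRESHOLD = 5_000
NPM_DOWNLOAD_THRESHOLD = 1_000_000
ACTIVITY_WINDOW = timedelta(days=90)  # "last 3 months", approximated


@dataclass
class Maintainer:
    is_core_maintainer: bool      # primary maintainer or core team member
    github_stars: int             # stars on the public repo
    monthly_npm_downloads: int    # 0 if the project is not on NPM
    last_activity: date           # latest commit, release, or PR review


def meets_published_thresholds(m: Maintainer, today: date) -> bool:
    """True only if one identity condition AND the activity condition hold."""
    identity = m.is_core_maintainer and (
        m.github_stars >= STAR_THRESHOLD
        or m.monthly_npm_downloads >= NPM_DOWNLOAD_THRESHOLD
    )
    active = (today - m.last_activity) <= ACTIVITY_WINDOW
    return identity and active


candidate = Maintainer(
    is_core_maintainer=True,
    github_stars=7_200,
    monthly_npm_downloads=0,
    last_activity=date(2026, 2, 20),
)
print(meets_published_thresholds(candidate, today=date(2026, 3, 15)))  # True
```

A `False` here doesn't mean "don't apply". It means your case rests on the exception clause, so the free-text part of the application carries the weight.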

You're not a fit if…

- You're a contributor but not a core maintainer or primary decision-maker on the repo

- Your project has strong impact but low visibility metrics — unless you make a compelling case in the application

- You haven't been actively committing or reviewing in the past quarter

- You're looking for team-wide coverage: this program is individual-only

- You're planning to use the grant to power automations or pipelines rather than direct maintainer work. If an ANTHROPIC_API_KEY environment variable is set, Claude Code routes billing to that API key instead of your subscription, which means separate charges outside the grant entirely


Real Use Cases for Open Source Maintainers

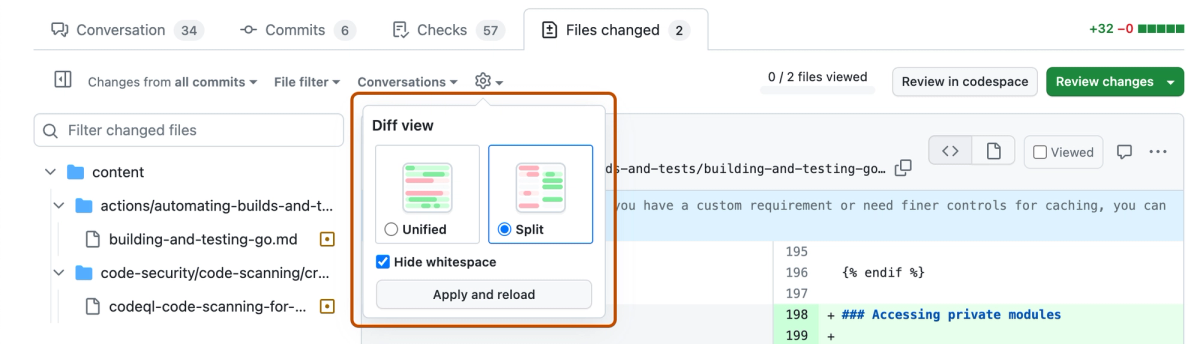

Reviewing large PRs

This is the use case I keep coming back to. Community PRs on popular projects can be sprawling — touching multiple modules, with undocumented side effects, questionable test coverage. At Pro limits, you might get through the analysis but run out of runway before you've drafted feedback. At Max 20x, you can paste the full diff, ask Claude to trace the blast radius, check for security implications, and draft a reviewer comment — all in one session.

A concrete workflow that works well at 20x:

1. Paste full diff (even 2,000+ line PRs)

2. Ask Claude to map which modules are affected and what assumptions each change makes

3. Ask for a list of regression scenarios worth testing

4. Draft review comment based on Claude's analysis

5. Iterate on tone and specifics without losing context

This loop breaks at Pro limits somewhere between steps 3 and 4 on a large PR. At 20x, it runs to completion.

Documentation generation

Docs debt is the silent killer of open source projects. With 20x headroom, you can feed Claude an entire module's source, ask it to generate API docs, README sections, and migration guides in a single pass — then iterate without hitting a wall.

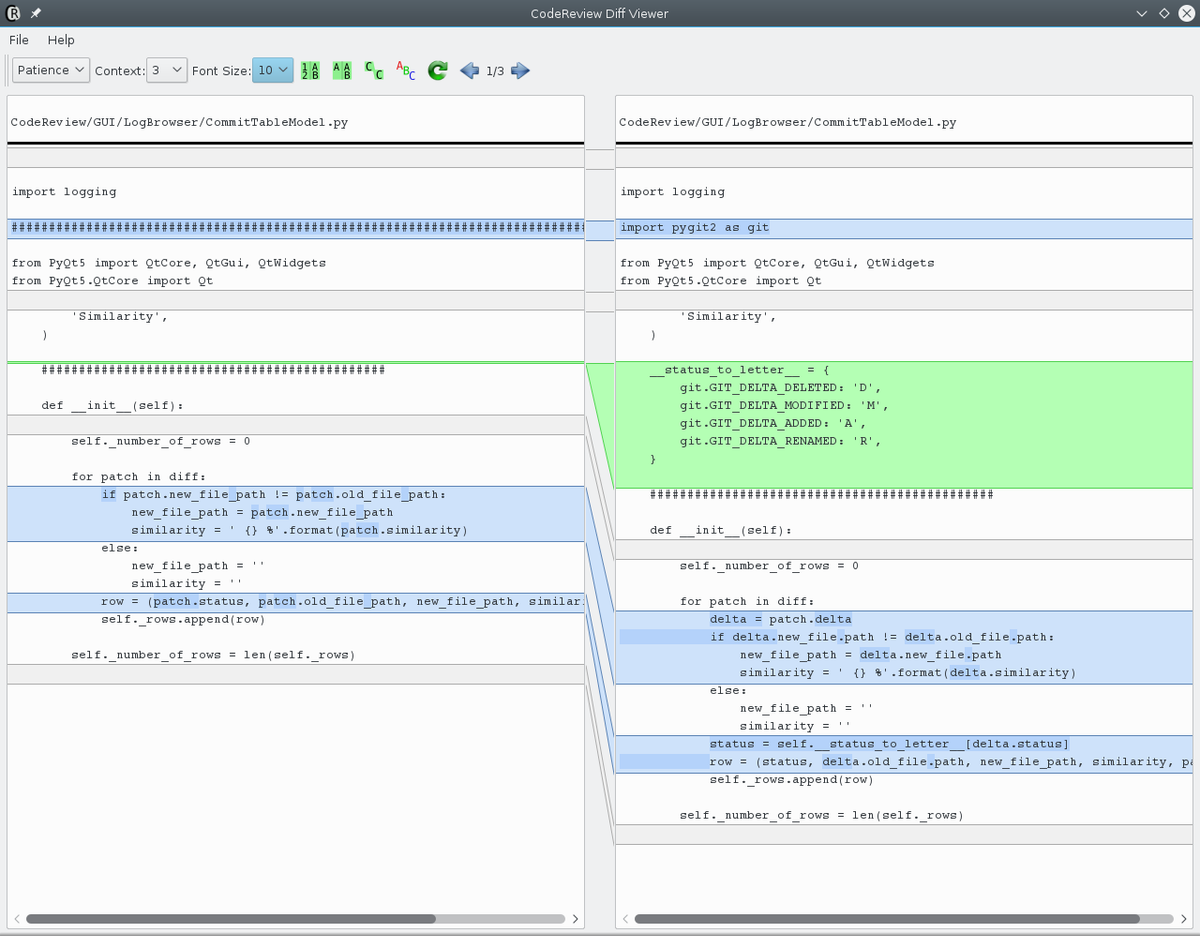

Refactoring legacy code

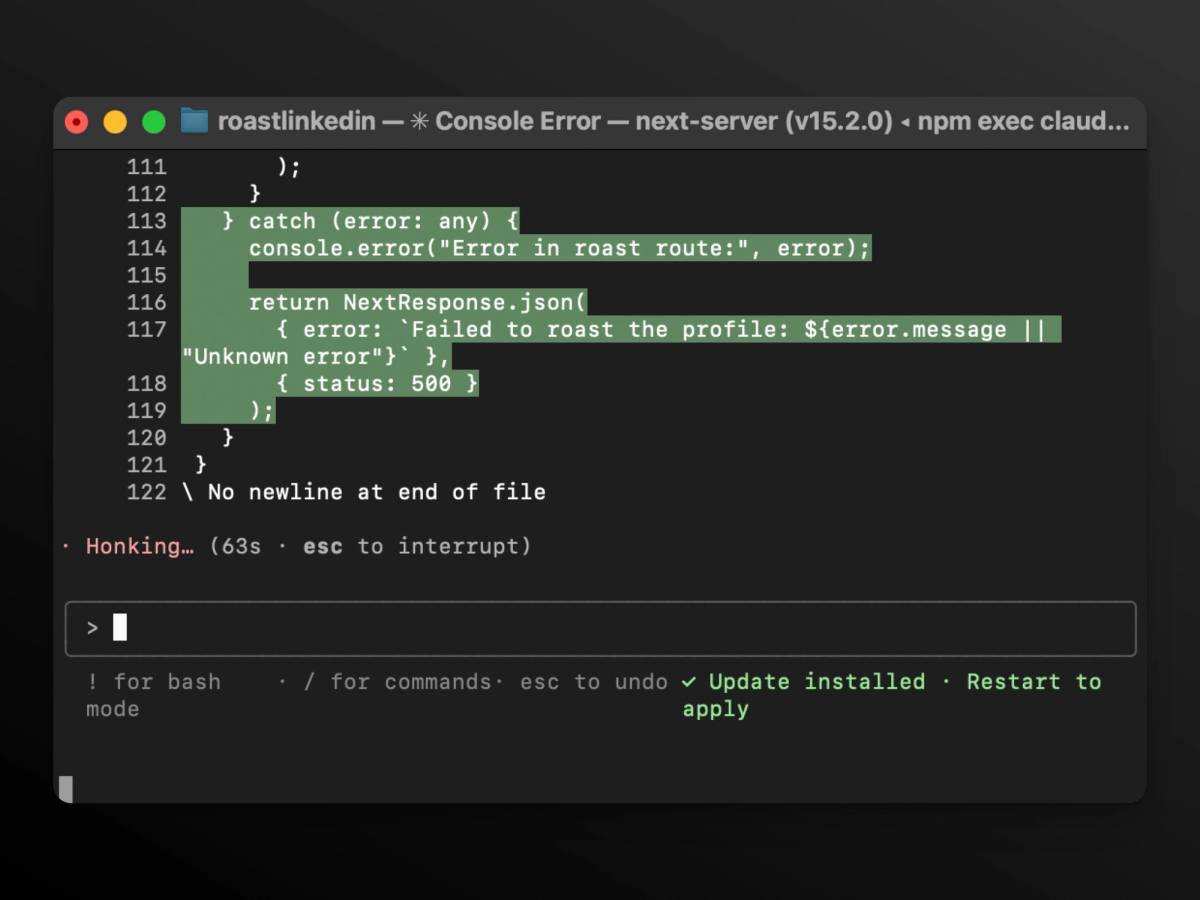

Here's where Claude Code specifically shines. You're not chatting — you're running terminal-based agentic loops. Claude reads your repo structure, proposes a refactoring plan, applies changes file by file, runs your test suite, fixes failures. That loop needs sustained context. The Claude Code official documentation confirms that each agentic step — reading files, generating changes, running tests — counts as part of the shared quota pool, meaning a single refactor session consumes far more than an equivalent number of chat messages. At 20x, that consumption headroom lets the loop run to completion.

Issue triage automation

High-volume repos get flooded with duplicate issues, vague bug reports, and feature requests that are really just misunderstood docs problems. Extended Thinking — included in Max 20x — helps Claude reason through ambiguous cases, cross-reference existing issues, and draft initial responses. Tidelift's 2024 Open Source Maintainer Report found that maintainer burnout is driven substantially by the volume of low-signal interactions. Triage automation is where that volume problem is most directly addressable.

6 Months Free — What It Really Means

The math behind $1,200 worth of access

Claude Max 20x is $200/month — confirmed on Anthropic's pricing page. Six months = $1,200 in value.

What you get included:

- Claude.ai (web, desktop, mobile)

- Claude Code (terminal-based agentic coding)

- Extended Thinking for complex reasoning

- Memory for long-term project context

- Priority access to new models and features

What this grant doesn't include: API access. The OSS program gives you a Max 20x subscription — not API credits. If an ANTHROPIC_API_KEY environment variable is set on your system, Claude Code routes authentication to that key instead of your subscription, resulting in separate API charges. If you're building automation on top of this grant, audit your environment variables first.
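A quick way to run that audit is to check the variable before you launch a session. This is a minimal sketch: `api_key_is_set` is a hypothetical helper, and it only inspects the current process environment, so a key exported in a shell profile or CI config that this process doesn't inherit would not show up.

```python
import os


def api_key_is_set(environ=None) -> bool:
    """Return True if ANTHROPIC_API_KEY holds a non-empty value."""
    env = os.environ if environ is None else environ
    return bool(env.get("ANTHROPIC_API_KEY", "").strip())


if api_key_is_set():
    print("ANTHROPIC_API_KEY is set: usage may bill to that key, not the grant.")
else:
    print("ANTHROPIC_API_KEY is not set in this environment.")
```

If the check reports the key is set and you didn't intend that, unset it in the shell you run Claude Code from before starting.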

No auto-renewal to Max 20x after the free period ends. When your six months are up, you drop back to whatever plan you were on before — you won't be charged $200/month when it expires.

180 days = how many release cycles for your project?

Six months is roughly 12 minor release cycles for an active project on a bi-weekly cadence (closer to 25 on a weekly one), or 2–4 major version releases for projects with longer cycles. The practical value compounds over that window. You build workflows around the higher limits, discover which use cases actually save you hours per week, and by month five you have a real answer to "is this worth $200/month to continue."
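To plug in your own cadence, the arithmetic is a one-liner. The sketch below counts only complete cycles that fit inside 180 days. The cadence values are examples, not recommendations, and `release_cycles` is a name I made up.

```python
GRANT_DAYS = 180
GRANT_MONTHS = 6
MONTHLY_PRICE_USD = 200  # Max 20x list price cited above


def release_cycles(cadence_days: int, grant_days: int = GRANT_DAYS) -> int:
    """Number of complete release cycles that fit in the grant period."""
    return grant_days // cadence_days


print(release_cycles(7))    # weekly releases -> 25
print(release_cycles(14))   # bi-weekly releases -> 12
print(release_cycles(90))   # quarterly releases -> 2
print(MONTHLY_PRICE_USD * GRANT_MONTHS)  # list-price value of the grant -> 1200
```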

How to Apply + What to Prepare

The application form is straightforward — name, email, project details — except for one field that matters more than the rest. Head to the official application page when you're ready.

What to put in the "Other info" field (this matters most)

The metrics speak for themselves if you hit the thresholds. The "Other info" field is where most applications either land or die.

If you clearly meet the criteria: Describe your specific planned workflows. "I will use Claude Code to automate PR review triage and generate release notes" is more compelling than leaving it blank. It signals intent, not just eligibility.

If you're in the gray zone: Be direct and specific. Name the companies or projects that depend on your work. Quantify the dependency — "used as a direct dependency by X major packages" or "embedded in Y enterprise stacks." The exception clause — "if you maintain something the ecosystem quietly depends on, apply anyway" — is real, not boilerplate. GitHub's Open Source Guides document exactly this dynamic: critical infrastructure maintained by solo developers who don't show up in star counts. If that's you, say so.

Avoid: Generic statements about your passion for open source. Vague descriptions of your project. Anything that reads like a cover letter.

Applications are reviewed on a rolling basis with up to 10,000 total recipients. No hard deadline, but earlier applications face less competition for remaining spots.

Bottom line: If you're a primary maintainer of a qualifying project and you're already using Claude — or you've been curious about whether it fits into a serious maintenance workflow — this is a no-brainer application. The six-month window is enough time to build real habits and evaluate whether Max 20x is worth continuing. Apply, use it seriously, and decide with data rather than speculation.
