Claude Managed Agents launched in public beta on April 8, 2026. If you're evaluating whether to use it, the pricing model is straightforward in structure but has a few specifics that change how you estimate costs. This article walks through the billing mechanics as documented on Anthropic's official pricing page — no secondary sources, no guesswork.

How Claude Managed Agents Pricing Works

Two billing dimensions — tokens + session runtime

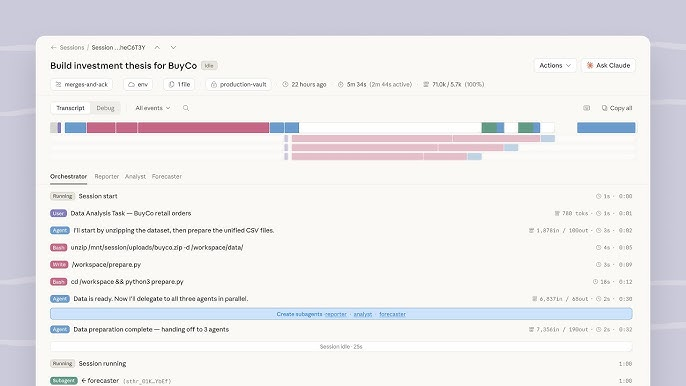

Managed Agents bills on exactly two dimensions: the tokens consumed by Claude during the session, and the time the session spends in running status. There is no flat monthly fee, no per-agent license, and no infrastructure charge on top.

Every token — input, output, cache write, cache read — is billed at the same rates as the standard Claude API. The session runtime charge is separate and additional: $0.08 per session-hour.

Session runtime billed to the millisecond, only while status is "running"

The official pricing page states: runtime is "measured to the millisecond and accrues only while the session's status is running."

This means the $0.08/hour rate only accumulates when the session is actively executing work. A session that runs for 20 minutes of active execution is charged $0.08 × (20/60) = $0.0267 in runtime, regardless of how long the session has existed.

Idle time, waiting, and terminated sessions don't count

The following session states do not accrue runtime charges, per the official documentation:

- Idle — session is waiting for your next message or for a tool confirmation

- Rescheduling — session is queued or transitioning

- Terminated — session has ended

For workloads with significant gaps between active processing steps — human-in-the-loop workflows, interactive debugging, periodic monitoring tasks — the idle exclusion materially reduces runtime cost compared to wall-clock billing.

Token Costs

Same rates as standard Claude model pricing

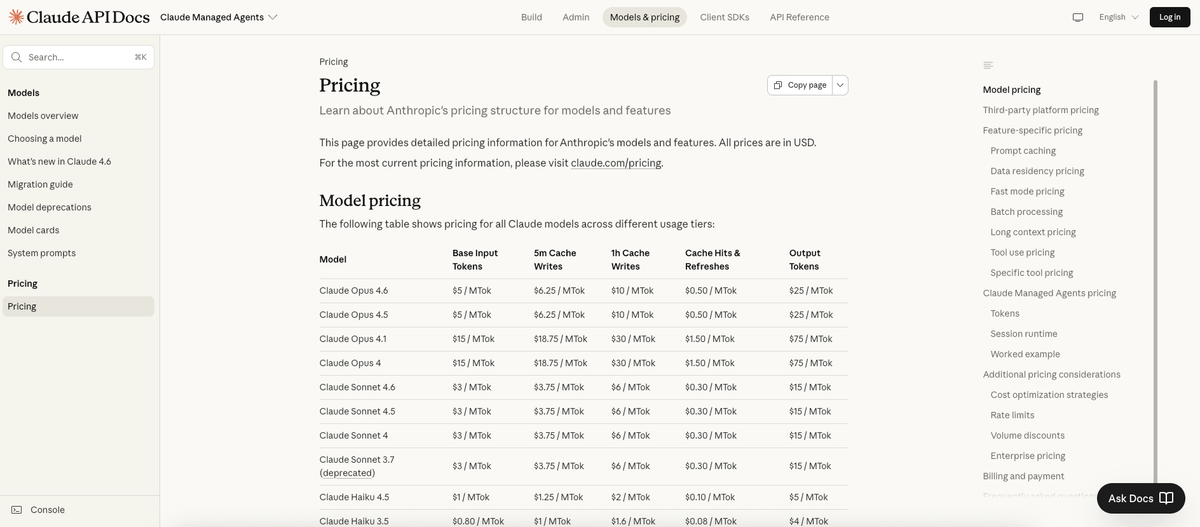

All tokens in a Managed Agents session bill at identical rates to the standard Messages API. From the official pricing table:

| Model | Input (per MTok) | Output (per MTok) |

|---|---|---|

| Claude Opus 4.6 | $5.00 | $25.00 |

| Claude Sonnet 4.6 | $3.00 | $15.00 |

| Claude Haiku 4.5 | $1.00 | $5.00 |

MTok = million tokens. Model selection is the dominant cost lever for most workloads — Opus 4.6 output tokens cost 5× more than Haiku 4.5 output tokens.

Prompt caching multipliers apply

Prompt caching works the same in Managed Agents sessions as in standard API calls. The official multipliers:

| Cache operation | Price multiplier |

|---|---|

| 5-minute cache write | 1.25× base input price |

| 1-hour cache write | 2.0× base input price |

| Cache read (hit) | 0.1× base input price |

Cache reads at 0.1× mean a 90% discount on repeated context. For sessions with consistent system prompts, large shared knowledge bases, or recurring task structures, caching is the most significant available cost reduction.

Web search inside sessions — $10 per 1,000 searches

If the session triggers web search, each search is billed at the standard rate of $10 per 1,000 searches ($0.01 per search). This is in addition to the standard token costs for processing the search results. Searches that return errors are not billed.

What's NOT Separately Billed

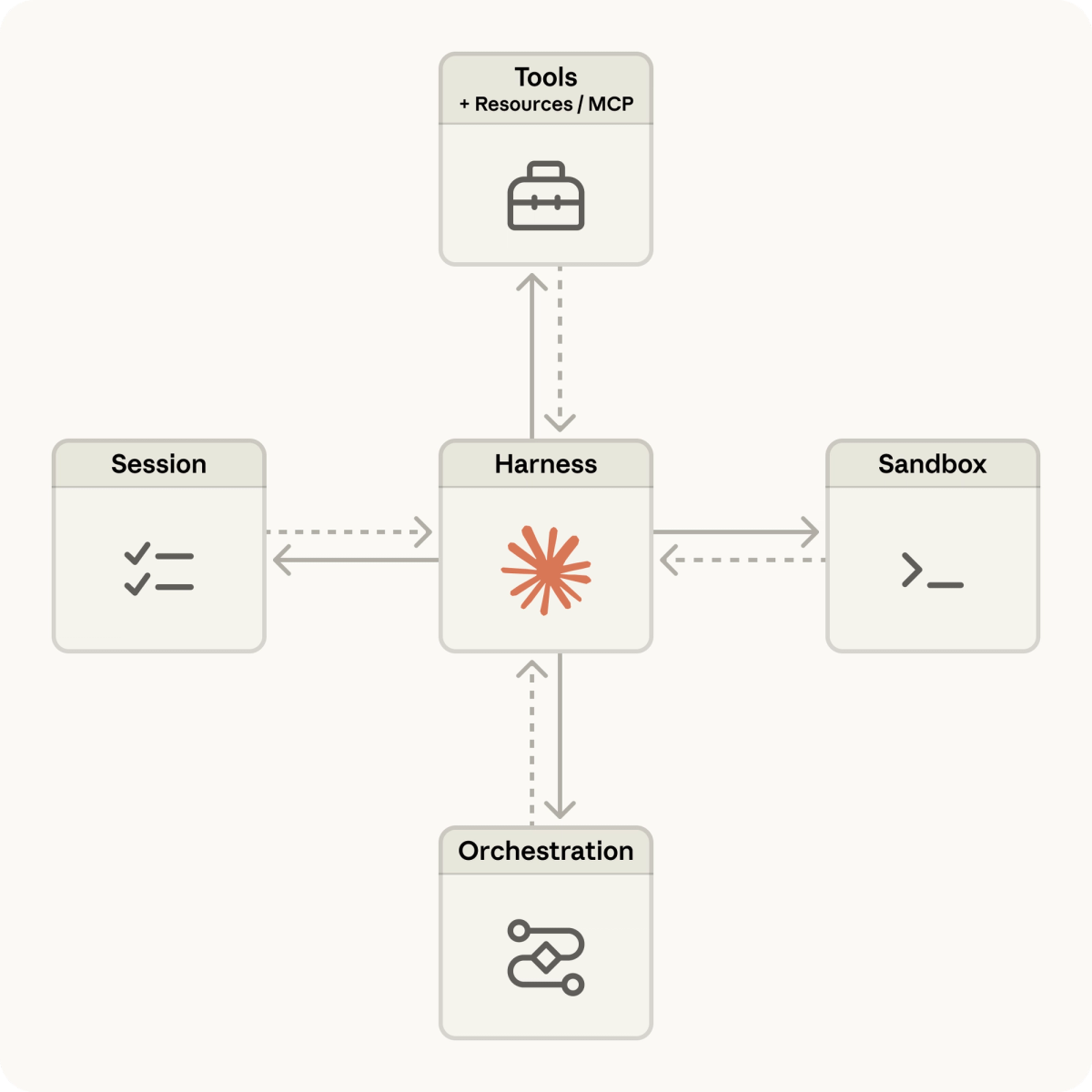

Container hours — replaced by session runtime

The standard Claude API has a separate Code Execution tool that bills by container-hour for compute. The official pricing page is explicit: "Session runtime replaces the Code Execution container-hour billing model when using Claude Managed Agents. You are not separately billed for container hours on top of session runtime."

This is relevant if you're comparing Managed Agents costs to a scenario where you use Code Execution as a standalone tool — the container billing you'd see there is replaced, not added on top.

Sandbox and tool execution — included

The sandboxed execution environment, state management, checkpointing, and error recovery infrastructure are covered by the session runtime charge. You are not paying separately for the compute that runs tool calls inside the session.

Cost Examples

One-hour Opus 4.6 coding session — official worked calculation

Anthropic's own pricing page includes this worked example. A one-hour coding session using Claude Opus 4.6 consuming 50,000 input tokens and 15,000 output tokens:

| Line item | Calculation | Cost |

|---|---|---|

| Input tokens | 50,000 × $5 / 1,000,000 | $0.25 |

| Output tokens | 15,000 × $25 / 1,000,000 | $0.38 |

| Session runtime | 1.0 hour × $0.08 | $0.08 |

| Total | $0.71 |

Note what these proportions tell you: in this example, the session runtime is $0.08 out of a $0.705 total — 11% of the cost. Tokens are 89%. For most workloads, runtime cost is a minor factor; token cost is where optimization matters.

With prompt caching — cost reduction

Same session, but 40,000 of the 50,000 input tokens are served from cache (cache reads at 0.1× standard input rate):

| Line item | Calculation | Cost |

|---|---|---|

| Uncached input tokens | 10,000 × $5 / 1,000,000 | $0.05 |

| Cache read tokens | 40,000 × $5 × 0.1 / 1,000,000 | $0.02 |

| Output tokens | 15,000 × $25 / 1,000,000 | $0.38 |

| Session runtime | 1.0 hour × $0.08 | $0.08 |

| Total | $0.53 |

Prompt caching reduces this session from $0.705 to $0.525 — a 25.5% reduction. At scale, across many sessions sharing similar system prompts or context, the savings compound. Cache reads pay off after just one subsequent retrieval on the 5-minute TTL, or after two retrievals on the 1-hour TTL.

A Sonnet 4.6 session for comparison

The same workload profile (50K input / 15K output / 1 hour active) on Claude Sonnet 4.6:

| Line item | Cost |

|---|---|

| Input tokens (50K × $3/MTok) | $0.15 |

| Output tokens (15K × $15/MTok) | $0.23 |

| Session runtime | $0.08 |

| Total | $0.46 |

Switching from Opus 4.6 to Sonnet 4.6 saves $0.25 on this session — 35% less. The runtime charge is identical regardless of model choice, which reinforces that model selection, not runtime, is the primary cost variable.

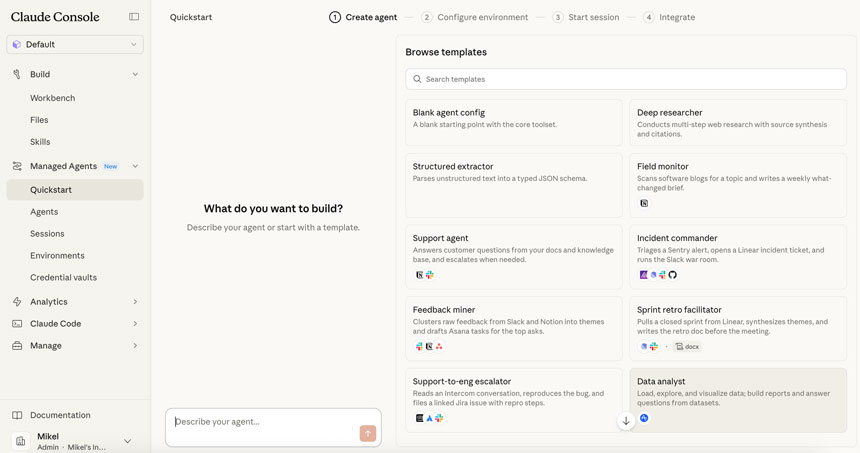

Who This Pricing Works For

Enterprise teams with high-value async workflows

The pricing structure suits workloads where each session produces meaningful output — customer support automation, document processing, complex analysis pipelines, multi-step research tasks. At $0.705/session for a substantial Opus 4.6 hour-long task, the unit economics work if the session output replaces meaningful human time or produces high-value deliverables.

The idle-time exclusion specifically benefits asynchronous workflows: a session that processes a document for 10 minutes and then waits 50 minutes for human review accumulates only 10 minutes of runtime charges, not 60.

Where it gets expensive — high-volume, low-value tasks

The pricing structure becomes difficult to justify for high-frequency, low-complexity tasks. A workflow that triggers hundreds of short sessions per day — each processing a trivial classification or simple extraction — accumulates runtime and token costs that may exceed the value produced, especially on Opus 4.6.

For that use case, either: (a) switch to Sonnet or Haiku to reduce token costs, (b) batch similar tasks within fewer longer sessions, or (c) use the standard Messages API with Batch API discounts for non-interactive workloads. The Batch API is explicitly not available in Managed Agents (see below).

Beta Limits to Know

Rate limits per organization

The Managed Agents overview documentation states rate limits of 60 requests per minute for create endpoints and 600 requests per minute for read endpoints. Organization-level spend limits and tier-based rate limits also apply.

Beta header requirement

All Managed Agents endpoints currently require the managed-agents-2026-04-01 beta header. The Claude SDK sets this automatically. Direct API calls need it manually. This is a current beta implementation detail — the product is under active development.

Messages API modifiers that do not apply

The official pricing page lists these explicitly: several standard API modifiers are incompatible with Managed Agents sessions. The key one for cost planning:

Batch API** discounts do not apply.** Anthropic's reasoning, quoted directly: "Sessions are stateful and interactive. There is no batch mode." If your architecture plans for 50% Batch API discounts on Managed Agents sessions, that is not available. Batch API discounts apply to the standard Messages API only.

Other excluded modifiers: Fast mode pricing, Data residency multiplier (inference_geo), Third-party platform pricing (Managed Agents is available only through the direct Claude API, not Bedrock or Vertex AI).

Enterprise custom pricing available

For high-volume production workloads, Anthropic offers custom pricing arrangements through the enterprise sales team. Volume discounts are negotiated case-by-case. The standard public pricing above applies until a custom arrangement is in place.

FAQ

Is there a free tier for Managed Agents?

No dedicated free tier. New Anthropic API users receive a small amount of free credits for testing the API. These credits apply to standard usage; there is no Managed Agents-specific free trial. Enterprise evaluation credits may be available — contact Anthropic sales for details.

How does session runtime billing work exactly?

Runtime accrues only while the session status is running, measured to the millisecond. The charge is $0.08 per session-hour. A 90-second active run costs $0.08 × (90/3600) = $0.002. Idle time, rescheduling, and terminated states generate no runtime charge.

Can I use Batch API discounts with Managed Agents?

No. This is explicitly documented on the official pricing page. Batch API applies to the Messages API only; Managed Agents sessions are stateful and interactive, with no batch mode available. If you need asynchronous processing with Batch API discounts, use the Messages API directly rather than Managed Agents.

How does this compare to self-hosted agent infrastructure costs?

The comparison depends heavily on your engineering cost assumptions and workload volume. The session runtime charge of $0.08/hour covers sandboxing, state management, checkpointing, and tool execution infrastructure. Running equivalent infrastructure yourself — compute, orchestration, monitoring, error recovery — involves both direct cloud costs and ongoing engineering maintenance. For teams earlier in the build-vs-buy evaluation, the managed service typically wins on total cost through the first year for most workload volumes; extremely high-volume, continuously-running workloads may eventually favor custom infrastructure at scale.

Related Reading

- Claude Code vs Verdent: Multi-Agent Architecture Compared — How Managed Agents relates to the broader landscape of coding agent infrastructure.

- LLM Knowledge Base for Coding Agents: Beyond RAG — Persistent context strategies that affect token consumption and, therefore, Managed Agents session costs.

- AutoResearch vs AI Coding Agents: Where Autonomous Research Ends — Frameworks for thinking about autonomous execution, relevant as Managed Agents introduces hosted long-running agent sessions.

- What Is Muse Spark? Meta's New AI Model Explained — For teams evaluating which model to run inside Managed Agents sessions, the frontier model comparison landscape.

- GLM-5-Turbo vs GLM-5V-Turbo: Which Agent Model to Use — Alternative model options for teams routing certain agent tasks through non-Anthropic models.