Most developers evaluating terminal coding agents hit the same wall: Claude Code is the mature, well-documented default, but the cost adds up fast on output-heavy agent runs. DeepSeek-TUI showed up as the obvious alternative once DeepSeek V4 landed. The comparison looks clean from a distance. It gets complicated when you actually dig in.

Versions compared: DeepSeek-TUI v0.8.8 (May 2026, active development, not GA) vs Claude Code (current May 2026 release, GA). Both were evaluated in the same week, which matters because both tools are changing fast.

One fact changes the comparison upfront: Claude Code can run DeepSeek V4 as its backend, using environment variables that DeepSeek documents in its own API integration guide for Claude Code. This is not a niche workaround. It means you're not forced to choose between Claude Code's UX and DeepSeek's cost structure. That path has its own tradeoffs, covered below.

TL;DR — Which One Fits You

Pick DeepSeek-TUI if you're committed to DeepSeek V4, want the RLM parallel sub-agent primitive out of the box, care about Rust-native performance and memory footprint, and can accept a community-maintained project at v0.8.x maturity.

Pick Claude Code if you want Anthropic's first-party ecosystem (xhigh effort, /ultrareview, task budgets, Routines, Max subscription), need enterprise-grade vendor accountability, or are using Claude Sonnet/Opus as your primary model and don't want to build around a model-specific harness.

DeepSeek-TUI and Claude Code at a Glance

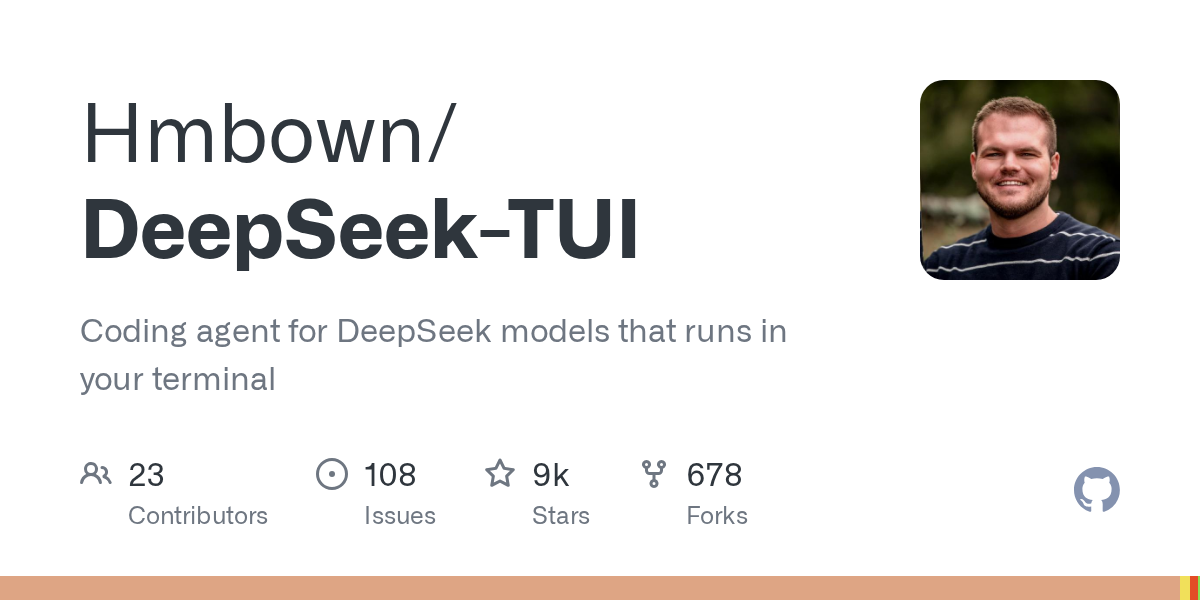

DeepSeek-TUI is an independent open-source project by Hunter Bown (GitHub: Hmbown), built in Rust with ratatui and shipped as two required binaries: the deepseek dispatcher and the deepseek-tui runtime (see github.com/Hmbown/DeepSeek-TUI). It targets DeepSeek V4 specifically: the tool's architecture, cost estimator, prompt design, and RLM parallel sub-agent system are all built around DeepSeek's API economics. MIT license. v0.8.8 as of early May 2026, with frequent releases.

Claude Code is Anthropic's first-party terminal agent, built on Node.js, distributed as a single npm package. Official docs at code.claude.com. It's Claude-model-native: tool call protocols, effort levels, /ultrareview, task budgets, and Routines are all Anthropic-designed features. Requires a Claude subscription or API key. GA product with enterprise SLA and support.

Side-by-Side Comparison Table

| DeepSeek-TUI v0.8.8 | Claude Code (May 2026) | |

|---|---|---|

| Runtime | Rust, ~12MB RAM at idle | Node.js, higher baseline footprint |

| Install | npm i -g deepseek-tui (downloads Rust binaries) | npm install -g @anthropic-ai/claude-code |

| Default model | deepseek-v4-pro | Claude Sonnet 4.6 / Opus 4.7 |

| Supported models | DeepSeek V4-Pro, V4-Flash; NVIDIA NIM, Fireworks, SGLang (all DeepSeek) | Claude family; DeepSeek V4 via env var swap |

| Context window | 1M tokens (V4-Pro/Flash) | 200K (Sonnet 4.6), 200K (Opus 4.7) |

| Parallel sub-agents | RLM: 1–16 V4-Flash children, native | Task tool: parallel subagents, Claude-managed |

| Skills system | SKILL.md, reads .claude/skills path | Project memory, CLAUDE.md |

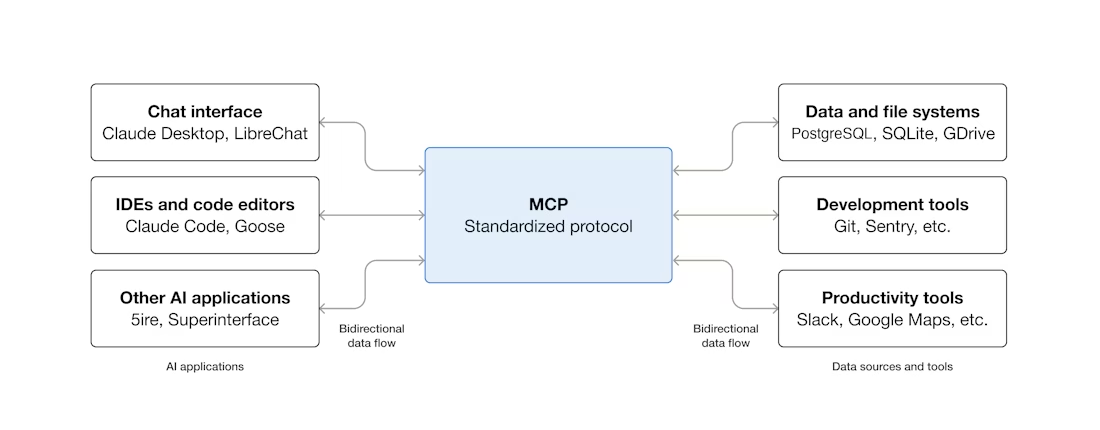

| MCP support | Yes, stdio | Yes, stdio |

| Approval modes | Plan (read-only) / Agent (per-tool approval) / YOLO | Plan mode / auto mode (Max users) |

| Cost model | API-metered per token | Subscription (Pro/Max) or API-metered |

| Open source | ✅ MIT | ❌ |

| Maturity | v0.8.x, community-maintained | GA, Anthropic-maintained |

| Enterprise support | None | Available (Teams/Enterprise plans) |

Architecture Differences That Matter

Rust single binary pair vs Node-based runtime

DeepSeek-TUI compiles to native binaries. The runtime overhead is minimal: the project reports roughly 12MB RAM at idle, with no Node process, no V8 heap, and no Python interpreter in the background. On machines already running many concurrent processes, that headroom can matter in practice.

Claude Code runs on Node.js, which means V8's garbage collector and a higher baseline memory profile. For most developers this is irrelevant — IDE plugins and language servers dwarf this difference. For teams running agents on constrained servers or in containerized CI environments, the difference is real.

Tool-call protocol: DeepSeek-native vs Anthropic-native

Claude Code's tool-call protocol is built around Anthropic's API format — the way tool definitions are structured, how results are fed back, and how multi-turn agent loops are managed. DeepSeek-TUI is built around DeepSeek's API, which exposes an Anthropic-compatible endpoint but has its own behavioral quirks (particularly around reasoning_content on tool-call turns, which DeepSeek-TUI handles natively).

When you run Claude Code with DeepSeek V4 as backend via environment variable substitution, you're running Anthropic's tool loop against DeepSeek's Anthropic-compatible endpoint. The mismatch is usually fine — DeepSeek's Anthropic format support is documented and maintained — but edge cases exist. The CHANGELOG for DeepSeek-TUI contains specific fixes for reasoning_content handling and tool-call transcript consistency that Claude Code doesn't need to implement because it doesn't run DeepSeek's thinking mode natively.

LSP post-edit diagnostics in DeepSeek-TUI

DeepSeek-TUI ships with LSP integration: after every file edit, it queries the relevant language server (rust-analyzer, pyright, typescript-language-server, gopls, clangd) and surfaces inline diagnostics. The agent sees type errors, missing imports, and syntax problems immediately after writing code — before the human reviews the diff.
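The post-edit loop described above can be sketched roughly as follows. This is an illustration, not DeepSeek-TUI's Rust implementation: the server names come from the list above, but the extension mapping, the `Diagnostic` record, and both function names are assumptions made for the example.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import List, Optional

# Server names are the ones listed above; the extension mapping is an
# assumption made for this sketch, not DeepSeek-TUI's actual table.
SERVER_BY_EXTENSION = {
    ".rs": "rust-analyzer",
    ".py": "pyright",
    ".ts": "typescript-language-server",
    ".tsx": "typescript-language-server",
    ".go": "gopls",
    ".c": "clangd",
    ".cpp": "clangd",
}


@dataclass
class Diagnostic:
    """Hypothetical, simplified diagnostic record."""
    path: str
    line: int
    severity: str
    message: str


def server_for(path: str) -> Optional[str]:
    """Return the language server to query after editing `path`, if any."""
    return SERVER_BY_EXTENSION.get(Path(path).suffix.lower())


def format_for_agent(diagnostics: List[Diagnostic]) -> str:
    """Render diagnostics as plain text to feed back into the agent's next turn."""
    if not diagnostics:
        return "No diagnostics reported."
    return "\n".join(
        f"{d.path}:{d.line}: {d.severity}: {d.message}" for d in diagnostics
    )
```

The point of the design is the feedback path: the formatted diagnostics go to the agent before the human sees the diff, so the agent can repair a type error in the same turn sequence that introduced it.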

Claude Code does not have equivalent first-party LSP integration. Developers using Claude Code in VS Code get LSP diagnostics through the editor, but the agent itself doesn't receive structured diagnostic feedback between edits unless you explicitly set up a feedback loop in your harness configuration.

Model Strategy — Locked-In V4 vs the Anthropic Family

What "model-specific harness" actually trades off

DeepSeek-TUI's value proposition depends on DeepSeek V4 being your preferred model. The cost estimator is tuned to V4 pricing. The RLM system fans out to V4-Flash specifically. The prompt design was revised with DeepSeek V4's behavior in mind. The thinking-mode streaming renders V4's reasoning_content natively. None of this transfers if you want to use Claude Sonnet 4.6 for a task, or GPT-5.5 for computer use.

The trade-off is explicit: if your model of choice shifts (because a Claude model outperforms V4 on your specific task type, because you need computer use from a model such as GPT-5.5, or because Anthropic ships a capability that matters to your workflow), DeepSeek-TUI offers no migration path to those models. You'd switch tools, not just swap a parameter.

When model lock-in is acceptable, when it isn't

Lock-in is acceptable when DeepSeek V4 genuinely covers your workload. For cost-sensitive agentic workflows, high-volume code generation, and tasks that don't require frontier reasoning depth, V4-Flash's price structure combined with DeepSeek-TUI's RLM makes the lock-in a reasonable exchange.

Lock-in becomes a problem when your workload is heterogeneous. If you're doing routine code generation (V4-Flash-appropriate) and occasional hard architectural decisions (where Opus 4.7's SWE-Bench Pro lead matters), you want a tool that routes to different models per task — or you accept running two different tools.

Parallel Sub-Agents Compared

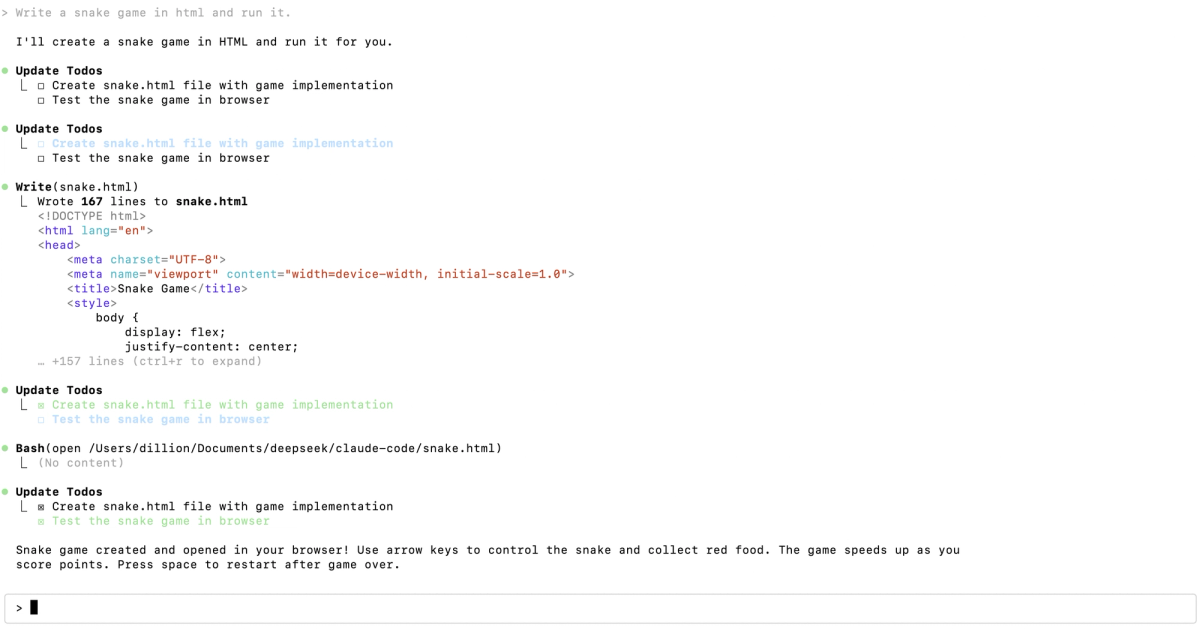

DeepSeek-TUI's RLM (rlm_query) — 1–16 flash children

RLM is a first-class primitive in DeepSeek-TUI: the main agent calls rlm_query with one prompt or up to 16 prompts simultaneously, each spinning up a V4-Flash child. Children run as independent API calls against the same async engine. Results return to the parent agent, which synthesizes them and continues. The parallel budget (1–16) is configurable per call; children can be promoted to V4-Pro for more demanding subtasks.
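The fan-out pattern can be sketched in a few lines of asyncio. This is a sketch of the general technique under stated assumptions, not the `rlm_query` implementation: `run_child` is a hypothetical stand-in that makes no network call, and the function names and error handling are illustrative.

```python
import asyncio
from typing import List

MAX_CHILDREN = 16  # upper bound on the parallel budget described above


async def run_child(prompt: str) -> str:
    """Hypothetical stand-in for one stateless child call (no real API request)."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return f"answer to: {prompt}"


async def fan_out(prompts: List[str], budget: int = MAX_CHILDREN) -> List[str]:
    """Run one child per prompt, at most `budget` at a time, preserving order."""
    if not 1 <= len(prompts) <= MAX_CHILDREN:
        raise ValueError("expected between 1 and 16 prompts")
    if not 1 <= budget <= MAX_CHILDREN:
        raise ValueError("budget must be between 1 and 16")
    gate = asyncio.Semaphore(budget)

    async def guarded(prompt: str) -> str:
        async with gate:  # cap the number of children in flight
            return await run_child(prompt)

    # gather returns results in the same order as the prompts
    return list(await asyncio.gather(*(guarded(p) for p in prompts)))
```

Calling `asyncio.run(fan_out(["summarize module A", "summarize module B"]))` returns one result per prompt, in order, for the parent to synthesize. Each child receives only its own prompt, which is what keeps the children independent of one another.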

The cost arithmetic is favorable: V4-Flash children are cheap enough that a 16-child fan-out for batched analysis competes economically with a single V4-Pro call on the same problem.
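To check that claim against your own workload, the arithmetic is simple enough to script. The prices below are placeholders chosen only to show the shape of the calculation; they are not DeepSeek's published rates, and the token counts are hypothetical.

```python
from typing import Dict

# Placeholder prices in dollars per million tokens. These are NOT real
# DeepSeek rates; substitute the current published prices before relying on this.
PRO: Dict[str, float] = {"input": 2.00, "output": 8.00}
FLASH: Dict[str, float] = {"input": 0.20, "output": 0.80}


def call_cost(input_tokens: int, output_tokens: int, prices: Dict[str, float]) -> float:
    """Dollar cost of a single call at the given per-million-token prices."""
    return (
        input_tokens * prices["input"] + output_tokens * prices["output"]
    ) / 1_000_000


def fan_out_cost(children: int, input_tokens: int, output_tokens: int,
                 prices: Dict[str, float]) -> float:
    """Dollar cost of `children` identical calls, each with its own input and output."""
    return children * call_cost(input_tokens, output_tokens, prices)


# One large Pro call over the whole problem (hypothetical token counts).
single_pro = call_cost(100_000, 20_000, PRO)
# Sixteen Flash children, each given one sixteenth-ish slice of the context.
split_fan_out = fan_out_cost(16, 20_000, 4_000, FLASH)
# Sixteen Flash children that each re-read the full context.
full_context_fan_out = fan_out_cost(16, 100_000, 4_000, FLASH)
```

With these placeholder numbers the split fan-out comes out well below the single Pro call, while the full-context fan-out ends up slightly above it. The takeaway is that the comparison depends heavily on whether the work can be partitioned so each child reads only its slice.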

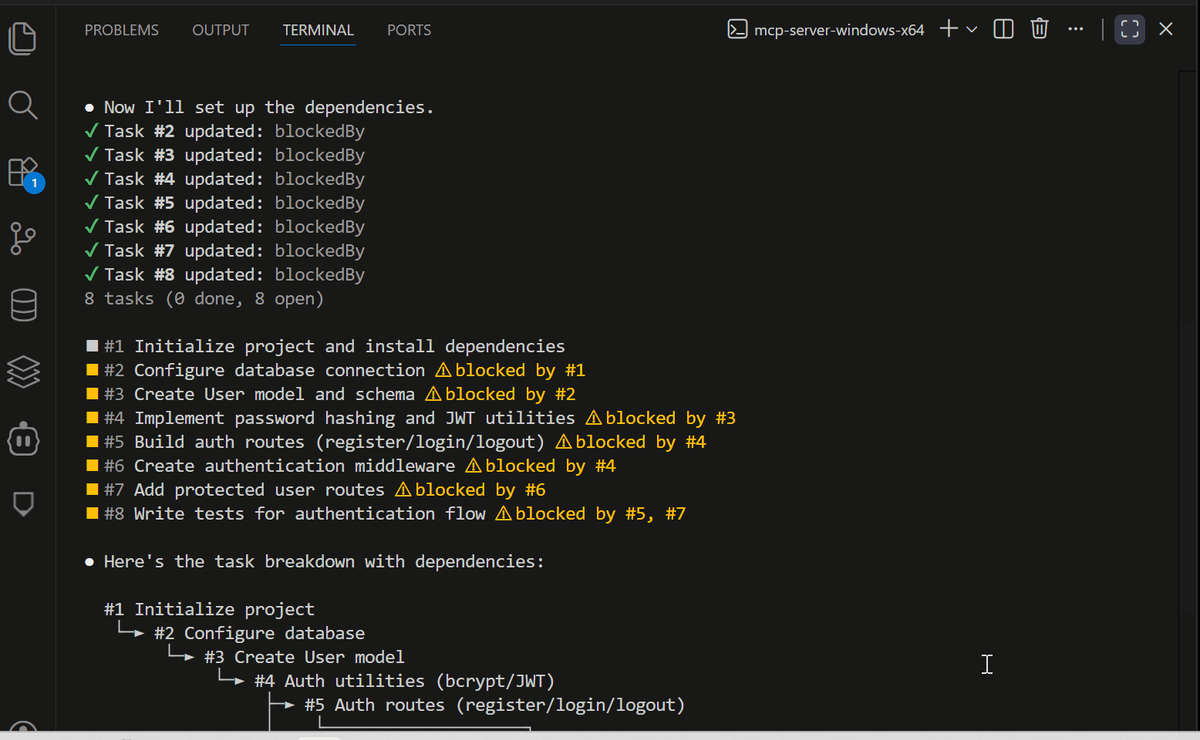

Claude Code's Task / subagent pattern

Claude Code supports parallel subagents via the Task tool, which spawns independent Claude agent instances for subtasks. Subagents can operate in parallel, each with their own tool access and context. The orchestration is managed by Anthropic's runtime — you don't configure concurrency limits the way you would with RLM; the system handles scheduling.

The behavioral difference: Claude Code's subagents share the same model tier as the parent by default (you can configure CLAUDE_CODE_SUBAGENT_MODEL to use a cheaper model). DeepSeek-TUI's RLM routes children to V4-Flash by default, with promotion to V4-Pro as the exception, so the cost-quality split between main agent and children is an architectural default rather than a configuration choice.

How each handles state isolation

DeepSeek-TUI's RLM children are stateless API calls — they receive a prompt, return a result, and have no persistent context of their own. State isolation is complete but lightweight: children can't observe each other's work, can't be interrupted mid-run, and don't maintain conversation history.

Claude Code subagents have richer isolation: each runs as an independent agent session, can make its own tool calls, and can be assigned specific working directories or file scopes. For complex decomposition tasks where subtasks themselves require multi-turn reasoning, Claude Code's subagent model is more capable. For batched parallel analysis where you want independent answers fast, RLM's lightweight model is adequate.

Skills, MCP, and Extension Surface

DeepSeek-TUI's skill discovery walks .claude/skills too

DeepSeek-TUI discovers skills from a priority-ordered path: .agents/skills → skills → .opencode/skills → .claude/skills → ~/.deepseek/skills. The inclusion of .claude/skills means teams already maintaining skills for Claude Code can reuse them in DeepSeek-TUI without restructuring — at the file-reading level. The agent can auto-select skills via load_skill when task descriptions match skill descriptions.
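A priority-ordered lookup like the one described above might look like this. It is a sketch under assumptions the article does not confirm: that each skill lives in its own subdirectory containing a SKILL.md, that the directory name serves as the skill name, and that the first match wins on a name collision. `discover_skills` is a hypothetical helper, not a DeepSeek-TUI API.

```python
from pathlib import Path
from typing import Dict

# Workspace-relative search order from the description above; earlier wins.
WORKSPACE_SKILL_DIRS = [".agents/skills", "skills", ".opencode/skills", ".claude/skills"]


def discover_skills(workspace: Path, home: Path) -> Dict[str, Path]:
    """Map skill name to its SKILL.md path, keeping the highest-priority match."""
    roots = [workspace / d for d in WORKSPACE_SKILL_DIRS]
    roots.append(home / ".deepseek" / "skills")  # user-level directory, lowest priority
    found: Dict[str, Path] = {}
    for root in roots:
        if not root.is_dir():
            continue
        # Assumed layout: <root>/<skill-name>/SKILL.md
        for skill_file in sorted(root.glob("*/SKILL.md")):
            found.setdefault(skill_file.parent.name, skill_file)
    return found
```

Under these assumptions, a skill named `review` that exists in both `.agents/skills` and `.claude/skills` resolves to the `.agents/skills` copy, while skills that exist only under `.claude/skills` are still picked up.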

The compatibility caveat: skill files are SKILL.md documents with name, description, and instructions. Claude Code's internal project memory system (CLAUDE.md, project-level context) works differently from DeepSeek-TUI's skill activation model. What transfers cleanly is the SKILL.md content itself; the trigger and activation behavior differs between the two tools.

MCP parity and trust boundaries

Both tools implement MCP via stdio transport per the MCP protocol specification. The configuration approaches differ: DeepSeek-TUI uses deepseek-tui mcp init and TOML config; Claude Code uses its own MCP server configuration. Functionally, any MCP server that works with one should work with the other — the protocol is standardized.

Trust boundary handling differs. Claude Code's trust model for tool calls is integrated with its approval gate system. DeepSeek-TUI's YOLO mode bypasses approvals at the session level, with a workspace trust check. For enterprise environments where per-tool trust policies matter, Claude Code's more granular control is the relevant distinction.

Cost Profile

API-metered (DeepSeek-TUI) vs subscription-anchored (Claude Code)

DeepSeek-TUI is purely API-metered: every token costs what DeepSeek charges per token at the time of the call. There's no subscription floor, no seat pricing, and no monthly commitment. For light or variable usage, this is economical. For heavy, sustained usage where you'd hit plan ceilings anyway, the math shifts toward subscription pricing.

Claude Code is primarily subscription-anchored for interactive use: Pro and Max plans give access within plan limits. API-metered Claude Code is available for teams that want pay-as-you-go, but interactive use is optimized around the subscription model. The xhigh default effort level and auto mode in Max increase token consumption per session — relevant for anyone budgeting monthly Claude Code spend.

What changes when you pick one over the other for a real workload

The practical question is not "which is cheaper" but "what does the cost look like on my specific volume and task mix?" For output-heavy agent loops running many hours per day, DeepSeek-TUI's per-token economics can be substantially lower. For teams with predictable usage that fits comfortably within a Claude subscription tier, the subscription model's simplicity outweighs per-token optimization.

One specific scenario where cost structure matters most: iterative development work with high retry rates — tasks where the agent tries an approach, fails, and loops. At high output token volumes, per-token pricing compounds. DeepSeek-TUI's RLM parallel approach (spin out many cheap children simultaneously rather than retrying serially) is designed partly to address this by front-loading analysis at low cost before committing to an approach.
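The volume question can be turned into a rough break-even estimate. The sketch below is deliberately simplified: it treats a subscription as a flat monthly fee and ignores plan usage limits, and every price passed in is your own input rather than a figure from either vendor. Both function names are hypothetical.

```python
def blended_price_per_m(input_price_per_m: float, output_price_per_m: float,
                        output_share: float) -> float:
    """Average dollars per million tokens when `output_share` of tokens are output."""
    if not 0.0 <= output_share <= 1.0:
        raise ValueError("output_share must be between 0 and 1")
    return output_share * output_price_per_m + (1.0 - output_share) * input_price_per_m


def break_even_tokens(subscription_per_month: float, price_per_m: float) -> float:
    """Monthly token volume at which metered spend equals the subscription fee."""
    if price_per_m <= 0:
        raise ValueError("price_per_m must be positive")
    return subscription_per_month / price_per_m * 1_000_000
```

Below the break-even volume, metered pricing is cheaper; above it, the flat fee wins, provided the plan's limits actually accommodate that volume. Output-heavy loops raise `output_share`, which raises the blended price and lowers the break-even point.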

When to Pick Each — Decision Framework

Pick DeepSeek-TUI if:

- DeepSeek V4 is your primary or only model and you want a terminal agent designed specifically around its API behavior

- RLM parallel sub-agents are a core part of your workflow and you want them built into the agent rather than requiring orchestration infrastructure

- Rust binary, minimal runtime footprint, and LSP post-edit diagnostics matter for your deployment environment

- You're comfortable with a v0.8.x community project and can absorb the occasional breaking change between releases

- Open-source under MIT with no vendor dependency on Anthropic is an explicit requirement

Pick Claude Code if:

- You use Claude Sonnet or Opus as your primary model, or need to mix model tiers for different task types within the same workflow

- First-party Anthropic features matter: xhigh effort, /ultrareview at merge gates, task budgets for cost control, Routines for scheduled automation

- Enterprise procurement requires a GA product with a named vendor, SLA, and support path — DeepSeek-TUI is a personal project, not an enterprise product

- You need computer use capabilities, which are native to Claude Code's architecture and not documented in DeepSeek-TUI

Where multi-model, parallel-execution platforms fill a different gap

Both tools are single-model terminal agents — they're designed around one model family and one developer at a time. Teams that need to route different task types to different models, run parallel agents across multiple codebases simultaneously, or integrate coding agents into broader CI/CD and review workflows are looking at a different product category. Platforms like Verdent operate across that gap: multi-model, IDE-integrated, with first-party parallel execution that doesn't require picking between DeepSeek-TUI's RLM and Claude Code's Task tool.

FAQ

Can DeepSeek-TUI work with Claude models?

No. The configuration supports NVIDIA NIM, Fireworks, and SGLang as providers, but all of these serve DeepSeek models. There's no path to routing Claude Sonnet or Opus through DeepSeek-TUI.

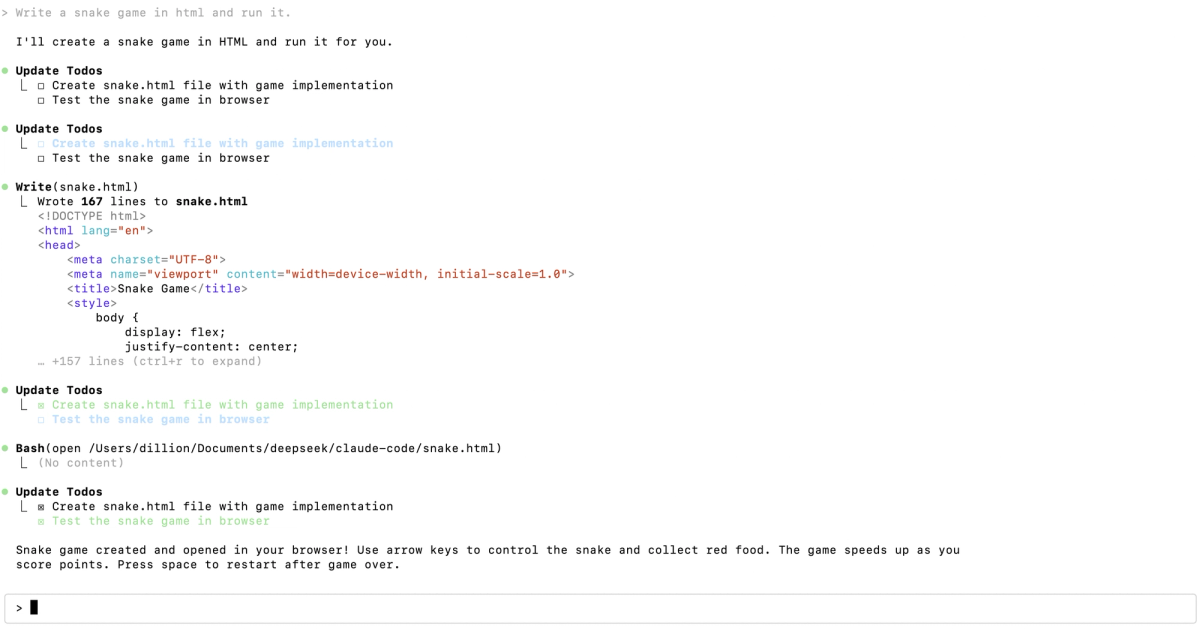

Does Claude Code support DeepSeek V4 as a backend?

Yes — this is less obvious than it should be. DeepSeek's official API documentation includes a Claude Code integration guide showing the exact environment variables to set:

export ANTHROPIC_BASE_URL=https://api.deepseek.com/anthropic

export ANTHROPIC_AUTH_TOKEN=<your DeepSeek API Key>

export ANTHROPIC_MODEL=deepseek-v4-pro[1m]

export ANTHROPIC_DEFAULT_OPUS_MODEL=deepseek-v4-pro[1m]

export CLAUDE_CODE_SUBAGENT_MODEL=deepseek-v4-flash

Claude Code then sends API calls to DeepSeek's Anthropic-compatible endpoint. You keep Claude Code's UX, tool loop, and commands. What you lose: Claude-native features (xhigh effort, /ultrareview, task budgets) don't function because they rely on Anthropic's backend. And DeepSeek-TUI's RLM, thinking-mode streaming, and V4-tuned prompts aren't available on the Claude Code side. It's a valid hybrid path, but understand the capability gap before committing.
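If you want the swap scoped to a single launch rather than exported into your whole shell session, one option is to build the environment in a small wrapper. The variable names and values below are the ones listed above; the helper name `deepseek_backend_env` is hypothetical, and this is a convenience sketch rather than anything either vendor documents.

```python
import os
from typing import Dict, Mapping, Optional


def deepseek_backend_env(api_key: str,
                         base_env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return a copy of the environment with the DeepSeek backend variables set."""
    env = dict(os.environ if base_env is None else base_env)
    env.update({
        "ANTHROPIC_BASE_URL": "https://api.deepseek.com/anthropic",
        "ANTHROPIC_AUTH_TOKEN": api_key,
        "ANTHROPIC_MODEL": "deepseek-v4-pro[1m]",
        "ANTHROPIC_DEFAULT_OPUS_MODEL": "deepseek-v4-pro[1m]",
        "CLAUDE_CODE_SUBAGENT_MODEL": "deepseek-v4-flash",
    })
    return env
```

Passing the result as `env=` to `subprocess.run(["claude"], ...)` starts Claude Code against the DeepSeek endpoint for that process only, which makes it easy to keep a normal Anthropic-backed session in another terminal.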

Which has more accurate tool calls in real workloads?

No third-party benchmark exists as of this writing that directly compares DeepSeek-TUI and Claude Code on real coding tasks under controlled conditions. Community reports favor Claude Code for complex multi-file refactors and tasks requiring nuanced instruction following; DeepSeek-TUI community feedback highlights RLM parallel analysis as a genuine differentiator for batched workloads. Treat these as anecdotes rather than evidence. Run both on your own representative workloads before deciding.

Are there third-party benchmarks comparing them?

Not at the terminal agent level. Underlying model benchmarks (SWE-Bench Pro, Terminal-Bench 2.0) exist for Claude Opus 4.7 and have some applicability, but they test model behavior under benchmark harnesses, not the full tool loop including DeepSeek-TUI's RLM, prompt design, and LSP feedback. There's no published eval that runs the same task set through both tools under identical conditions.

Can I use both in the same project?

Yes. They operate at the filesystem level and write to git — there's no session conflict if you're not running both simultaneously in the same working tree. Some teams run Claude Code for interactive, reasoning-heavy sessions and DeepSeek-TUI's RLM for batch analysis tasks against the same repo. The skills overlap (DeepSeek-TUI reads .claude/skills) makes shared skill maintenance practical. Keeping both configured is low overhead; deciding which to use for which task type is the actual work.
