Here's a number that stopped me cold: 60% of open source maintainers work unpaid, and 44% report burnout. I've talked to enough maintainers to know those aren't abstract statistics — they're Tuesday nights. They're the feeling of closing your laptop at midnight after triaging 30 issues, knowing there are 30 more by morning.

So when Anthropic launched Claude for Open Source on February 26, 2026, the framing around "free access" felt secondary to me. The more interesting question was: does this actually change anything day-to-day? I spent time mapping Claude's real capabilities against the actual workflows that drain maintainers most. Here's what I found.

What "Claude for OSS" Actually Means

It's not just a free tier — it's a usage model

The Claude for Open Source program is Anthropic's way of saying thank you to the people keeping the ecosystem running. It offers 6 months of free Claude Max 20x to qualifying maintainers — no credit card, no catch beyond the standard Terms of Service.

But "free tier" is the wrong mental model. What the program actually gives you is sustained access at a usage level that changes how you work. At Pro limits, you're constantly rationing. You pick which PR to review deeply and which to skim. You skip the doc update because you've burned through your window on a refactor. At Max 20x, that rationing pressure mostly disappears. You start building workflows around what Claude can actually do, rather than around what you can afford to ask it.

That's the real shift. Not the price. The behavior change.

What the OSS program includes (Max 20x, extended context, continuity)

The deal: if you qualify, you get 6 months of Claude Max 20x — the $200/month tier — at zero cost. Here's what that tier actually includes as of March 2026:

| Feature | Included in OSS Program |

|---|---|

| Claude.ai(web, desktop, mobile) | ✅ |

| Claude Code (terminal agentic coding) | ✅ |

| Extended Thinking | ✅ |

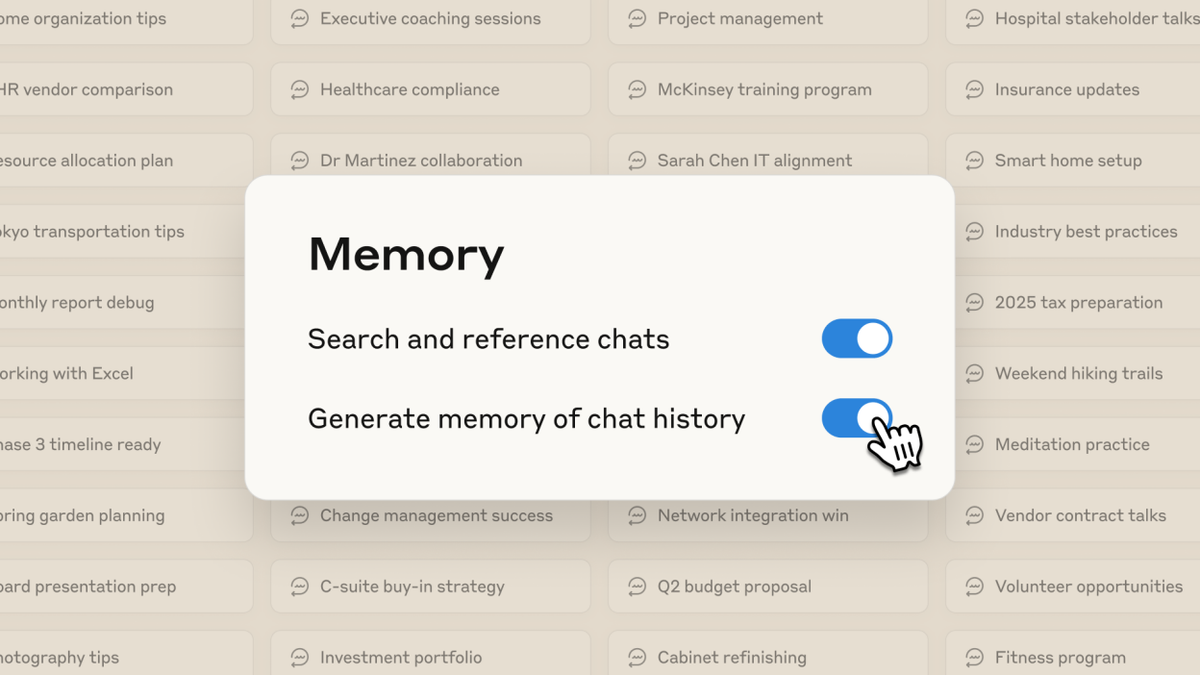

| Memory (long-term project context) | ✅ |

| ~900 messages per 5-hour rolling window | ✅ |

| Priority access to new models | ✅ |

One thing worth knowing: Claude.ai chat and Claude Code share the same quota pool. If you're running heavy Claude Code sessions, factor that in when planning your chat usage.

Real Workflows That Matter to Maintainers

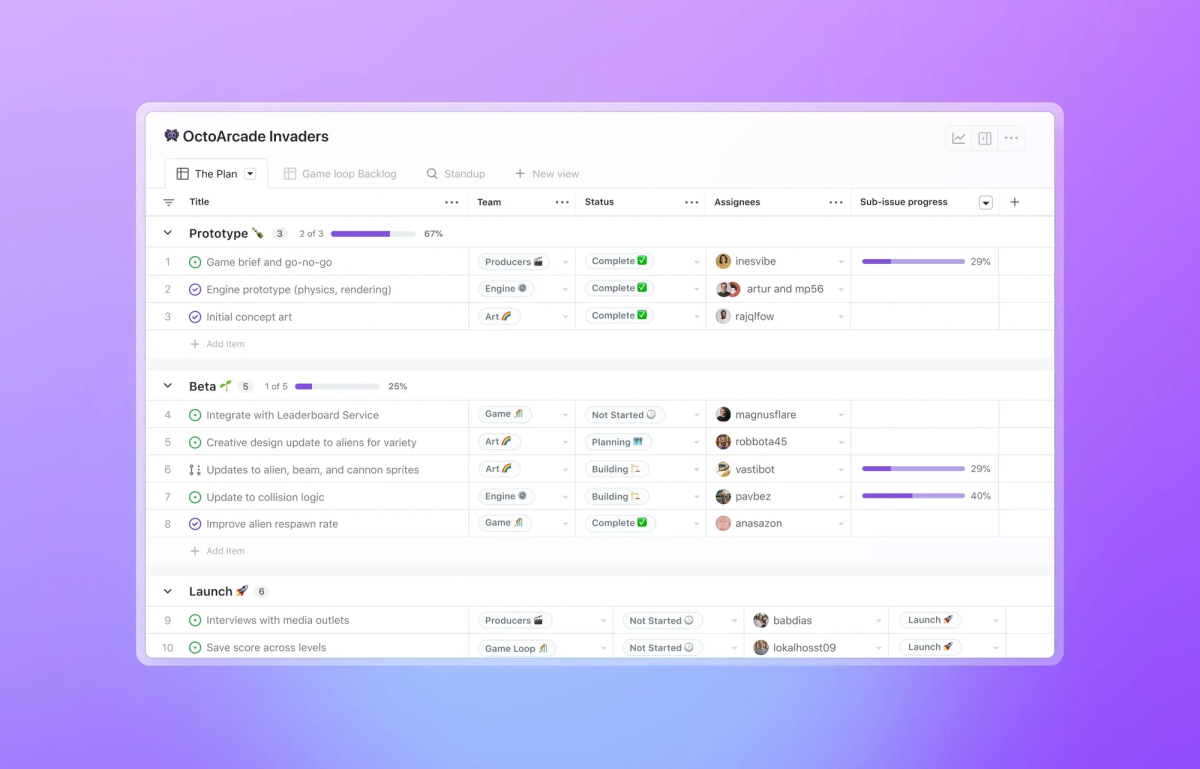

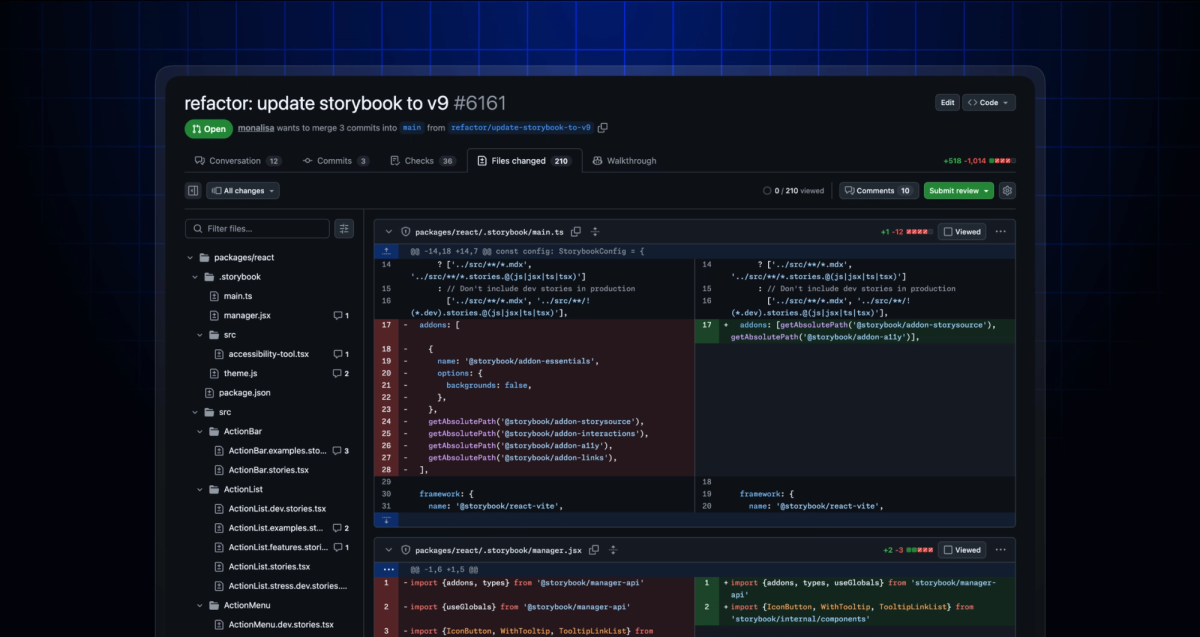

Large PR review — diff summarization + risk detection

This is the use case where Claude earns its keep fastest. Large community PRs are time-consuming not because the code is hard, but because *understanding blast radius* takes time. What else does this touch? What assumptions does this break? What's the regression surface?

With Max 20x, you can paste a full diff, ask Claude to trace the dependency chain, flag potential regressions, surface undocumented side effects, and draft review comments — in a single session that doesn't get cut off. Research shows AI tools can reduce code review time by 30–40% — and in my experience, that estimate holds when you're not fighting usage limits mid-review.
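Before pasting a large diff, it can help to know which files it touches and how heavily, so you can tell Claude where to look first. Here is a minimal sketch, assuming the diff is in git's unified format (the kind `git diff` produces). The function name `diff_stat` is my own, not part of any tool.

```python
def _clean_path(raw: str) -> str:
    """Strip whitespace and git's a/ or b/ prefix from a diff header path."""
    path = raw.strip()
    if path.startswith(("a/", "b/")):
        return path[2:]
    return path


def diff_stat(diff_text: str) -> dict[str, dict[str, int]]:
    """Count added and removed lines per file in a git-style unified diff.

    Assumption: each file section starts with a "diff --git" line, as in
    `git diff` output. Binary files and renames without changes are ignored.
    """
    stats: dict[str, dict[str, int]] = {}
    current = None      # file currently being counted
    old_path = None     # path from the most recent "--- " header
    in_hunk = False     # True once we are past an "@@" hunk header

    for line in diff_text.splitlines():
        if line.startswith("diff --git "):
            in_hunk = False
            current = None
        elif not in_hunk and line.startswith("--- "):
            old_path = _clean_path(line[4:])
        elif not in_hunk and line.startswith("+++ "):
            new_path = _clean_path(line[4:])
            # Deleted files list /dev/null as the new path; use the old one.
            current = old_path if new_path == "/dev/null" else new_path
            stats[current] = {"added": 0, "removed": 0}
        elif line.startswith("@@"):
            in_hunk = True
        elif in_hunk and current is not None:
            if line.startswith("+"):
                stats[current]["added"] += 1
            elif line.startswith("-"):
                stats[current]["removed"] += 1
    return stats
```

Sorting the result by total changed lines gives a rough "start here" order to include at the top of the review prompt.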

A typical prompt structure that works well:

You're reviewing a PR for [project name], a [brief description].

Here's the full diff: [paste]

Please:

1. Summarize what this PR changes and why

2. Identify any regressions or unintended side effects

3. Flag any security or performance concerns

4. Draft 3–5 specific review comments I can post directly

Documentation automation — README, API docs, changelogs

AI tools can cut documentation work by 50%+ — but only when you're not constantly hitting limits before finishing a pass. Docs debt is one of the most demoralizing parts of maintainership. It's invisible work with no social reward, and it keeps piling up.

At Max 20x, you can feed Claude an entire module, generate API docs, produce a migration guide, and update the README in one continuous session. Changelogs are especially fast: paste your commit history, describe your audience, and Claude produces a human-readable release summary in seconds.

What actually works here:

- README updates: Feed the full source file + current README → ask for a revised README that reflects current behavior

- API docs: Provide function signatures + inline comments → ask for JSDoc or docstring output

- Changelogs: Paste the output of `git log --oneline v1.0.0..v1.1.0` → ask for a categorized changelog sorted by impact
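The changelog step is the easiest one to script. Here is a rough sketch that turns `git log --oneline` output into a prompt you can paste. The helper names (`read_oneline_log`, `parse_oneline`, `build_changelog_prompt`) and the prompt wording are my own illustration; adjust them to your project.

```python
import subprocess


def read_oneline_log(rev_range: str) -> str:
    """Return `git log --oneline <rev_range>` output for the current repo."""
    result = subprocess.run(
        ["git", "log", "--oneline", rev_range],
        capture_output=True,
        text=True,
        check=True,  # raise if git fails, e.g. on an unknown tag
    )
    return result.stdout


def parse_oneline(log_text: str) -> list[tuple[str, str]]:
    """Split each "<sha> <subject>" line into a (sha, subject) pair."""
    commits = []
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue
        sha, _, subject = line.partition(" ")
        commits.append((sha, subject))
    return commits


def build_changelog_prompt(log_text: str, audience: str) -> str:
    """Assemble a changelog request from raw one-line log output."""
    commits = parse_oneline(log_text)
    bullets = "\n".join(f"- {subject} ({sha})" for sha, subject in commits)
    return (
        f"Here are {len(commits)} commits for the next release:\n"
        f"{bullets}\n\n"
        f"Audience: {audience}\n"
        "Please write a categorized changelog sorted by impact."
    )
```

In a real repo you would call `build_changelog_prompt(read_oneline_log("v1.0.0..v1.1.0"), "library users")` and paste the result into a session.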

Issue triage — categorize, draft, flag duplicates

High-volume repos get buried. Duplicate issues, vague bug reports, feature requests that are really doc problems in disguise. Extended Thinking — included in Max 20x — helps Claude reason through ambiguous cases rather than pattern-matching superficially.

A workflow that reduces manual triage load:

Here are 10 new issues from the last 48 hours: [paste]

For each one:

- Classify as: bug / feature request / docs gap / duplicate / needs-more-info

- If bug: estimate severity (critical / moderate / low)

- Draft a one-sentence initial response

- Flag any that appear to duplicate existing issues from this list: [paste known issues]

This isn't replacing your judgment. It's handling the first pass so you can focus on the 2 out of 10 that actually need your full attention.
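If you run this triage pass regularly, a small script can split the backlog into batches of 10 and fill in the prompt for you. This is a sketch under my own assumptions: issues are plain title strings, and the names `batches` and `build_triage_prompt` are hypothetical helpers, not part of any Claude tooling.

```python
from typing import Iterator

LABELS = ["bug", "feature request", "docs gap", "duplicate", "needs-more-info"]


def batches(items: list[str], size: int = 10) -> Iterator[list[str]]:
    """Yield consecutive chunks of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_triage_prompt(new_issues: list[str], known_issues: list[str]) -> str:
    """Fill the triage prompt template for one batch of issue titles."""
    numbered = "\n".join(f"{i}. {title}" for i, title in enumerate(new_issues, 1))
    known = "\n".join(f"- {title}" for title in known_issues)
    return (
        f"Here are {len(new_issues)} new issues:\n{numbered}\n\n"
        "For each one:\n"
        f"- Classify as: {' / '.join(LABELS)}\n"
        "- If bug: estimate severity (critical / moderate / low)\n"
        "- Draft a one-sentence initial response\n"
        "- Flag any that appear to duplicate existing issues from this list:\n"
        f"{known}"
    )
```

Keeping batches small makes each response easy to scan, and the numbered list lets you map Claude's answers back to issues without ambiguity.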

Legacy code understanding — map modules, explain complexity

Every long-running project has that module. The one that predates three major refactors, has no docs, and nobody touches without a full prayer session first. Claude is genuinely good at reading unfamiliar code and producing a coherent map of what it does and why — faster than reading it yourself, and with fewer gaps.

Useful prompt:

Here's a module from our codebase: [paste]

Please:

1. Explain what this module does in plain English

2. Identify its key dependencies and what it assumes about them

3. Note any patterns that look like technical debt or implicit contracts

4. Suggest where refactoring would have the highest leverage

Pair this with Claude Code for repos too large to paste in full: it can read your directory structure and pull relevant files autonomously.
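It's worth having something independent to check Claude's map against. If the legacy module happens to be Python, a quick static pass with the standard-library `ast` module gives you a ground-truth list of imports and top-level definitions. This is only a sketch under that assumption, and `outline_module` is a name I made up for illustration.

```python
import ast


def outline_module(source: str) -> dict[str, list[str]]:
    """List imported modules and top-level functions/classes in Python source."""
    tree = ast.parse(source)

    # Walk the whole tree so imports hidden inside functions are found too.
    imports = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            imports.update(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom):
            # node.module is None for "from . import x"; keep the dots.
            imports.add("." * node.level + (node.module or ""))

    # Only direct children of the module count as top-level definitions.
    functions, classes = [], []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            functions.append(node.name)
        elif isinstance(node, ast.ClassDef):
            classes.append(node.name)

    return {"imports": sorted(imports), "functions": functions, "classes": classes}
```

If Claude's explanation mentions a dependency that isn't in this list, or skips one that is, that's the first place to ask a follow-up question.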

When Claude Actually Makes Sense for OSS

Repos where Claude adds real leverage

Claude works best when the problem is information synthesis at scale — pulling together context, generating structured output, reasoning across a large surface area. Maintainer work is full of this:

- Repos with high PR volume and active contributors (review fatigue is real)

- Projects with docs debt and no dedicated writer

- Libraries with complex APIs and frequent "how do I use X" issues

- Codebases with legacy modules that need mapping before safe refactoring

If your bottleneck is time and cognitive load, Claude directly addresses both.

Situations where it won't move the needle

Honest take: Claude doesn't solve everything.

- Funding and sustainability: AI tools help with burnout by automating repetitive tasks, but they don't solve the funding problem. What maintainers actually need includes predictable income and recognition from companies profiting from their work. Claude is a productivity tool, not a business model.

- Community dynamics: Conflict resolution, governance decisions, contributor onboarding culture — these are human problems. Claude can help draft communication, but the judgment calls are yours.

- Repos with very small, tightly-coupled codebases: If your project is 500 lines that one person fully holds in their head, the overhead of prompting may not be worth it.

- Security-critical code review: Claude is a good first pass, but for crypto, auth, or anything where a subtle bug has serious consequences, human expert review is non-negotiable.

Claude Max 20x vs Standard Plan for OSS

Where Pro hits its ceiling

The Pro plan is solid for casual Claude use. But maintainer workflows are not casual. They're sustained, context-heavy, and often run multiple intensive sessions in a single day.

At Pro limits (~40–45 messages per 5-hour window), you hit the ceiling during:

- Any multi-file refactor using Claude Code

- A deep PR review session followed by a doc update

- An issue triage run on a high-volume repo

- Any session where Extended Thinking kicks in on a complex problem

The wall doesn't just slow you down — it breaks flow. You're mid-thought, mid-loop, and Claude stops you. You either wait, or you lose the context thread.

Why 20x changes the maintainer experience

| Scenario | Pro (~45 msg/5hr) | Max 20x (~900 msg/5hr) |
|---|---|---|
| Deep PR review + draft comments | Often cuts off | Completes fully |
| Claude Code refactor loop | Breaks mid-cycle | Runs to completion |
| Full doc generation pass | Partial output | Full module coverage |
| Issue triage (20+ issues) | 1–2 batches max | Full batch, iterable |
| Extended Thinking sessions | Limited by quota | Sustained reasoning |

The 20x number isn't marketing padding. For maintainers doing real work across multiple domains in a single day, it's the difference between Claude being a tool you reach for and Claude being a tool you ration.
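To make the arithmetic concrete, here is a back-of-the-envelope check of whether a planned session fits in one 5-hour window. The window sizes are the approximate figures quoted above; the per-task message counts are hypothetical numbers I picked for illustration, so substitute your own.

```python
# Approximate per-window message limits quoted in this article.
PRO_MESSAGES = 45
MAX_20X_MESSAGES = 900


def fits_in_window(planned: dict[str, int], window_limit: int) -> bool:
    """Return True if the planned message counts fit within one window."""
    return sum(planned.values()) <= window_limit


# Hypothetical estimates for one afternoon of maintainer work.
# Chat and Claude Code draw from the same pool, so both go in one plan.
plan = {
    "pr_review_chat": 25,
    "doc_pass_chat": 30,
    "triage_claude_code": 20,
}

total = sum(plan.values())
print(f"Planned messages: {total}")
print(f"Fits Pro window: {fits_in_window(plan, PRO_MESSAGES)}")
print(f"Fits Max 20x window: {fits_in_window(plan, MAX_20X_MESSAGES)}")
print(f"Limit ratio: {MAX_20X_MESSAGES // PRO_MESSAGES}x")
```

With these made-up estimates, a single afternoon already overshoots the Pro window while using well under a tenth of the Max 20x one.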

Should You Apply for the OSS Program?

What to prepare

Before you open the application form, gather:

- GitHub repo URL and current star count

- NPM package name and monthly download count

- Recent activity evidence: link to commits, releases, or merged PRs from the last 3 months

- Ecosystem context: What depends on your project? Who uses it? Can you name companies or other packages?

The official eligibility threshold is a public repo with 5,000+ GitHub stars or 1M+ monthly NPM downloads, with commits, releases, or PR reviews within the last 3 months.

If you're below those numbers but maintain something critical, the exception clause is real — "If you maintain something the ecosystem quietly depends on, apply anyway and tell us about it."
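If you want a quick self-check before applying, the published threshold reduces to a simple rule. The sketch below encodes it as I read it from the criteria above. Treating "3 months" as 90 days is my assumption, and the function says nothing about the exception clause, which is a human judgment call.

```python
from datetime import date, timedelta

MIN_STARS = 5_000
MIN_MONTHLY_NPM_DOWNLOADS = 1_000_000
ACTIVITY_WINDOW = timedelta(days=90)  # assumption: "last 3 months" as 90 days


def meets_published_threshold(
    stars: int,
    monthly_npm_downloads: int,
    last_activity: date,
    today: date,
) -> bool:
    """Check the stated metrics: popularity (stars OR downloads) AND recent activity.

    `last_activity` is the date of the latest commit, release, or PR review.
    """
    popular = (
        stars >= MIN_STARS
        or monthly_npm_downloads >= MIN_MONTHLY_NPM_DOWNLOADS
    )
    active = today - last_activity <= ACTIVITY_WINDOW
    return popular and active
```

A `False` here doesn't mean "don't apply". It means your case rests on the "Other info" field rather than on the metrics.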

What to write in your application

The metrics field speaks for itself if you hit the thresholds. The "Other info" field is where the application actually lives or dies.

If you clearly qualify on metrics: Describe your specific planned workflows. "I will use Claude Code to automate PR review triage and generate release changelogs" is more credible than leaving it blank. It signals intent, not just eligibility.

If you're in the gray zone: Be concrete and direct. Name the projects or companies that depend on your work. Frame it as ecosystem impact, not just project size. A 900-star package that 40 major enterprise systems depend on is a stronger case than a 6,000-star repo that nobody actually uses in production.

Avoid: Passion statements about open source. Generic descriptions of what your project does. Anything that reads like a cover letter. The reviewers are developers — they respond to specifics.

Applications are reviewed on a rolling basis, with up to 10,000 recipients accepted. There's no hard deadline, but the rolling review means earlier applications face less competition for remaining spots.

Bottom line: Claude for OSS isn't going to fix the structural problems in open source sustainability — funding, recognition, governance. But for the hours-per-week grind of review, docs, and triage? It's a genuine lever. Apply if you qualify. Build the workflows. Decide in month five whether $200/month is worth continuing. That's the honest experiment.
