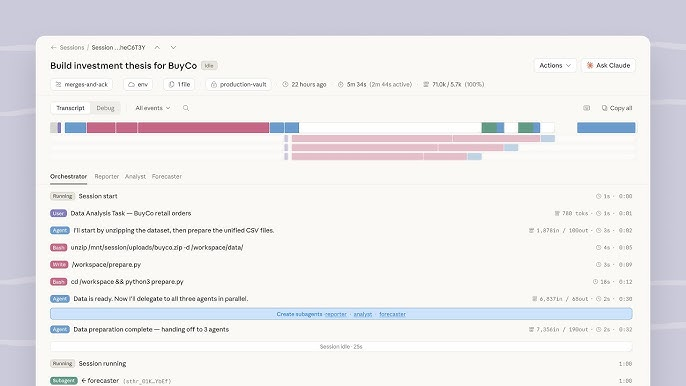

Two days ago I watched Anthropic's Managed Agents announcement pull 2 million views in under two hours. One developer's response: "there goes a whole YC batch." I've been evaluating agent infrastructure for enterprise deployments since early 2025, and this is the first time I've seen a platform launch that directly addresses the 3-6 month gap between "my agent works in a demo" and "my agent runs reliably at scale."

Claude Managed Agents isn't a new model. It's hosted infrastructure that handles sandboxing, orchestration, state management, and crash recovery—the operational complexity that typically delays production agent deployments. If you're a tech lead evaluating whether to build your own agent runtime or adopt a managed service, this breakdown covers what actually ships today, what's still in research preview, and where the lock-in risks live.

What Is Claude Managed Agents?

Claude Managed Agents provides the harness and infrastructure for running Claude as an autonomous agent. Instead of building your own agent loop, tool execution, and runtime, you get a fully managed environment where Claude can read files, run commands, browse the web, and execute code securely.

Announced on April 8, 2026, it's currently in public beta, and every request must include the managed-agents-2026-04-01 beta header. Think of it as Anthropic's answer to the infrastructure problem every team hits after prototyping an agent: you need sandboxed execution environments, credential management, session persistence, error recovery, and observability, and that's months of engineering work before you ship anything users see.

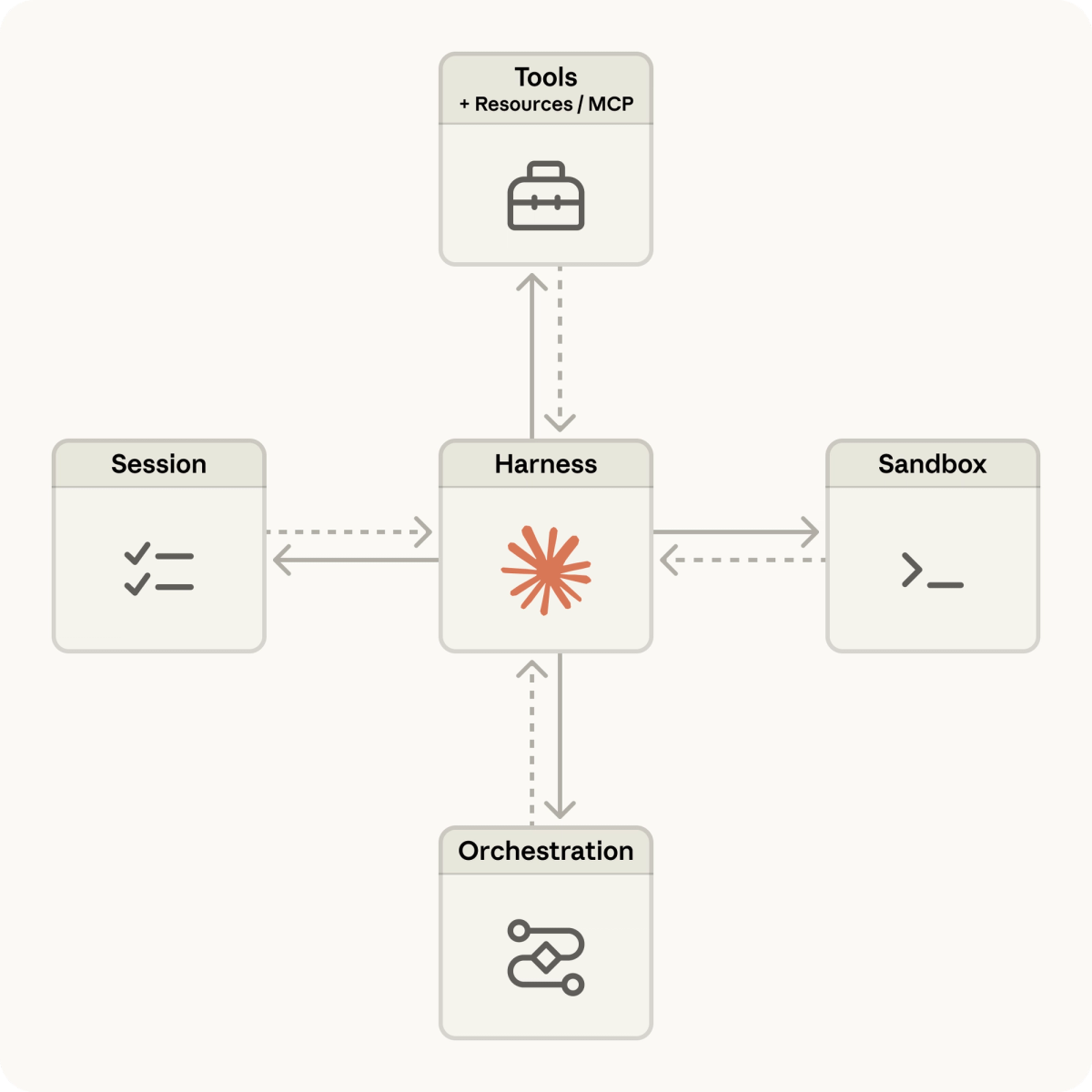

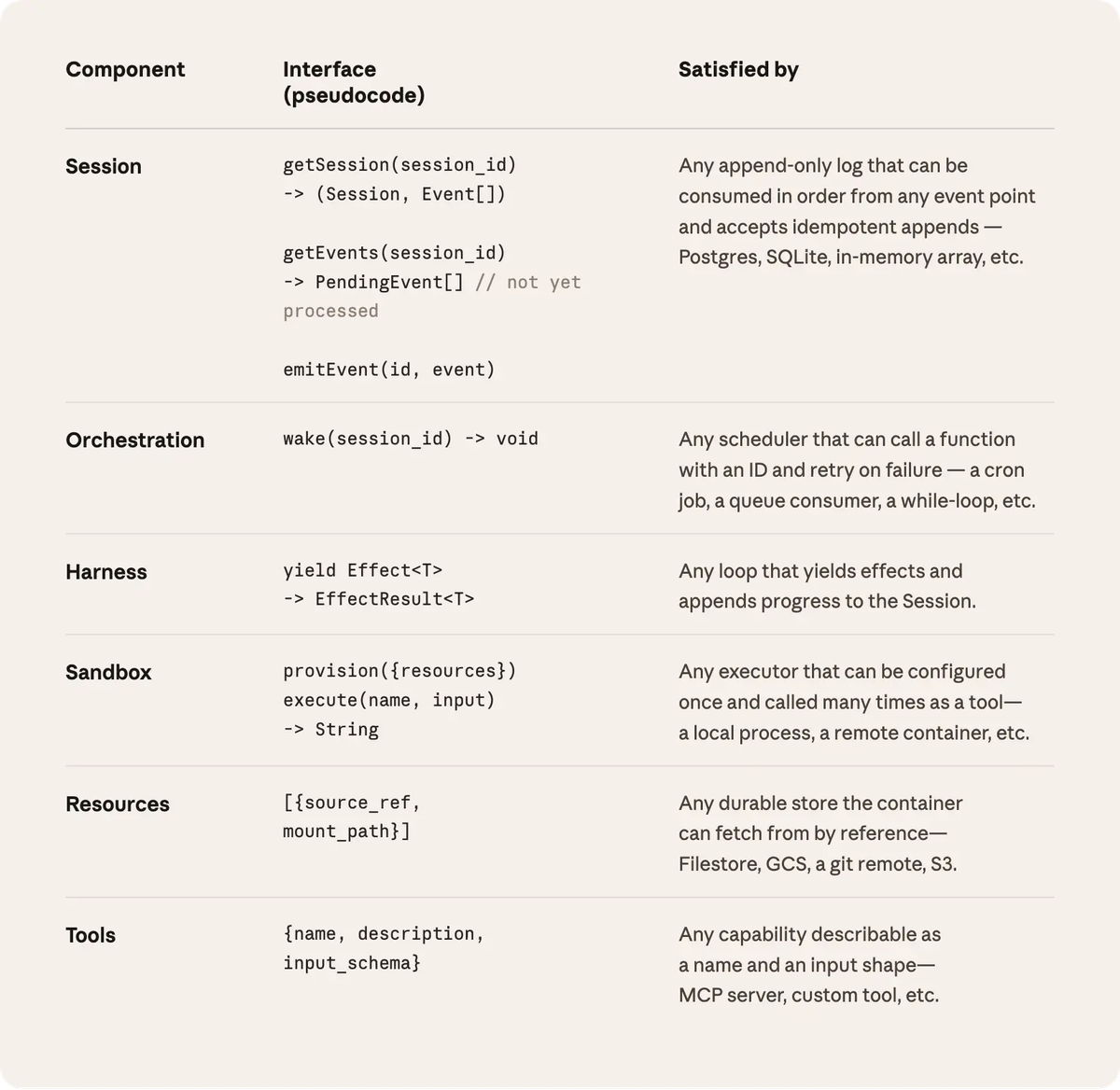

The architecture breaks into three components:

- Agent Definition: You define the model (Sonnet 4.6, Opus 4.6, or Haiku 4.5), system prompt, tools, MCP servers, and permissions. This gets saved with an ID you reference across sessions.

- Environment Configuration: A cloud container with pre-installed packages (Python, Node.js, Go), network access rules, and mounted files. Anthropic handles the container lifecycle.

- Session Management: Launch a session that references your agent + environment. The session is an append-only event log: everything that happened, every tool call, every output. Sessions persist through disconnections and can run for hours autonomously.

Here's what that looks like in practice:

```bash
# Create an agent.
# The anthropic-version and content-type headers follow general Anthropic API
# conventions for JSON requests.
curl https://api.anthropic.com/v1/agents \
  -H "x-api-key: $ANTHROPIC_API_KEY" \
  -H "anthropic-version: 2023-06-01" \
  -H "anthropic-beta: managed-agents-2026-04-01" \
  -H "content-type: application/json" \
  -d '{
    "name": "Security Scanner",
    "model": "claude-sonnet-4-6",
    "system": "You analyze codebases for security vulnerabilities",
    "tools": [{"type": "agent_toolset_20260401"}]
  }'

# Launch a session that references the saved agent and an environment
curl https://api.anthropic.com/v1/sessions \
  -H "x-api-key: $ANTHROPIC_API_KEY" \
  -H "anthropic-version: 2023-06-01" \
  -H "anthropic-beta: managed-agents-2026-04-01" \
  -H "content-type: application/json" \
  -d '{
    "agent": "agent_xyz",
    "environment_id": "env_abc",
    "title": "Weekly security scan"
  }'
```

The agent_toolset_20260401 toolset enables bash, file operations, web search, and code execution. You can also add custom tools or MCP servers for Slack, GitHub, Google Drive, or any system with an MCP implementation.
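For teams calling the API from application code rather than a shell, the same two requests can be assembled in Python. This is a minimal sketch that builds the requests without sending them; the URLs, beta header, and JSON fields come from the curl calls above, while the `build_request` helper, the `content-type` and `anthropic-version` headers, and the placeholder key are assumptions for illustration.

```python
import json
import os
import urllib.request

API_BASE = "https://api.anthropic.com/v1"
BETA_HEADER = "managed-agents-2026-04-01"


def build_request(path: str, payload: dict, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the beta header."""
    return urllib.request.Request(
        url=f"{API_BASE}/{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-beta": BETA_HEADER,
            # Assumed: general Anthropic API conventions for JSON requests.
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


# Placeholder key so the sketch runs without credentials.
api_key = os.environ.get("ANTHROPIC_API_KEY", "sk-placeholder")

create_agent = build_request(
    "agents",
    {
        "name": "Security Scanner",
        "model": "claude-sonnet-4-6",
        "system": "You analyze codebases for security vulnerabilities",
        "tools": [{"type": "agent_toolset_20260401"}],
    },
    api_key,
)

launch_session = build_request(
    "sessions",
    {
        "agent": "agent_xyz",
        "environment_id": "env_abc",
        "title": "Weekly security scan",
    },
    api_key,
)

# To send for real: urllib.request.urlopen(create_agent)
print(create_agent.full_url)
print(launch_session.full_url)
```

Keeping the beta header in a single constant means a future header change touches one line instead of every call site.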

What differentiates this from running the Messages API with tool use? The orchestration harness. Anthropic describes Managed Agents as a meta-harness: a set of general interfaces that can sit in front of many different harnesses. Claude Code, which Anthropic says it uses widely across tasks, is one such harness. The interfaces are meant to accommodate any of them, so the harness behind the API can change as Claude's capabilities improve.

Translation: Anthropic abstracts the agent loop so you don't have to rebuild it every time the underlying model improves or when context management strategies change.

What It Can Do

Based on official documentation and early adopter reports, here's what Managed Agents handles out of the box:

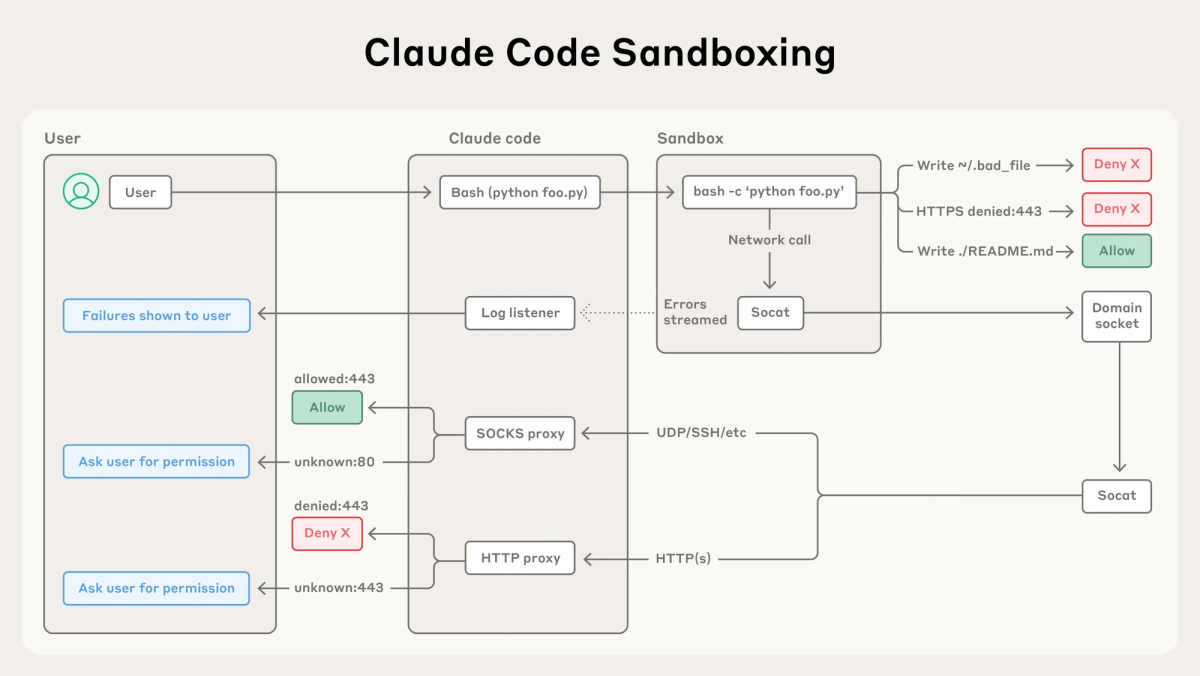

Production-grade sandboxing: Each session runs in an isolated Linux container with configurable network access. The container is ephemeral—it spins up when needed, shuts down when idle. You're not paying for compute when the agent isn't actively working.

Long-running autonomous sessions: Sessions can operate for hours without human intervention. If your connection drops, the session keeps running. When you reconnect, the full event log is available. This is critical for tasks like "scan this 50,000-file codebase for SQL injection vulnerabilities" where the agent needs to work through hundreds of files over multiple hours.
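One way to picture the reconnect behavior is a client-side cursor over an append-only log. The sketch below is a local simulation only: `SessionLog`, its methods, and the event dictionaries are hypothetical stand-ins, not the Managed Agents API or its event schema.

```python
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Hypothetical stand-in for a session's append-only event log."""

    events: list[dict] = field(default_factory=list)

    def append(self, event: dict) -> int:
        """Append an event and return its position in the log."""
        self.events.append(event)
        return len(self.events) - 1

    def read_from(self, cursor: int) -> list[dict]:
        """Return every event at or after the cursor, in order."""
        return self.events[cursor:]


log = SessionLog()
log.append({"type": "tool_call", "tool": "bash", "input": "ls src/"})
log.append({"type": "tool_result", "output": "app.py db.py"})

# The client has seen two events, then its connection drops.
cursor = 2

# The session keeps working while the client is away.
log.append({"type": "tool_call", "tool": "bash", "input": "grep -n execute db.py"})
log.append({"type": "tool_result", "output": "42: cursor.execute(query % name)"})

# On reconnect, the client catches up from its cursor; nothing is lost.
missed = log.read_from(cursor)
cursor += len(missed)
print(len(missed), cursor)
```

Because events are only ever appended, the client needs to remember just one integer to resume, which is what makes dropped connections cheap to recover from.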

Built-in prompt caching and compaction: The harness supports built-in prompt caching, compaction, and other performance optimizations for high-quality, efficient agent outputs. You don't configure any of this; it's part of the infrastructure. In practice, this means lower token costs for repeated context (like re-reading the same codebase across multiple sessions).

Tool execution with error recovery: When a tool call fails (network timeout, permission error, etc.), the harness has built-in retry logic and fallback strategies. You don't need to implement this yourself.
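For a sense of what you would otherwise write yourself, here is a minimal retry-with-exponential-backoff sketch. It is not Anthropic's implementation, whose retry policy isn't described here; `TransientToolError`, `call_with_retry`, and the delay values are all hypothetical.

```python
import time


class TransientToolError(Exception):
    """Hypothetical error type for failures worth retrying (e.g. timeouts)."""


def call_with_retry(tool, *, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call tool(); on a transient failure wait base_delay * 2**n, then retry."""
    for attempt in range(attempts):
        try:
            return tool()
        except TransientToolError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the error to the caller
            sleep(base_delay * 2 ** attempt)


# Demo: a tool that times out twice, then succeeds.
calls = {"count": 0}


def flaky_tool():
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientToolError("network timeout")
    return "ok"


delays = []  # record the waits instead of actually sleeping
result = call_with_retry(flaky_tool, sleep=delays.append)
print(result, calls["count"], delays)
```

Non-transient failures such as permission errors deliberately propagate immediately in this sketch, since retrying them would only waste time.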

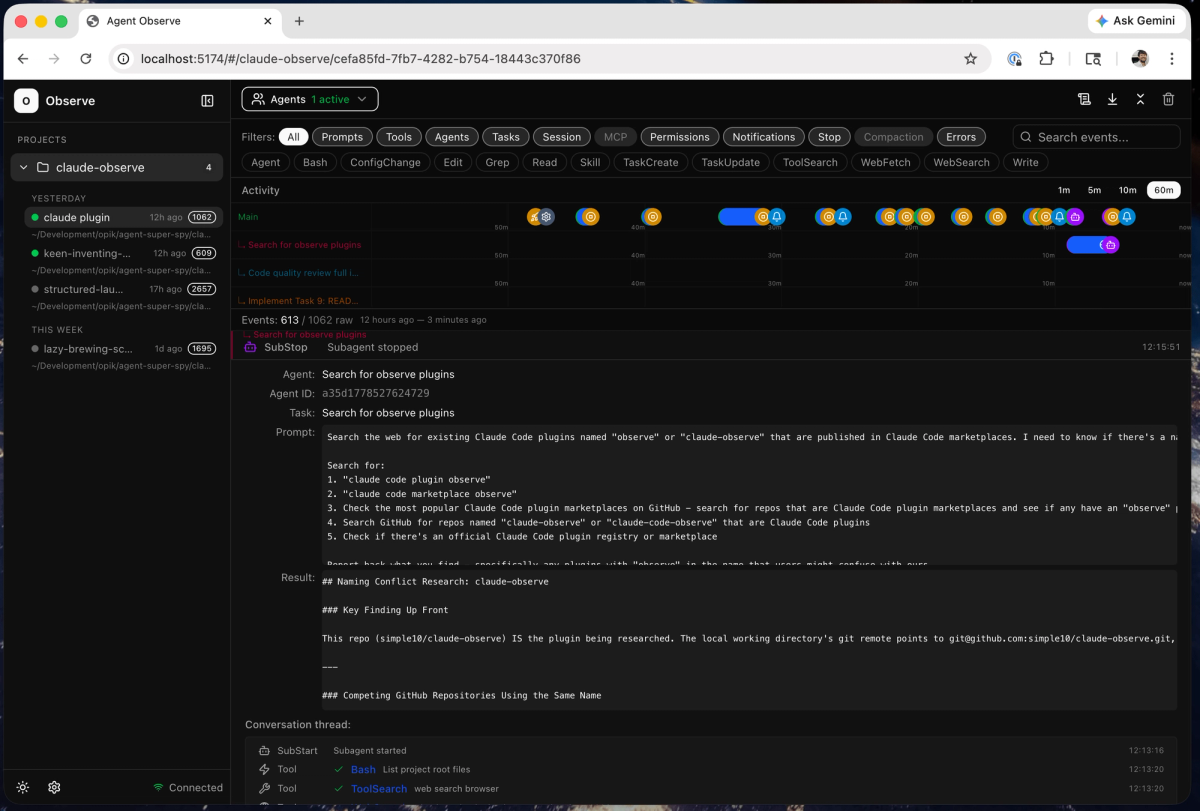

Session tracing: Every agent action is logged in the Claude Console. You can see which tools were called, what inputs were provided, what outputs were returned, and where the agent got stuck. This is essential for debugging "why did my agent do that?" scenarios.

What's explicitly in research preview (meaning: request access, expect instability):

- Multi-agent coordination: Agents can spin up and direct other agents to parallelize complex work.

- Memory: Persistent memory across sessions (not yet documented in public docs).

- Outcomes: Structured success/failure criteria (also research preview).

Critical point: outcomes, multi-agent coordination, and memory are all gated behind an access request. Don't build a production system assuming multi-agent orchestration is stable.

How It Differs from Messages API and Agent SDK

| Approach | What It Is | Who It's For |
|---|---|---|
| Messages API | Stateless API for single-turn or multi-turn conversations. You manage tool execution, context, and state. | Teams building chat interfaces, RAG systems, or custom agent loops where you want full control. |
| Agent SDK | The Agent SDK gives you the same tools, agent loop, and context management that power Claude Code, programmable in Python and TypeScript. | Developers who want the Claude Code harness running in their own infrastructure. You handle deployment, scaling, and ops. |
| Managed Agents | Hosted infrastructure + agent harness. Anthropic runs the containers, manages state, handles crashes. | Teams that want to ship agents fast without building sandboxing, orchestration, or observability from scratch. |

This is where most confusion lives. Anthropic now has three ways to build with Claude agents:

Messages API: You're in the driver's seat. Every tool call, every context management decision, every retry strategy—that's your code. This gives you maximum flexibility but maximum responsibility. If you're building a custom agent loop with specific requirements (like interleaving multiple models, or routing tool calls to different backends), this is the right choice.

Agent SDK: You get the harness (the agent loop, tool execution, context compaction) as a library you import into your Python or TypeScript app. The Agent SDK includes built-in tools for reading files, running commands, and editing code, so your agent can start working immediately without you implementing tool execution. But you're still responsible for deployment. You spin up the containers, handle scaling, monitor for crashes, and manage secrets. This is the middle ground: you get the agent runtime logic without building it, but you own the operations.

Managed Agents: Anthropic runs everything. You define the agent, launch sessions, and consume results. The sandboxing, scaling, crash recovery, logging—that's handled for you. The trade-off is lock-in. Once your agents run on Anthropic's infrastructure with their session format and their container specifications, switching to another provider isn't trivial.

Practical decision tree:

- Need to mix models (Claude + GPT-5 + Gemini) in one agent? → Messages API.

- Want Claude Code's runtime but need to self-host for compliance? → Agent SDK.

- Want to ship agents in weeks instead of months and don't need multi-vendor flexibility? → Managed Agents.
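The decision tree above reduces to two questions asked in order. A small sketch, assuming the multi-vendor question takes priority when both constraints apply (that ordering is my reading, and the function name is hypothetical):

```python
def choose_approach(*, mixes_vendors: bool, must_self_host: bool) -> str:
    """Map the two questions from the decision tree to a starting point."""
    if mixes_vendors:
        return "Messages API"  # full control over routing across models
    if must_self_host:
        return "Agent SDK"  # Claude Code's harness on your own infrastructure
    return "Managed Agents"  # hosted runtime, Claude-only


print(choose_approach(mixes_vendors=False, must_self_host=True))
```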

One developer on Hacker News put it well: "The best performance I've gotten is by mixing agents from different companies. Unless there is a 'winner take all' agent, I think the best orchestration systems are going to involve mixing agents". If that's your architecture, Managed Agents won't fit—it's Claude-first by design.

Current Status and Limitations

Managed Agents launched in public beta on April 8, 2026. That label matters. Here's what "beta" means in practice:

Header requirement: All Managed Agents endpoints require the managed-agents-2026-04-01 beta header. Behaviors may be refined between releases to improve outputs. Translation: Anthropic reserves the right to change how the harness works. If you're building production systems today, you need a plan for handling breaking changes.

Rate limits: Managed Agents endpoints are rate-limited per organization. Organization-level spend limits and tier-based rate limits also apply. Specific limits aren't published in docs, but based on the API documentation structure, these appear to be separate from Messages API rate limits. For high-volume deployments, you'll need to work with Anthropic's sales team to negotiate custom limits.

Multi-agent coordination isn't ready: Despite being a headline feature, multi-agent coordination is explicitly in research preview, a step behind the public beta, so expect meaningful instability before relying on it for anything serious. If your architecture depends on one agent delegating to sub-agents, you're in early-adopter territory.

No SLA guarantees: This is beta infrastructure. Uptime commitments, support response times, and incident handling aren't what you'd expect from a GA product. Early adopters like Notion, Rakuten, and Asana are getting white-glove treatment, but that doesn't scale to every customer.

Lock-in concerns: One developer framed it bluntly: "Once your agents run on their infra, switching cost goes through the roof." The session format, the environment configuration, the tool definitions—these are Anthropic-specific. If you need to migrate to a different provider later, you're rewriting significant portions of your agent logic.

Pricing adds up for always-on agents: Standard Claude Platform token rates apply, plus $0.08 per session-hour for active runtime. An agent running 24/7 costs ~$58/month in runtime fees alone, before token costs. For most use cases, agents run in bursts (triggered by events, not continuous polling), so this is manageable. But if your architecture requires always-on monitoring, factor that in.
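The runtime arithmetic is easy to check and to adapt to your own duty cycle. A minimal sketch, assuming a 30-day month and the $0.08 per session-hour rate quoted above; the function name and the 2-hours-per-day example are illustrative.

```python
RUNTIME_RATE_USD_PER_HOUR = 0.08  # session-hour rate quoted above


def monthly_runtime_cost(active_hours_per_day: float, days: int = 30) -> float:
    """Runtime fee only; token costs are billed separately."""
    return RUNTIME_RATE_USD_PER_HOUR * active_hours_per_day * days


always_on = monthly_runtime_cost(24)  # 0.08 * 24 * 30
bursty = monthly_runtime_cost(2)  # 0.08 * 2 * 30
print(f"always-on: ${always_on:.2f}, bursty (2 h/day): ${bursty:.2f}")
```

The always-on case works out to about $57.60, which is where the ~$58 figure comes from; the bursty case is under $5.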

Who's Using It

Anthropic highlighted five early adopters in the launch announcement. These aren't hypothetical demos—they're production deployments:

Notion: Using it to let teams delegate open-ended tasks — from coding to generating slides and spreadsheets — without leaving their workspace. The agent operates inside Notion's interface, taking a task description and returning completed deliverables.

Rakuten: Shipped enterprise agents across product, sales, marketing, finance, and HR that plug into Slack and Teams, letting employees assign tasks and get back deliverables like spreadsheets, slides, and apps. Each specialist agent was deployed within a week. This is the speed claim Anthropic's making: agents in production in days, not months.

Asana: Built AI Teammates—agents that work inside Asana projects. Amritansh Raghav (CTO): "Claude Managed Agents dramatically accelerated our development of Asana AI Teammates — helping us ship advanced capabilities faster — and freeing us to focus on creating an enterprise-grade multiplayer user experience".

Sentry: Paired their existing Seer debugging agent with a Claude-powered agent that writes patches. Developers go from a flagged bug to a reviewable fix in one flow. The workflow: Seer identifies the bug, Claude Managed Agent generates the patch, opens a PR, and hands it off for human review.

Vibecode: Helps its customers go from prompt to deployed app using Managed Agents as the default integration, powering a new generation of AI-native apps. Vibecode says users can now spin up that infrastructure at least 10x faster than before.

Common pattern across all of these: complex, multi-step workflows that previously required either dedicated engineering teams or fragile chains of API calls. The infrastructure abstraction is what matters—not the model capabilities.

When to Use Managed Agents vs Build Your Own

This is the decision most tech leads are wrestling with. Here's the framework I use:

Use Managed Agents if:

- You need agents in production quickly (weeks, not months) and the infrastructure isn't your competitive advantage.

- Your agents are Claude-specific and you're comfortable with vendor lock-in.

- You don't have the DevOps capacity to build and maintain sandboxing, orchestration, and observability systems.

- Your workload is bursty (agents run for a few hours per day, not 24/7).

- You're okay with beta-level stability and rate limit uncertainty.

Build your own if:

- You need multi-vendor agent orchestration (mixing Claude, GPT-5, Gemini, or open models).

- Compliance requires self-hosted infrastructure (HIPAA, FedRAMP, etc.).

- You have existing container orchestration systems (Kubernetes, ECS) and want to leverage them.

- Your agents need specialized execution environments (GPU access, specific OS versions, non-standard packages).

- You need guaranteed uptime SLAs that a beta product can't provide.

Hybrid approach: Some teams are using Managed Agents for rapid prototyping and then migrating to the Agent SDK when they need more control. This lets you validate the agent logic fast, then self-host when operational requirements demand it. The SDK and Managed Agents share enough patterns that migration isn't a full rewrite.

Real-world consideration from one developer: "The $0.08/hr adds up for always-on agents. An agent running 24/7 costs about $58/month in runtime alone, before token costs. For most use cases agents run in bursts, not continuously — but if yours needs to be always-on, factor that in".

The inflection point: if you're spending more engineering time building and maintaining agent infrastructure than building the actual agent logic, Managed Agents is worth serious evaluation. But if infrastructure is part of your product moat (like you're selling an agent platform to other companies), building on Managed Agents gives Anthropic leverage over your business.

FAQ

Is Claude Managed Agents a new model?

No. It's hosted infrastructure that runs existing Claude models (Sonnet 4.6, Opus 4.6, Haiku 4.5). The models are the same ones available through the Messages API. What's new is the orchestration harness, sandboxing environment, and session management.

Can I use Managed Agents with non-Claude models?

Not currently. The infrastructure is purpose-built for Claude and won't run GPT, Gemini, or open-source models. If you need multi-vendor support, you'll need to build on the Messages API or use a framework like LangGraph that supports multiple providers.

How does pricing work?

You pay standard Claude API token rates ($3 input / $15 output per million tokens for Sonnet 4.6, for example) plus $0.08 per session-hour for active runtime. "Active" means the session is processing work—idle sessions don't accrue charges. The first 50 hours per day across all sessions are free (that's a per-organization limit, not per-session).
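To estimate a single session, add the token charges to the runtime charge. This sketch uses the Sonnet 4.6 rates and the session-hour rate quoted above; the token counts and session length are made-up inputs, and it deliberately ignores prompt-caching discounts and any free runtime allowance, so treat the result as an upper-bound estimate rather than a bill.

```python
def session_cost_usd(
    input_tokens: int,
    output_tokens: int,
    active_hours: float,
    input_rate_per_mtok: float = 3.00,  # Sonnet 4.6 input rate quoted above
    output_rate_per_mtok: float = 15.00,  # Sonnet 4.6 output rate quoted above
    runtime_rate_per_hour: float = 0.08,
) -> float:
    """Token charges plus active-runtime charges for one session."""
    token_cost = (
        input_tokens / 1_000_000 * input_rate_per_mtok
        + output_tokens / 1_000_000 * output_rate_per_mtok
    )
    return token_cost + active_hours * runtime_rate_per_hour


# Hypothetical two-hour scan: 2M input tokens, 200k output tokens.
cost = session_cost_usd(2_000_000, 200_000, active_hours=2)
print(f"${cost:.2f}")
```

In this example tokens account for $9.00 and runtime for $0.16, which illustrates that for bursty workloads the token bill, not the session-hour fee, dominates.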

What's the difference between beta and research preview?

Beta (like the core Managed Agents platform) means it's publicly available but still being refined. Expect some rough edges, but it's usable for production with appropriate risk management. Research preview (like multi-agent coordination and memory) means it's experimental—request access, expect breaking changes, and don't bet your product roadmap on it yet.

Can I migrate from Managed Agents to self-hosted later?

Partially. The Agent SDK uses similar patterns (agent definitions, tool specifications, environment configs), so you're not starting from zero. But the session management, orchestration logic, and deployment setup will require significant re-engineering. Plan for 4-8 weeks of migration work depending on complexity.

What rate limits apply?

Managed Agents endpoints are rate-limited per organization and appear to be separate from Messages API limits. Specific numbers aren't published; they vary by usage tier. For production deployments at scale, work with Anthropic's sales team to negotiate appropriate limits.

Is multi-agent coordination production-ready?

No. It's in research preview, which means request access and expect meaningful instability. Anthropic hasn't published timelines for when it will graduate to beta or GA. If your architecture requires one agent delegating to sub-agents, you're taking on early-adopter risk.

How does this compare to OpenAI's Agents SDK or Google's ADK?

Different focus. OpenAI's Agents SDK (launched March 2025) is provider-agnostic and supports 100+ LLMs with built-in handoffs, guardrails, and tracing. Google ADK is multimodal-first and optimized for Gemini but supports other models. Managed Agents is Claude-specific hosted infrastructure, so you're trading flexibility for operational simplicity. The right choice depends on whether you value multi-vendor optionality or infrastructure-as-a-service.

Conclusion

Claude Managed Agents is Anthropic's bet that most teams building agents don't want to become infrastructure companies. The value proposition is straightforward: get production-grade sandboxing, orchestration, and observability without the 3-6 month engineering investment to build it yourself.

The beta status matters. Multi-agent coordination isn't ready. Rate limits aren't fully documented. Lock-in is real. But for teams that need Claude-powered agents in production quickly and aren't ready to build a full agent runtime from scratch, Managed Agents removes the infrastructure excuse.

If you're evaluating it: start with a non-critical workflow, run it through a full session lifecycle (creation, execution, error handling, shutdown), measure the actual costs against your projected usage, and decide whether the operational simplicity justifies the vendor lock-in. For teams already committed to Claude and focused on shipping agent features fast, Managed Agents is the fastest path from prototype to production.

For everyone else—especially teams that need multi-vendor flexibility or have compliance requirements that demand self-hosting—the Agent SDK or Messages API remain better starting points.
