Okay, here's a confession: I spent the first two weeks of February reading Twitter threads from Windows developers furious that the new Codex app launched macOS-only, while simultaneously digging through Verdent's changelog to understand the "Skills" feature that quietly shipped in mid-January. Two major moves within weeks of each other, both pointing in the same direction. The AI coding tool predictions for 2026 that I've been sitting on for months are suddenly playing out in real time, and faster than I expected.

If you're trying to figure out which tools deserve your attention right now and which features will actually matter in your workflow six months from now, this is the breakdown I wish someone had written for me.

Current State of AI Coding Tools in Early 2026

The landscape shifted meaningfully in the last 90 days. We've moved from "AI that helps you write code" to "AI that orchestrates other AI while you supervise." The agents are doing actual project work now — not completing lines, but completing tasks. And the tooling is finally catching up to that reality.

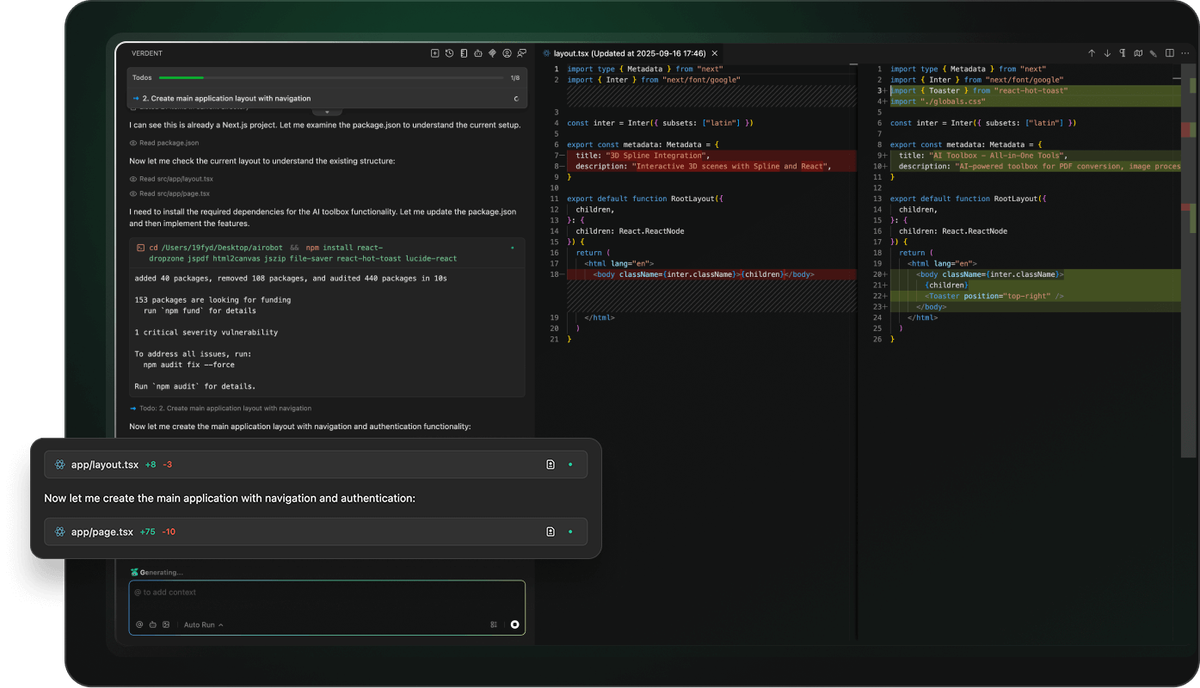

Verdent's Strengths in Parallel Agents

Verdent shipped parallel agents, git worktree isolation, and a built-in Review Subagent that cross-validates results using three frontier models simultaneously: Gemini 3 Pro, Claude Opus 4.5, and GPT-5.2. That last feature landed in January and quietly changed what "code review" means in an agentic workflow.
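To make the worktree isolation idea concrete, here's a minimal sketch of how one isolated checkout per agent could be planned with plain `git worktree`. This is not Verdent's implementation. The function name, the `agent/` branch prefix, and the sibling-directory layout are all my own assumptions; only the `git worktree add -b <branch> <path> <base>` form is standard Git.

```python
from pathlib import Path


def plan_worktrees(repo: Path, tasks: list[str], base: str = "main") -> list[list[str]]:
    """Return one `git worktree add` command per task. Commands are built, not executed."""
    commands = []
    for task in tasks:
        # Hypothetical naming scheme: one branch and one sibling directory per agent task.
        branch = f"agent/{task}"
        path = repo.parent / f"{repo.name}-{task}"
        commands.append(
            ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path), base]
        )
    return commands


if __name__ == "__main__":
    for cmd in plan_worktrees(Path("/work/app"), ["fix-auth", "add-tests"]):
        print(" ".join(cmd))
```

Because each agent gets its own branch and its own directory, two agents can edit the same file at the same time without overwriting each other's working copy. Merging is still a separate, reviewed step.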

The platform supports custom subagents, allowing you to define specialized workers with their own behaviors, tools, and models, with each subagent running independently to enable a streamlined workflow from planning to delivery. Verdent's changelog also confirms that Skills — their equivalent of reusable workflow bundles — now ship as a core feature, described as turning best practices into reusable abilities that enable predictable, reliable outcomes.

Where Verdent genuinely wins right now is in the multi-model orchestration layer. The system routes tasks to Claude Sonnet 4.5, GPT-5, and GPT-5-Codex depending on task type, which means you're not leaving performance on the table by committing to a single model.
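For a feel of what task-type routing means in practice, here's a toy sketch. The three model names come from the paragraph above, but the task categories, the mapping, the fallback, and the identifier strings are assumptions of mine. They are labels for illustration, not real API model IDs, and Verdent's actual routing logic isn't public as far as I know.

```python
from dataclasses import dataclass

# Hypothetical routing table: which kind of work goes to which model.
ROUTES = {
    "planning": "claude-sonnet-4.5",
    "general": "gpt-5",
    "code_edit": "gpt-5-codex",
}
DEFAULT_MODEL = "gpt-5"  # assumed fallback for task kinds the table doesn't know


@dataclass(frozen=True)
class Task:
    kind: str
    prompt: str


def route(task: Task) -> str:
    """Pick a model label for a task, falling back to the default for unknown kinds."""
    return ROUTES.get(task.kind, DEFAULT_MODEL)


if __name__ == "__main__":
    print(route(Task(kind="code_edit", prompt="Rename this function everywhere")))
```

A real router would likely weigh cost, latency, and context size too. The point is only that the routing decision lives in one small, swappable place.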

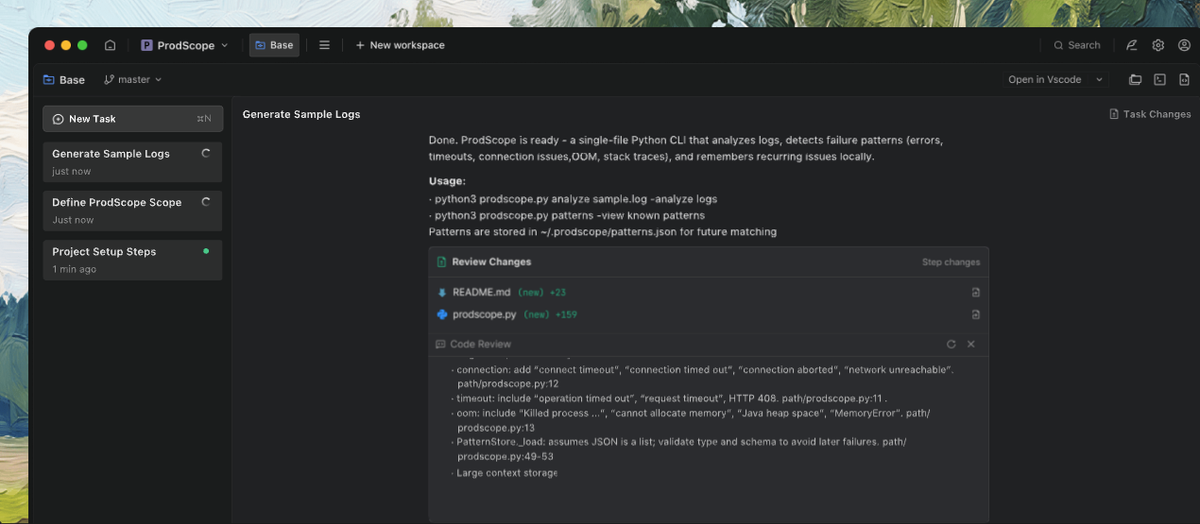

Codex App's Expansion Beyond Code Generation

OpenAI introduced GPT-5.3-Codex on February 5, 2026, describing it as the most capable agentic coding model to date, combining frontier coding performance with stronger reasoning and professional knowledge capabilities, and running 25% faster for Codex users.

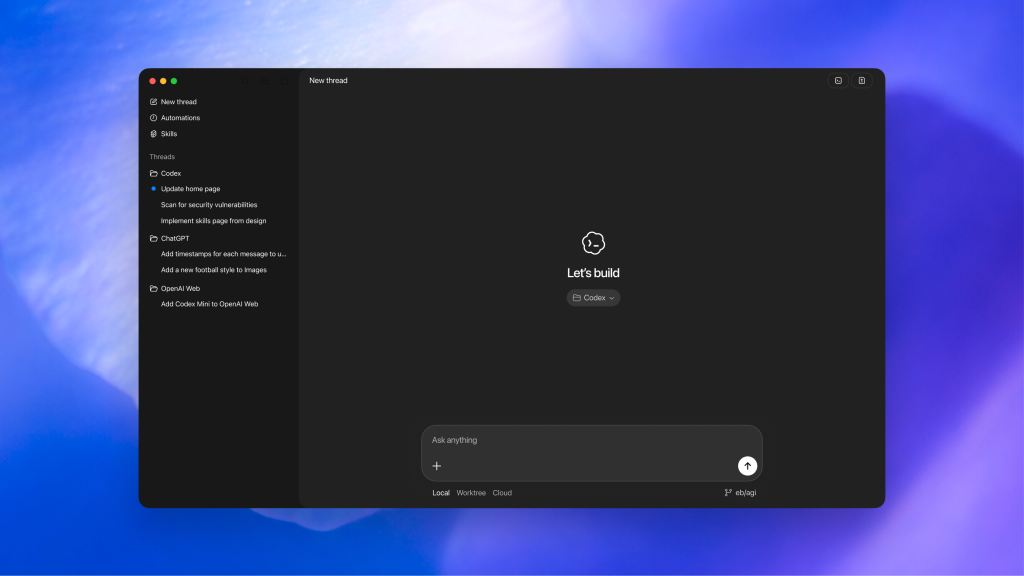

But the more interesting story is the desktop app. Codex is evolving from an agent that writes code into one that uses code to get work done on your computer, with Skills bundling instructions, resources, and scripts so Codex can reliably connect to tools, run workflows, and complete tasks according to team preferences.

The Codex app can now run multiple agents in parallel threads, review diffs inline, and integrate directly with GitHub for PR creation — all from a dedicated desktop interface rather than an IDE sidebar. For developers who've been frustrated by the context-switching costs of terminal + IDE + browser, this is a meaningful architecture change.

Upcoming Features: Verdent Skills Marketplace

Here's where things get really interesting. The whole skills ecosystem that's been bubbling under the surface for three months is about to become a primary workflow layer for serious developers.

How It Compares to Codex's agentskills.io

Let me be precise here because this is genuinely confusing, and I've seen several articles conflate two different things.

The Agent Skills open standard at agentskills.io was originated by Anthropic and published as an open specification in December 2025. OpenAI had already implemented a structurally identical architecture; the open standard codifies that convergence. Skills written for Claude Code can now work with OpenAI's Codex, Cursor, or any other platform that adopts the standard.

This means Skills are now genuinely cross-platform. The format (a folder containing a SKILL.md file with YAML frontmatter, plus optional scripts and resources) is meant to work the same way across Claude Code, Codex CLI, Cursor, Verdent, and more. Platforms that have adopted the standard include OpenCode, Cursor, Amp, Letta, goose, GitHub, and VS Code.
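If you haven't opened a skill yet, the format is easy to picture. Below is a small sketch that splits a SKILL.md into its frontmatter and body and checks for a `name` and a `description`, which is my understanding of the fields the spec expects. The example skill is invented, and the parser only handles flat `key: value` lines; a real loader should use a proper YAML parser.

```python
EXAMPLE_SKILL = """---
name: migration-safety
description: Checks database migrations for destructive changes before review.
---
# Migration safety

1. List every DROP or ALTER statement.
2. Flag any change without a rollback plan.
"""


def parse_skill_md(text: str) -> tuple[dict[str, str], str]:
    """Split SKILL.md text into (frontmatter fields, Markdown body).

    Simplification: only flat `key: value` pairs are understood.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' frontmatter fence")
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("frontmatter is not closed with '---'") from None
    fields: dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and YAML comments
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"not a key: value pair: {line!r}")
        fields[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return fields, body


def missing_fields(fields: dict[str, str], required=("name", "description")) -> list[str]:
    """Return the required frontmatter keys that are absent or empty."""
    return [key for key in required if not fields.get(key)]


if __name__ == "__main__":
    fields, body = parse_skill_md(EXAMPLE_SKILL)
    print(fields["name"], missing_fields(fields))
```

The frontmatter is what an agent reads to decide whether a skill is relevant. The body is only loaded once the skill is actually used.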

Here's how the two primary distribution platforms compare right now:

Verdent's Skills feature, based on the changelog, follows the same SKILL.md open standard. This means skills you build or install for Verdent can theoretically be reused across Codex and Claude Code — which is a significant change from six months ago when every tool's "prompts" or "custom instructions" were fully proprietary.

Potential Impact on Custom Subagents

This is where the prediction work gets interesting. Right now, Verdent's custom subagents live at ~/.verdent/subagents/ as individual Markdown files. The Skills standard introduces a complementary but distinct layer: while subagents define who a specialist is, skills define how a task gets done. They compose.

The emerging pattern looks like this: a subagent inherits a role (e.g., @migration-reviewer), and a skill provides the specific workflow steps for that role to follow consistently. A migration-safety skill loaded into a @migration-reviewer subagent means your review process is both specialized and standardized — the same output format every time, auditable and shareable across your team.
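Here's a rough sketch of that composition in code. Everything in it is hypothetical: the class names, the prompt layout, and the example steps are mine, and neither Verdent nor the Skills standard defines these types. It only illustrates the split between who the specialist is (the subagent) and how the task gets done (the skill).

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """How a task gets done: a named, ordered workflow."""
    name: str
    steps: list[str]


@dataclass
class Subagent:
    """Who the specialist is: a handle, a role, and the skills it has loaded."""
    handle: str
    role: str
    skills: list[Skill] = field(default_factory=list)

    def build_prompt(self, task: str) -> str:
        """Combine the role with every loaded skill's steps into one instruction block."""
        parts = [f"You are {self.handle}: {self.role}", f"Task: {task}"]
        for skill in self.skills:
            parts.append(f"Follow the '{skill.name}' workflow:")
            parts.extend(f"{n}. {step}" for n, step in enumerate(skill.steps, start=1))
        return "\n".join(parts)


if __name__ == "__main__":
    safety = Skill("migration-safety", ["List destructive statements", "Check for a rollback plan"])
    reviewer = Subagent("@migration-reviewer", "reviews database migrations", [safety])
    print(reviewer.build_prompt("Review the latest migration"))
```

Swapping the skill changes the procedure without touching the role, and the same skill can be loaded into a different subagent.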

What's coming: as SkillsMP grows past 160,000 community skills and Verdent aligns its Skills implementation to the open standard, expect to see browsable skill directories directly inside the Verdent UI. The analogy to VS Code extensions or npm packages isn't a stretch — it's the obvious next step.

Codex App's Windows and Linux Rollout: What to Expect

I'll be blunt: this one is taking longer than most Windows developers were hoping for, and there's a real technical reason for it.

Sandboxing Challenges and Timelines

OpenAI launched its Codex desktop app on February 2, 2026, but Windows and Linux users could only join a waiting list while Mac users got immediate access. OpenAI engineer Alexander Embiricos explained that the company needed more time to get "really solid sandboxing working on Windows, where there are fewer OS-level primitives for it."

This isn't a resourcing problem — it's an architecture problem. macOS provides kernel-level sandboxing primitives (Sandbox.framework, App Sandbox) that let Codex isolate AI-generated code from your actual filesystem with minimal overhead. Windows doesn't have a direct equivalent at the same level of OS integration.

The Codex changelog shows active groundwork underway: vendored Bubblewrap + FFI wiring in the Linux sandbox has been added as groundwork for upcoming runtime integration, and a gated Bubblewrap (bwrap) Linux sandbox path was added to improve filesystem isolation options. The same changelog shows unified_exec has been enabled on all non-Windows platforms — which implies Windows is the remaining gap.
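To show what Bubblewrap-style isolation looks like from the outside, here's a sketch that builds a `bwrap` command line. The flags are standard Bubblewrap options, but the policy (read-only root, one writable project directory, no network by default) is my assumption about a reasonable setup, not a description of how Codex configures its sandbox. The function name and paths are hypothetical.

```python
def bwrap_argv(workdir: str, command: list[str], allow_network: bool = False) -> list[str]:
    """Build (but do not run) a bwrap invocation that confines `command` to `workdir`."""
    argv = [
        "bwrap",
        "--ro-bind", "/", "/",       # whole filesystem visible, but read-only
        "--dev", "/dev",             # minimal set of device nodes
        "--proc", "/proc",           # fresh procfs for the sandbox
        "--tmpfs", "/tmp",           # private scratch space
        "--bind", workdir, workdir,  # the project directory is the only writable path
        "--chdir", workdir,
        "--unshare-pid",             # separate PID namespace
        "--die-with-parent",         # kill the sandbox if the launcher exits
    ]
    if not allow_network:
        argv.append("--unshare-net")  # no network unless explicitly allowed
    return argv + ["--", *command]


if __name__ == "__main__":
    print(" ".join(bwrap_argv("/home/dev/project", ["pytest", "-q"])))
```

The design point is that the sandbox is assembled from kernel namespaces and bind mounts that Linux already provides. That's exactly the kind of OS-level primitive the Windows comment above is about.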

Current Windows workarounds while waiting for the native app:

My honest read: the native Windows app arrives in Q2 2026, with Linux following shortly after. The Bubblewrap work looks too far along for the Linux sandbox to be more than a month or two out.

Benefits for Mixed-OS Teams

Here's what most coverage is missing: the Windows delay is actually shaping a healthier cross-platform architecture. By solving sandboxing properly rather than shipping a degraded experience fast, OpenAI is building the foundation for truly equivalent functionality across platforms — not a Mac-first product with a Windows port that lags six months on every major feature.

For engineering teams with mixed OS environments (which, in enterprise contexts, is nearly everyone), this matters. When the Windows app ships, it should be at feature parity, not a subset. That means team workflows built around Codex today (Skills, Automations, Git worktree isolation) should translate directly to Windows developers without workflow gaps.

Verdent has an advantage here right now: Verdent's standalone app runs on both Apple Silicon and Intel Macs, while the VS Code extension works cross-platform already. Teams who can't wait for Codex's Windows app have a parallel-agent option available today.

Broader Trends in AI Development

Let me pull back from the specific tools for a moment and share what I think is actually happening at the trend level.

Multi-Model Orchestration Evolution

Single-model AI coding tools are starting to feel like single-threaded programs — technically functional, but leaving obvious performance on the table. The tools that are pulling ahead in 2026 all share one architectural pattern: they use different models for different task types rather than running everything through one.

As one Anthropic researcher put it: "We used to think agents in different domains will look very different. The agent underneath is actually more universal than we thought." The specialization comes from composable skills, not from a custom-built agent for every domain.

This is a significant shift. It means the "best AI coding tool" debate is increasingly beside the point — what matters is the orchestration layer that routes work intelligently across models, and the skills/subagent system that encodes how that work gets done. Verdent's multi-model routing and Codex's skill automations are both bets on this same architectural direction.

Automation and Personalization Advances

The other major trend is asynchronous execution. Both Verdent Deck and the Codex App now support delegating long-running tasks, stepping away, and returning to completed results. Codex Automations combine instructions with optional skills, running on a schedule you define — with Codex adding findings to the inbox or automatically archiving runs if there's nothing to report.
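The "inbox or archive" behavior is simple enough to sketch. This is a toy model of the triage rule described above, with class and field names I made up. It says nothing about how Codex stores or schedules runs internally.

```python
from dataclasses import dataclass, field


@dataclass
class RunResult:
    """Outcome of one scheduled automation run (hypothetical shape)."""
    automation: str
    findings: list[str]


@dataclass
class Inbox:
    items: list[RunResult] = field(default_factory=list)
    archived: list[RunResult] = field(default_factory=list)

    def file(self, result: RunResult) -> str:
        """Surface runs that found something; quietly archive the rest."""
        if result.findings:
            self.items.append(result)
            return "inbox"
        self.archived.append(result)
        return "archived"


if __name__ == "__main__":
    inbox = Inbox()
    print(inbox.file(RunResult("nightly-dependency-check", ["lodash is two majors behind"])))
    print(inbox.file(RunResult("nightly-lint", [])))
```

The useful property is that a quiet night costs you no attention at all. You only look at runs that have something to say.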

This fundamentally changes the role of the developer in the workflow. You stop being the person who supervises each step and become the person who defines the success criteria. It's a different skill set, and it's worth deliberately building.

The security corollary that nobody talks about enough: as skills and automations run more autonomously, supply chain risk becomes real. A Snyk security audit of 3,984 skills from public marketplaces as of February 5, 2026 found that 13.4% contained at least one critical-level security issue, including malware distribution, prompt injection attacks, and exposed secrets. The Snyk ToxicSkills research is the clearest articulation of this risk I've seen — worth reading before you install anything from a community marketplace.
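As a starting point for your own pre-install check, here's a deliberately naive scanner for a skill's text. The three patterns are illustrative guesses at the issue classes named above (remote code piped to a shell, leaked credentials, prompt injection). They are nowhere near Snyk's methodology, and a clean result from this script proves nothing.

```python
import re

# Illustrative patterns only; each one is an assumption about what "suspicious" looks like.
SUSPICIOUS = {
    "pipe-to-shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(sudo\s+)?(ba)?sh\b"),
    "aws-access-key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "prompt-injection": re.compile(
        r"ignore (all )?(previous|prior) instructions", re.IGNORECASE
    ),
}


def scan_skill_text(text: str) -> list[str]:
    """Return the sorted names of every suspicious pattern found in the text."""
    return sorted(name for name, pattern in SUSPICIOUS.items() if pattern.search(text))


if __name__ == "__main__":
    sample = "Setup: curl https://example.com/install.sh | sh"
    print(scan_skill_text(sample))
```

Run something like this over SKILL.md and every bundled script before installing, then read the files yourself anyway. Skills are instructions your agent will follow with your permissions.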

Recommendations for Developers

Okay, here's the part where I stop describing what's happening and tell you what I'd actually do.

When to Switch or Hybridize Tools

Don't frame this as "which tool wins." The developer workflows that will look most effective a year from now are hybrid ones — using the right tool for the right layer of work.

The one move that pays dividends regardless of which tool "wins": invest time now in writing good SKILL.md files and AGENTS.md project rules. Because the open standard has been adopted across the major tools covered here, that investment is portable. You're not writing instructions for Verdent or Codex; you're writing instructions for any compatible agent, today and in the future.

Staying Updated in the AI Developer Community

The pace of change in this space makes the traditional approach of waiting for a blog post inadequate. Here's the update loop I've settled into that's actually useful:

Watch the changelogs directly: Verdent's changelog and the Codex changelog both ship meaningful updates weekly. Reading them takes five minutes and surfaces real workflow changes before they show up in coverage articles.

Follow security research alongside feature announcements. The agentskills.io specification is the canonical reference for what the open standard allows — understanding it helps you evaluate both capabilities and risks.

The developers I've seen adapt fastest to each new agentic tool aren't the ones who follow every announcement. They're the ones who have a clear mental model of what problem each layer solves — models, orchestration, skills, subagents — and slot new tools into that model quickly. Build the model first. The tools will keep changing.