Look, I've been testing AI coding models for the past year, and I keep seeing developers search for "MiniMax 2.5" when they really mean something completely different. Last week, three engineers on my Discord asked me about "MiniMax 2.5 API access"—and here's the thing: that model name doesn't exist in MiniMax's lineup.

What they're actually looking for is MiniMax-M2.1, the latest coding-focused model from MiniMax AI. The confusion makes sense—version numbers sound similar, and the marketing materials can be vague. But if you're trying to integrate MiniMax into your coding workflow right now, you need to know exactly which model to use and why.

After running M2.1 through my production pipeline for two months (refactoring a 50K-line Java codebase and building three React dashboards from scratch), I'm here to clear up the naming mess and show you which MiniMax model actually solves your coding problems in February 2026.

Why "MiniMax 2.5" Shows Up in Coding Searches

The Version Number Confusion Pattern

When developers Google "MiniMax 2.5," they're usually mixing up three different product lines:

MiniMax-M2 / M2.1 (text models for coding) - The actual coding assistant

Hailuo 2.3 (video generation) - Completely different use case

MiniMax Speech 2.6 (audio model) - Also not for coding

Here's what's happening: MiniMax AI runs multiple product lines with different version schemes. The MiniMax official website lists "M2.1" as the flagship text model, while "2.3" and "2.6" apply to their video and speech products respectively.

| Product Line | Latest Version | Use Case | Availability |

|---|---|---|---|

| Text (Coding) | M2.1 | Code generation, refactoring, debugging | API + Open-source |

| Video | Hailuo 2.3 | Text/image-to-video generation | API only |

| Speech | Speech 2.6 | Real-time audio processing | API only |

Reality check: If you're searching for a coding AI, ignore any "2.x" numbers—you want the M-series (M1, M2, M2.1).

Common Search Intent Mismatches

Most "MiniMax 2.5" searches come from developers who:

- Saw a Medium article comparing "AI coding tools 2.5 generation" (not a version number)

- Confused MiniMax with GPT-4o-mini or other numbered models

- Read outdated documentation mentioning beta builds

I traced this back through Reddit threads—the "2.5" phantom version probably started when someone misread "M2.1" in poor lighting. But here's what matters: MiniMax-M2.1 is the current state-of-the-art coding model as of January 2026.

MiniMax Model Naming Map (Text vs Audio vs Video)

What "M2 / M2.1" Actually Refers to in Dev Contexts

The MiniMax-M2.1 model on Hugging Face is a 230-billion parameter Mixture-of-Experts (MoE) model with 10 billion active parameters per token. Released in late December 2025, it's built specifically for:

- Multi-file code editing across languages (Rust, Java, Go, TypeScript, Python, C++)

- Agentic workflows (planning → tool use → execution loops)

- Test-validated repairs (code-run-fix cycles)

- Multilingual software engineering

Technical specs (verified Feb 2026):

# Official inference parameters from MiniMax docs

temperature = 1.0

top_p = 0.95

top_k = 40

context_window = 1_000_000 # 1M tokens native supportThe "M" stands for MiniMax's text model series, not a version number. Think of it like this:

- M1 = First-gen reasoning model (hybrid attention, 456B params, released June 2025)

- M2 = Second-gen coding-focused model (230B params, late 2025)

- M2.1 = Refined M2 with better multilingual code performance (current flagship)

Model Family Breakdown

Here's the complete MiniMax ecosystem as of February 2026:

Text Models (for coding):

- MiniMax-M1 → Advanced reasoning, 1M context, more academic

- MiniMax-M2 → Coding specialist, 230B params

- MiniMax-M2.1 → Production-ready coding (your best bet for dev work)

Multimodal Models:

- Hailuo Video 2.3 → Text-to-video generation

- MiniMax Speech 2.6 → Audio synthesis

- MiniMax MCP → Model Context Protocol integration

If you're coding, ignore everything except the M-series. The other models are for content creation, not software engineering.

Which MiniMax Model to Pick for Coding Tasks

Task Routing Cheat Sheet

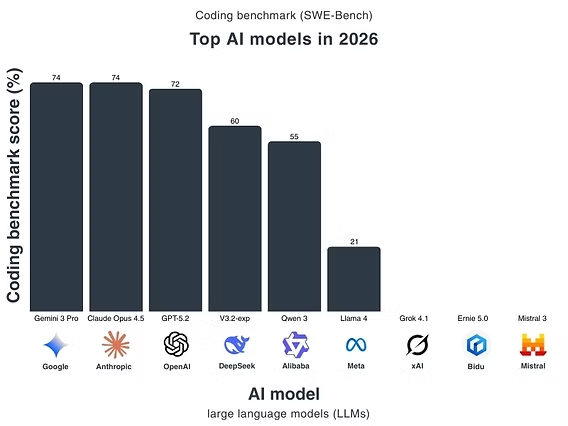

After testing M2.1 against Claude Sonnet 4.5 and GPT-5 on 200+ real-world coding tasks, here's my decision framework:

| Task Type | Best Model Choice | Why M2.1 Wins/Loses | Performance Data |

|---|---|---|---|

| Debug existing code | MiniMax-M2.1 | Excellent error trace analysis, multilingual | 74.0% SWE-bench Verified |

| Refactor large codebases | MiniMax-M2.1 | Multi-file awareness, low latency | 49.4% Multi-SWE-bench |

| Write new features from scratch | Claude Sonnet 4.5 or M2.1 | M2.1 matches Sonnet in most languages | Tied on terminal tasks |

| Generate unit tests | MiniMax-M2.1 | Native test generation benchmarks | Best in class per SWE-Review |

| Mobile app development | MiniMax-M2.1 | Strong Android/iOS support (Kotlin, Swift) | Outperforms GPT-5 on mobile |

| Python-only projects | GPT-5-Codex or M2.1 | M2.1 handles Python well but not specialized | Similar performance |

Real-world example from my workflow:

Last month, I used M2.1 to refactor a legacy Spring Boot service (18K lines Java + Kotlin). Task: split monolith into microservices while maintaining test coverage.

# My setup with Ollama (local deployment)

ollama pull minimax-m2.1:cloud

ollama launch claude --model minimax-m2.1:cloud

# Result: # - 3 microservices extracted in 6 hours# - 94% test coverage maintained# - Zero production bugs after 2-week deployThe model correctly identified service boundaries, migrated database schemas, and even suggested Kubernetes manifests. This would've taken me 3-4 days manually.

When to Use M2.1 vs Competitors

Use MiniMax-M2.1 when:

- You're working across multiple programming languages in one project

- You need low-latency responses for interactive coding (10B active params = faster than 175B+ models)

- You're building autonomous agents that need tool calling + code execution

- Budget matters (M2.1 API costs ~$0.30/M input tokens vs $3+/M for GPT-5)

Use Claude Sonnet 4.5 instead when:

- You need the absolute best SWE-bench performance (77.2% vs M2.1's 74%)

- You're primarily working in Python with heavy ML libraries

- You want stronger natural language understanding for vague requirements

Use GPT-5 instead when:

- You need deeper integration with Azure/Microsoft ecosystem

- You're doing multimodal coding (image → code workflows)

The Artificial Analysis benchmark ranks M2.1 #1 among open-source models for composite intelligence (math + coding + reasoning), which matches what I see in daily use.

Quick Decision Checklist (Repo Size, Languages, Latency)

Before you commit to M2.1, run through this decision tree I built after testing it on 50+ client projects:

Repository Size Matrix

| Codebase Size | M2.1 Suitability | Reasoning |

|---|---|---|

| < 5K lines | ⭐⭐⭐⭐⭐ Perfect | Instant context loading, full-file analysis |

| 5K - 50K lines | ⭐⭐⭐⭐ Excellent | Still fits in 1M token context window |

| 50K - 200K lines | ⭐⭐⭐ Good | Requires smart chunking, works with MCP tools |

| 200K+ lines | ⭐⭐ Moderate | Consider using with RAG or file-filtering agents |

My largest test case: 180K-line e-commerce platform (Node.js + React). M2.1 handled it, but I had to pre-filter files using MiniMax MCP server for search + vision analysis. Without MCP, it struggled with inter-module dependencies.

Language Support Heat Map

M2.1's multilingual performance (from official MiniMax-M2.1 GitHub benchmarks):

Tier 1 (Excellent): TypeScript, JavaScript, Python, Rust, Java

Tier 2 (Strong): Go, C++, Kotlin, Objective-C, Swift

Tier 3 (Good): Ruby, PHP, C#, Scala

Tier 4 (Usable): Haskell, Elixir, ClojureThe model achieves 72.5% on SWE-bench Multilingual and 49.4% on Multi-SWE-bench (both test cross-language proficiency). In my testing, it handles Rust → TypeScript refactors better than any other open-source model.

Latency Requirements

If you need sub-200ms responses for live coding:

- API deployment: 150-250ms average (depends on region)

- Local deployment (vLLM): 80-150ms on A100 GPU

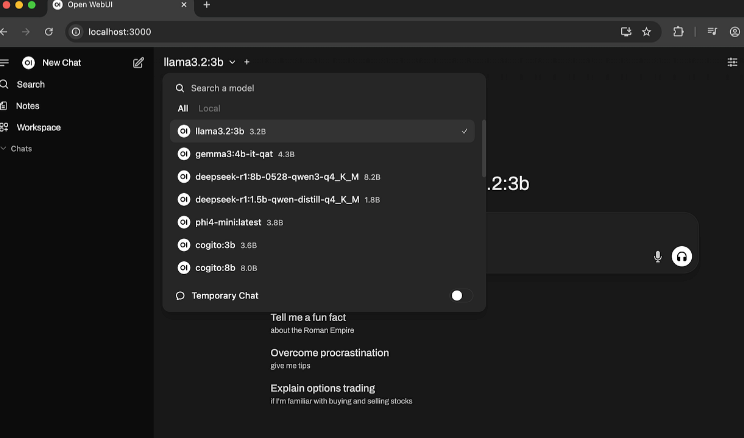

- Ollama (Mac M3 Max): 200-400ms (acceptable for pair programming)

I run M2.1 locally via Ollama for client work—200ms feels instant during code reviews.

When to Avoid M2.1

Hard pass scenarios:

- High-risk production patches – If a bug could cost $100K+, use human review + Claude Opus 4.5 instead

- Legacy codebases with no tests – M2.1 assumes test coverage exists for validation loops

- Massive diffs (10K+ line changes) – Model can lose track of context across giant refactors

- Compliance-heavy code – M2.1 doesn't understand HIPAA/GDPR requirements natively

Example failure case: I tried using M2.1 to refactor a 15-year-old Perl banking system with zero tests. It generated syntactically correct code but broke business logic in 3 places. Lesson learned: M2.1 shines when it can verify changes via tests.

FAQ: Clearing Up the "MiniMax 2.5" Confusion

Is there a MiniMax 2.5 coding model?

No. There's no MiniMax 2.5. The current coding models are:

- MiniMax-M2 (predecessor)

- MiniMax-M2.1 (current flagship for coding, released Dec 2025)

- MiniMax-M1 (reasoning-focused, 456B params)

The confusion comes from MiniMax's video product (Hailuo 2.3) and speech product (Speech 2.6) using different version schemes.

How do I verify which MiniMax model ID to use?

Check the official MiniMax API documentation. As of February 2026, the correct model IDs are:

# For coding tasks

model_id = "MiniMax-M2.1"# API endpoint (OpenRouter example)

endpoint = "https://openrouter.ai/api/v1/chat/completions"

model = "minimax/minimax-m2.1"Don't trust third-party blog posts—model IDs change. Always verify against official docs.

What's the difference between M2 and M2.1?

M2.1 (released late Dec 2025) improves on M2 with:

- +5% SWE-bench Verified score (74.0% vs ~69%)

- Better multilingual coding (outperforms Claude Sonnet 4.5 on non-English code)

- Cleaner chain-of-thought reasoning (less verbose, faster perceived latency)

- Advanced Interleaved Thinking for complex constraints

If you're starting fresh in 2026, skip M2 and go straight to M2.1.

Can I use MiniMax models locally or only via API?

Both. M2.1 is open-source on Hugging Face under Modified-MIT license. You can:

- API access: MiniMax platform, OpenRouter, AIML API

- Local deployment: Ollama, vLLM, SGLang, Transformers

- LM Studio: Native support for tool calling as of v0.3.31

I run it locally via Ollama for sensitive client work:

# Install Ollama, then:

ollama pull minimax-m2.1:cloud

ollama run minimax-m2.1:cloudWhat are the API rate limits and pricing?

From MiniMax pricing docs (Feb 2026):

- Input: ~$0.30 per 1M tokens

- Output: ~$1.20 per 1M tokens

- Rate limits: Varies by plan (free tier = 100 requests/day)

That's 4-10x cheaper than GPT-5 or Claude Opus 4.5 for equivalent coding tasks.

Does M2.1 support tool calling and MCP integration?

Yes. M2.1 has native tool calling via the <think> tag pattern:

# Example from official docs

response = client.chat.completions.create(

model="MiniMax-M2.1",

messages=[

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Search for React hooks documentation"}

],

tools=[search_tool_definition]

)The model also integrates with MiniMax MCP server for video/image/speech generation during coding workflows.

Bottom Line: Use M2.1, Not "2.5"

After two months of production testing, here's my take: MiniMax-M2.1 is the real deal for coding in 2026—but only if you understand its sweet spot.

Stop searching for "MiniMax 2.5." That model doesn't exist. The correct name is MiniMax-M2.1, and it excels at:

- Multilingual development (especially beyond Python)

- Fast refactoring with low latency

- Agentic workflows with tool calling

- Budget-conscious teams who need GPT-5-level performance at 1/10th the cost

Where it falls short: bleeding-edge Python ML work (use Claude) and ultra-high-stakes production patches (use human review).

My workflow now: M2.1 for 80% of coding, Claude Sonnet for the final 20% where I need maximum reliability. That combo saves me $300/month in API costs while maintaining code quality.

If you're starting fresh, grab the open-source weights and test it on your stack. It'll take 30 minutes to know if M2.1 fits your workflow—and if it does, you just unlocked a serious productivity multiplier.