I've been refreshing Z.ai's developer docs every morning this week like I'm tracking a software deployment. Why? Because GLM-5, Zhipu AI's rumored 745-billion-parameter flagship, is supposed to drop any day now, by February 15, 2026, just ahead of the Lunar New Year. The rumor mill is running hot, with "confirmed access" claims popping up on Reddit and Twitter, but here's what I've learned after cross-checking official sources: most of it is speculation dressed up as fact.

As someone who integrates new coding models into Verdent's testing pipeline, I can't afford to chase vapor. I need to know: Is GLM-5 actually available? Can I hit an API endpoint today? Is there a Hugging Face repo with weights I can download? Or are we still in the "trust me bro" phase of pre-release hype?

This tracker cuts through the noise. As of February 10, 2026, I'm documenting what's officially confirmed, what's reasonable inference, and what's pure speculation. If you're planning to test GLM-5 or evaluating it against Claude/GPT-5 for your team, this is your ground-truth reference.

Current GLM-5 Release Status (updated)

Official Status (February 10, 2026): GLM-5 has not been publicly released. No API access, no model weights, no developer documentation.

Here's where each claim stands:

| Status Category | Confirmation Level | Source |
|---|---|---|
| Official Announcement | ❌ None yet | Z.ai release notes show GLM-4.7 (Dec 2025) as latest |
| API Availability | ❌ Not accessible | Z.ai API docs list glm-4.7 as newest model ID |
| Open Weights | ❌ Not released | No GLM-5 repository on Hugging Face or ModelScope |
| Expected Launch Window | ✅ Feb 10-15, 2026 | Multiple industry sources cite pre-Lunar New Year timing |
| Parameter Count | ⚠️ Unconfirmed (745B cited) | Mentioned in third-party analysis, not official docs |
| Architecture | ⚠️ Likely MoE with ~44B active | Inferred from GLM-4.x progression and Chinese tech reports |

What This Means for Developers:

If you're seeing "GLM-5 API access now available" posts, be skeptical. I've traced several back to:

- Confusion with GLM-4.7 (released December 22, 2025, currently the flagship)

- Prediction markets speculating on launch dates (not actual releases)

- Unofficial aggregator sites listing GLM-5 based on leaked specs, not live access

The latest official release from Zhipu AI is GLM-OCR (February 3, 2026)—an optical character recognition model. GLM-5 itself has not been announced in any official Z.ai developer documentation.

Official sources vs speculation — what to trust

Z.ai docs, Hugging Face, GitHub signals

When evaluating GLM-5 release claims, I use this verification hierarchy:

Tier 1: Official Zhipu AI Channels (Trust Immediately)

- Z.ai Developer Documentation — docs.z.ai is the canonical source. If a model isn't listed in their API reference or release notes, it's not available.

- Zhipu AI GitHub Organization — github.com/zai-org hosts official SDKs and model repositories. GLM-4.5 and GLM-4.7 weights were released here under MIT license. GLM-5 has no repository yet.

- Z.ai Blog — z.ai/blog publishes technical deep-dives for major releases. GLM-4.7's launch included a full blog post with benchmarks. No GLM-5 post exists.

Tier 2: Verified Third-Party Integrations (Reliable When Present)

- Hugging Face — Official model cards appear at huggingface.co/zai-org. GLM-4.7 is listed; GLM-5 is not.

- OpenRouter — When models go live, they appear on openrouter.ai. Currently shows GLM-4.7 as the latest available.

- Coding Agent Frameworks — Claude Code, Roo Code, and Cline all officially support GLM-4.7. None have GLM-5 integration announcements.

Tier 3: Industry Intelligence (Directional, Not Confirmatory)

- Chinese Tech Media — Reports from sources like InfoQ and 36kr citing "internal sources" about February launch timing. These have been accurate historically but aren't confirmations.

- IPO Filings — Zhipu AI's January 2026 Hong Kong IPO raised HKD 4.35 billion, with some proceeds earmarked for "next-generation model development." This confirms investment but not release dates.

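```javascript
// How to programmatically verify GLM-5 availability.
// Assumes the models endpoint takes a Bearer token and returns either an array
// or an OpenAI-style { data: [...] } object; adjust to the live response.
const checkGLM5 = async (apiKey) => {
  // Method 1: Check the official API model list
  const response = await fetch("https://api.z.ai/api/paas/v4/models", {
    headers: { Authorization: `Bearer ${apiKey}` },
  });
  const body = await response.json();
  const models = Array.isArray(body) ? body : (body.data ?? []);
  const hasGLM5 = models.some((m) => m.id.includes("glm-5"));

  // Method 2: Check Hugging Face (the repo name "glm-5" is a guess)
  const hfResponse = await fetch("https://huggingface.co/api/models/zai-org/glm-5");
  const isOnHF = hfResponse.status === 200;

  return { apiAvailable: hasGLM5, openWeightsAvailable: isOnHF };
};

// As of Feb 10, 2026: both checks return false
```

Red flags in unofficial "GLM-5 access" claims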

I've cataloged the most common misleading signals circulating right now:

Red Flag #1: Generic AI Aggregator Sites

Sites like [random-domain].ai/models/glm-5 that list specs without API endpoints or download links. These scrape rumors and present them as facts. Key tell: they cite "745B parameters" and "44B active" but provide no Z.ai source link.

Red Flag #2: Prediction Market Activity

Manifold Markets has active betting on GLM-5's release date, with February heavily favored. This is speculation about the future, not evidence of current availability. I've seen people screenshot these markets as "proof" of release—it's not.

Red Flag #3: Confused Model IDs

Some posts claim "I'm using GLM-5 right now!" but their screenshots show glm-4.7 or glm-4.5-air in the model ID field. GLM-4.7 (released December 2025) is genuinely excellent and often mistaken for GLM-5 by users who assume "latest = 5."

Red Flag #4: "Early Access" Without Verification

Claims like "I got early API access through a partner program." Zhipu AI does run closed betas for enterprise customers before public launches (they did this with GLM-4.6), but legitimate early access users are typically under NDA and won't casually post about it. If someone's publicizing access details, it's likely misidentified.
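
Red Flag #3 is easy to catch mechanically: parse the model ID instead of eyeballing a screenshot. The sketch below assumes IDs follow the `glm-<major>[.<minor>][suffix]` shape that the current IDs (glm-4.7, glm-4.5v, glm-4.5-air) appear to use. The `parse_glm_id` and `is_glm5` helpers are my own illustrative names, not part of any Z.ai SDK.

```python
import re
from typing import NamedTuple, Optional


class GlmId(NamedTuple):
    major: int
    minor: Optional[int]
    variant: str  # "" for the base model, otherwise e.g. "v", "air", "flash"


# Assumed naming shape: glm-<major>[.<minor>][suffix]; not an official scheme.
_GLM_ID = re.compile(r"^glm-(\d+)(?:\.(\d+))?(.*)$")


def parse_glm_id(model_id: str) -> Optional[GlmId]:
    """Parse a GLM model ID; return None if it does not fit the assumed shape."""
    match = _GLM_ID.match(model_id.strip().lower())
    if match is None:
        return None
    major, minor, rest = match.groups()
    return GlmId(int(major), int(minor) if minor else None, rest.lstrip("-"))


def is_glm5(model_id: str) -> bool:
    """True only when the major version in the ID is 5."""
    parsed = parse_glm_id(model_id)
    return parsed is not None and parsed.major == 5
```

Run `is_glm5` on the exact string from the model ID field of a screenshot or API response. IDs such as glm-4.7 or glm-4.5-air come back `False`, however confident the caption is.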

How to Verify Claims Yourself:

Before trusting any GLM-5 availability claim, check these three sources in order:

- Search docs.z.ai/release-notes for "GLM-5"

- Check github.com/zai-org for a glm-5 repository

- Query the API directly:

```shell
# -s hides curl's progress meter so only matching lines are printed
curl -s -H "Authorization: Bearer YOUR_KEY" https://api.z.ai/api/paas/v4/models | grep glm-5
```

If all three come up empty, the model isn't released yet—regardless of what Twitter says.
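
If you'd rather script the three checks than run them by hand, they fold into a single verdict. This is a minimal sketch: `model_ids` and `glm5_verdict` are hypothetical helper names, and the response shape (a bare list or an OpenAI-style `{"data": [...]}` wrapper) is my assumption about the models endpoint, not something I've confirmed in the Z.ai docs.

```python
from typing import Any, Iterable


def model_ids(payload: Any) -> list[str]:
    """Extract model IDs from a models-list response.

    Assumption: the payload is a bare list of {"id": ...} objects or an
    OpenAI-style {"data": [...]} wrapper; the real shape may differ.
    """
    items = payload.get("data", []) if isinstance(payload, dict) else payload
    return [item["id"] for item in items if isinstance(item, dict) and "id" in item]


def glm5_verdict(release_notes_text: str, repo_names: Iterable[str], payload: Any) -> str:
    """Combine the release-notes, GitHub, and API checks into one verdict."""
    checks = [
        "glm-5" in release_notes_text.lower(),
        any(name.lower().startswith("glm-5") for name in repo_names),
        any(mid.lower().startswith("glm-5") for mid in model_ids(payload)),
    ]
    if all(checks):
        return "released"
    if any(checks):
        return "partial signal, keep checking"
    return "not released"
```

Requiring all three checks before returning "released" is a deliberately strict choice on my part. A single hit, such as a repo that appears before the API goes live, is treated as a reason to look again rather than as confirmation.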

API availability and model IDs

Current Official Model IDs (February 10, 2026):

| Model | ID String | Status | Use Case |
|---|---|---|---|
| GLM-4.7 | glm-4.7 | ✅ Production | Coding, reasoning, agents |
| GLM-4.7-Flash | glm-4.7-flash | ✅ Production | Free tier, high throughput |
| GLM-4.5 | glm-4.5 | ✅ Production | Reasoning, long context |
| GLM-4.5V | glm-4.5v | ✅ Production | Vision + reasoning |
| GLM-5 | ❌ No ID assigned | ⏳ Unreleased | TBD |

When GLM-5 launches, here's what the API integration will most likely look like, based on Zhipu AI's historical patterns:

```python
# Expected GLM-5 API usage (speculative, based on GLM-4.7 pattern)
from zai import ZaiClient

client = ZaiClient(api_key="YOUR_API_KEY")

response = client.chat.completions.create(
    model="glm-5",  # Likely model ID
    messages=[
        {"role": "system", "content": "You are a coding assistant."},
        {"role": "user", "content": "Refactor this function for performance."},
    ],
    thinking={"type": "enabled"},  # Preserved thinking from GLM-4.7
    temperature=0.7,
)
```

Expected Endpoint Structure:

- Base URL: https://api.z.ai/api/paas/v4/chat/completions (same as current models)

- Authentication: HTTP Bearer token (no change from existing API)

- Regional Routing: Separate endpoints for China (open.bigmodel.cn) and international users
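
To see what that endpoint structure means at the HTTP level, here's a minimal standard-library sketch that builds the request without sending it. The URL and Bearer scheme come from the list above. The OpenAI-style JSON body and the `build_chat_request` helper are my assumptions, and "glm-5" remains a guessed ID that won't resolve until launch.

```python
import json
import urllib.request

# Endpoint from the text above; the request body shape is assumed to be OpenAI-style.
CHAT_URL = "https://api.z.ai/api/paas/v4/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request with Bearer auth."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Today this targets glm-4.7; moving to a future model would be a one-string change.
request = build_chat_request("YOUR_API_KEY", "glm-4.7", "Refactor this function for performance.")
```

Passing `request` to `urllib.request.urlopen` would send it. Keeping construction separate from sending makes it easy to diff the exact payload between model IDs before spending any tokens.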

Pricing Predictions (Based on GLM-4.7 Economics):

GLM-4.7 currently costs $0.10 per million tokens (both input and output). Given GLM-5's rumored 2x parameter increase (745B total vs. 355B), we can infer:

- Optimistic scenario: $0.15-0.20 per million tokens if MoE efficiency scales well

- Conservative scenario: $0.30-0.40 per million tokens if activation costs rise

- Comparative benchmark: Still likely 5-10x cheaper than GPT-5 ($1.25/$10 input/output)

Zhipu AI historically positions pricing to undercut Western models by 80-90%, so even GLM-5 Pro tier will probably beat Claude Opus 4.5 on cost.
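
The "5-10x cheaper" figure is worth sanity-checking against the numbers above. This sketch assumes GLM-5 keeps a single flat rate for input and output, as the GLM-4.7 figure quoted here does, and uses only the scenario prices and GPT-5 prices already listed. The `cheaper_by` helper is just an illustrative name.

```python
# Prices in USD per million tokens, all taken from the scenarios above.
GPT5_INPUT, GPT5_OUTPUT = 1.25, 10.00
SCENARIOS = {
    "optimistic": (0.15, 0.20),
    "conservative": (0.30, 0.40),
}


def cheaper_by(reference_price: float, candidate_price: float) -> float:
    """Return how many times cheaper the candidate is than the reference."""
    return reference_price / candidate_price


for name, (low, high) in SCENARIOS.items():
    # The higher candidate price gives the smaller advantage, so report worst to best.
    input_range = (cheaper_by(GPT5_INPUT, high), cheaper_by(GPT5_INPUT, low))
    output_range = (cheaper_by(GPT5_OUTPUT, high), cheaper_by(GPT5_OUTPUT, low))
    print(f"{name}: {input_range[0]:.1f}-{input_range[1]:.1f}x on input, "
          f"{output_range[0]:.0f}-{output_range[1]:.0f}x on output")
```

Under these assumptions the input-side advantage ranges from roughly 3x to 8x and the output-side advantage from roughly 25x to 67x, so the blended figure depends heavily on how output-heavy your workload is.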

Open-weight release timeline and license expectations

Historical Open-Source Pattern:

Zhipu AI has released open weights for every major GLM model under permissive licenses:

- GLM-4.5 (August 2025): MIT license, available on Hugging Face within 2 weeks of API launch

- GLM-4.7 (December 2025): MIT license, weights released same day as API

- GLM-Image (January 2026): Open-sourced immediately as part of Zhipu's commitment to domestic AI ecosystem

GLM-5 Open-Weight Likelihood: 85%

Why high confidence? Three reasons:

- Strategic Positioning: Zhipu AI differentiated itself from DeepSeek and ByteDance by emphasizing open access. Their IPO materials explicitly mention "open-source leadership" as a competitive moat.

- Domestic Hardware Push: GLM-5 is reportedly trained on a 100,000-chip Huawei Ascend cluster. Releasing weights demonstrates viability of China's domestic AI stack—politically valuable.

- Developer Ecosystem: Open weights drive Claude Code, Roo Code, and Cline integrations, which boost API adoption. Zhipu makes money on inference volume, not weight secrecy.

Expected Release Sequence:

Based on previous GLM-4.x rollouts:

- API Launch (Est. Feb 10-15): Closed beta for GLM Coding Plan subscribers, then public API

- Open Weights (Est. 1-2 weeks post-launch): Base model and chat-tuned variant on Hugging Face/ModelScope

- Framework Integrations (Est. 2-4 weeks): Official support in Claude Code, Roo Code, Cline

License Expectations:

- Most Likely: MIT license (same as GLM-4.7), allowing unrestricted commercial use, modification, and redistribution

- Alternative: Apache 2.0 if Zhipu wants stronger patent protection

- Unlikely: Any GPL/restricted license—contradicts their open ecosystem strategy

How to Track Open-Weight Release:

Set up monitoring on these channels:

- GitHub Watch: Follow the zai-org organization and watch its existing GLM repositories (starring alone sends no notifications) so you notice when a GLM-5 repo appears

- Hugging Face Alerts: Follow zai-org organization for new model uploads

- RSS Feed: Subscribe to z.ai/blog RSS for official announcements
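
If you'd rather poll than wait for a notification, the Hugging Face check can be scripted. This is a rough sketch: it uses the Hub's public model listing filtered by author, and the "glm-5" name prefix is my guess at what the eventual repository will be called. The helper names are hypothetical.

```python
import json
import urllib.request
from typing import Any

# Hugging Face Hub model listing, filtered to the zai-org organization.
HF_LIST_URL = "https://huggingface.co/api/models?author=zai-org"


def fetch_org_models(url: str = HF_LIST_URL) -> list[dict[str, Any]]:
    """Fetch the organization's public model listing (performs a network call)."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return json.load(response)


def find_glm5_repos(models: list[dict[str, Any]]) -> list[str]:
    """Return repo IDs whose name part starts with "glm-5" (case-insensitive).

    The "glm-5" prefix is a guess at the eventual repository name.
    """
    hits = []
    for model in models:
        repo_id = model.get("id", "")
        name = repo_id.split("/")[-1].lower()
        if name.startswith("glm-5"):
            hits.append(repo_id)
    return hits
```

Calling `find_glm5_repos(fetch_org_models())` from a scheduled job and alerting on a non-empty result covers the Hugging Face channel. The listing may be paginated for large organizations, so treat an empty result as "not seen yet" rather than proof of absence.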

What Verdent is tracking before full integration

At Verdent, we don't integrate new models the day they launch. We have a staged evaluation protocol that typically takes 2-3 weeks post-release. Here's what we're monitoring for GLM-5:

Phase 1: API Stability (Week 1)

- Rate limit behavior: Does the API handle parallel agent requests without throttling?

- Throughput and latency: GLM-4.7 averages 55 tokens/sec; we need GLM-5 to stay above 40 tokens/sec for agentic workflows

- Error rate: Target <0.1% non-transient errors over 10,000 requests
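
The two numeric gates in Phase 1 are simple to compute from request logs. Here's a minimal sketch of that calculation. The `RequestRecord` fields and function names are illustrative rather than Verdent's actual tooling, and the thresholds are the ones listed above.

```python
from dataclasses import dataclass


@dataclass
class RequestRecord:
    ok: bool            # False for non-transient errors
    output_tokens: int  # tokens generated by the model
    seconds: float      # wall-clock generation time


def error_rate(records: list[RequestRecord]) -> float:
    """Fraction of requests that ended in a non-transient error."""
    return sum(not r.ok for r in records) / len(records)


def mean_tokens_per_sec(records: list[RequestRecord]) -> float:
    """Aggregate throughput over successful requests only."""
    good = [r for r in records if r.ok]
    return sum(r.output_tokens for r in good) / sum(r.seconds for r in good)


def passes_phase1(records: list[RequestRecord]) -> bool:
    """Apply the gates from the list above: <0.1% errors and >40 tokens/sec."""
    return error_rate(records) < 0.001 and mean_tokens_per_sec(records) > 40.0
```

Throughput here is total tokens divided by total time, not a per-request average, so a few very short responses can't skew the figure.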

Phase 2: Capability Verification (Week 1-2)

We run our internal benchmark suite covering:

- Multi-file reasoning: Can it trace dependency chains across 8+ files in a real codebase?

- Framework specificity: How well does it handle Next.js 15 App Router patterns vs. generic React?

- Tool calling accuracy: We need >90% success rate on Git operations and terminal commands

```javascript
// Example test case we'll run on GLM-5:
// Given a monorepo with TypeScript, can it:
// 1. Identify which workspace needs updating
// 2. Modify shared types without breaking imports
// 3. Update tests across multiple packages
// 4. Verify builds pass in affected workspaces
```

Phase 3: Cost-Performance Analysis (Week 2-3)

- Token efficiency: How many tokens does GLM-5 consume to complete identical tasks vs. Claude Sonnet 4.5?

- Quality-adjusted cost: If GLM-5 costs $0.20/M tokens but needs 30% more iterations to match Claude quality, its effective price is $0.26/M, so it's not as cheap as the sticker price suggests

- Thinking mode trade-offs: Does preserved thinking across turns justify the latency hit?
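
The quality-adjusted cost idea reduces to one formula: price times tokens per attempt times attempts per task. In the sketch below, the $0.20/M price and the 30% iteration overhead come from the hypothetical above. The 50,000 tokens per attempt is a made-up illustration value, and `cost_per_completed_task` is my own helper name.

```python
def cost_per_completed_task(price_per_million: float, tokens_per_attempt: int,
                            attempts_per_task: float) -> float:
    """Expected USD cost to finish one task, accounting for retries."""
    return price_per_million * tokens_per_attempt / 1_000_000 * attempts_per_task


# $0.20/M is the hypothetical GLM-5 price from the text; the token count is invented.
sticker = cost_per_completed_task(0.20, 50_000, attempts_per_task=1.0)
adjusted = cost_per_completed_task(0.20, 50_000, attempts_per_task=1.3)
print(f"sticker: ${sticker:.4f}  quality-adjusted: ${adjusted:.4f}")
```

The ratio between the two numbers is the iteration overhead itself, so the comparison against another model only holds if you measure attempts per task for both on the same task set.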

Key Decision Gates:

We'll integrate GLM-5 into Verdent's multi-agent system if:

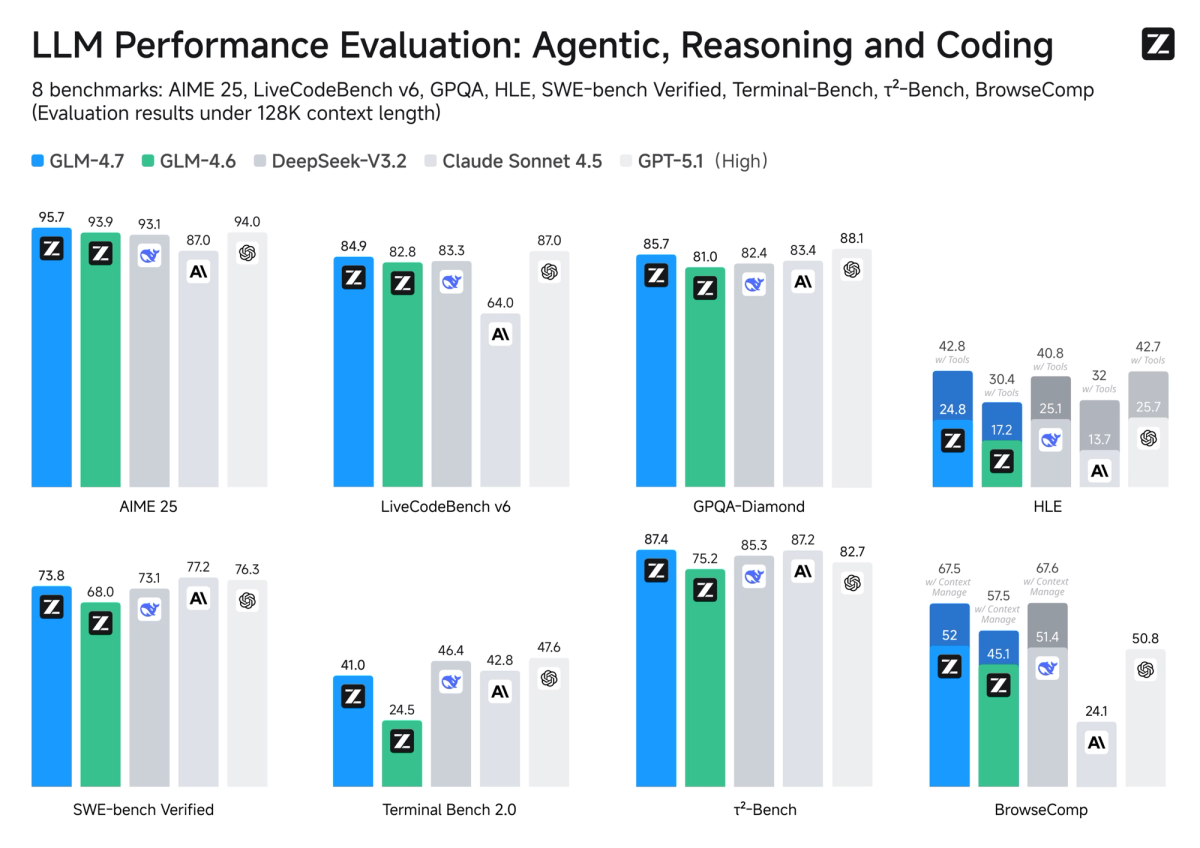

- ✅ SWE-bench Verified score ≥ 75% (GLM-4.7 baseline: 73.8%)

- ✅ Cost per completed task ≤ 50% of Claude Sonnet 4.5

- ✅ Open weights available under permissive license (for enterprise customers who need on-prem)

- ✅ Stable API for 2+ weeks (no major breaking changes)
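
Those four gates translate directly into a checklist function. This is a sketch of how such a check could be encoded, not our production code. The `Glm5Evaluation` fields are hypothetical names for the measurements each gate needs.

```python
from dataclasses import dataclass


@dataclass
class Glm5Evaluation:
    swe_bench_verified: float      # percent, e.g. 75.0
    cost_ratio_vs_sonnet: float    # cost per completed task / Claude Sonnet 4.5 cost
    permissive_open_weights: bool  # weights published under a permissive license
    stable_api_weeks: float        # weeks without major breaking changes


def failed_gates(e: Glm5Evaluation) -> list[str]:
    """Return the names of the gates that fail; an empty list means integrate."""
    gates = {
        "SWE-bench Verified >= 75%": e.swe_bench_verified >= 75.0,
        "cost <= 50% of Claude Sonnet 4.5": e.cost_ratio_vs_sonnet <= 0.5,
        "permissive open weights": e.permissive_open_weights,
        "stable API for 2+ weeks": e.stable_api_weeks >= 2,
    }
    return [name for name, passed in gates.items() if not passed]
```

Returning the failed gate names rather than a single boolean makes the eventual "why not yet" write-up trivial.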

What We're Watching Closely:

The rumored 745B total / 44B active parameter configuration suggests GLM-5 will have ~37% more active capacity than GLM-4.7 (32B active). That's not a huge jump—more evolutionary than revolutionary. The real test will be:

- Does the extra capacity improve multi-step reasoning?

- Or does it just burn more tokens for marginal gains?
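
The ~37% figure, and what it implies about sparsity, can be checked with a few lines of arithmetic. All four parameter counts are the ones quoted in this article. The GLM-5 numbers remain rumors.

```python
# Rumored GLM-5 figures vs. the GLM-4.7 figures quoted in this article (billions).
glm5_total, glm5_active = 745, 44
glm47_total, glm47_active = 355, 32

active_growth = glm5_active / glm47_active - 1  # relative increase in active parameters
total_growth = glm5_total / glm47_total - 1     # relative increase in total parameters
glm5_share = glm5_active / glm5_total           # share of weights used per token
glm47_share = glm47_active / glm47_total

print(f"active: +{active_growth:.1%}, total: +{total_growth:.1%}")
print(f"active share: GLM-5 {glm5_share:.1%} vs GLM-4.7 {glm47_share:.1%}")
```

If the rumors hold, total parameters roughly double while active parameters grow by 37.5%, so the active share per token falls from about 9% to about 6%. That's a sparser model, which is why per-token cost may not scale with the headline parameter count.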

I'll be running head-to-head comparisons on our production backlog tasks the week GLM-5 drops. If it's genuinely better than GLM-4.7 at complex refactoring, we'll fast-track integration. If it's just "bigger but not measurably better," we'll stick with 4.7 and wait for more optimization.

FAQ — access, regions, pricing tiers

Q: Can I access GLM-5 through OpenRouter or other aggregator APIs right now?

No. As of February 10, 2026, OpenRouter's Z.ai listing shows GLM-4.7 as the latest available model. OpenRouter typically adds new models within 24-48 hours of official API launch, so if GLM-5 were live, it would already be there.

Q: Will GLM-5 have region-specific restrictions like some other Chinese models?

Unlikely for the API. Zhipu AI operates two separate platforms:

- International: z.ai/model-api with api.z.ai endpoint

- China: open.bigmodel.cn with domestic endpoint

GLM-4.7 is available globally with no IP-based blocking. GLM-5 will likely follow the same model—accessible worldwide via the international API.

Q: What's the difference between GLM-5 and GLM-5-Air (if Air version releases)?

Based on GLM-4.x patterns:

- GLM-5 (rumored 745B/44B): Flagship model optimized for complex reasoning and coding

- GLM-5-Air (speculative ~200B/15B): Lighter, faster version for high-throughput scenarios

GLM-4.7-Flash (the current free tier) suggests Zhipu will offer a cost-optimized GLM-5 variant, possibly called GLM-5-Flash, targeting developers who need speed over max capability.

Q: If I'm a GLM Coding Plan subscriber ($3/month), will I automatically get GLM-5 access?

Probably, based on precedent. When GLM-4.7 launched, existing Coding Plan subscribers were auto-upgraded with a simple config change (model: "glm-4.7" in Claude Code settings). Expect the same for GLM-5—no price increase, just a model ID update.

Q: Should I wait for GLM-5 or start using GLM-4.7 now?

Use GLM-4.7 now if:

- You need a coding assistant today and 73.8% SWE-bench performance is acceptable

- You want to learn the Z.ai API before GLM-5 launches

- You're building tooling that will work with both 4.7 and 5 (API compatibility expected)

Wait for GLM-5 if:

- You're evaluating frontier models for enterprise adoption and need max capability

- Your use case requires the absolute best reasoning performance (45%+ HLE benchmark)

- You specifically need the rumored improvements in creative writing and agentic multi-step planning
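
If you go the "start with GLM-4.7 now" route, you can make the later switch automatic by selecting the model from a preference list. A minimal sketch, assuming you already have the list of model IDs the API reports. `pick_model` is a hypothetical helper and "glm-5" is still a guessed ID.

```python
from typing import Iterable, Sequence

# Preference order; "glm-5" is the guessed future ID, "glm-4.7" the current flagship.
PREFERRED_MODELS: Sequence[str] = ("glm-5", "glm-4.7")


def pick_model(available_ids: Iterable[str],
               preferred: Sequence[str] = PREFERRED_MODELS) -> str:
    """Return the first preferred model that the API currently lists."""
    available = set(available_ids)
    for model_id in preferred:
        if model_id in available:
            return model_id
    raise LookupError(f"none of {list(preferred)} are available")
```

With today's model list this resolves to glm-4.7, and it would pick up glm-5 on its own if that ID ever shows up. For production traffic you'd probably still want a manual gate before the switch takes effect.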

Q: How will I know the exact moment GLM-5 is released?

Set up alerts on:

- Twitter/X: Follow @Bayesian and @AiBattle_ who track AI releases

- GitHub: Follow the zai-org organization so you notice when a GLM-5 repository (possibly zai-org/glm-5, which doesn't exist yet) is created

- RSS: Subscribe to Z.ai blog feed

The official announcement should surface on all three channels within hours of each other, likely with a detailed technical blog post matching the GLM-4.7 launch format.

Key Takeaways

GLM-5 is not released yet as of February 10, 2026. Anyone claiming current access is either:

- Confusing it with GLM-4.7 (the actual latest model)

- Referring to closed beta programs (not public availability)

- Speculating based on pre-Lunar New Year launch rumors

What's Expected (Not Yet Confirmed):

- Expected launch window: February 10-15, 2026

- Likely architecture: 745B total parameters, 44B active (MoE)

- Open weights expected within 1-2 weeks of API launch under MIT license

- Pricing probably $0.15-0.40 per million tokens

How to Verify Release:

- Check docs.z.ai/release-notes

- Query API: glm-5 model ID must appear in models list

- Confirm on Hugging Face

For Developers: If you're planning to integrate GLM-5, prepare your testing pipeline now using GLM-4.7 as a baseline. When GLM-5 drops, you'll be able to swap model IDs and immediately start comparative evaluation. Don't trust unofficial sources—only deploy what you can verify through official channels.

I'll update this tracker within 24 hours of any official GLM-5 announcement. Bookmark and check back around February 15 as we cross the Lunar New Year launch window.