Verdent Build Sprint 2, up to 700 credits just by prompting and sharing! Join it now!

Bring the joy back to programming

Focus on creating

Your AI-native partner for the new way of building software.

Limited-time FREE trial

Download for Mac (Apple Silicon)

Clean · Fast · Good

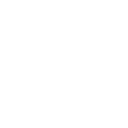

Describe what you want. Verdent handles the work, keeps you informed, and returns results you can trust.

Fast, focused collaboration with AI. No bloat. No distractions. Chat-first by design.

Advanced agent

Solid, proven performance on complex programming work.

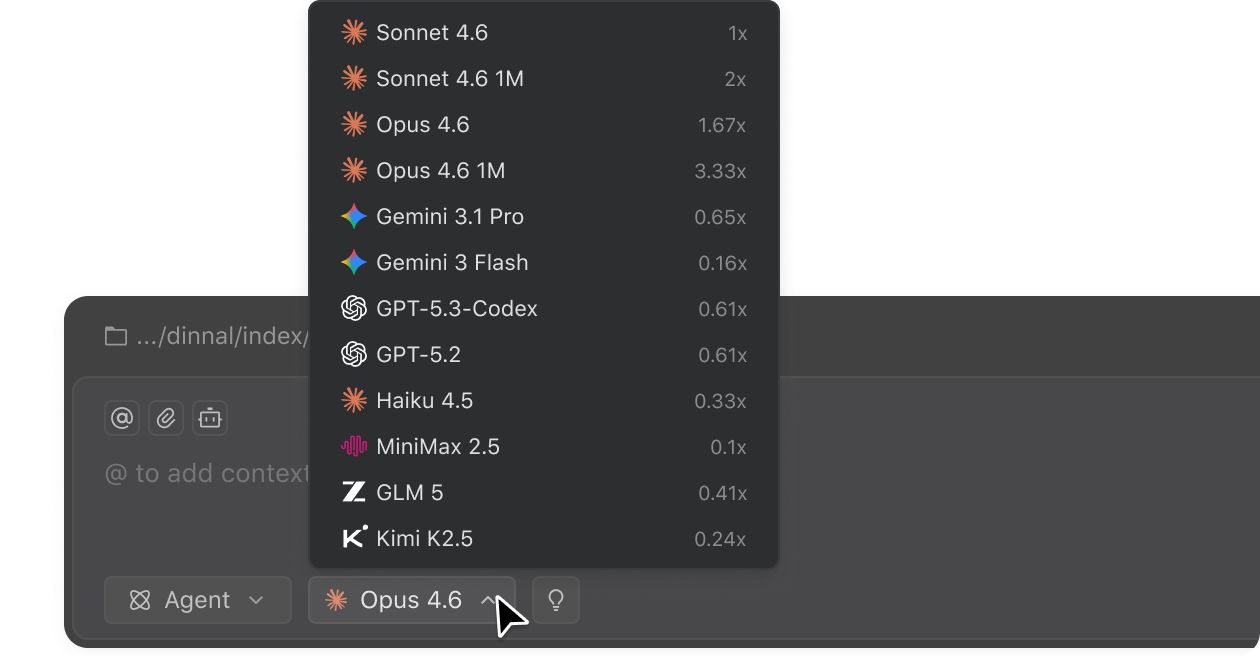

Access to leading models

Choose from today's best AI models directly within Verdent.

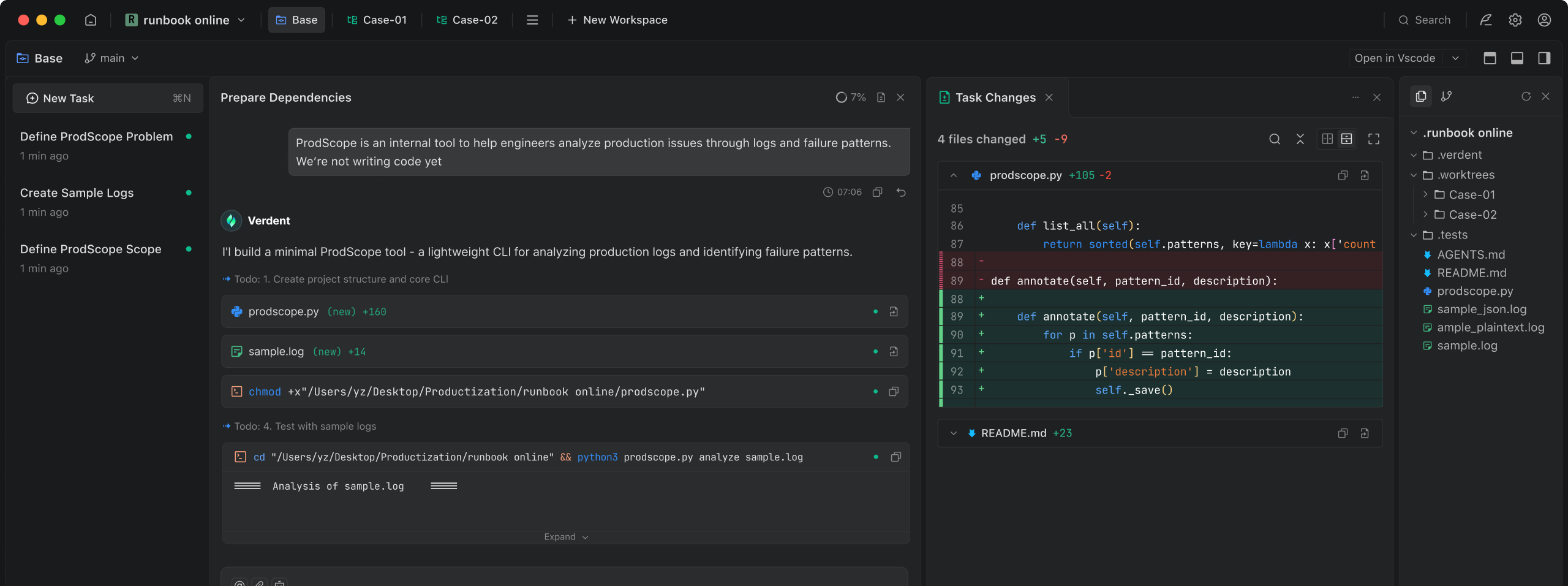

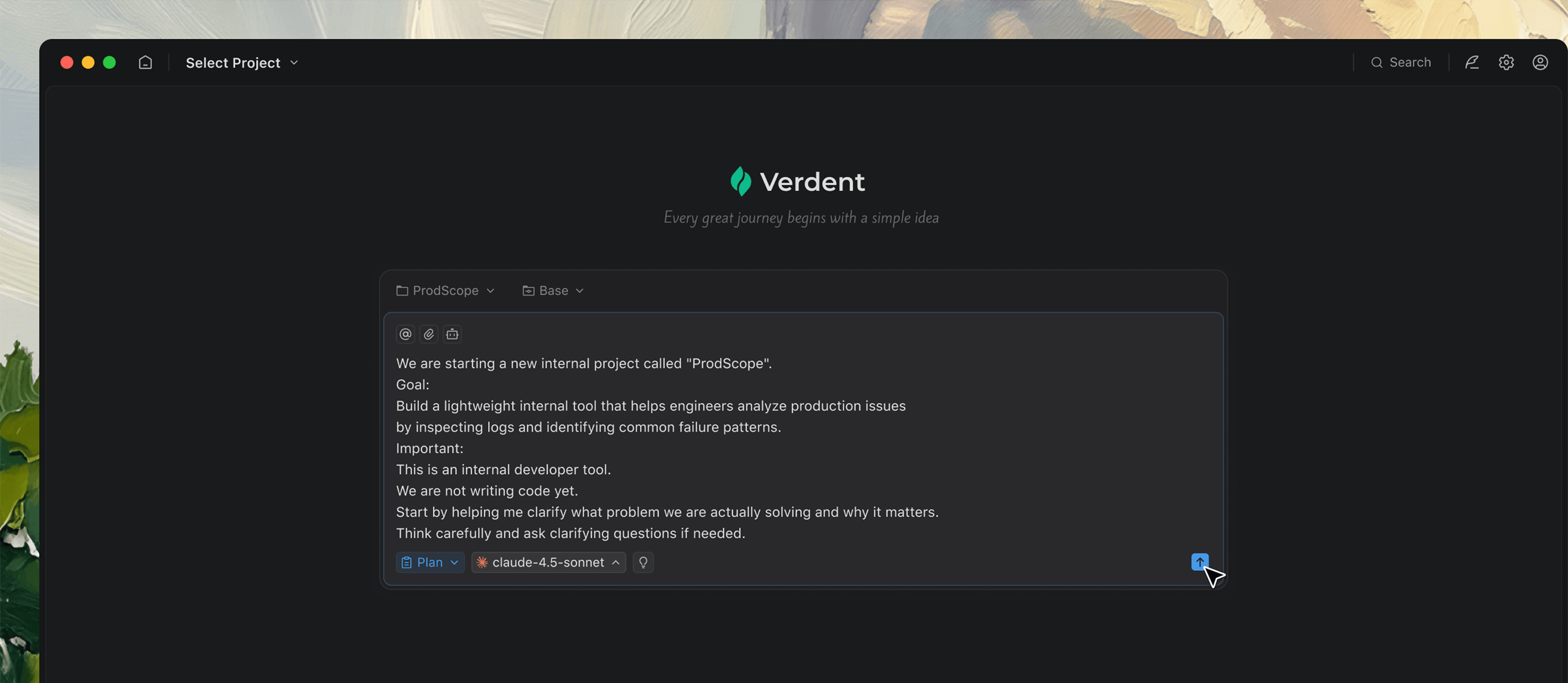

Think together

Not every task starts with a clear idea. Verdent helps you shape one you can actually use.

When your idea is still fuzzy, Verdent proactively asks questions to help you turn it into a clear task.

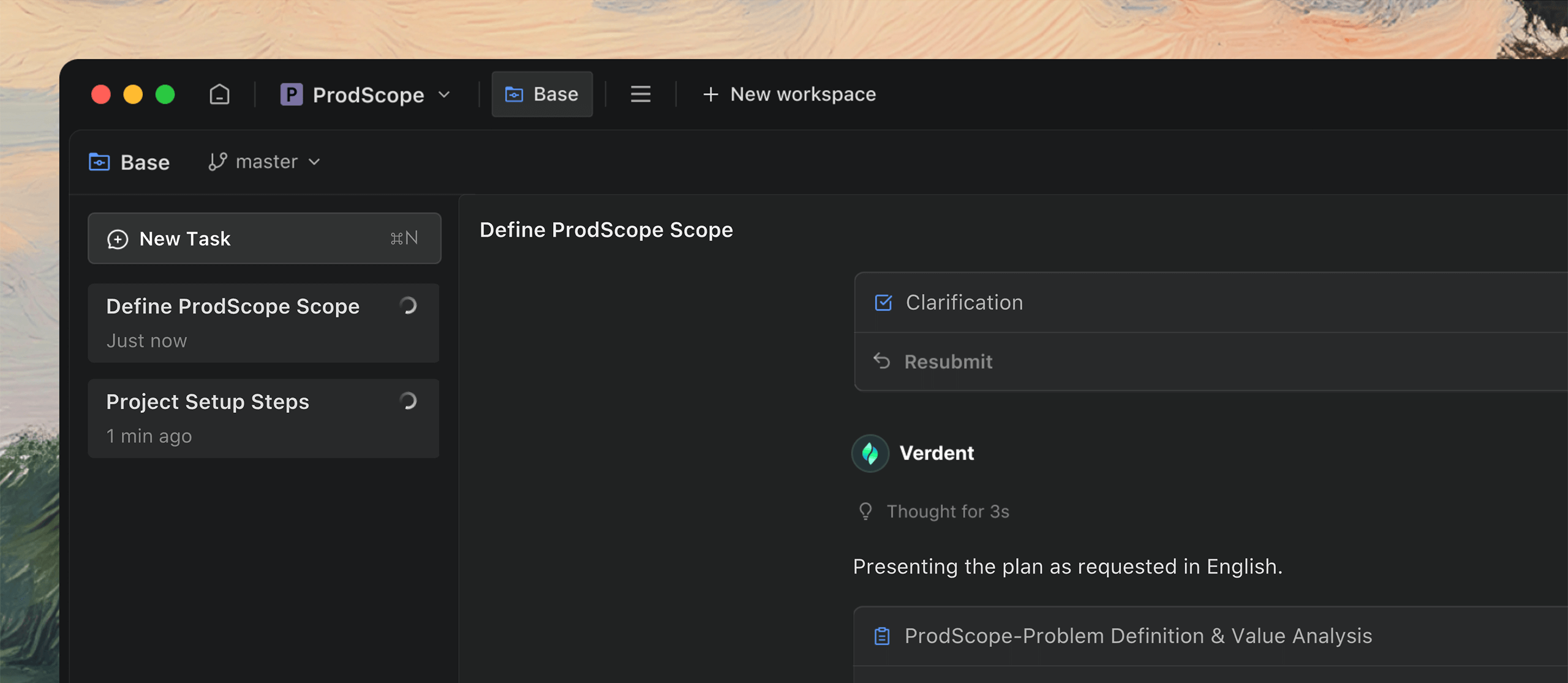

Designed for parallel work

Work rarely arrives one task at a time. Verdent keeps everything moving in parallel, so you don't have to scramble to keep up.

Create multiple tasks easily as ideas come up, so you can keep thinking while the code runs.

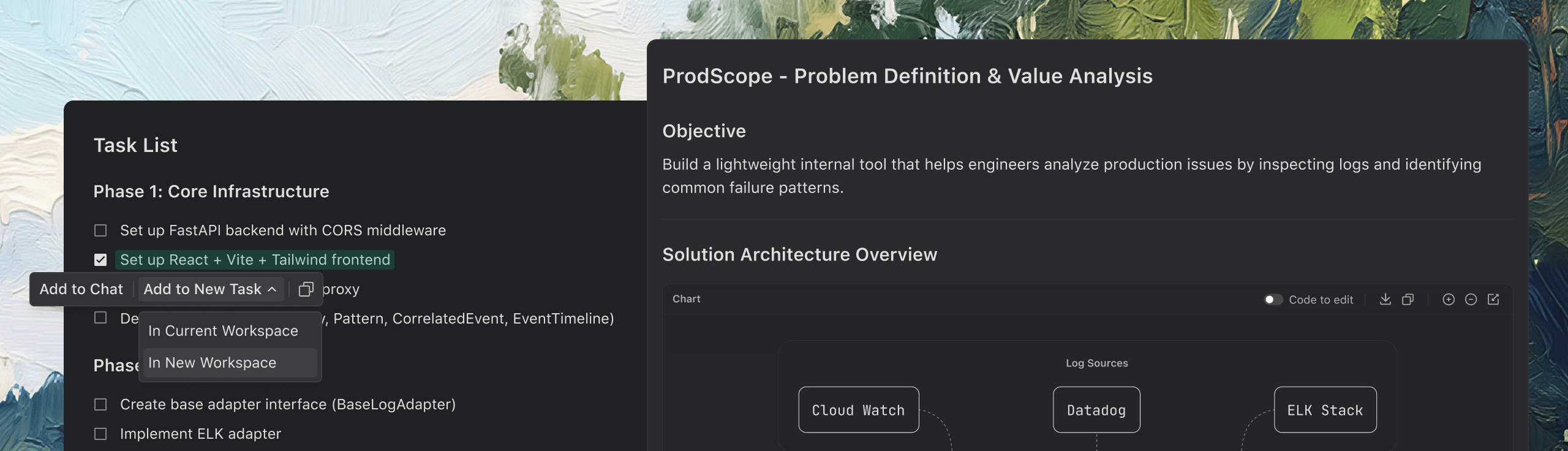

More than just programming

Verdent also handles documentation, data analysis, prototypes, and more, even outside your specialty.

It gathers the information you need and turns scattered ideas into detailed documentation.

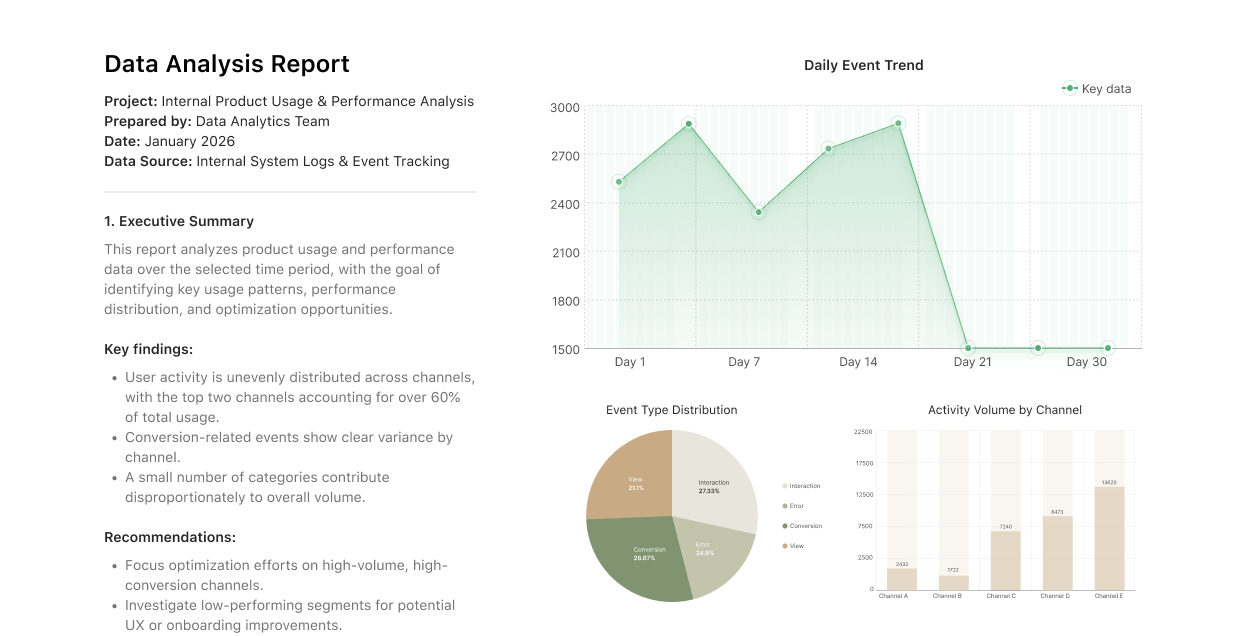

Data analysis

Easily turn large datasets into actionable insights.

Prototypes

Quickly turn your ideas into interactive demos you can present.

Trusted by

News and updates

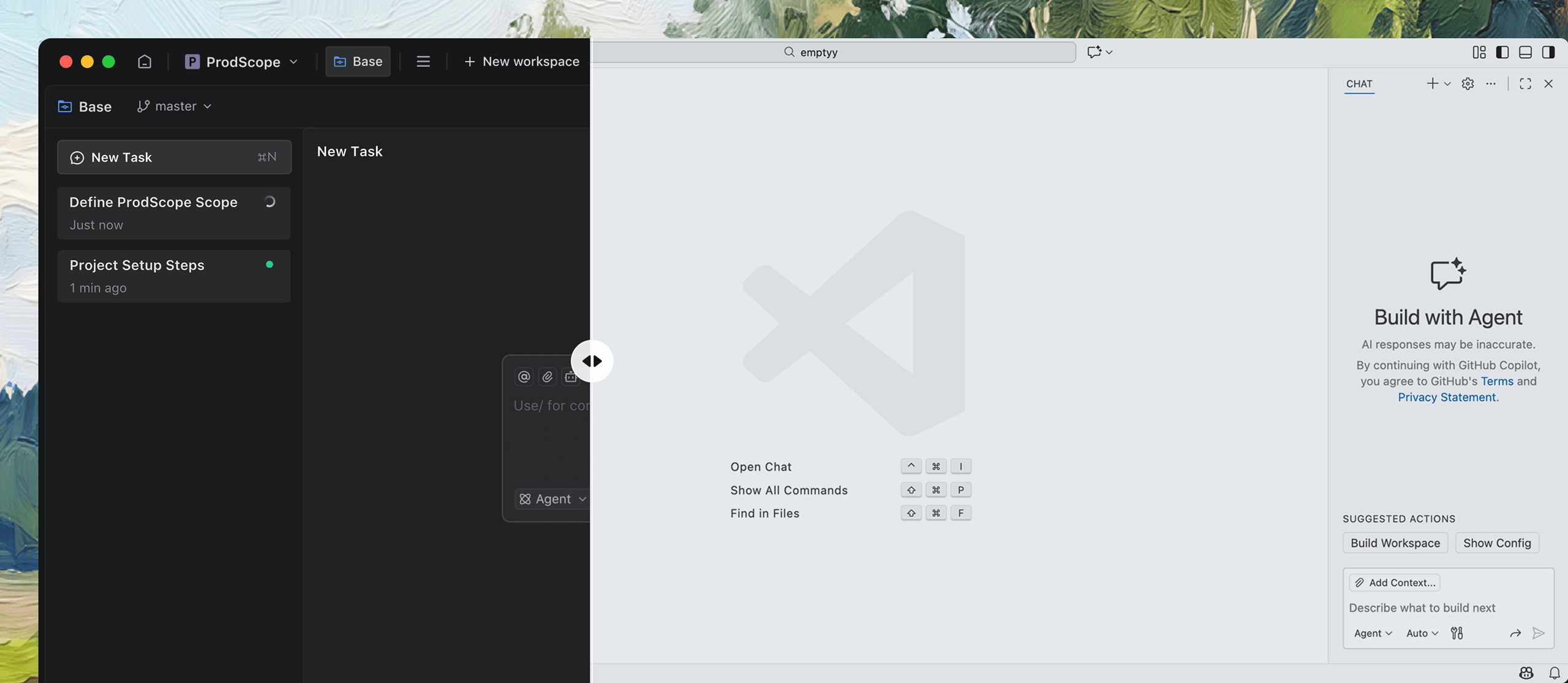

SWE-bench Verified Report

Leading the field with a 76.1% single-attempt resolved rate on SWE-bench Verified.

November 1, 2025

What impact will AI have on the software industry?

Transforming software engineering: from code assistance to AI-native infrastructure.

October 31, 2025

How do we see the four levels of AI SWE?

Turning AI coding from helper to powerhouse across the entire software lifecycle.

October 30, 2025

Focus on creating

Verdent takes care of the rest

Contact us: hi@verdent.ai

© 2026 Verdent AI, Inc. All rights reserved.

Loved by professional developers