Rediscover the joy of coding. Focus purely on creating.

An AI-native partner for a new way of building software.

Limited-time free trial

Download for Mac (Apple Silicon)

Clean · Fast · Done right

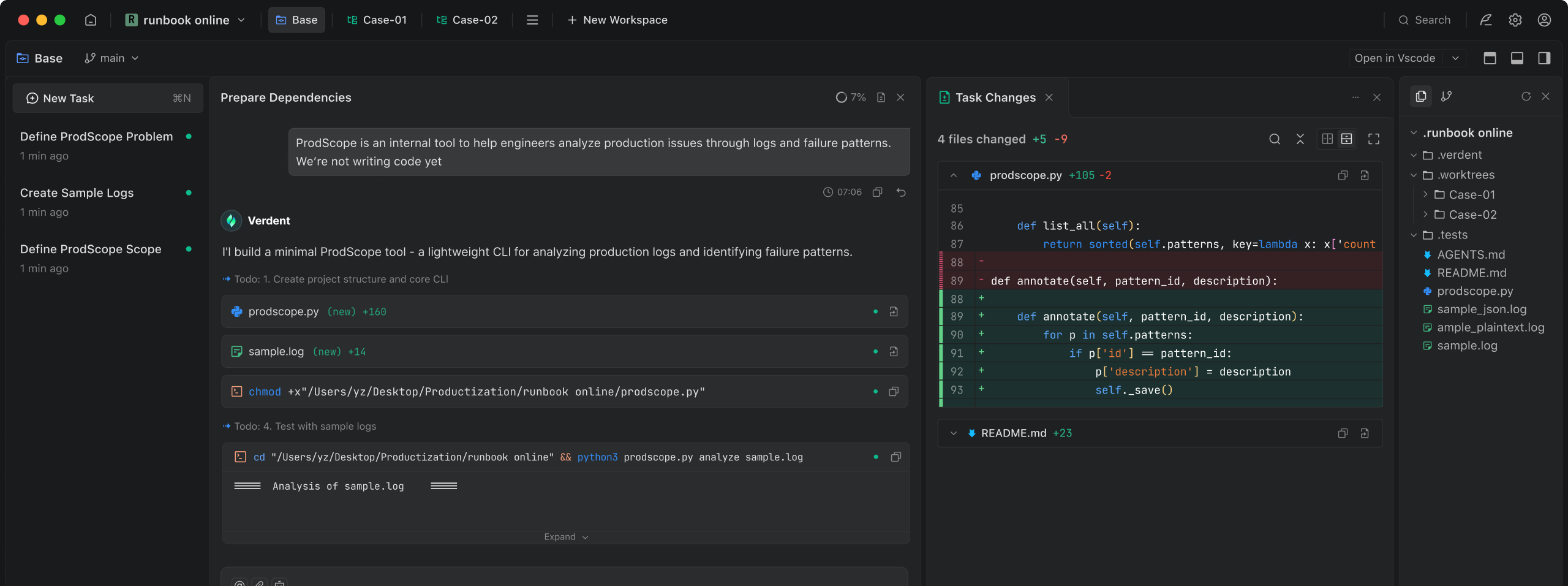

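Just describe what you want. Verdent handles the work, shares its progress, and delivers results you can trust.

Fast, focused collaboration with AI. No clutter, no distractions. A chat-first design.

Advanced agents

Delivers strong, proven performance even on complex coding tasks.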

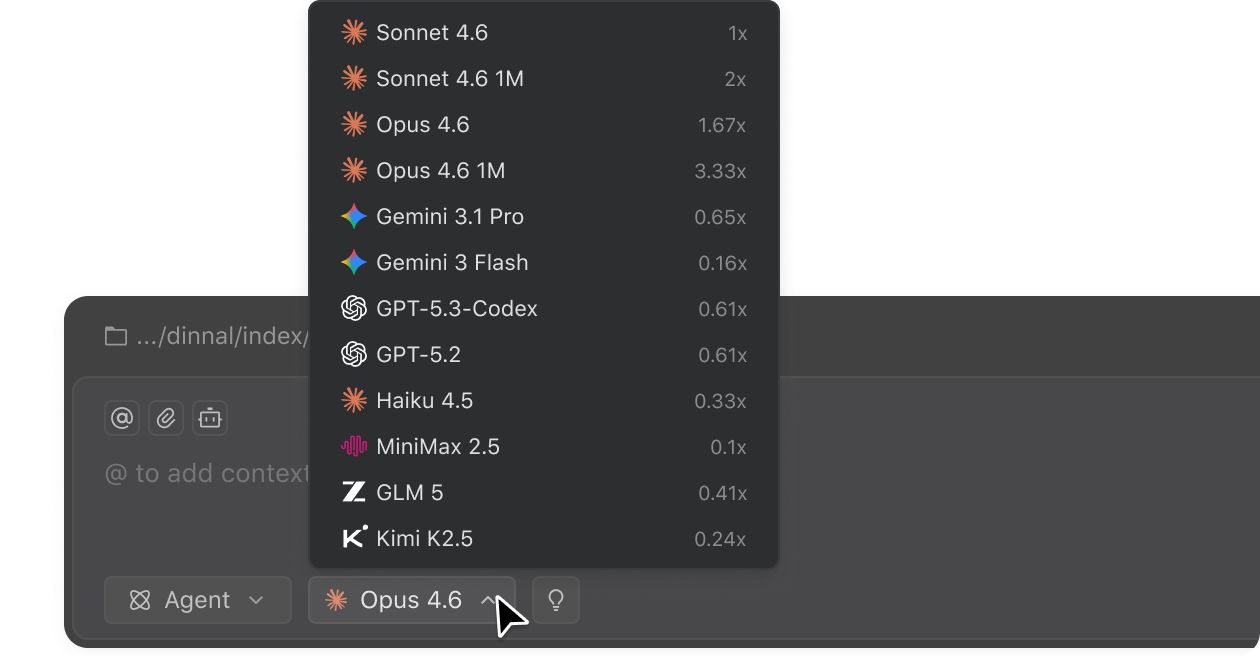

Access to leading models

Choose among today's top AI models inside Verdent.

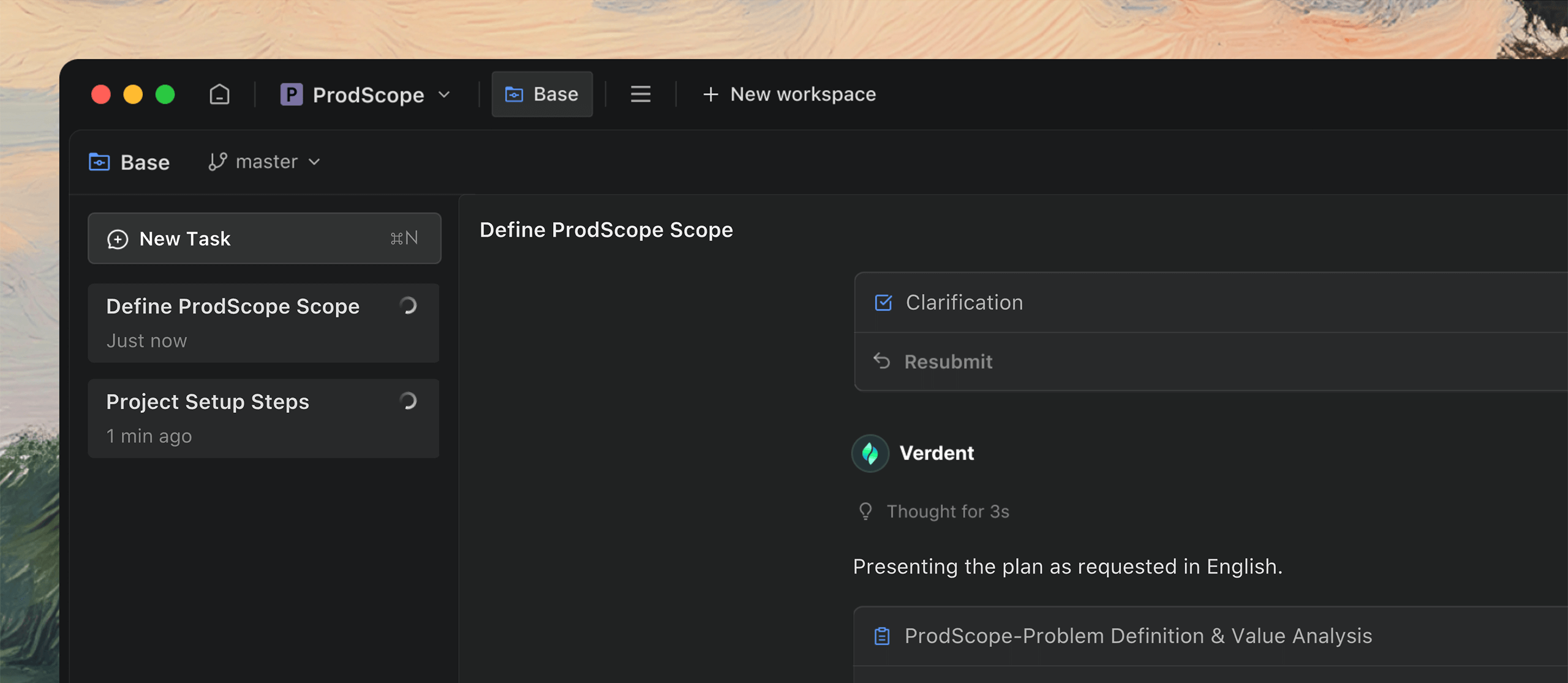

Think together

Not every task starts with a clear idea. Verdent helps turn it into one you can actually use.

When an idea is still vague, Verdent actively asks questions to help you shape it into a clear task.

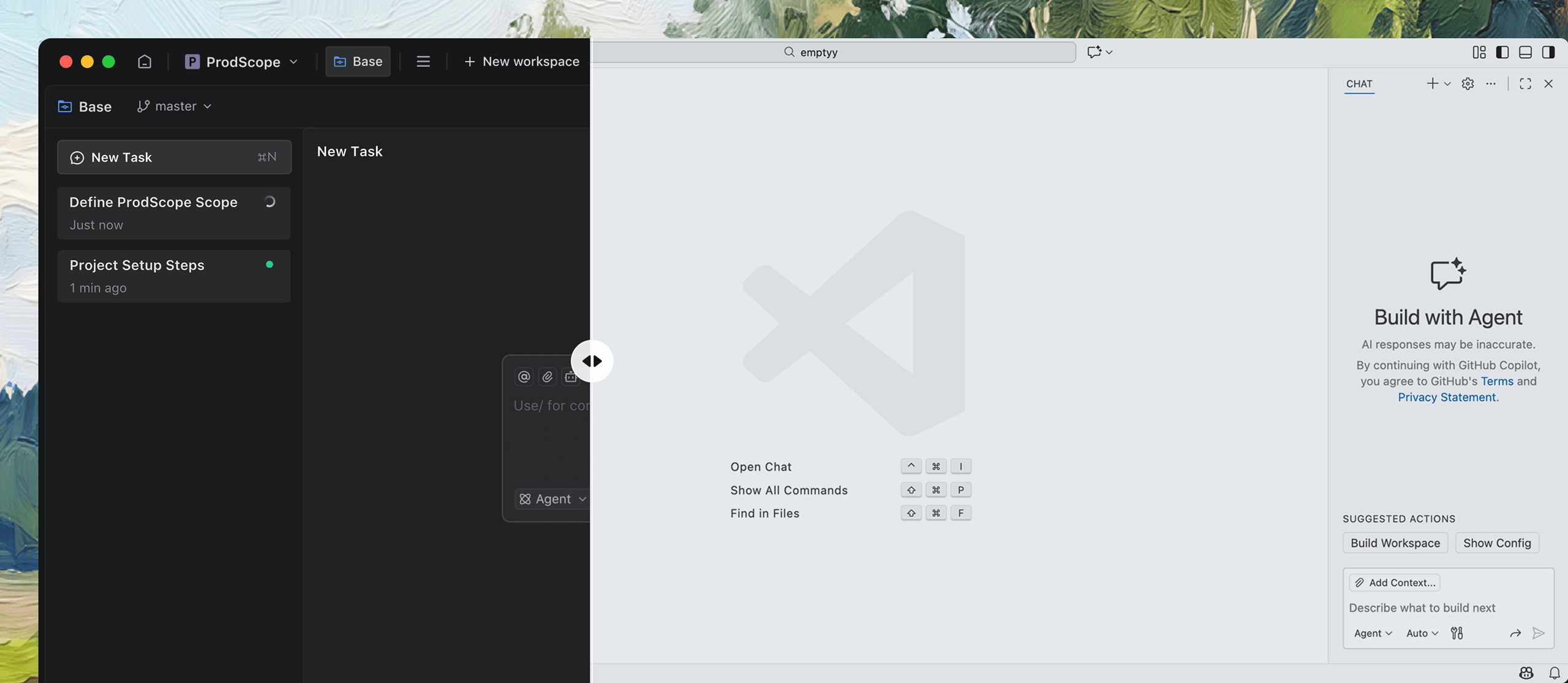

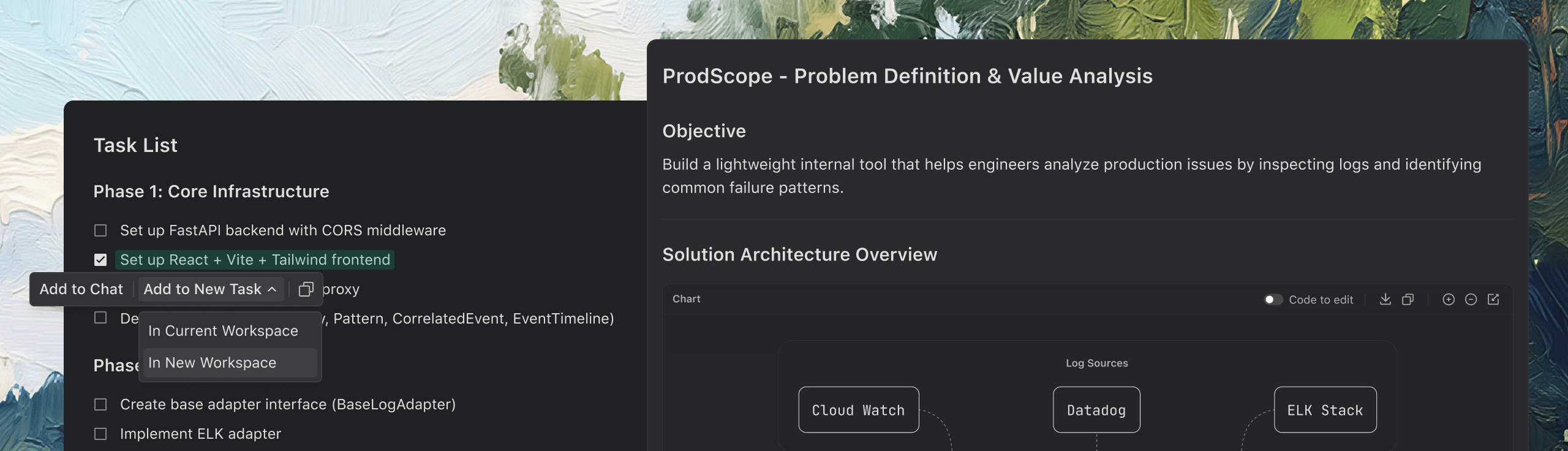

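Designed for parallel work

Tasks rarely arrive one at a time. Verdent runs everything in parallel, so you don't have to scramble to keep up.

When an idea strikes, simply create multiple tasks and keep thinking while the code runs.

More than just coding

Handles a range of tasks, including documentation, data analysis, and prototypes, even outside your area of expertise.

Gathers the information you need and turns scattered ideas into detailed documents.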

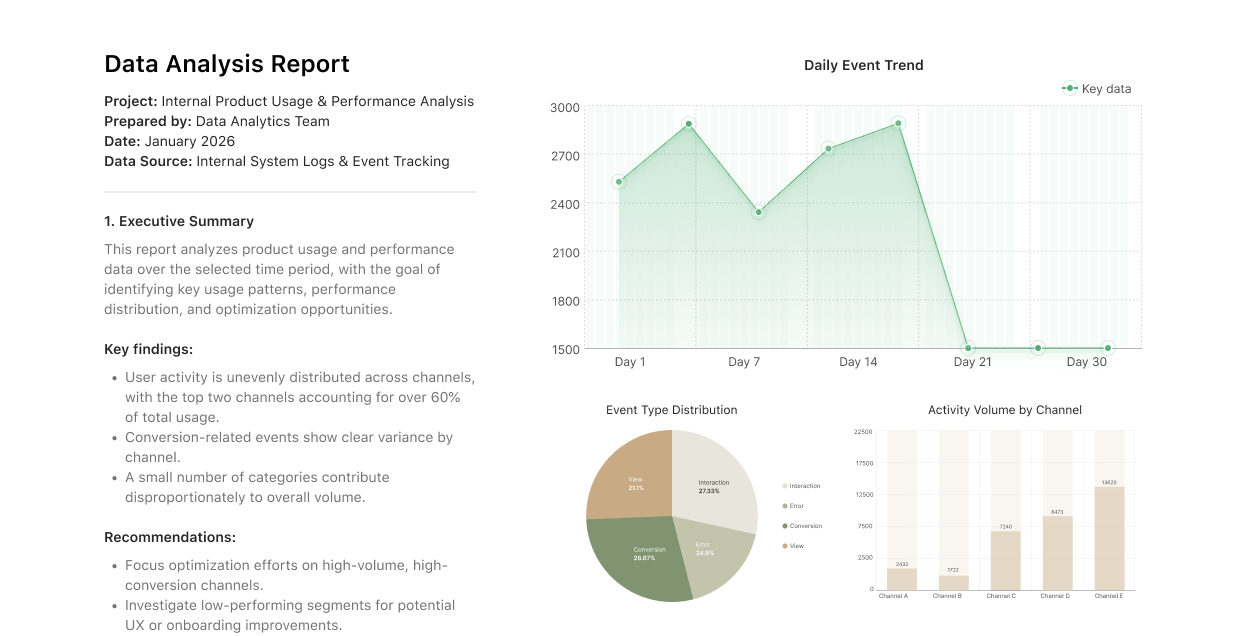

Data analysis

Easily turn large datasets into practical insights.

Prototypes

Quickly turn ideas into presentation-ready interactive demos.

Trusted

Insights and updates

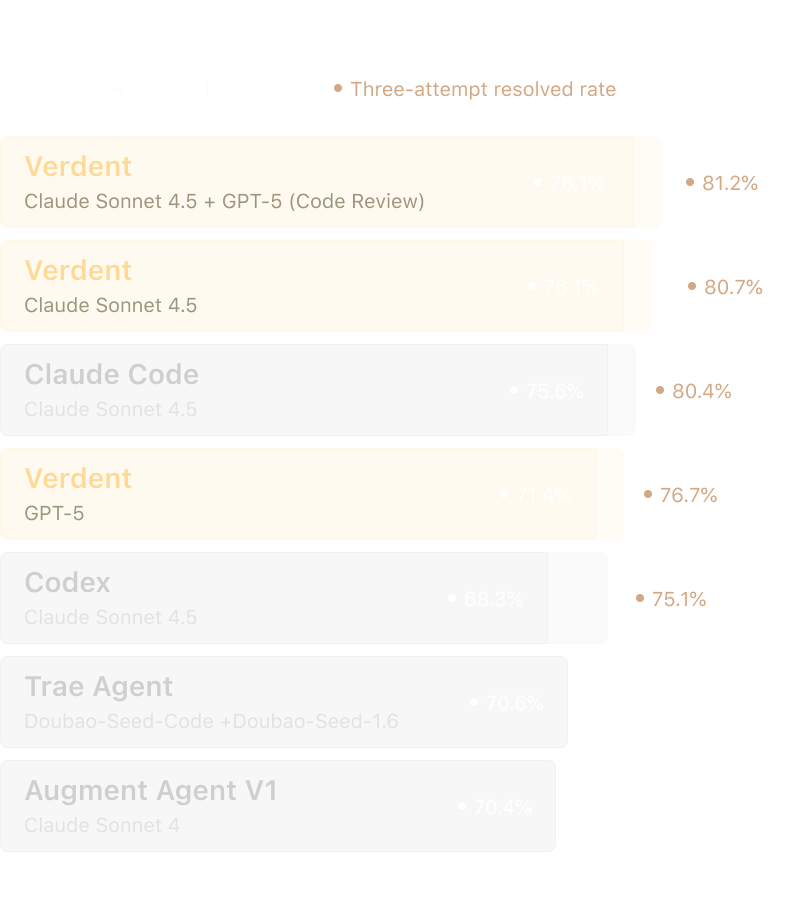

SWE-bench Verified Report

Leading the field with 76.1% single-attempt resolved rate on SWE-bench Verified.

November 1, 2025

What impact will AI have on the software industry?

Transforming software engineering: from code assistance to AI-native infrastructure.

October 31, 2025

How do we see the four levels of AI SWE?

Turning AI coding from helper to powerhouse across the entire software lifecycle.

October 30, 2025

Focus purely on creating

Verdent handles the rest

The choice of professional developers