I've been stress-testing AI coding tools in production environments for years. I don't do hype reviews. I don't do sponsored takes. I run real projects through these tools, track every dollar spent, and report what I find.

And what I've found over the past few months with Claude Code is... not great.

Anthropic has been making one baffling decision after another. The kind of decisions that make you wonder if anyone on the team is actually using their own product. So this month, I pulled the trigger — canceled my Claude Code subscription entirely and went all in on Codex.

Here's the full breakdown of what happened and why.

Anthropic's Self-Destruction Arc

Let's rewind a bit. The trouble didn't start overnight. It was a slow drip of frustrations that eventually turned into a flood.

First came the KYC verification requirements — a move that blindsided most individual developers. Then the model quality started slipping. Then the billing got weird. And through it all, Anthropic stayed quiet.

The Token Drain Nobody Could Explain

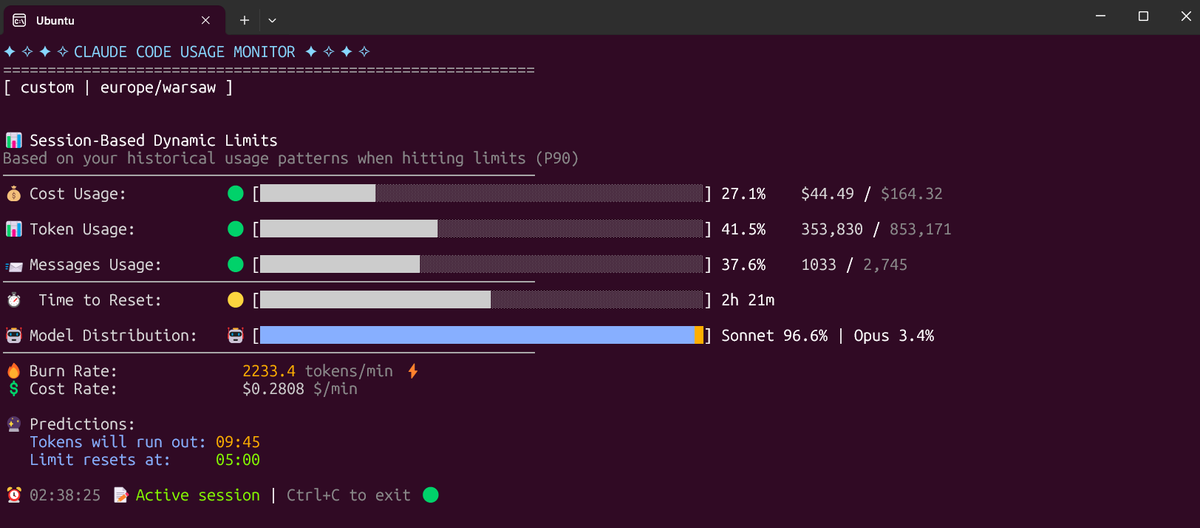

Around early 2026, Claude Code Max subscribers started noticing something was off. The same workload that used to last a full week was burning through quotas in three days. Some people had two Max plans running simultaneously, and both were drained within two weeks.

One developer reported watching 20% of their monthly allocation disappear during a single extended thinking cycle — ten minutes of the model spinning its wheels, and a fifth of the budget gone. Another got hit with $1,200 in extra usage charges over 48 hours. Fifteen minutes of bug fixing? Ten dollars.

Anthropic's Claude Code lead, Thariq, jumped on Twitter to do damage control, offering to review individual session logs one-on-one. The replies turned into a wall of complaints.

What the Logs Actually Showed

After collecting session data from frustrated users, the Claude Code team published their findings. The verdict: most of the mysterious token drain wasn't a bug. It was the architecture working as designed — just in ways nobody expected.

The #1 token killer: subagents.

Every time Claude Code spins up a subagent — a Task tool, a Plan/Explore agent, a custom subtask — it opens an entirely new session. No shared context with the parent. Independent token consumption. You think you hit Enter once. Behind the scenes, five subtasks are running extended thinking in parallel, each eating tokens independently.

One user's logs showed 169 subagent sessions in a single week. Total consumption: 226 million tokens. Average per session: 850,000 tokens. That's not a typo.

The #2 killer: automation scripts.

A monitoring task running every five minutes sounds harmless. A few thousand tokens per run. But over 24 hours, it compounds into something absurd. Watchers, auto-deploy hooks, custom harnesses — all of them quietly munching through your subscription even when you're not actively coding.
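To put a rough number on that compounding, here's a back-of-the-envelope sketch. The 3,000 tokens per run is my stand-in for "a few thousand", not a measured value, and `daily_tokens` is just an illustrative helper.

```python
def daily_tokens(interval_minutes: int, tokens_per_run: int) -> int:
    """Tokens consumed in 24 hours by a job that fires on a fixed interval."""
    runs_per_day = (24 * 60) // interval_minutes
    return runs_per_day * tokens_per_run


# Assumption: 3,000 tokens per run stands in for "a few thousand".
runs = (24 * 60) // 5
per_day = daily_tokens(interval_minutes=5, tokens_per_run=3_000)
print(f"{runs} runs/day, {per_day:,} tokens/day, {per_day * 30:,} tokens/month")
# 288 runs/day, 864,000 tokens/day, 25,920,000 tokens/month
```

Under those assumptions, one "harmless" watcher lands in the tens of millions of tokens per month before you've typed a single prompt.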

Multi-agent orchestration overall consumed roughly 7x the tokens of a normal session. Long conversations without compacting meant the context window ballooned with every new message. Frequent project switches forced full history reloads.
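The context ballooning is easiest to see with a toy model. This sketch assumes every request resends the full history and every turn adds the same number of tokens. It ignores prompt caching and compaction, so treat it as an upper-bound illustration with made-up numbers, not a billing formula.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens across a conversation in which each request
    resends the whole history and each turn adds tokens_per_turn to it."""
    return sum(turn * tokens_per_turn for turn in range(1, turns + 1))


# Assumption: 2,000 new tokens per turn, purely illustrative.
short_chat = cumulative_input_tokens(turns=10, tokens_per_turn=2_000)
long_chat = cumulative_input_tokens(turns=100, tokens_per_turn=2_000)
print(f"{short_chat:,} vs {long_chat:,}")
# 110,000 vs 10,100,000
```

Ten times as many turns costs roughly ninety times as many input tokens in this model, which is why skipping compaction hurts so much on long sessions.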

And the dashboard? A black box. No per-message breakdown. No subagent call chain visualization. No way to understand where the money went.

The community got fed up and built their own tools. A developer named kieranklaasse published a Claude token analysis script that reads the JSONL logs from ~/.claude/projects/, breaks down token usage by project, session, and subagent, and surfaces the first human prompt in each session so you can cross-reference whether the task was worth the cost.

python3 token_analysis.py

# or just the last 7 days

SINCE_DAYS=7 python3 token_analysis.py

The fact that a random developer on GitHub had to build the transparency tool that Anthropic should have shipped from day one? That tells you everything.
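If you want a feel for what that kind of analysis involves, here's a minimal sketch of per-session token tallying. To be clear, this is not kieranklaasse's script. The record shape (`sessionId`, `message.usage.input_tokens`, `message.usage.output_tokens`) is my assumption about the JSONL layout, and `tally_usage` and `tally_directory` are hypothetical names, so check a real log line before trusting it.

```python
import json
from collections import defaultdict
from pathlib import Path


def tally_usage(lines):
    """Sum input/output tokens per session from JSONL log lines.

    Assumed record shape (not confirmed, verify against your own logs):
    {"sessionId": "...", "message": {"usage": {"input_tokens": 1, "output_tokens": 2}}}
    """
    totals = defaultdict(lambda: {"input": 0, "output": 0})
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip corrupt or partially written lines
        if not isinstance(record, dict):
            continue
        message = record.get("message")
        usage = message.get("usage") if isinstance(message, dict) else None
        if not isinstance(usage, dict):
            continue  # records without usage data (e.g. user prompts)
        session = record.get("sessionId", "unknown")
        totals[session]["input"] += usage.get("input_tokens") or 0
        totals[session]["output"] += usage.get("output_tokens") or 0
    return dict(totals)


def tally_directory(root):
    """Apply tally_usage to every *.jsonl file under root (empty if missing)."""
    base = Path(root).expanduser()
    lines = []
    if base.is_dir():
        for path in sorted(base.rglob("*.jsonl")):
            with path.open(encoding="utf-8", errors="replace") as handle:
                lines.extend(handle)
    return tally_usage(lines)


# Tiny in-memory example using the assumed record shape.
sample = [
    '{"sessionId": "a", "message": {"usage": {"input_tokens": 10, "output_tokens": 5}}}',
    '{"sessionId": "a", "message": {"usage": {"input_tokens": 1, "output_tokens": 2}}}',
]
print(tally_usage(sample))
```

Pointing `tally_directory("~/.claude/projects")` at your own logs would give a per-session breakdown, assuming the field names above actually match what's on disk.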

The "Accidental" Degradation That Got Fixed Real Fast

This is the part that genuinely pissed me off.

Starting around March, Claude Code users noticed something had changed. Responses were less coherent. Task quality dropped. Code output got measurably dumber. Nobody at Anthropic acknowledged anything.

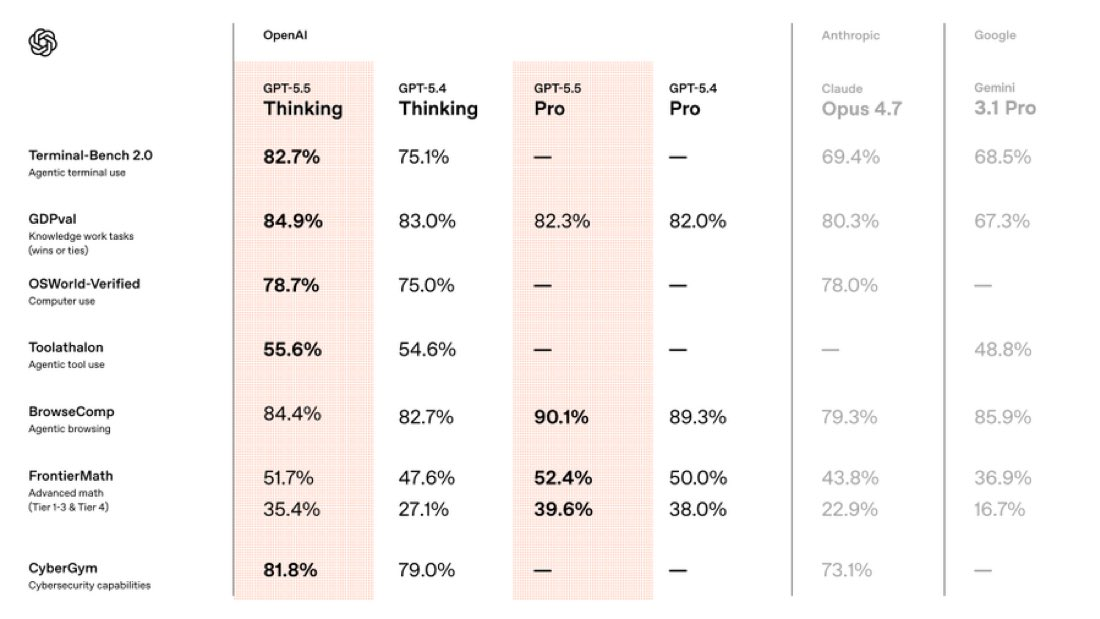

Then on April 24th, OpenAI released GPT-5.5.

The numbers were impressive: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, and per-token latency holding steady against the previous generation. Most of the headline metrics beat Claude Opus 4.7.

And here's the plot twist: within hours of that launch, Anthropic's official developer account posted a tweet admitting that Claude Code had been suffering from quality degradation for the past month. They announced it was fixed in v2.1.116+ and — in an unprecedented move — reset usage limits for all subscribers.

The root cause? Tasks that were supposed to run in High mode had been accidentally configured to run in Medium mode. For an entire month. And nobody at Anthropic caught it.

This was the second time a competitor model launch coincided with Anthropic suddenly "discovering" and fixing a performance issue. Once is bad luck. Twice starts to feel like a pattern. Makes you wonder — if GPT-5.5 hadn't dropped, would we still be running on the degraded version?

The biggest contribution of the GPT-5.5 launch might just be this: you finally got to use the full-power Claude you were paying for all along.

The Silent Feature Removal

As if that wasn't enough, Anthropic quietly pulled Claude Code access from the $20/month Pro plan. No announcement. No blog post. No email. Just a staged rollout that removed the feature for a chunk of users without warning.

That's not how you treat paying customers. Period.

Why GPT-5.5 and Codex Changed the Equation

Let me walk through the numbers that matter, because there's real substance behind the hype.

Efficiency Over Raw Power

OpenAI's messaging around GPT-5.5 wasn't "look how smart we are." It was "look how much less it costs to get the same result." On the Artificial Analysis composite intelligence index, GPT-5.5 consumed roughly half the tokens of competing frontier coding models at equivalent intelligence levels.

That's not a benchmarking footnote. That's a direct hit to your monthly bill.

Latency Didn't Get Worse

This one surprised me. Bigger, smarter models usually mean slower responses. GPT-5.5's per-token latency matched GPT-5.4 in production serving. OpenAI's team analyzed weeks of Codex production traffic and applied optimized load balancing with partitioned heuristics to push token generation speed up 20%.

Quick tip: turn on Fast mode in Codex. The difference is noticeable.

Agent Capability Jump

Terminal-Bench 2.0 tests complex command-line workflows — planning, iteration, tool coordination. GPT-5.5 scored 82.7%. Claude Opus 4.7 scored 69.4%. Not close.

OSWorld, which measures a model's ability to independently operate real computer environments, was tighter: GPT-5.5 at 78.7% vs Opus 4.7 at 78.0%.

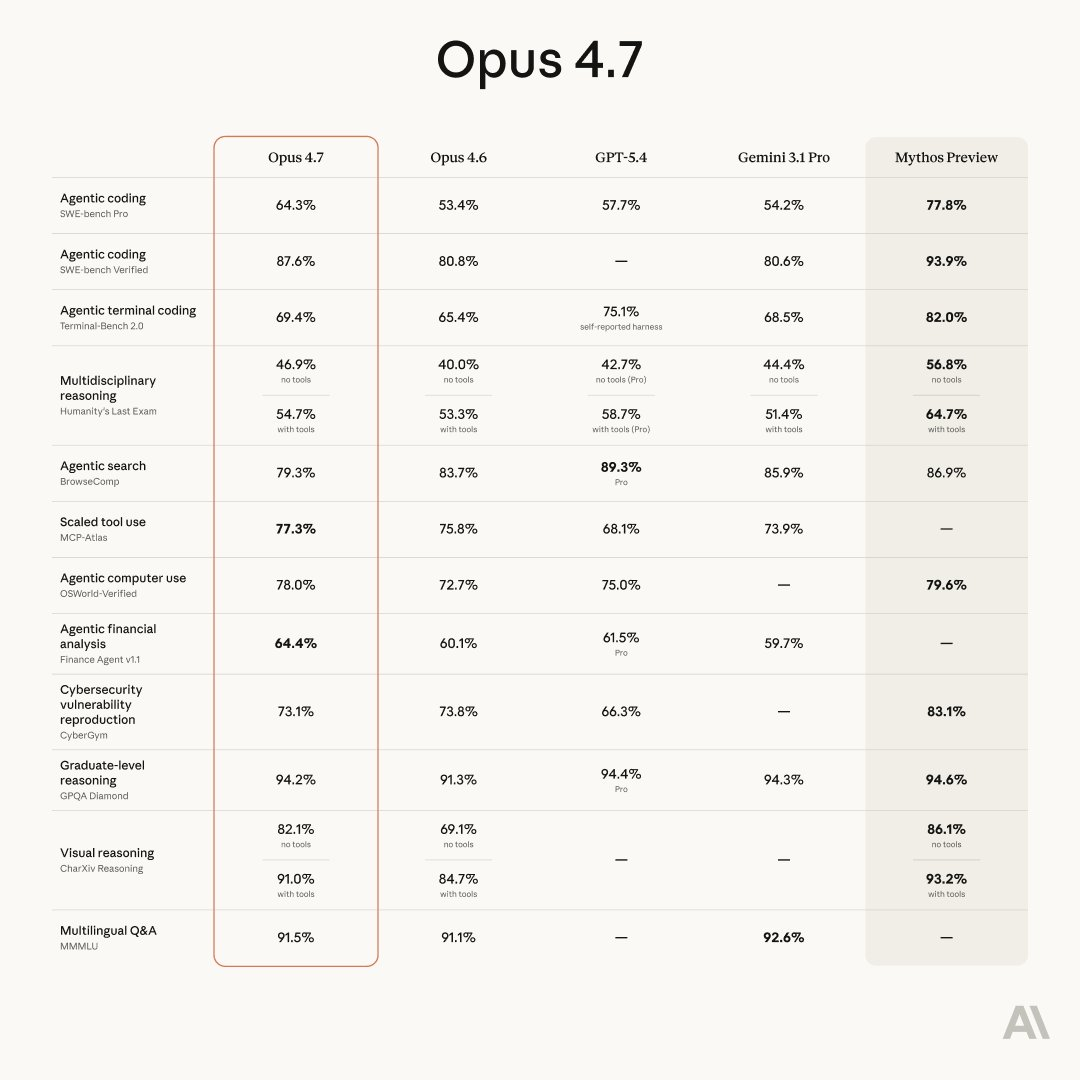

On SWE-Bench Pro, Opus 4.7 still leads with 64.3% vs GPT-5.5's 58.6%. But OpenAI flagged memorization concerns with that benchmark in their own results table, so take that gap with a grain of salt.

The Research Signal

GeneBench, which tests multi-stage genetics data analysis, saw GPT-5.5 hit 25.0% (up from 19.0% for GPT-5.4). OpenAI also mentioned that an internal GPT-5.5 build with a custom harness helped discover a new proof related to Ramsey numbers, verified in Lean.

That's not "chatbot does party tricks." That's real mathematical contribution.

Pricing Reality

API standard pricing: $5 per million input tokens, $30 per million output tokens, with a 1 million token context window. The GPT-5.5 Pro tier runs $30 per million input and $180 per million output. Sticker prices are up. But because task completion requires fewer tokens, the actual per-task cost increase is smaller than the rate card suggests.
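Here's the arithmetic behind that last point, as a rough sketch. The $5 and $30 rates are the ones quoted above. Everything else is hypothetical: the "old" rates of half that, the task size, and the 30% token reduction are placeholders I picked to show the shape of the calculation, not measured figures.

```python
def task_cost(input_tokens, output_tokens, input_rate, output_rate):
    """Dollar cost of one task; rates are USD per million tokens."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# Hypothetical baseline: half the new rates, 200k input / 20k output tokens.
old = task_cost(200_000, 20_000, input_rate=2.5, output_rate=15.0)

# Quoted GPT-5.5 rates, with an assumed 30% reduction in tokens per task.
new = task_cost(140_000, 14_000, input_rate=5.0, output_rate=30.0)

print(f"old ${old:.2f}, new ${new:.2f}, increase {new / old:.2f}x")
# old $0.80, new $1.12, increase 1.40x
```

In this made-up scenario the rate card doubles but the per-task bill goes up 1.4x. Plug in your own token counts to see where you actually land.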

Codex in Practice: What Sold Me

I've been running Codex CLI daily for the past month. Here's what made the difference in my actual workflow.

Speed is king for iteration loops. When I'm debugging, I need fast feedback cycles. Codex in Fast mode gives me tight turnaround without the ten-minute thinking spirals I was seeing in Claude Code.

China-region friendly. This matters to a significant chunk of the developer community. No account bans. No IP anxiety. OpenAI has been remarkably chill about access, and they regularly reset weekly quotas without anyone asking. Compare that to Anthropic's increasingly aggressive account restrictions.

The desktop app is actually good now. The Codex app has been getting multiple updates a day. Computer Use, built-in browser, SSH: these are shipped features, not roadmap promises. The interaction design feels like someone at OpenAI actually uses the tool for real work.

Migration from Claude Code is embarrassingly easy. This deserves its own section.

Migrating from Claude Code to Codex: The Full Walkthrough

I was bracing for a painful transition. Custom instructions, skills, MCP configurations, session history — I had a lot of stuff built up in Claude Code over months of use.

Turns out OpenAI already thought about this. They published a detailed migration guide, and the tooling is baked directly into the Codex app.

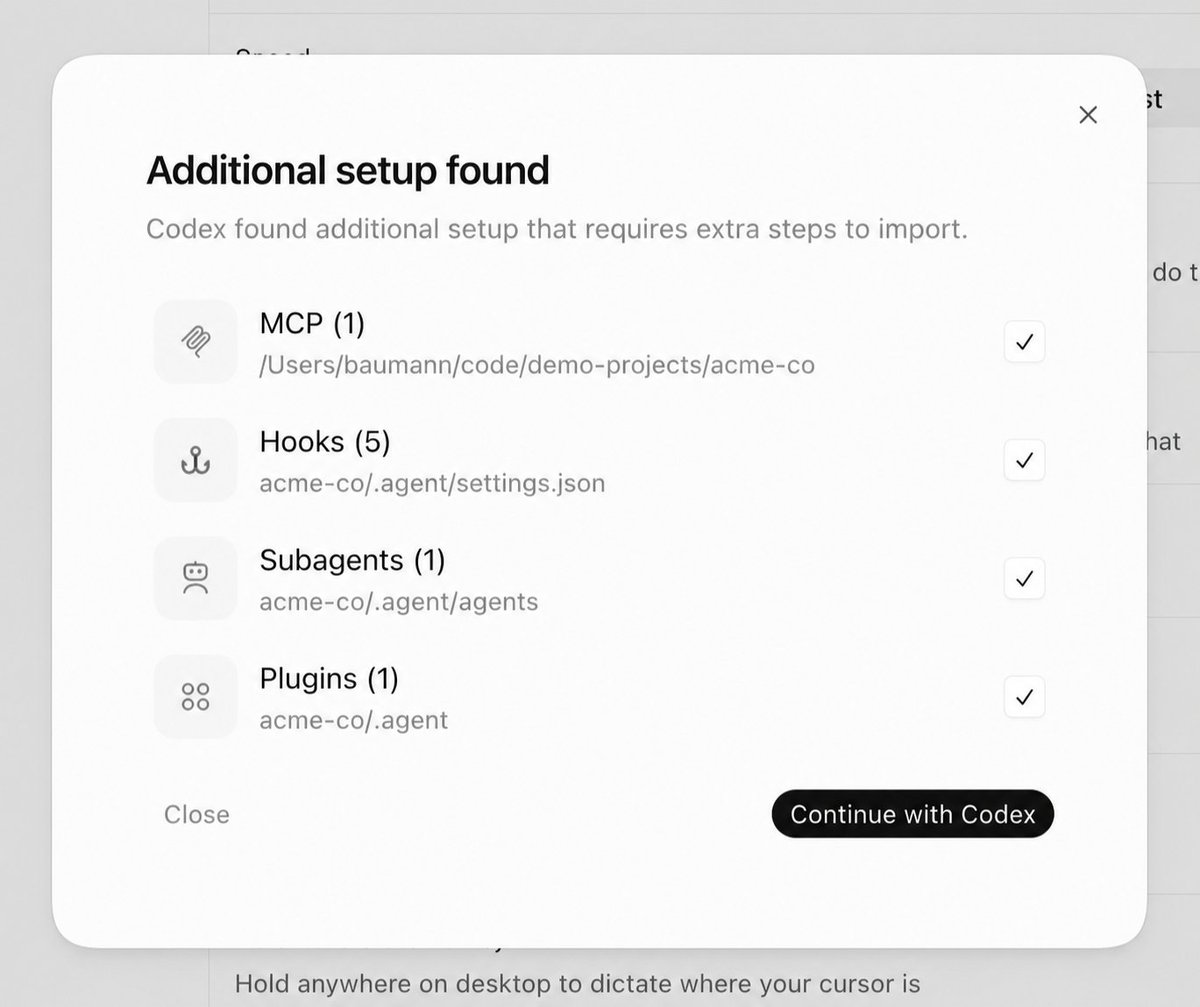

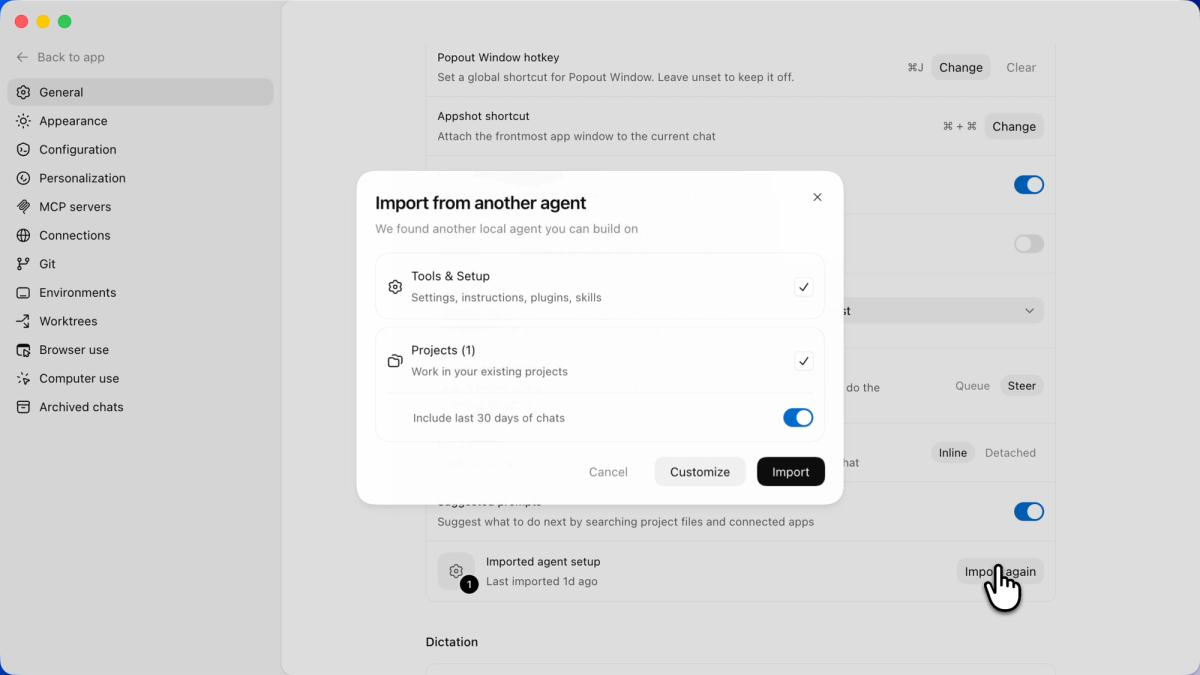

Method 1: Built-In Import

Open Codex settings. Go to the General page. Find "Import other agent setup." Click Import. Select what you want to migrate.

That's it. I'm not exaggerating. The whole process is smoother than transferring data to a new phone.

The coverage is thorough:

- Instruction files and configuration

- Skills

- Last 30 days of session history

- MCP server configurations

- Hooks

- Slash commands

- Sub-agents

- Settings file conversion (settings.json → config.toml)

They basically reverse-engineered Claude Code's entire setup structure and built a one-click converter. Brutal competitive move.

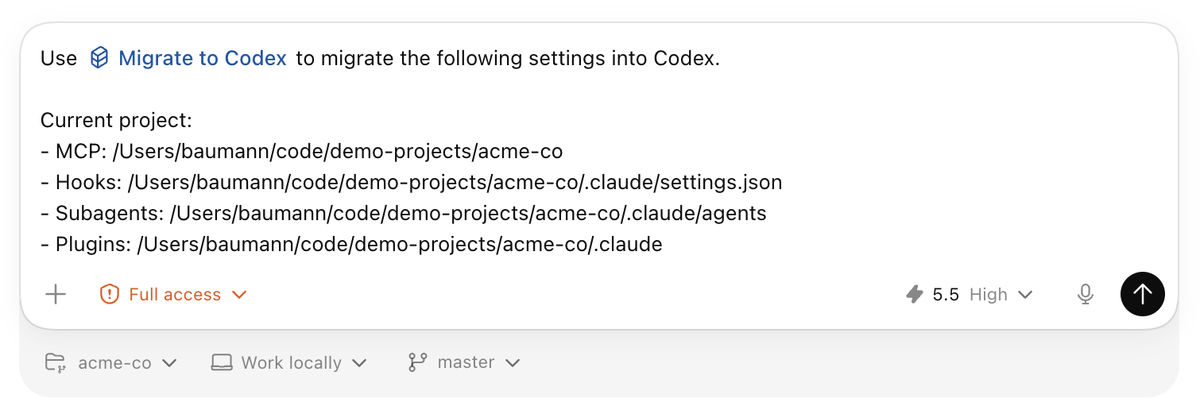

Method 2: The migrate-to-codex Skill

For edge cases where the automatic import doesn't have a clean mapping, Codex ships a dedicated skill called migrate-to-codex. When activated, it spins up a separate thread, lists everything that wasn't automatically handled, and walks you through manual resolution.

The skill distinguishes between user-level and project-level configurations, which is especially useful if you're a CLI-only user managing multiple repos.

Post-Migration Checklist

Don't just trust the import blindly. I'd recommend verifying these after migration:

- Tool permissions in skills and agent configs — these don't always map 1:1

- MCP configurations with custom auth or environment variables — paths and secrets need manual re-entry

- Hooks that depend on specific file paths or behaviors — semantics may shift

- Prompt templates that reference Claude-specific parameters or file structures

Took me about 20 minutes of manual checking after the auto-import. Not bad for a full toolchain migration.
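Part of that checking can be scripted. Here's a small sketch for the last item on the checklist, flagging lines in a prompt template that still mention Claude-specific names. The pattern list is illustrative and my own assumption about what's worth flagging, and `find_claude_references` is a hypothetical helper, not part of any Codex tooling.

```python
import re

# Illustrative patterns only; extend or trim these for your own setup.
CLAUDE_MARKERS = [r"CLAUDE\.md", r"\.claude/", r"\bclaude\b"]


def find_claude_references(text, patterns=CLAUDE_MARKERS):
    """Return (line_number, stripped_line) pairs matching any pattern."""
    compiled = [re.compile(pattern, re.IGNORECASE) for pattern in patterns]
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if any(regex.search(line) for regex in compiled):
            hits.append((number, line.strip()))
    return hits


template = "Read CLAUDE.md first\nRun the tests\nSee ~/.claude/projects/ for logs"
for number, line in find_claude_references(template):
    print(f"line {number}: {line}")
```

It won't catch semantic differences in how a hook or permission behaves, but it's a quick way to find the obvious leftovers before they bite.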

My Setup Going Forward

Let me be transparent about what my stack looks like now.

Primary coding agent: Codex with GPT-5.5. This handles all my heavy lifting — refactoring, feature development, debugging, test generation. Fast mode stays on unless I'm doing deep architectural planning.

Claude Code via third-party access: still in the mix. I canceled the official Anthropic subscription, but I'm still using Claude through third-party providers for specific workflows that are deeply integrated. Some things are hard to rip out overnight, and Claude's raw intelligence on certain reasoning tasks is still strong.

Claude free tier for daily chat. For non-coding conversations, research questions, document review — the free web version of Claude handles it fine. No need to pay for that.

Codex is the main event now. It's where I spend 80%+ of my coding time. The speed, the transparency, the stability, the lack of billing surprises — it all adds up.

The Bottom Line

If you're still subscribing to Claude Code and feeling the friction — the opaque billing, the unexplained quality swings, the features disappearing without notice — I'd seriously recommend giving Codex with GPT-5.5 a real shot.

The migration is painless. The performance is there. The pricing is competitive. And honestly? OpenAI just seems to care more about keeping developers happy right now. Regular updates. Transparent communication. No account ban anxiety.

Anthropic built something remarkable with Claude. The model itself is still impressive. But the product wrapping around it — the billing, the trust, the communication — that's where they've lost me.

I don't say this lightly. I used Claude Code daily for months. But when a tool starts costing me money I can't track, running at half power without telling me, and removing features I'm paying for without a word?

That's not a partner. That's a liability.

Give Codex a try. Start with the CLI or the desktop app. Run one real project through it. You'll feel the difference.

And if you're having trouble with Codex payment access, there are subscription options available through alternative channels that work globally.