I've wasted more hours reviewing bad AI-generated plans than I'd like to admit. You know the pattern — you tell your coding agent "build me X," it spits out a 2,000-word brainstorming doc, you read through it, half of it's wrong, you correct it, it regenerates, and now you've burned 40 minutes before a single line of code was written.

Last month I stumbled onto a two-tool combo that completely flipped this process. Five lines of prompt text for the first tool. A single CLI command for the second. Together they've cut my planning overhead by roughly 70%, and the code that comes out the other end is closer to what I actually wanted on the first pass.

Let me walk you through it.

The Real Problem With Vibe Coding

Here's a thing that bugs me about how most people talk about AI coding: the bottleneck isn't code generation. These models can write code all day. The bottleneck is that the code they write isn't what you had in mind.

And it's usually your fault, not theirs.

You have this fuzzy vision in your head. You give the agent a vague prompt. The agent fills in the blanks with its own assumptions. You get the output and think "no, that's not what I meant." Rinse, repeat.

The standard fix is a brainstorming or planning phase — have the agent draft a proposal, you review it, iterate, review again. Tools like superpowers build this into their workflow.

The problem? These planning phases are heavy. Long outputs. Slow iterations. And you're spending serious cognitive energy evaluating whether the agent's proposal is right — which is almost as hard as just building the thing yourself.

What if the agent didn't propose anything? What if it just... asked you?

Grill-Me: 5 Lines That Changed How I Start Every Task

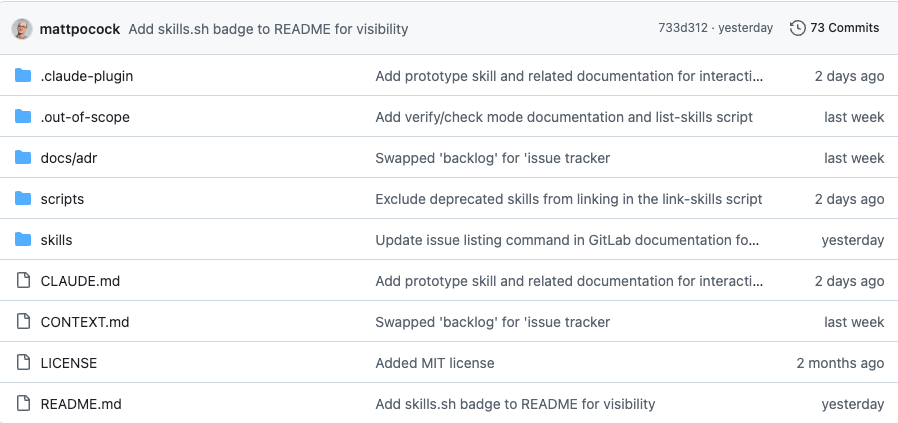

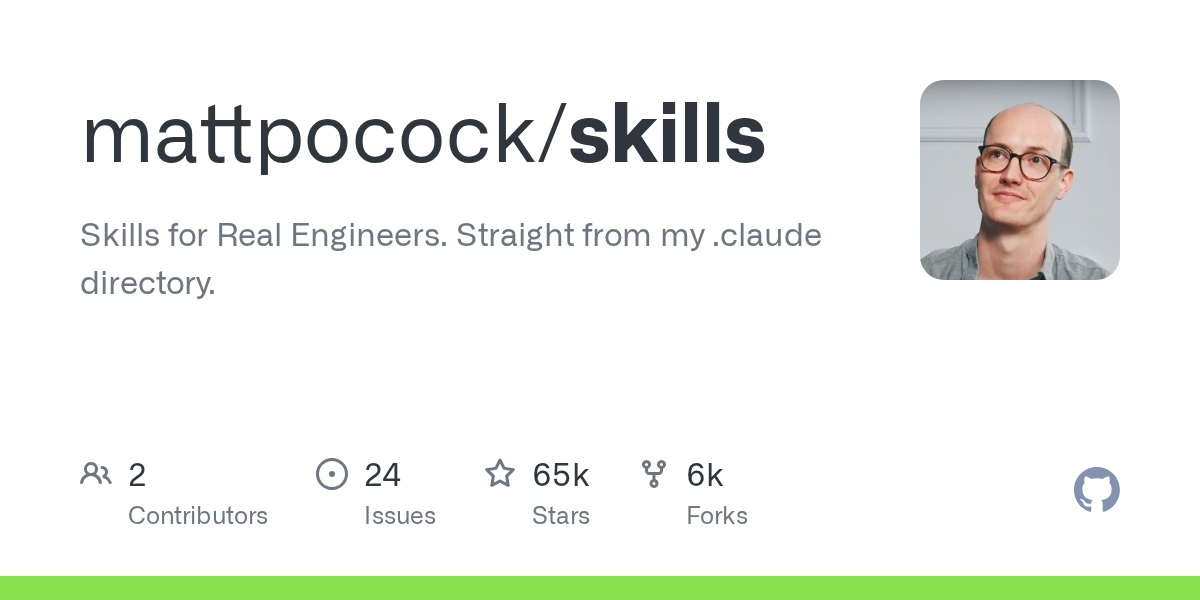

Grill-me is a Claude Code skill created by Matt Pocock. The entire thing is a few sentences long. No framework. No config files. No dependencies. Just a prompt instruction that tells the agent to interview you about your requirements before touching any code.

The idea behind it is dead simple: before the agent writes anything, it interrogates you. Not with a form, not with a checklist — with a conversation. It walks through every design decision, one fork at a time, and doesn't stop until both of you are on the same page. Matt Pocock's original prompt tells the agent to suggest its own answer for each question, and to go dig through your codebase whenever it can find the answer there instead of bothering you.

That's the whole thing. A handful of sentences. And it's kind of brilliant.

Here's what it actually feels like in practice:

Micro-decisions, not macro-reviews. Instead of dumping a 30-paragraph plan in your lap, the agent surfaces one question at a time. "Should we use a singleton here or dependency injection?" You answer, it moves on. Your brain stays in quick-judgment mode, never switching to "read and evaluate a wall of text" mode.

The agent does the thinking, you do the steering. Every question comes with a recommendation. "I'd suggest using PostgreSQL for this — sound right?" You're not staring at a blank prompt trying to articulate your vision from scratch. You're reacting to a concrete proposal. That's a completely different kind of mental effort.

Codebase-aware, not codebase-ignorant. If the answer already exists somewhere in your repo — a naming convention, a config pattern, an existing abstraction — the agent finds it and moves on. I've seen it resolve three or four questions in a row without asking me anything, just by reading my code. That's time I didn't have to spend.

Drop-in, not lock-in. It's a plain skill file. No framework dependency. No required project structure. You invoke it when you want it, ignore it when you don't. I've used it inside Claude Code, Codex, and Cursor without changing anything else about my setup.
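To make the interview pattern concrete, here is a conceptual model of the loop in TypeScript. It is not the skill's code: grill-me is only a prompt, and every name below (`grill`, `Decision`, the lookup and ask callbacks) is hypothetical. It models three behaviors:

- The agent settles one fork at a time.
- Every question carries a recommendation.
- The repo gets checked before the human does.

```typescript
// Conceptual model of the grill-me interview loop. Hypothetical names
// throughout; the real skill is a prompt, not code.

interface Decision {
  question: string;       // one design fork
  recommendation: string; // the agent's suggested answer
}

interface Resolution {
  question: string;
  answer: string;
  source: "codebase" | "user";
}

// Stand-in for "go read the repo": returns an answer if an existing
// convention settles the question, otherwise undefined.
type CodebaseLookup = (question: string) => string | undefined;

// Stand-in for asking the human, who sees the recommendation and either
// confirms it or overrides it.
type AskUser = (question: string, recommendation: string) => string;

function grill(decisions: Decision[], lookup: CodebaseLookup, ask: AskUser): Resolution[] {
  const resolved: Resolution[] = [];
  // One fork at a time, in order, until every decision is settled.
  for (const d of decisions) {
    const fromRepo = lookup(d.question);
    if (fromRepo !== undefined) {
      // The repo already answers it, so the user is never asked.
      resolved.push({ question: d.question, answer: fromRepo, source: "codebase" });
      continue;
    }
    resolved.push({ question: d.question, answer: ask(d.question, d.recommendation), source: "user" });
  }
  return resolved;
}

// Usage: the repo settles the naming question; the user confirms one
// recommendation and overrides another.
const conventions: Record<string, string> = {
  "Service file naming?": "follow the pattern in src/services/billing.ts",
};
const result = grill(
  [
    { question: "Service file naming?", recommendation: "camelCase" },
    { question: "Database?", recommendation: "PostgreSQL" },
    { question: "Singleton or dependency injection?", recommendation: "singleton" },
  ],
  (q) => conventions[q],
  (q, rec) => (q.startsWith("Singleton") ? "dependency injection" : rec),
);
console.log(result.map((r) => `${r.question} -> ${r.answer} (${r.source})`).join("\n"));
```

The user's effort lives entirely in the `ask` callback, and it only ever receives a single question paired with a suggested answer. That is the "react, don't author" shape described above.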

Discussion of it on Reddit's r/vibecoding has pulled in more than 13k upvotes. The takeaway that keeps coming up: traditional brainstorming puts the agent in the driver's seat and you in the reviewer's seat, which is mentally exhausting. Grill-me reverses the roles: you're the decision-maker, the agent is the interviewer. Much lighter on the brain.

Trellis: Keeping the Agent on the Rails

Asking the right questions is half the battle. The other half is not losing the plot during execution.

If you've ever had a coding agent start strong and then drift sideways over a long session — forgetting constraints, contradicting earlier decisions, slowly corrupting its own context — you know the problem. Long-running agent tasks need guardrails, or the quality degrades with every passing minute.

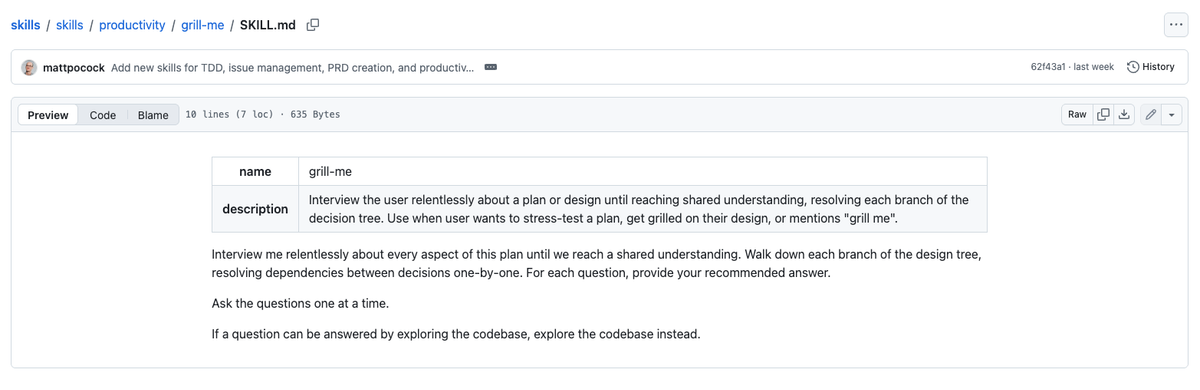

Trellis is a CLI framework by Mindfold that adds structured planning on top of whatever coding agent you're already using. Think of it as the project manager sitting between your requirements and your agent's execution.

The part I care about most is context governance. In a long agent session, the model's understanding of what it's supposed to be doing gradually rots. Early constraints get forgotten. Decisions get contradicted. Trellis solves this by maintaining a structured task tree that the agent references throughout execution — a persistent source of truth that doesn't degrade with conversation length.
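To see why a task tree resists context rot, here is a minimal TypeScript sketch of one. It assumes a simple tree of tasks, each with a status. The shape (`TaskNode`, `nextTask`, `markDone`) is hypothetical and is not Trellis's actual file format or API. The point is that "what do I do next?" is answered by reading the tree, not by recalling the conversation.

```typescript
// Hypothetical task-tree shape for illustration; not Trellis's format or API.
type Status = "pending" | "done";

interface TaskNode {
  id: string;
  objective: string;
  acceptanceCriteria: string[];
  status: Status;
  children: TaskNode[];
}

// Depth-first search for the first pending leaf. The tree lives outside the
// chat transcript, so re-reading it gives the same answer at minute 1 or 40.
function nextTask(node: TaskNode): TaskNode | undefined {
  if (node.status === "done") return undefined;
  if (node.children.length === 0) return node;
  for (const child of node.children) {
    const found = nextTask(child);
    if (found) return found;
  }
  return undefined;
}

// Mark a task done; a parent becomes done once all of its children are.
function markDone(node: TaskNode, id: string): boolean {
  if (node.id === id) {
    node.status = "done";
    return true;
  }
  const hit = node.children.some((c) => markDone(c, id));
  if (hit && node.children.every((c) => c.status === "done")) node.status = "done";
  return hit;
}

const plan: TaskNode = {
  id: "subscriptions",
  objective: "Add subscription billing",
  acceptanceCriteria: ["All child tasks done"],
  status: "pending",
  children: [
    { id: "migration", objective: "Create subscriptions table migration", acceptanceCriteria: ["Index on user_id"], status: "pending", children: [] },
    { id: "api", objective: "Expose subscription endpoints", acceptanceCriteria: ["401 on expired token"], status: "pending", children: [] },
  ],
};

console.log(nextTask(plan)?.id); // → migration
markDone(plan, "migration");
console.log(nextTask(plan)?.id); // → api
markDone(plan, "api");
console.log(plan.status); // → done
```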

Each task in the tree comes with an explicit objective and acceptance criteria. Not "build the auth system" but "implement JWT refresh token rotation with 7-day expiry, returning 401 on expired tokens and 403 on invalid tokens." The agent knows what "done" looks like before it writes the first line.
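Criteria this specific translate almost line for line into checks, which is what lets the agent know when it's done. Below is a minimal TypeScript sketch of the JWT example under loud assumptions:

- Real signing and verification are stubbed out as a `signatureValid` flag.
- `RefreshService` is a hypothetical in-memory class, not any library's API.
- Rotation is modeled as "a refresh token can be used once; reuse is treated as invalid."

```typescript
// Hypothetical sketch: the example task's acceptance criteria as checks.
// No real JWT library; signature verification is stubbed as a boolean.
const SEVEN_DAYS_MS = 7 * 24 * 60 * 60 * 1000;

interface RefreshToken {
  id: string;
  issuedAtMs: number;
  signatureValid: boolean; // stand-in for a real signature check
}

type RefreshResult =
  | { status: 200; newToken: RefreshToken }
  | { status: 401 | 403 };

class RefreshService {
  private used = new Set<string>(); // ids of tokens already rotated out
  private counter = 0;

  issue(nowMs: number): RefreshToken {
    return { id: `rt-${++this.counter}`, issuedAtMs: nowMs, signatureValid: true };
  }

  refresh(token: RefreshToken, nowMs: number): RefreshResult {
    // Invalid: bad signature, or a token that was already rotated out.
    if (!token.signatureValid || this.used.has(token.id)) return { status: 403 };
    // Expired: older than the 7-day lifetime.
    if (nowMs - token.issuedAtMs > SEVEN_DAYS_MS) return { status: 401 };
    // Rotation: retire the presented token and hand back a fresh one.
    this.used.add(token.id);
    return { status: 200, newToken: this.issue(nowMs) };
  }
}

const svc = new RefreshService();
const t0 = 0;
const first = svc.issue(t0);

const ok = svc.refresh(first, t0 + 1000);
console.log(ok.status); // → 200 (valid token rotates)
console.log(svc.refresh(first, t0 + 2000).status); // → 403 (reused token is invalid)

const stale = svc.issue(t0);
console.log(svc.refresh(stale, t0 + SEVEN_DAYS_MS + 1).status); // → 401 (expired)

const forged: RefreshToken = { id: "forged", issuedAtMs: t0, signatureValid: false };
console.log(svc.refresh(forged, t0).status); // → 403 (bad signature)
```

Checking invalidity before expiry is a design choice in this sketch. A forged or reused token gets 403 even if it is also old.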

And it plays well with others. One trellis init generates plans for Claude Code, Codex, and Cursor simultaneously. You're not choosing sides — you're adding structure to whatever tool you already use.

The Full Workflow: How I Actually Use This

The theory sounds nice. Here's what it looks like on a Tuesday afternoon when I have a feature to ship.

I start by telling the agent to grill me. I describe the feature in two or three sentences — deliberately vague, because that's the point. The agent immediately starts probing. "Are we building this as a standalone service or a module inside the existing app?" "What's the auth model — do we inherit from the parent or does this need its own?" "I found a similar pattern in /src/services/billing.ts — should I follow that convention?" Each question takes me 5-10 seconds to answer. The whole interrogation runs about 10 minutes. By the end, the agent has a clearer picture of what I want than any spec doc I've ever written.

Then I switch to Trellis. I run trellis init in the project root and feed it the consensus from the grill-me session. Trellis turns that into a task tree — not a vague "phase 1, phase 2" outline, but concrete tasks with objectives and acceptance criteria. "Create the database migration for the new subscriptions table. Columns: id, user_id, plan_type, started_at, expires_at. Add index on user_id." That level of specificity.
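For a sense of how directly a task at that level of specificity maps onto code, here is a TypeScript sketch that renders the subscriptions migration as PostgreSQL DDL. It also checks the result against the task's stated criteria. Assumptions to flag:

- The column types are invented; the task only names the columns and the index.
- The index name and the helper functions are hypothetical.
- This is not output from Trellis.

```typescript
// Hypothetical: the example migration task rendered as PostgreSQL DDL.
// Column types and the index name are assumptions, not part of the task.
interface Column { name: string; type: string }

const subscriptionsColumns: Column[] = [
  { name: "id", type: "BIGSERIAL PRIMARY KEY" },
  { name: "user_id", type: "BIGINT NOT NULL" },
  { name: "plan_type", type: "TEXT NOT NULL" },
  { name: "started_at", type: "TIMESTAMPTZ NOT NULL" },
  { name: "expires_at", type: "TIMESTAMPTZ" },
];

function createTableSql(table: string, columns: Column[]): string {
  const body = columns.map((c) => `  ${c.name} ${c.type}`).join(",\n");
  return `CREATE TABLE ${table} (\n${body}\n);`;
}

function createIndexSql(table: string, column: string): string {
  return `CREATE INDEX idx_${table}_${column} ON ${table} (${column});`;
}

// The task's acceptance criteria: every named column exists, and user_id is indexed.
function meetsCriteria(sql: string): boolean {
  const required = ["id", "user_id", "plan_type", "started_at", "expires_at"];
  const hasColumns = required.every((col) => new RegExp(`^\\s+${col}\\s`, "m").test(sql));
  return hasColumns && sql.includes("ON subscriptions (user_id)");
}

const migration = [
  createTableSql("subscriptions", subscriptionsColumns),
  createIndexSql("subscriptions", "user_id"),
].join("\n\n");

console.log(migration);
console.log(meetsCriteria(migration)); // → true
```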

With the plan locked, I let the agent execute. If I'm feeling confident about the requirements (which I usually am after a good grill-me session), I'll launch Claude Code with --dangerously-skip-permissions and let it run unsupervised. I've had sessions go 40 minutes straight, with the agent working through the task tree, writing code, running tests, fixing failures, and moving on to the next task. I go make coffee. Maybe two coffees.
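The unattended loop described above can be sketched roughly as follows. It is a conceptual TypeScript model, not the internals of Claude Code or Trellis. `attempt` and `runTests` are hypothetical callbacks standing in for "the agent edits code" and "the agent runs the test suite." The retry limit of 3 is an arbitrary assumption.

```typescript
// Conceptual sketch of the unattended execute loop. Not Trellis or Claude Code
// internals; `attempt` and `runTests` are hypothetical stand-ins.
interface Task { id: string; objective: string }

type Attempt = (task: Task, previousFailure?: string) => void;
type RunTests = (task: Task) => { passed: boolean; output: string };

interface Outcome { id: string; attempts: number; passed: boolean }

function execute(tasks: Task[], attempt: Attempt, runTests: RunTests, maxAttempts = 3): Outcome[] {
  const outcomes: Outcome[] = [];
  for (const task of tasks) {
    let failure: string | undefined;
    let passed = false;
    let tries = 0;
    // Write code, run tests, feed the failure output back in, up to maxAttempts.
    while (!passed && tries < maxAttempts) {
      tries++;
      attempt(task, failure);
      const result = runTests(task);
      passed = result.passed;
      failure = passed ? undefined : result.output;
    }
    outcomes.push({ id: task.id, attempts: tries, passed });
    // Stop rather than build later tasks on top of a failing one.
    if (!passed) break;
  }
  return outcomes;
}

// Usage: the "api" task fails once, then passes after its failure is fed back.
const fixed = new Set<string>();
const outcomes = execute(
  [
    { id: "migration", objective: "Create subscriptions migration" },
    { id: "api", objective: "Add subscription endpoints" },
  ],
  (task, prev) => {
    if (task.id !== "api" || prev !== undefined) fixed.add(task.id);
  },
  (task) => ({ passed: fixed.has(task.id), output: `${task.id}: 1 failing test` }),
);
console.log(outcomes);
```

Breaking out on a task that never passes is the sketch's stand-in for "come back and find it stuck." Without that stop, later tasks would pile onto a broken foundation.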

The deliverable at the end is usually 85-90% of what I need. A few tweaks, not a tear-down. Compare that to the old flow where the first agent output was maybe 50% right and I'd spend another hour in revision ping-pong.

Why This Feels So Different From Agent Planning

I want to get specific about the mechanics here, because the difference isn't just "it's faster." It's a different kind of mental activity entirely.

When I used brainstorming-style planning, the loop went like this: the agent produces a long document → I read it carefully → I figure out which parts are wrong → I explain the corrections → the agent revises → I re-read the revision. At every step, I'm doing evaluation work. "Is this architecture right? Did it understand the constraint I mentioned? Is this edge case covered?" That's hard thinking. It's tiring. And it takes 30-40 minutes for a complex feature.

The grill-me loop is: the agent asks "should we do X or Y?" → I say "X" → next question. Sometimes I say "actually, neither — here's what I need" and give a one-sentence redirect. But most of the time, the agent's suggestion is close enough that I just confirm.

About a week in, it hit me why this feels so much lighter. It's the difference between writing an essay and taking a multiple-choice test. Both cover the same material. One is creative generation from scratch. The other is recognition and selection. My brain can do the second one for an hour without getting tired.

Installation

Both tools take under two minutes to set up.

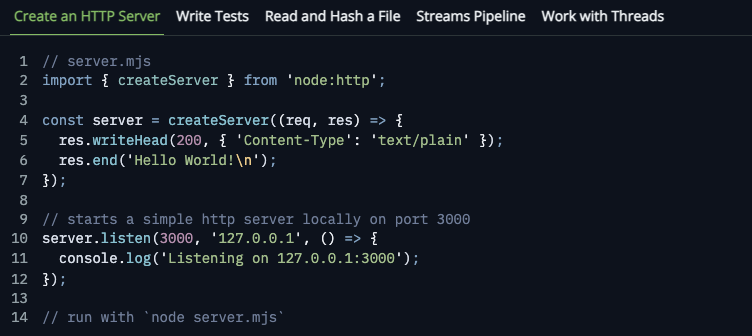

Grill-me — if you're on Claude Code with a skill manager:

npx skills@latest add mattpocock/skills --yes

Quick heads up: the sub-path install (npx skills@latest add mattpocock/skills/grill-me) doesn't work. You need to install the full repo. The installer auto-configures for Claude Code, Codex, Cursor, and Gemini CLI.

Trellis — requires Node.js. Global install, then init per project:

npm install -g @mindfoldhq/trellis@beta

cd your-project

trellis init --claude --codex

A Note on dangerously-skip-permissions

This Claude Code flag, --dangerously-skip-permissions, does exactly what it sounds like: it lets the agent skip all permission prompts and execute freely. File reads, writes, shell commands, everything. No human confirmation in the loop.

The upside is obvious: uninterrupted flow. The agent can chew through a complex task for 30 minutes straight without waiting for you to click "allow."

The risk is also obvious: the agent might do something you didn't expect.

My rule of thumb: the clearer your requirements, the safer it is to skip permissions. If you've done a thorough grill-me session and your Trellis plan has tight acceptance criteria, the agent has very little room to go rogue. On a brand new project you don't understand well? Don't use this mode. Start supervised.

Who Should Bother With This

Be honest with yourself about whether you need this level of structure.

If your coding agent tasks are short and self-contained — "write me a sort function," "add a loading spinner" — you don't need a requirements interrogation phase. Just prompt and go. Grill-me adds value when the task is complex enough that a vague prompt leads to a wrong output. Multi-file features, architectural decisions, anything where "build me X" has more than one reasonable interpretation.

If you're already running long agent sessions and noticing quality degradation as the conversation grows — that's Trellis's sweet spot. The context management alone is worth the install.

And if you're the kind of developer who bounces between Claude Code, Codex, and Cursor depending on the task — both tools are platform-agnostic. Set them up once, use them everywhere.

One real limitation: both tools are terminal-first and built for developers. If you need visual project management or you're not comfortable in a terminal, this isn't your workflow.

Project Status

Grill-me is mature. Matt Pocock has published video walkthroughs, the community has battle-tested it across thousands of sessions, and it works reliably across multiple agent platforms.

Trellis is still in beta. The core concept — task decomposition plus context governance plus multi-platform output — is the strongest I've seen in this category. But expect some rough edges. If you're not ready for beta software, you can absolutely use grill-me on its own for the requirements phase and handle execution with whatever tool you're already comfortable with.

Either way, the five lines that make up grill-me have saved me more time than tools with ten thousand lines of code. Sometimes the smallest intervention at the right moment is all you need.

Try grill-me on your next project. Spend 10 minutes answering questions instead of 40 minutes reviewing proposals. You'll wonder why you ever did it the other way.