Verdent Build Sprint 2: earn up to 700 credits just by prompting and sharing. Join now!

Bring back the joy of programming. Focus on creating.

Your AI-native partner for the new way of developing software.

Limited-time FREE TRIAL

Download for Mac (Apple Silicon)

Clean · Fast · Good

Describe what you need. Verdent does the work, keeps you up to date, and delivers results you can trust.

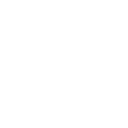

Fast, focused collaboration with AI. No clutter. No distractions. Designed around chat.

Advanced agent

Strong, proven performance on complex programming tasks.

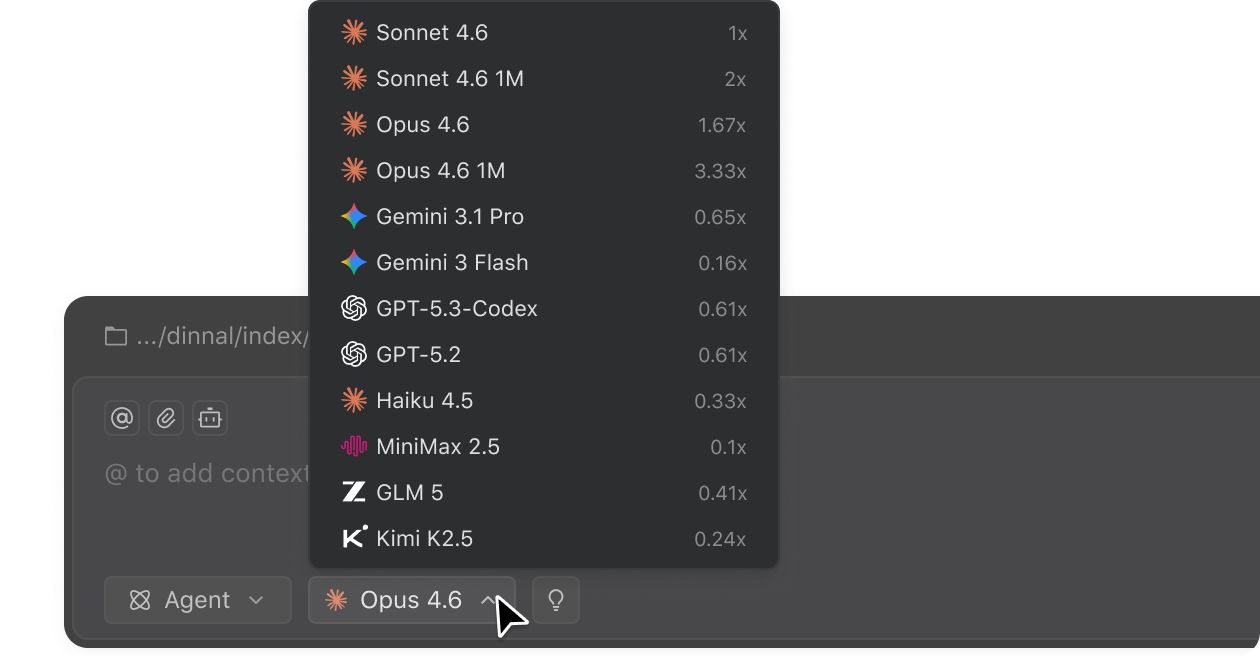

Access to leading models

Choose from today's best AI models, directly in Verdent.

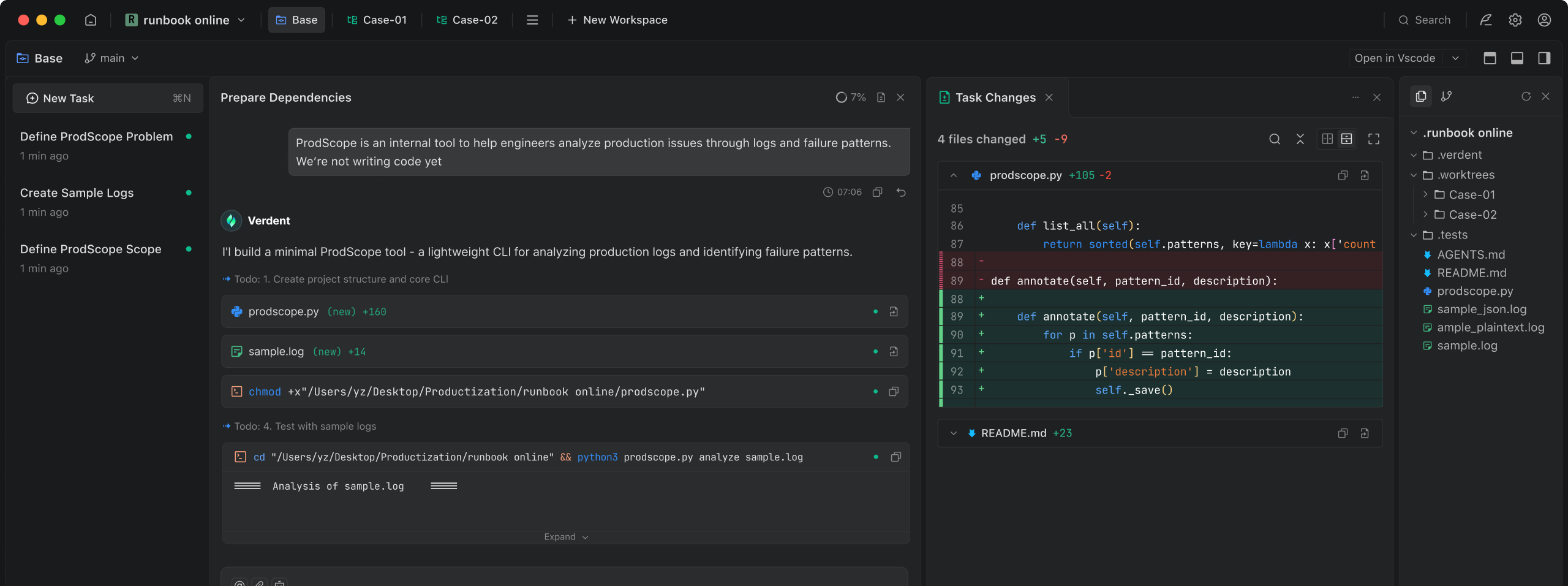

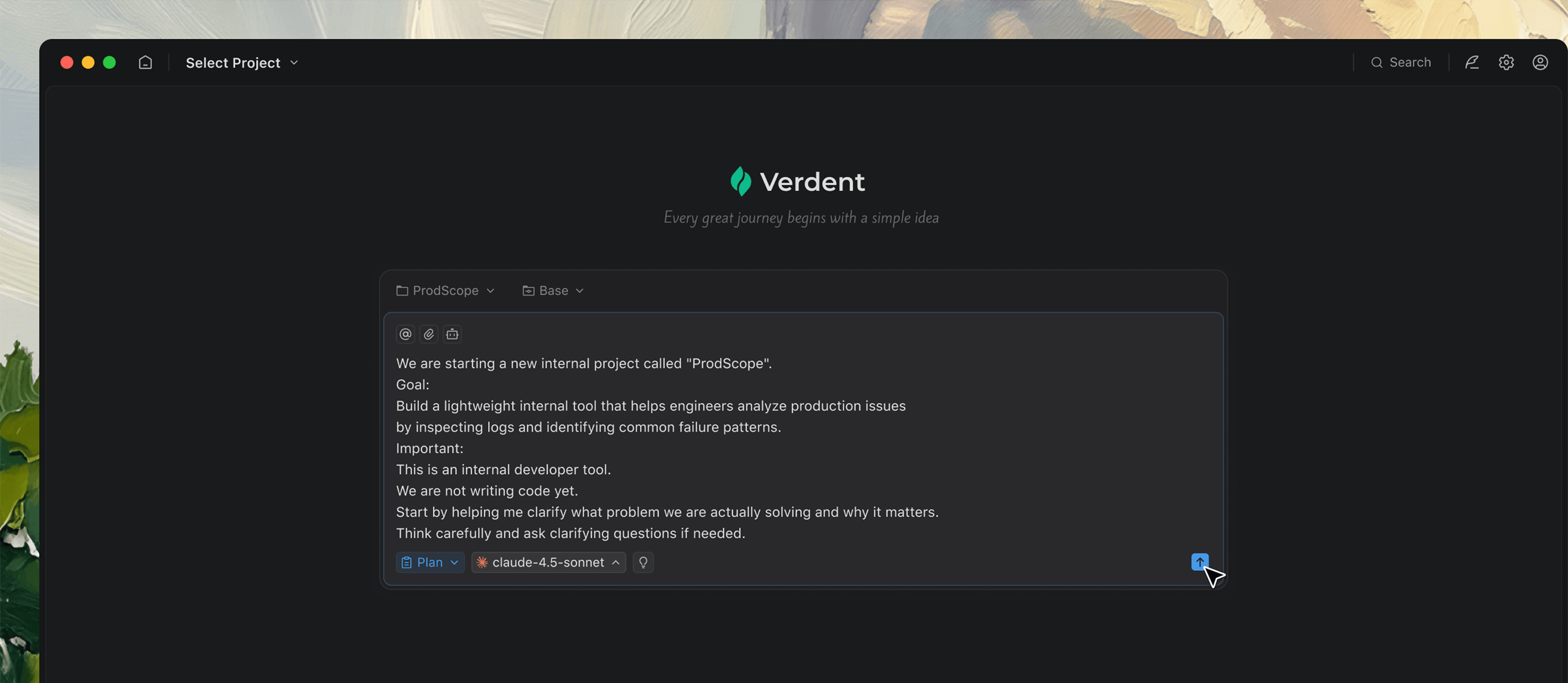

Think together

Not every task starts with a clear idea. Verdent helps you shape one you can actually use.

When your idea is still vague, Verdent proactively asks questions to help you turn it into a clear task.

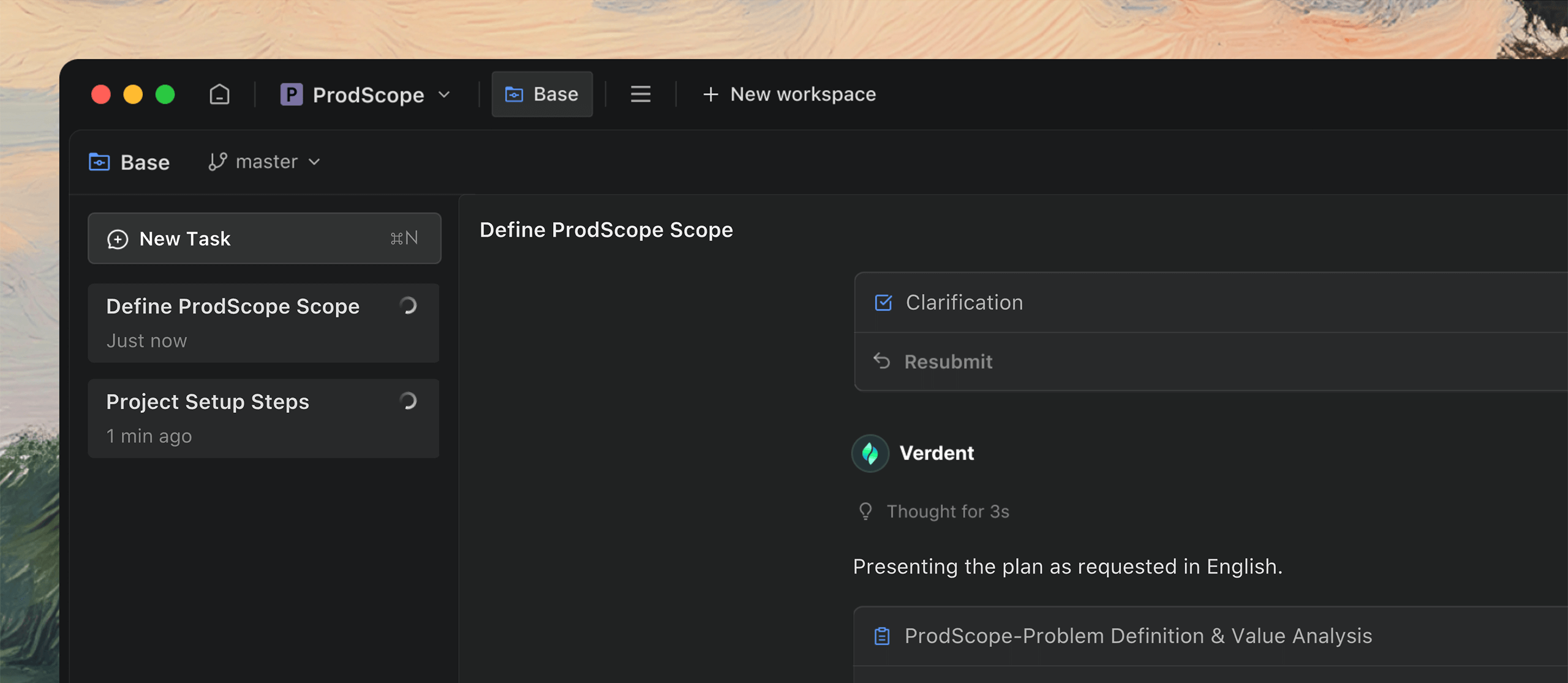

Built for parallel work

Work rarely arrives one task at a time. Verdent keeps everything moving in parallel so you don't fall behind.

Just create multiple tasks as ideas come up, so you can keep thinking while the code runs.

More than just programming

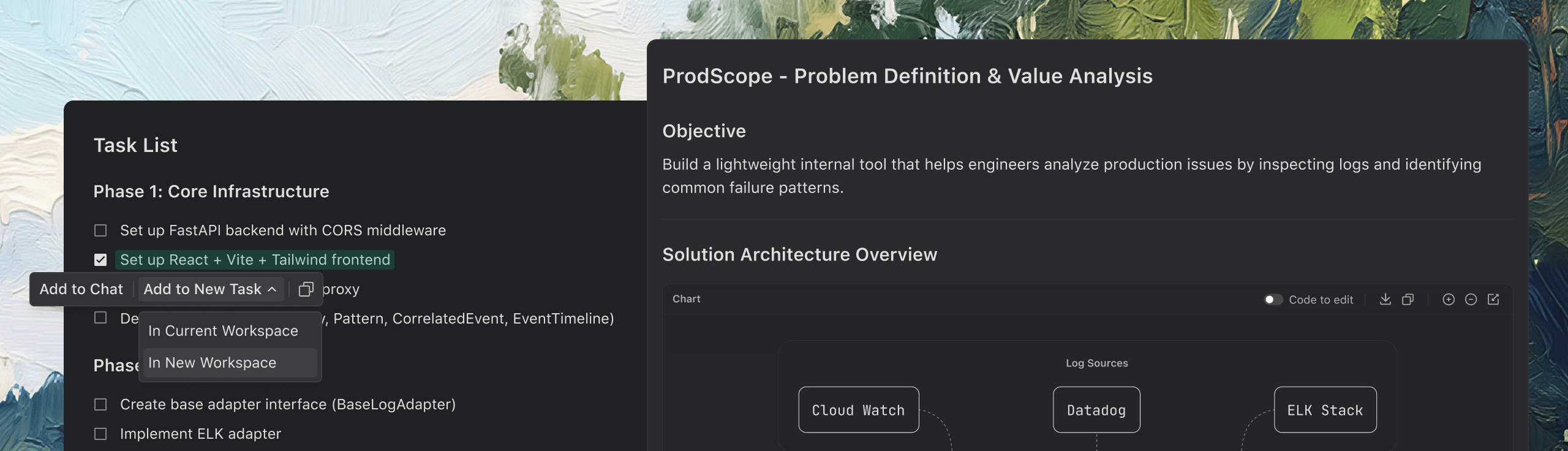

Also supports documentation, data analysis, prototypes, and more, even outside your area of expertise.

Gathers the information needed and turns scattered ideas into detailed documentation.

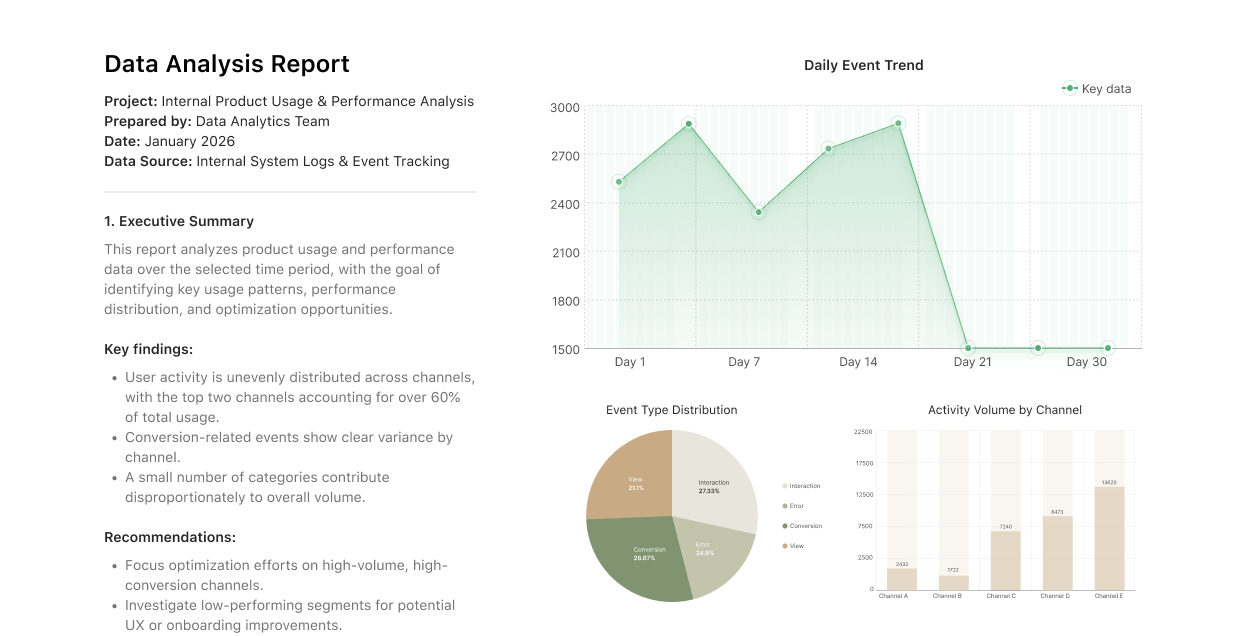

Data analysis

Easily turn large datasets into practical insights.

Prototypes

Quickly turn your ideas into interactive demos you can present.

Trusted by

Insights and updates

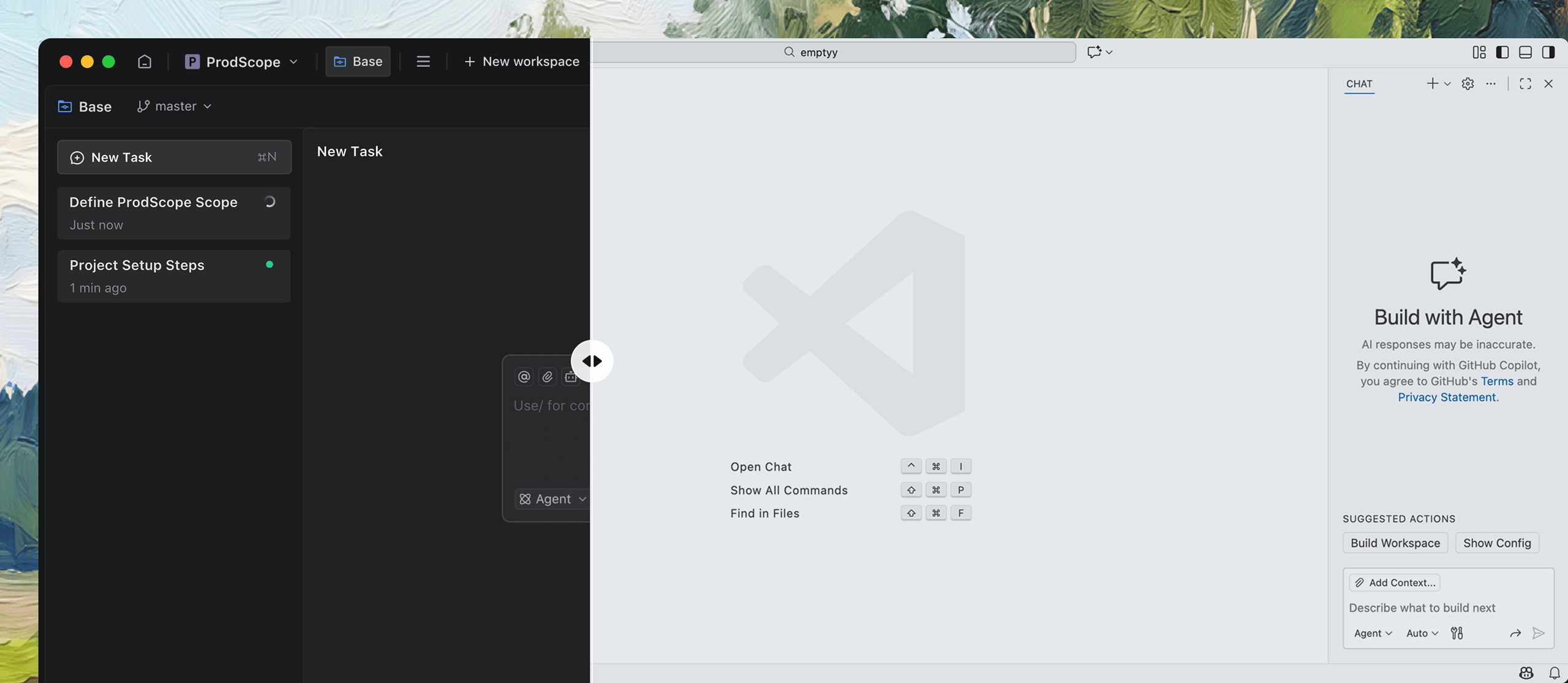

SWE-bench Verified Report

Leading the field with 76.1% single-attempt resolved rate on SWE-bench Verified.

November 1, 2025

What impact will AI have on the software industry?

Transforming software engineering: from code assistance to AI-native infrastructure.

October 31, 2025

How do we see the four levels of AI SWE?

Turning AI coding from helper to powerhouse across the entire software lifecycle.

October 30, 2025

Focus on creating

Verdent takes care of the rest

Contact: hi@verdent.ai

© 2026 Verdent AI, Inc. All rights reserved.

Valued by professional developers